Recursive Multi-Agent Systems

Abstract

Recursive or looped language models have recently emerged as a new scaling axis by iteratively refining the same model computation over latent states to deepen reasoning. We extend this scaling principle from a single model to multi-agent systems, and ask: Can agent collaboration itself be scaled through recursion? To this end, we introduce RecursiveMAS, a recursive multi-agent framework that casts the entire system as a unified latent-space recursive computation. RecursiveMAS connects heterogeneous agents as a collaboration loop through the lightweight RecursiveLink module, enabling in-distribution latent thought generation and cross-agent latent state transfer. To optimize our framework, we develop an inner-outer loop learning algorithm for iterative whole-system co-optimization through shared gradient-based credit assignment across recursion rounds. Theoretical analyses of runtime complexity and learning dynamics establish that RecursiveMAS is more efficient than standard text-based MAS and maintains stable gradients during recursive training. Empirically, we instantiate RecursiveMAS under 4 representative agent collaboration patterns and evaluate across 9 benchmarks spanning mathematics, science, medicine, search, and code generation. In comparison with advanced single/multi-agent and recursive computation baselines, RecursiveMAS consistently delivers an average accuracy improvement of 8.3%, together with a 1.2×–2.4× end-to-end inference speedup and a 34.6%–75.6% reduction in token usage. Code and data are available at https://recursivemas.github.io.

One-sentence Summary

RecursiveMAS extends recursive scaling to multi-agent systems by connecting heterogeneous agents through a lightweight RecursiveLink module for unified latent-space recursive computation, and it is optimized via an inner-outer loop learning algorithm that delivers an average 8.3% accuracy improvement, a 1.2×–2.4× end-to-end inference speedup, and 34.6%–75.6% token reduction across nine benchmarks spanning mathematics, science, medicine, search, and code generation.

Key Contributions

- RecursiveMAS extends recursive scaling to collaborative AI by treating multi-agent systems as a unified latent-space computation. The framework connects heterogeneous agents through a lightweight RecursiveLink module that enables in-distribution latent thought generation and direct cross-agent state transfer.

- An inner-outer loop learning algorithm optimizes the system through iterative whole-system co-optimization and shared gradient-based credit assignment across recursion rounds. Theoretical analysis demonstrates that this approach maintains stable gradients during recursive training and achieves lower runtime complexity than standard text-based multi-agent systems.

- Evaluations across nine benchmarks spanning mathematics, science, medicine, search, and code generation demonstrate consistent improvements over advanced single-agent, multi-agent, and recursive computation baselines. The framework delivers an average accuracy gain of 8.3%, a 1.2x to 2.4x end-to-end inference speedup, and a 34.6% to 75.6% reduction in token usage.

Introduction

Large language models frequently struggle with complex reasoning, driving the adoption of multi-agent systems that scale performance by distributing tasks across specialized models. Traditional text-based collaboration, however, introduces significant latency and prevents direct system-level optimization, as prior methods either rely on superficial prompt tuning or require prohibitively expensive isolated training. The authors leverage recursive computation to introduce RecursiveMAS, a framework that treats multi-agent interaction as a unified latent-space loop. By routing information through a lightweight RecursiveLink module and applying an inner-outer loop training paradigm, the system iteratively refines shared representations without updating full model parameters. This design maintains stable gradient propagation, cuts token usage and inference time, and delivers consistent accuracy gains across diverse reasoning benchmarks.

Dataset

-

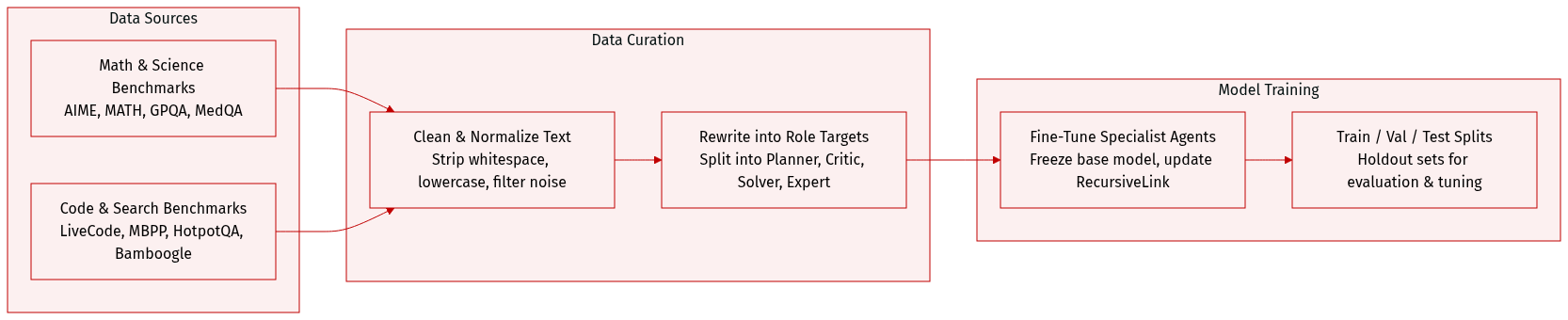

Dataset composition and sources: The authors evaluate across four primary domains: mathematical reasoning, scientific and medical tasks, code generation, and search-based question answering. Training supervision targets are constructed from four curated sources spanning these domains: s1K, m1K, OpenCodeReasoning, and ARPO-SFT.

- Key details for each subset: MATH500 provides a standard benchmark covering algebra, geometry, and probability. AIME2025 and AIME2026 each contribute 30 highly challenging competition problems requiring precise numerical derivations. GPQA-Diamond offers graduate-level multiple-choice questions in biology, physics, and chemistry. MedQA supplies medical licensing-style questions focused on clinical reasoning and diagnostic decision-making. LiveCodeBench-v6 features contamination-resistant programming problems with hidden test cases. MBPP Plus extends Python synthesis tasks with stricter execution-based evaluation criteria. HotpotQA tests multi-hop reasoning using Wikipedia evidence. Bamboogle provides a compact benchmark requiring intermediate retrieval and answer composition.

- How the paper uses the data: The authors transform raw question-answer pairs into role-specific supervision targets tailored to the four collaboration patterns, rather than applying fixed mixture ratios. For Sequential-Style training, a large reference model rewrites answers into an initial step-by-step plan for the Planner and a critic-guided plan for the Critic, while the Solver keeps the original answer as its target. Mixture-Style training supervises each specialist with its own domain-specific responses, while ground-truth answers train the Summarizer. Distillation-Style training uses guidance-style responses from an Expert model to train the Expert agent, while the Learner receives direct supervision from ground-truth answers. Deliberation-Style training uses ground-truth answers to supervise both the Reflector and Tool-Caller agents. Each agent is then assigned dedicated input-output pairs for independent fine-tuning, with base model parameters frozen and only the RecursiveLink components updated.

- Additional processing and evaluation details: The authors normalize all non-code outputs by stripping whitespace and punctuation and converting to lowercase before applying task-specific correctness checks. Numerical tasks verify mathematical equivalence, multiple-choice tasks require exact letter matches, and code tasks are validated through sandboxed execution with a 10-second timeout per test case. Search-based tasks use a reference large language model as a binary judge of answer correctness. Generation is capped at 2000 tokens for MATH500, 4000 tokens for most science and code benchmarks, and 16000 tokens for AIME competitions. Outputs that hit these limits trigger an early-stopping mechanism that appends a final-answer prompt to elicit a response.
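
The normalization step for non-code outputs can be sketched in Python as follows. This is a minimal illustration under stated assumptions, not the authors' evaluation code: the function names `normalize_answer` and `multiple_choice_correct` are hypothetical, and "stripping whitespace" is assumed here to mean trimming the ends and collapsing internal runs to a single space.

```python
import string

# Translation table that deletes every ASCII punctuation character.
_PUNCT_TABLE = str.maketrans("", "", string.punctuation)


def normalize_answer(text: str) -> str:
    """Lowercase, remove punctuation, and collapse whitespace (assumed behavior)."""
    lowered = text.lower()
    no_punct = lowered.translate(_PUNCT_TABLE)
    # split() with no arguments drops leading/trailing whitespace and
    # treats any run of whitespace as one separator.
    return " ".join(no_punct.split())


def multiple_choice_correct(prediction: str, gold_letter: str) -> bool:
    """Exact letter match after normalization, as for multiple-choice tasks."""
    return normalize_answer(prediction) == normalize_answer(gold_letter)
```

Under these assumptions, a prediction such as `" (B). "` and a gold label `"B"` normalize to the same string and count as a match. Numerical equivalence, sandboxed code execution, and the LLM judge are separate checks and are not covered by this sketch.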

Method

The RecursiveMAS framework introduces a recursive multi-agent system designed to enhance collaborative reasoning through latent-space interactions. The architecture is built around two primary components: an inner RecursiveLink for intra-agent latent thought generation and an outer RecursiveLink for inter-agent information transfer, enabling a seamless recursive loop across heterogeneous agents. The overall system operates as a closed-loop network where each agent contributes to a shared reasoning process, with information flowing both within and across agents through these links.

The framework begins with each individual agent, modeled as a Transformer-based language model, generating latent thoughts in a continuous embedding space. The agent feeds its last-layer hidden state back into its input layer, which creates an auto-regressive process in the latent space. This mechanism, referred to as latent thought generation, allows the agent to iteratively refine its internal state without explicit token decoding. The key innovation is the RecursiveLink, a lightweight module that handles the transition between these latent states. The inner RecursiveLink transforms the output embeddings of an agent into input embeddings for its next forward pass. It employs a residual connection, in which the original latent embedding is added to a projected version of the transformed embedding, preserving the original semantics while allowing for distributional alignment. This design supports stable and efficient training, because the network only has to learn the necessary adjustments rather than reconstruct the full representation from scratch.
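
The residual structure of the inner RecursiveLink can be illustrated with a small pure-Python sketch. The summary above does not give the exact layer definition, so the two-matrix form `h + W2 · tanh(W1 · h)`, the names `W1` and `W2`, the choice of `tanh`, and the `agent_forward` stand-in for a Transformer forward pass are all assumptions made for illustration.

```python
import math


def matvec(W, x):
    """Multiply matrix W (a list of rows) by vector x."""
    return [sum(w * xi for w, xi in zip(row, x)) for row in W]


def inner_link(h, W1, W2):
    """Residual adapter (assumed form): h + W2 @ tanh(W1 @ h)."""
    hidden = [math.tanh(v) for v in matvec(W1, h)]
    delta = matvec(W2, hidden)
    # The original embedding h is kept and only a correction is added.
    return [hi + di for hi, di in zip(h, delta)]


def latent_rollout(agent_forward, x0, steps, W1, W2):
    """Generate `steps` latent thoughts without decoding any tokens.

    agent_forward is a hypothetical stand-in for one forward pass that
    maps an input embedding to a last-layer hidden state.
    """
    thoughts = []
    x = x0
    for _ in range(steps):
        h = agent_forward(x)        # last-layer hidden state
        x = inner_link(h, W1, W2)   # becomes the next input embedding
        thoughts.append(x)
    return thoughts
```

One consequence of the residual form is visible directly: if `W2` is all zeros, `inner_link` returns `h` unchanged, so the module starts from the identity mapping and only has to learn a correction.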

The outer RecursiveLink extends this mechanism across different agents, allowing heterogeneous models with potentially different hidden dimensions to communicate. It achieves this by mapping the output embedding of a source agent Ai into the input embedding space of a target agent Aj using an additional linear transformation layer W3. This enables the system to leverage the complementary strengths of diverse models, such as a specialized math agent or a code generator, by passing their refined latent thoughts directly to the next agent in the sequence. As illustrated in the framework diagram, the agents are connected in a loop: the output of the last agent is fed back to the first agent, creating a recursive structure. This loop allows for progressive refinement, where each agent can iteratively reflect on and build upon the collective latent state of the system across multiple rounds of interaction.
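
A cross-agent transfer between models with different hidden sizes might look like the sketch below. Only the existence of an additional linear layer `W3` comes from the description above. The overall form `W3 · h + W2 · tanh(W1 · h)`, the matrix shapes, and the function name `outer_link` are assumptions for illustration.

```python
import math


def matvec(W, x):
    """Multiply matrix W (a list of rows) by vector x."""
    return [sum(w * xi for w, xi in zip(row, x)) for row in W]


def outer_link(h_src, W1, W2, W3):
    """Map a source-agent embedding into the target agent's input space.

    Assumed shapes: W3 is d_tgt x d_src (linear skip path),
    W1 is d_mid x d_src and W2 is d_tgt x d_mid (learned correction).
    """
    skip = matvec(W3, h_src)                              # d_src -> d_tgt
    hidden = [math.tanh(v) for v in matvec(W1, h_src)]    # d_src -> d_mid
    delta = matvec(W2, hidden)                            # d_mid -> d_tgt
    return [s + d for s, d in zip(skip, delta)]
```

In this sketch the skip path plays the role that the identity plays in a same-dimension residual link: because the source and target dimensions differ, the embedding cannot simply be added to the correction, so `W3` projects it first.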

The training process for RecursiveMAS is structured in two distinct stages to optimize the system effectively. The first stage, preliminary inner-loop training, focuses on equipping each agent with the capability to generate high-quality latent thoughts. This is done by training the inner RecursiveLink of each agent independently. Given a training example, the agent generates a sequence of latent thoughts, and the training objective is to align the final latent thought distribution with the input embedding of the ground-truth answer using a cosine similarity loss. This step ensures that each agent can generate semantically meaningful latent representations that capture the essence of the solution.
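
As a rough illustration of the inner-loop objective, the sketch below computes a cosine-based loss between a final latent thought and a target embedding. The exact loss form is not given in this summary; `1 - cosine similarity` is a common choice and is an assumption here, as are the function name `cosine_loss` and the `eps` guard.

```python
import math


def cosine_loss(pred, target, eps=1e-8):
    """Return 1 - cos(pred, target); 0 when the vectors point the same way."""
    dot = sum(p * t for p, t in zip(pred, target))
    norm_pred = math.sqrt(sum(p * p for p in pred))
    norm_target = math.sqrt(sum(t * t for t in target))
    # eps guards against division by zero for all-zero vectors.
    denom = max(norm_pred * norm_target, eps)
    return 1.0 - dot / denom
```

Because cosine similarity ignores vector length, a loss of this kind only asks the latent thought to point in the same direction as the target embedding, not to match its magnitude.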

The second stage, recursive outer-loop training, co-optimizes the entire system as a unified entity. During this phase, the system is unrolled across multiple recursion rounds, with the outer RecursiveLinks connecting the agents in the loop. The training process involves forward passes where information flows through the agents and back to the first agent, and backward propagation where gradients are computed based on the final text prediction. The cross-entropy loss is applied to the output of the last agent after the final recursion round, and gradients are propagated back through the entire recursive path. This allows the outer links to learn how to best transfer information across agents, ensuring that the system's collective output improves over time. The architecture's efficiency and stability are further supported by theoretical analyses, which demonstrate that the use of latent-space interactions leads to more stable gradient propagation compared to text-based communication, which suffers from gradient vanishing. The full training pipeline, as depicted in the figure, integrates these stages, first warm-starting the agents and then jointly optimizing the entire system through a recursive loop.
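
The forward pass of the outer loop can be pictured with the toy sketch below, which unrolls a ring of agents for several rounds and then scores the final output with cross-entropy. It is a schematic under stated assumptions: `agents` and `links` are hypothetical callables standing in for frozen models and outer RecursiveLinks, the final output is treated as a single logit vector, and gradient computation is omitted.

```python
import math


def unroll(agents, links, state, rounds):
    """Pass a latent state around the agent ring for `rounds` rounds.

    links[i] carries the output of agents[i] to the next agent; the last
    link feeds back to the first agent. After the last agent in the final
    round, no link is applied because that output is decoded instead.
    """
    n = len(agents)
    for r in range(rounds):
        for i in range(n):
            state = agents[i](state)
            is_final_step = (r == rounds - 1) and (i == n - 1)
            if not is_final_step:
                state = links[i](state)
    return state


def cross_entropy(logits, target_index):
    """Negative log-probability of the target under softmax(logits)."""
    m = max(logits)  # subtract the max for numerical stability
    log_z = m + math.log(sum(math.exp(v - m) for v in logits))
    return log_z - logits[target_index]
```

In a real training setup the loss would be backpropagated through every link application in the unrolled path, so each link receives credit from all recursion rounds. An automatic differentiation framework would handle that step.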

Experiment

Comprehensive evaluations across mathematical, scientific, code generation, and search benchmarks validate RecursiveMAS against single-agent fine-tuned models, alternative multi-agent frameworks, and text-based recursive baselines. Experiments across varying recursion depths and collaboration patterns demonstrate that the system consistently enhances both reasoning accuracy and computational efficiency as recursion deepens. Architectural analyses further confirm that latent-space interactions effectively align semantic representations, minimize training overhead, and generalize seamlessly across diverse multi-agent structures. Ultimately, the study establishes RecursiveMAS as a scalable framework that leverages iterative latent refinement to outperform existing approaches while maintaining superior efficiency.

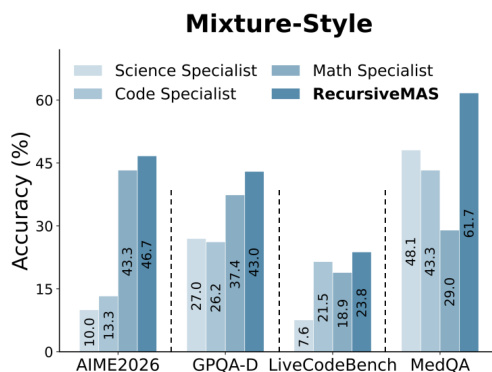

The authors evaluate RecursiveMAS under the Mixture-Style collaboration pattern, comparing it against the individual specialist agents across multiple tasks. RecursiveMAS outperforms the best single specialist in every evaluated domain, indicating that it integrates diverse expertise effectively. The advantage is most evident on tasks requiring cross-domain reasoning, where the collaborative system achieves higher accuracy than any single agent.

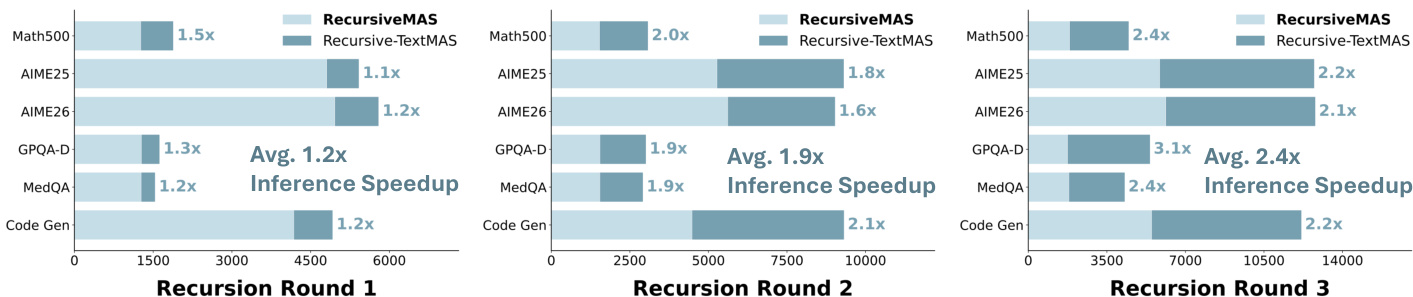

The authors evaluate RecursiveMAS across different recursion depths and compare it against text-based recursive baselines. RecursiveMAS shows consistent gains in inference speed and token efficiency at every recursion round, and both the speedup and the token reduction grow as recursion deepens. These results indicate that latent-space collaboration scales more efficiently than text-based approaches.

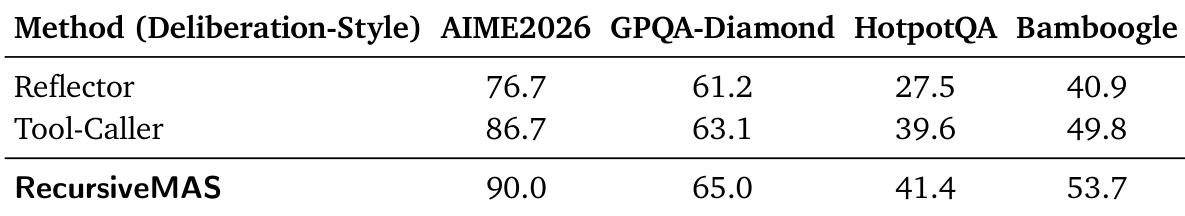

The authors evaluate RecursiveMAS in a Deliberation-Style multi-agent setting, comparing it against the individual Reflector and Tool-Caller agents across multiple tasks. RecursiveMAS achieves higher accuracy than both baselines on all evaluated benchmarks, indicating that recursive interaction improves system-level coordination and collaborative reasoning.

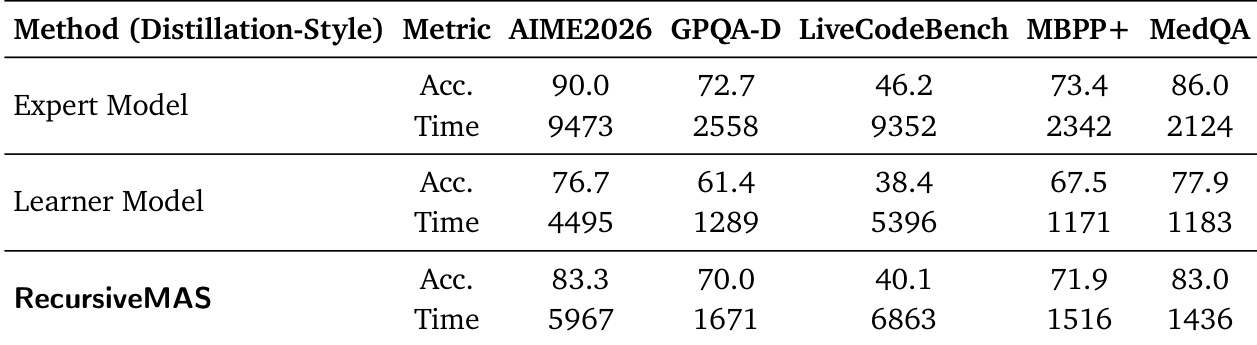

The authors compare RecursiveMAS against the Expert and Learner models in a Distillation-Style multi-agent system across multiple benchmarks. RecursiveMAS achieves higher accuracy than the Learner on all evaluated tasks. Against the Expert, it is more accurate on some tasks and less accurate on others. In all domains it requires significantly less inference time than both the Expert and the Learner.

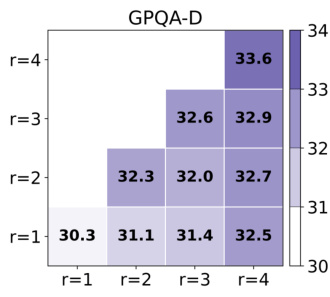

The authors analyze RecursiveMAS across different recursion depths on the GPQA-Diamond benchmark. Accuracy rises consistently as recursion depth grows, and the gain becomes more pronounced at deeper levels, indicating that the system benefits from iterative refinement and scales effectively with recursion depth.

The authors evaluate RecursiveMAS across mixture-style, deliberation-style, and distillation-style collaboration paradigms, alongside systematic tests varying recursion depth against various agent baselines. The mixture and deliberation setups validate the framework's capacity to integrate diverse expertise and enhance collaborative reasoning, while the distillation configuration demonstrates its ability to balance accuracy with computational efficiency. Qualitatively, the system consistently outperforms individual specialists and standard baselines, particularly in cross-domain tasks and iterative refinement scenarios. Furthermore, increasing recursion depth progressively amplifies both performance and efficiency, confirming the method's robust scalability in latent-space collaboration.