Papers

Cutting-edge AI research papers, updated daily, to help you keep up with the latest AI trends

Compose Your Policies! Improving Diffusion-based or Flow-based Robot Policies via Test-time Distribution-level Composition

Large Reasoning Models Learn Better Alignment from Flawed Thinking

Compose Your Policies! Improving Diffusion-based or Flow-based Robot Policies via Test-time Distribution-level Composition

Large Reasoning Models Learn Better Alignment from Flawed Thinking

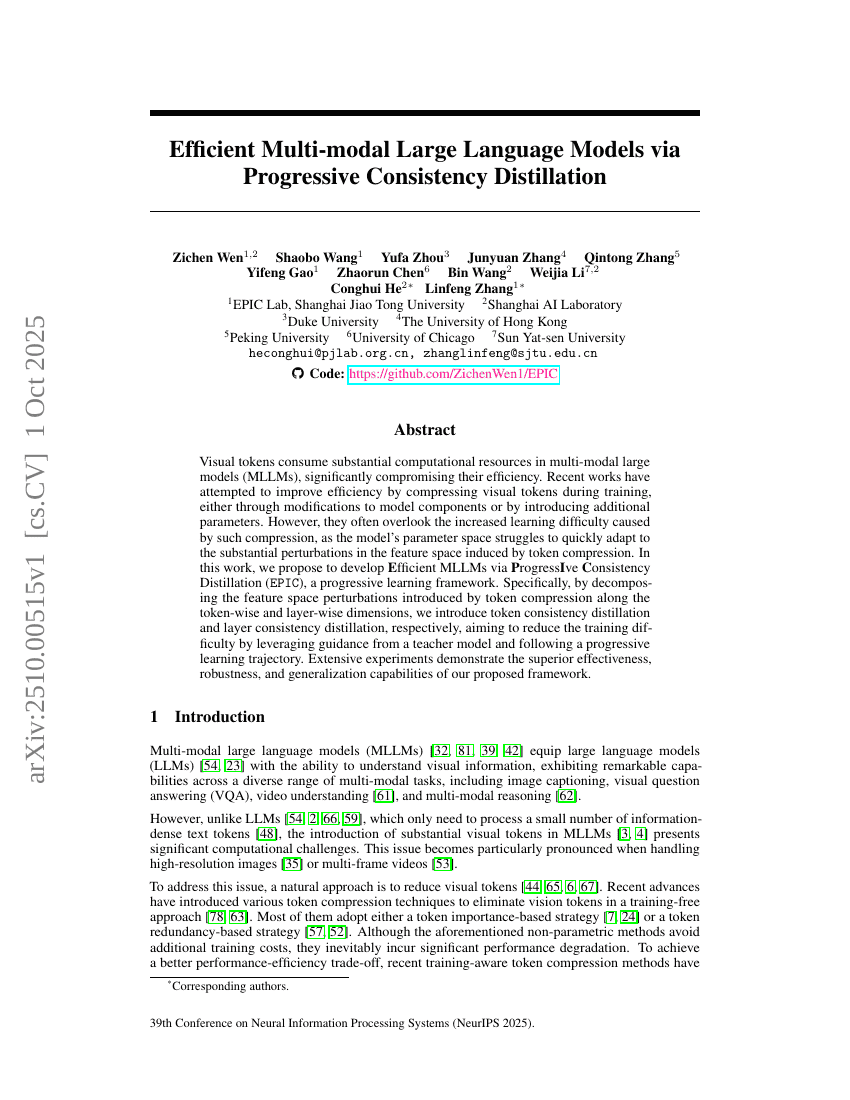

Efficient Multi-modal Large Language Models via Progressive Consistency Distillation

Apriel-1.5-15b-Thinker

StockBench: Can LLM Agents Trade Stocks Profitably In Real-world Markets?

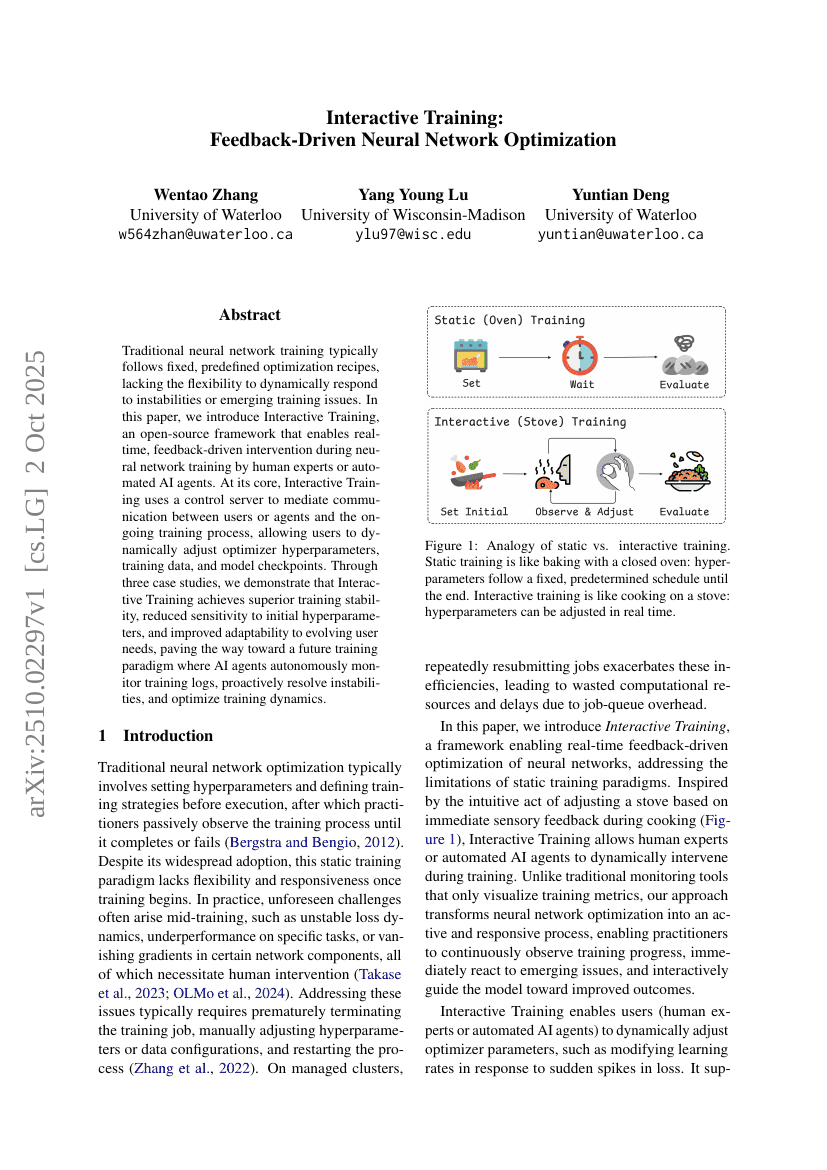

Interactive Training: Feedback-Driven Neural Network Optimization

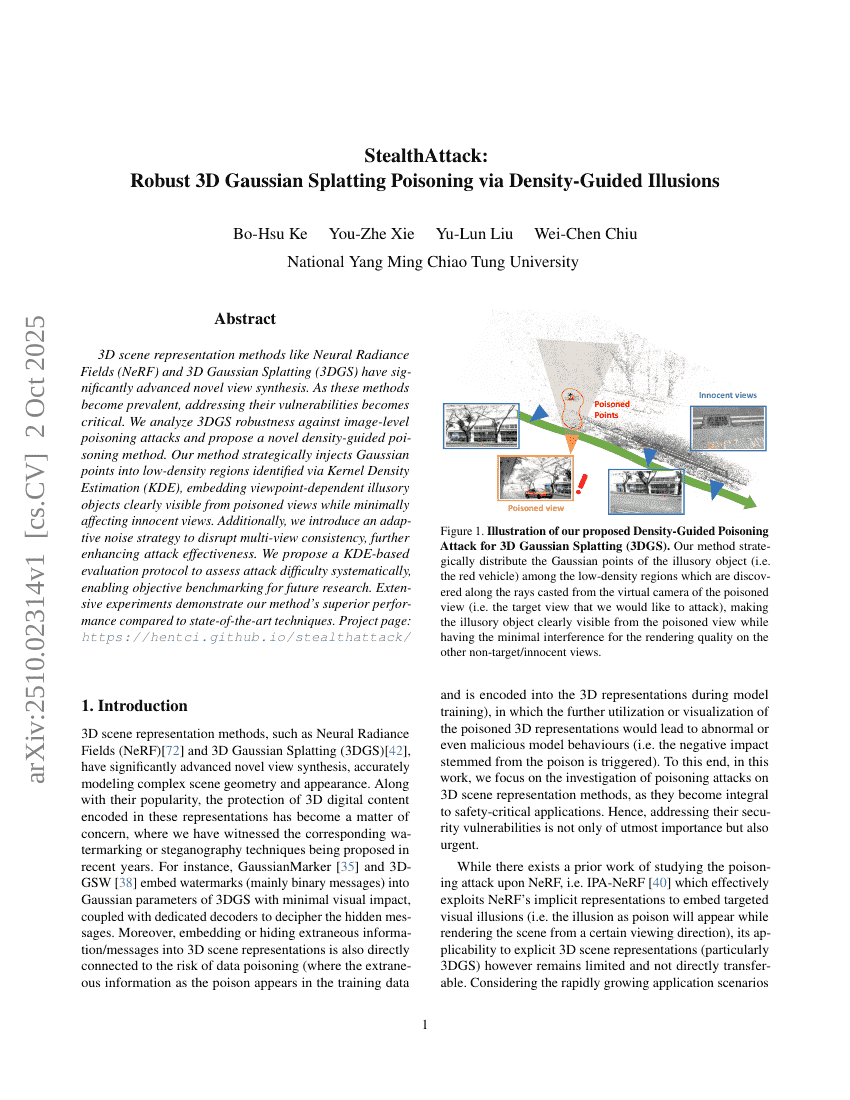

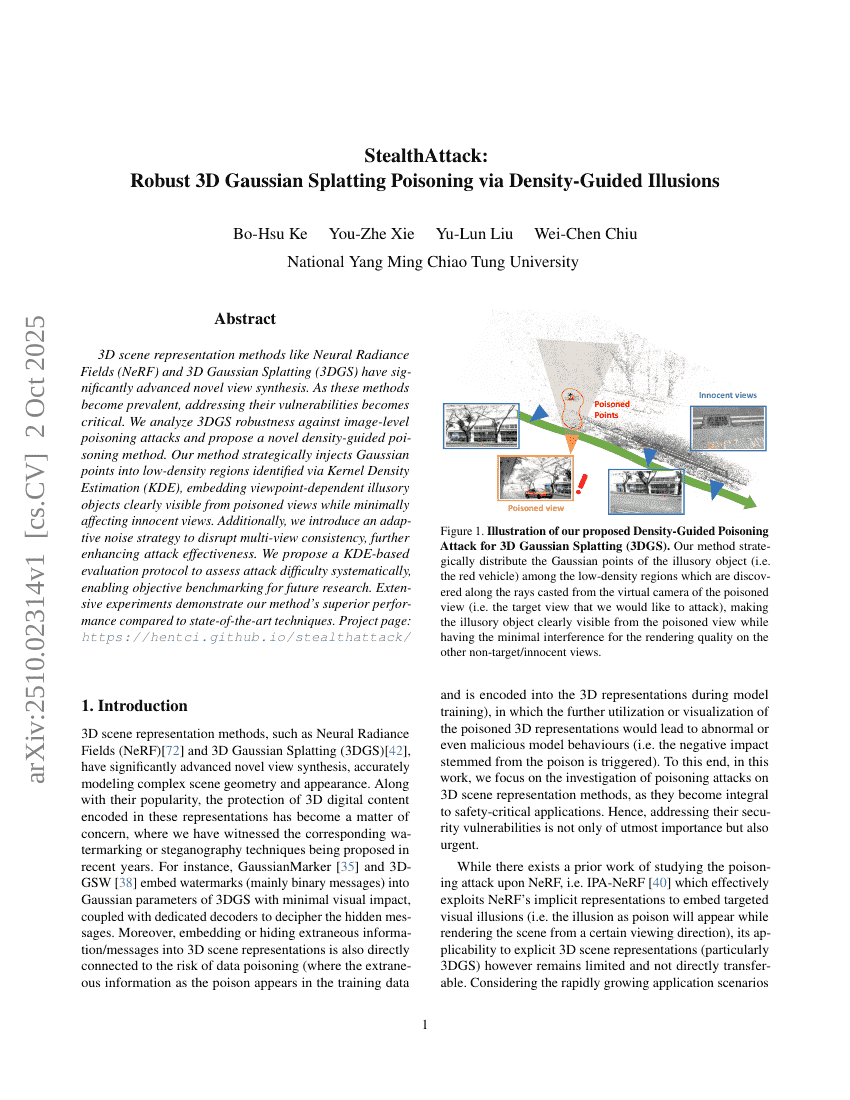

StealthAttack: Robust 3D Gaussian Splatting Poisoning via Density-Guided Illusions

ExGRPO: Learning to Reason from Experience

Self-Forcing++: Towards Minute-Scale High-Quality Video Generation

LongCodeZip: Compress Long Context for Code Language Models

PIPer: On-Device Environment Setup via Online Reinforcement Learning

Rethinking Reward Models for Multi-Domain Test-Time Scaling

Knapsack RL: Unlocking Exploration of LLMs via Optimizing Budget Allocation

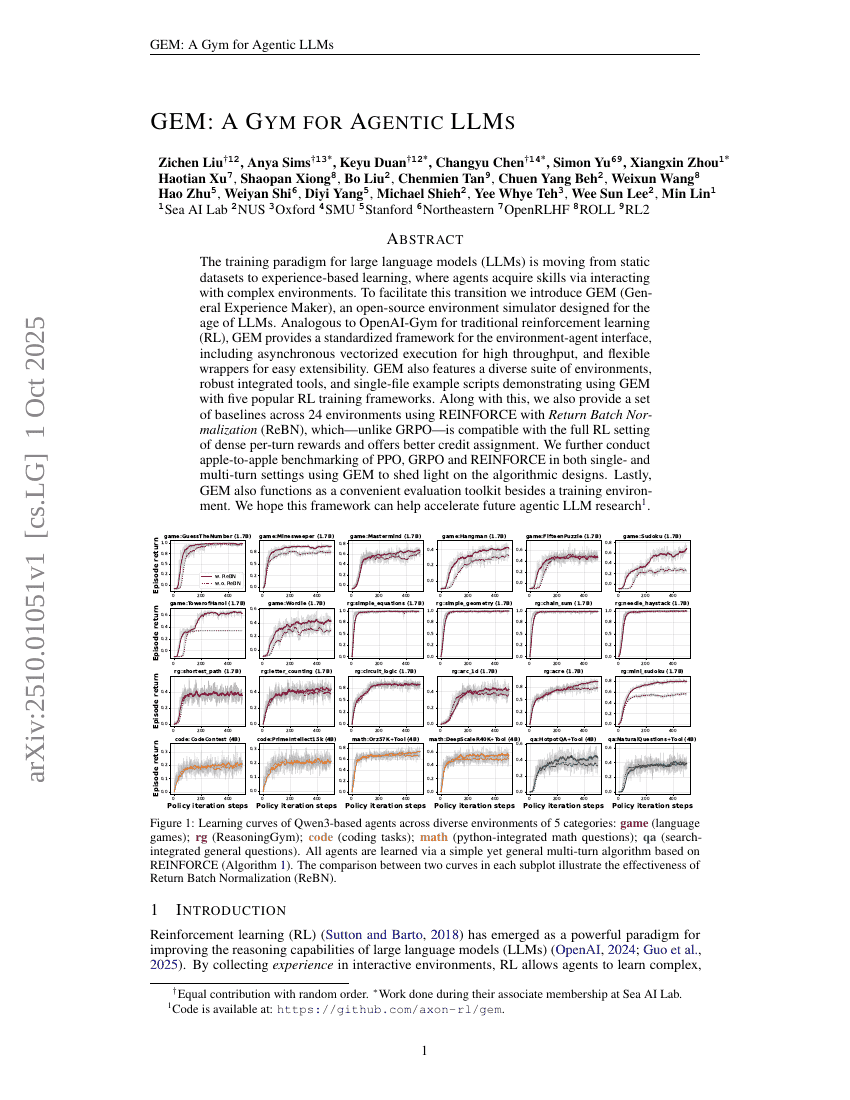

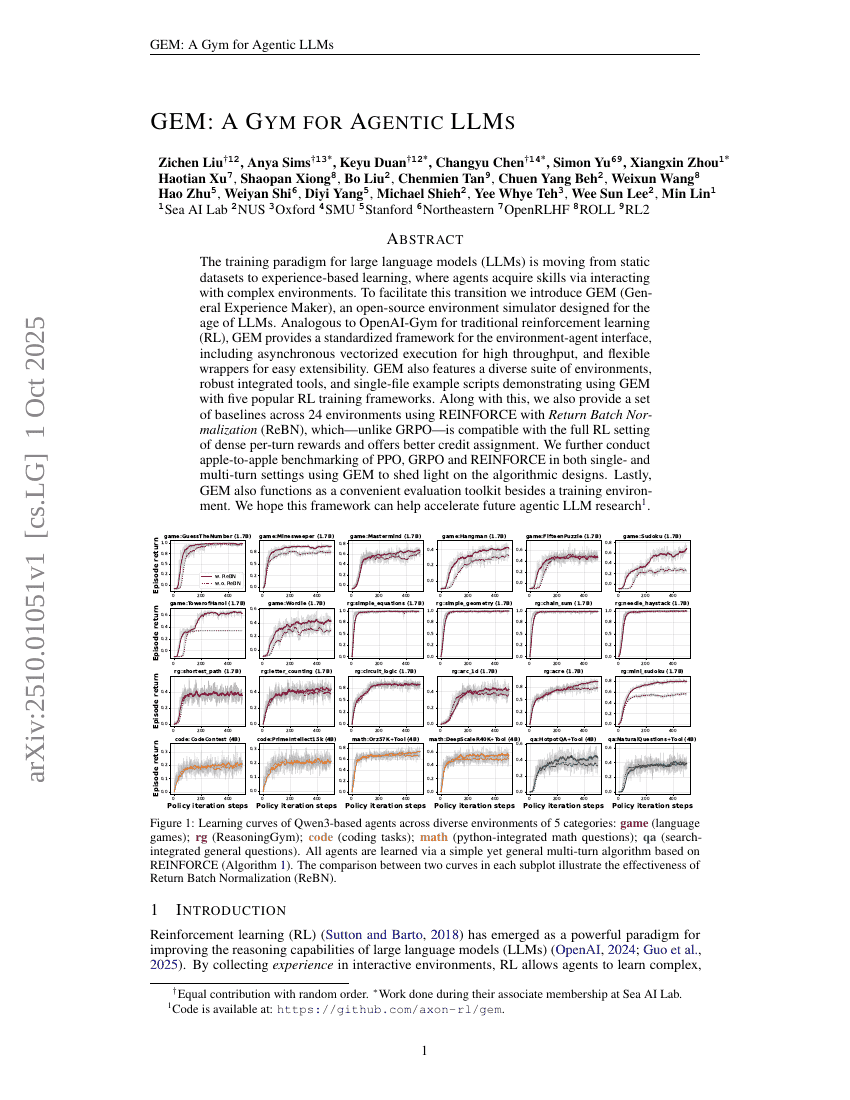

GEM: A Gym for Agentic LLMs

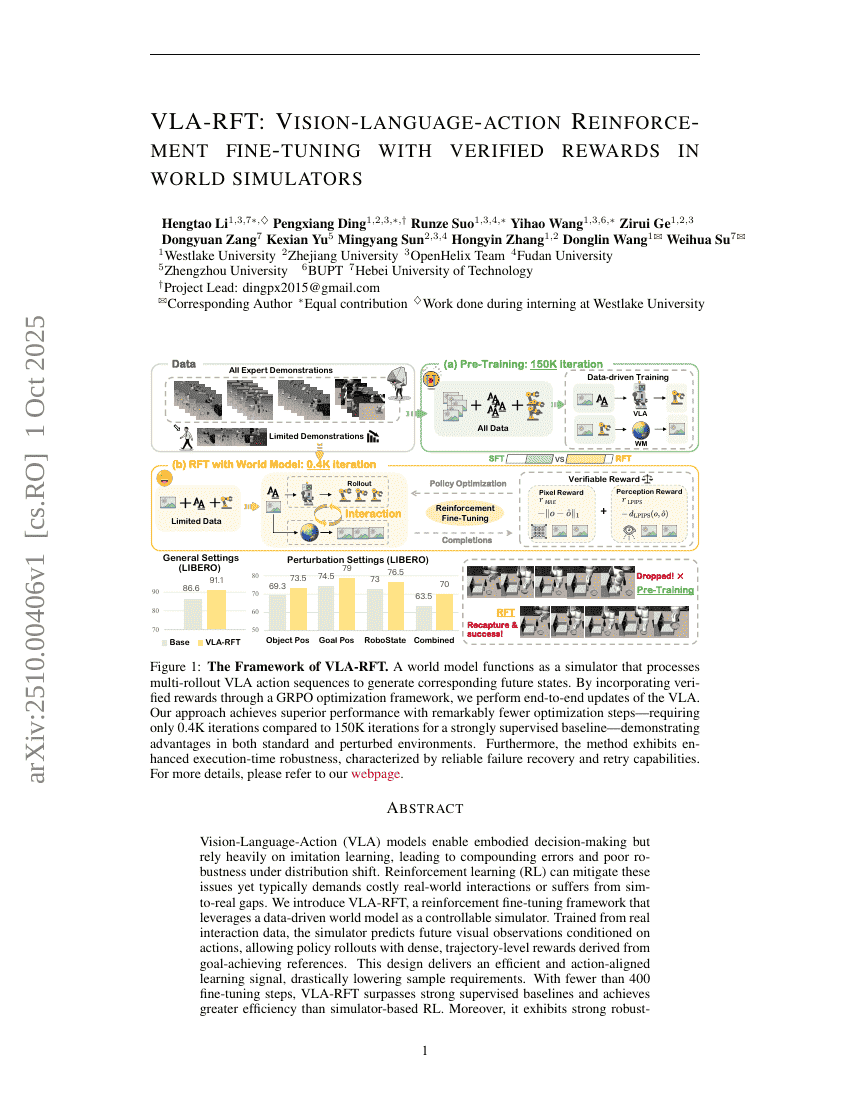

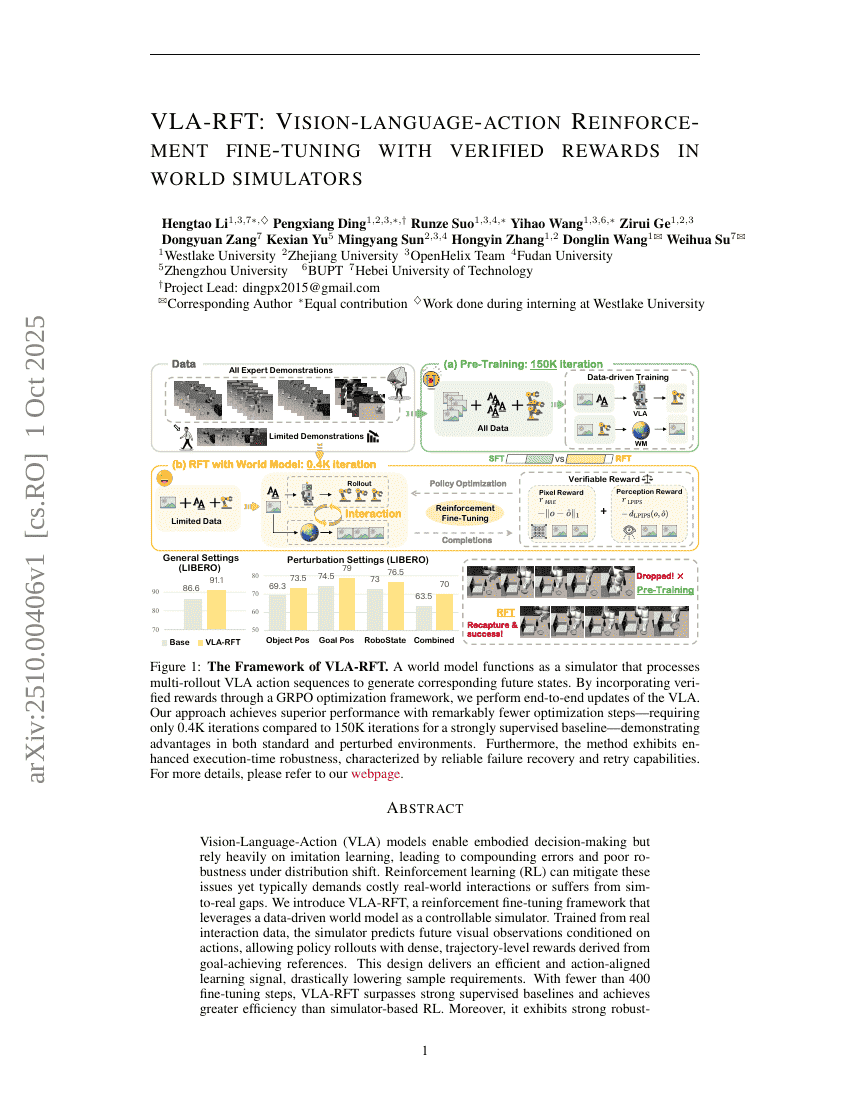

VLA-RFT: Vision-Language-Action Reinforcement Fine-tuning with Verified Rewards in World Simulators

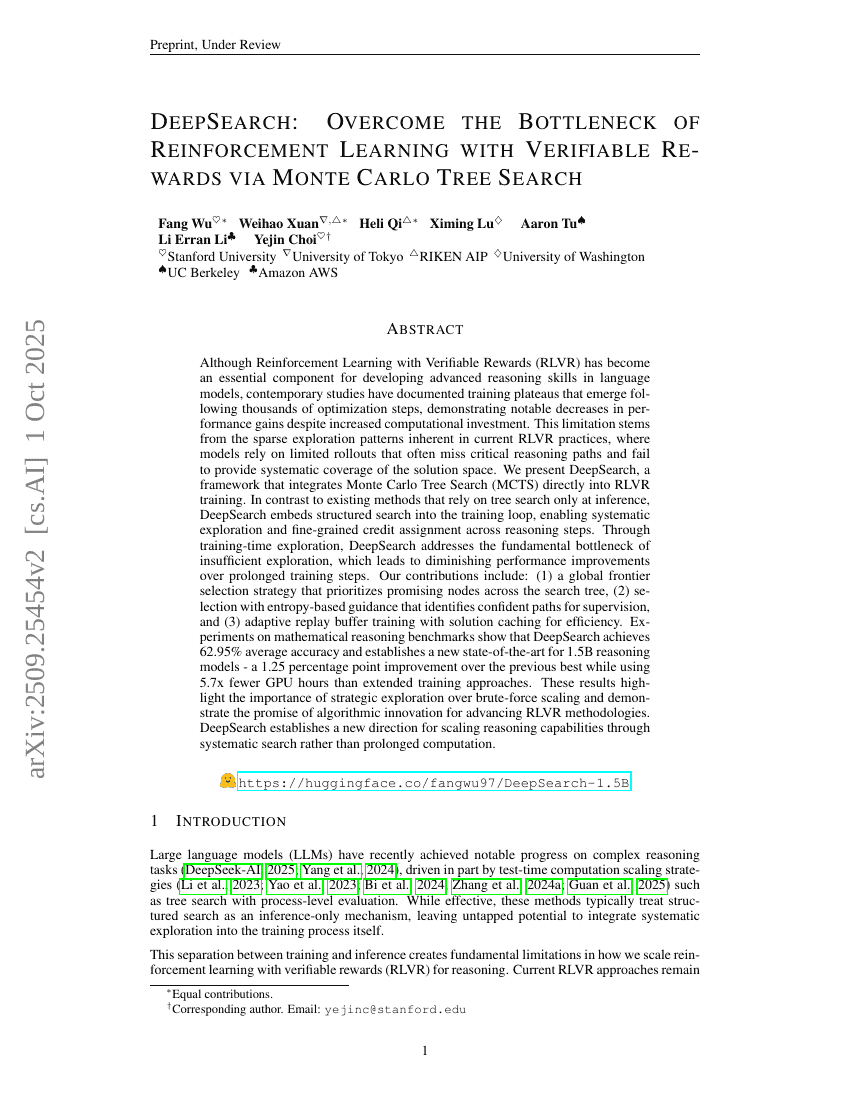

DeepSearch: Overcome the Bottleneck of Reinforcement Learning with Verifiable Rewards via Monte Carlo Tree Search

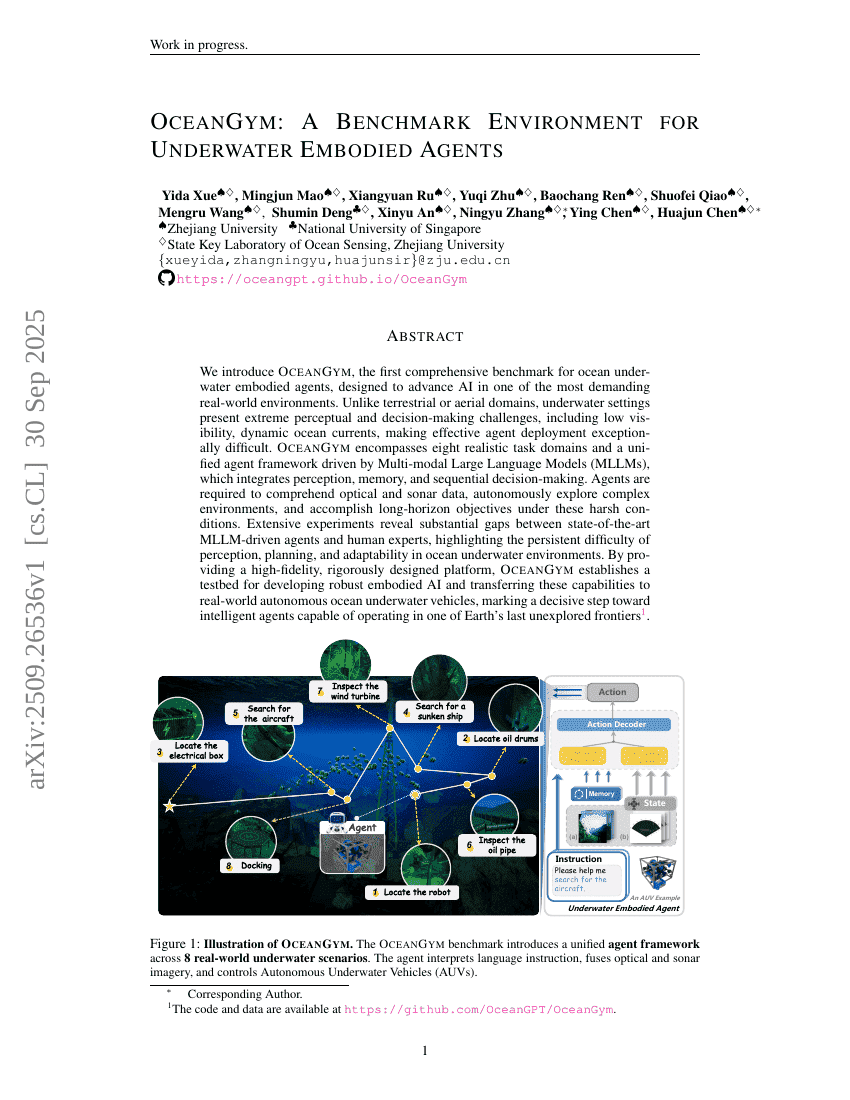

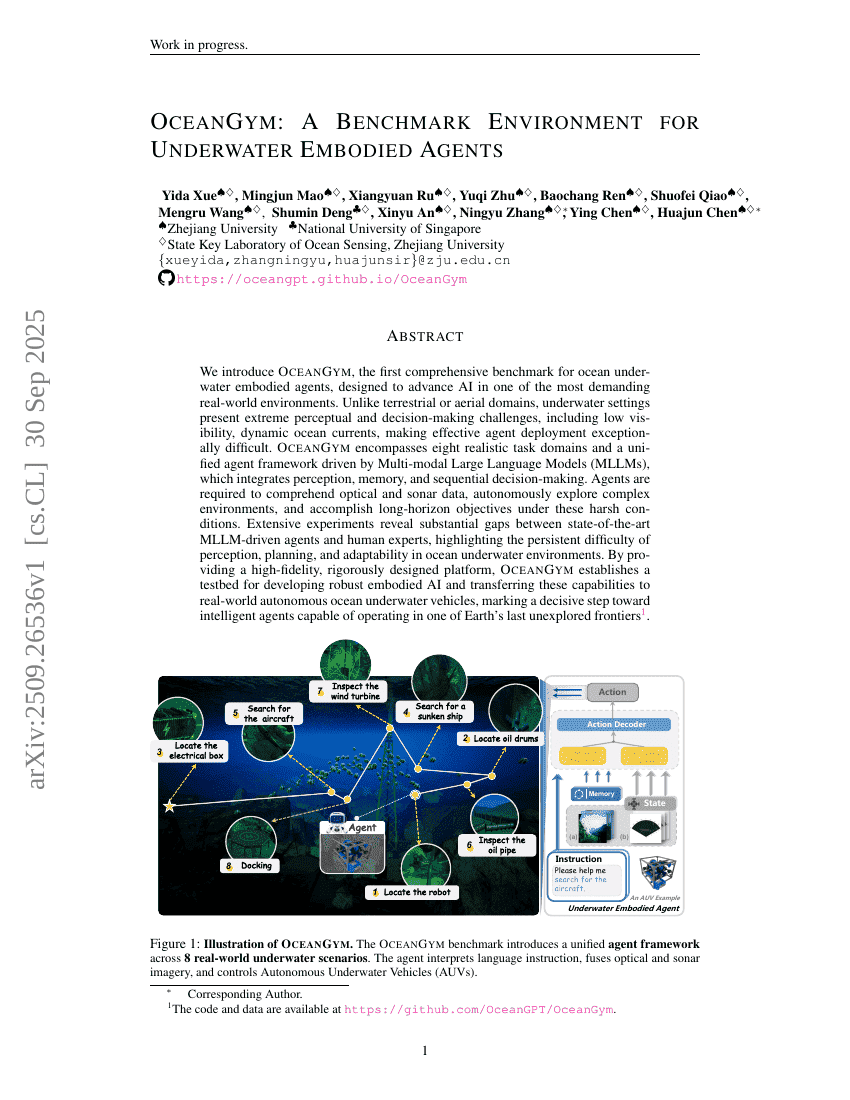

OceanGym: A Benchmark Environment for Underwater Embodied Agents

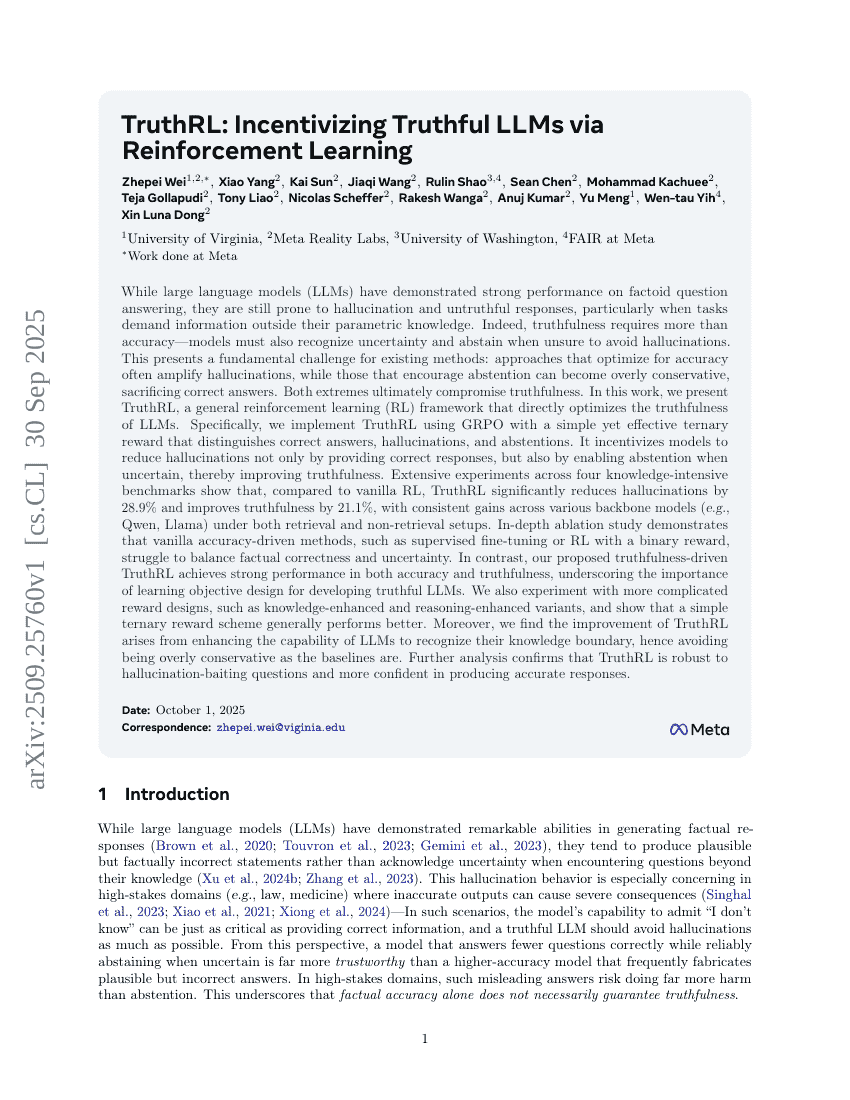

TruthRL: Incentivizing Truthful LLMs via Reinforcement Learning

Winning the Pruning Gamble: A Unified Approach to Joint Sample and Token Pruning for Efficient Supervised Fine-Tuning

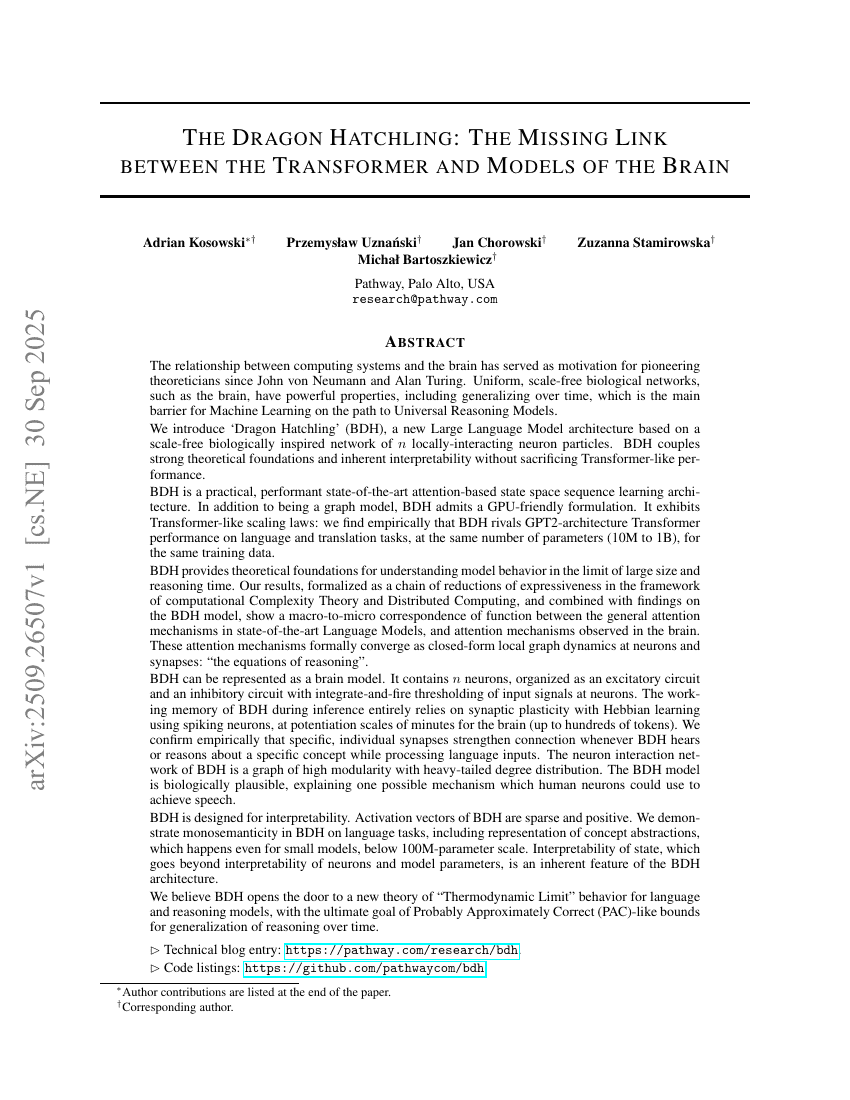

The Dragon Hatchling: The Missing Link between the Transformer and Models of the Brain

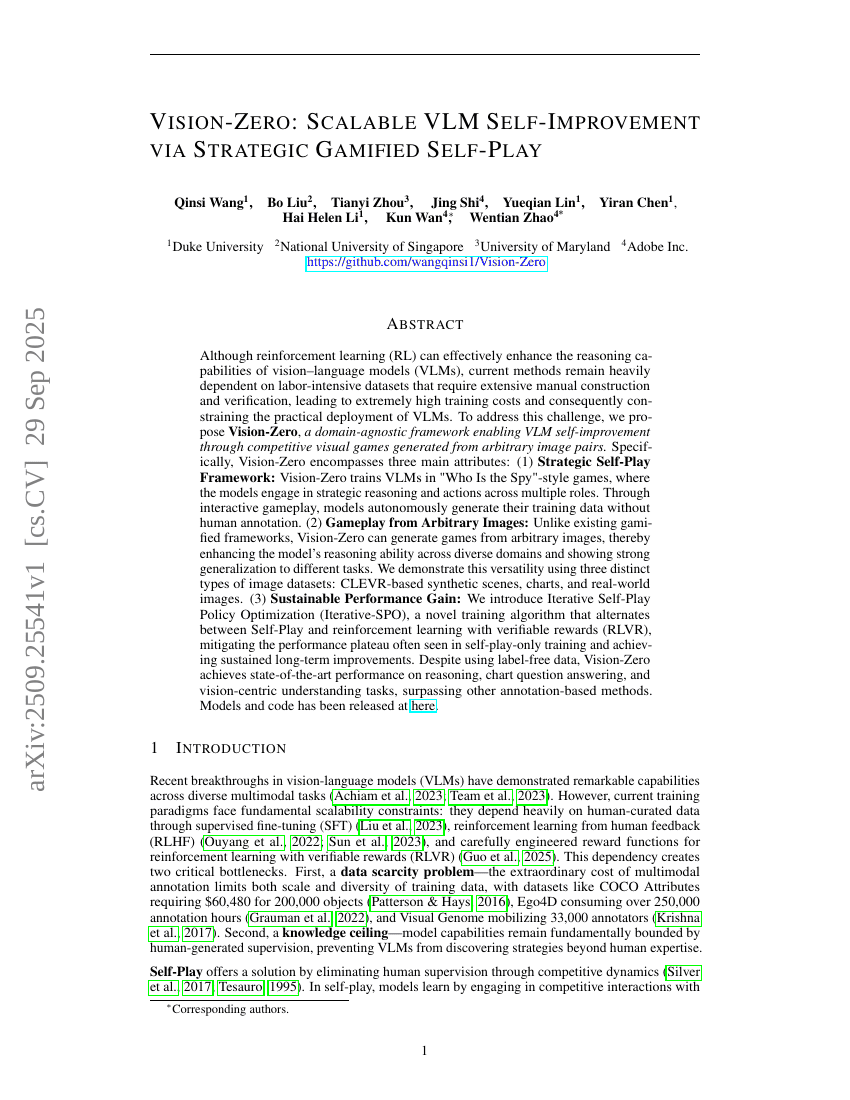

Vision-Zero: Scalable VLM Self-Improvement via Strategic Gamified Self-Play

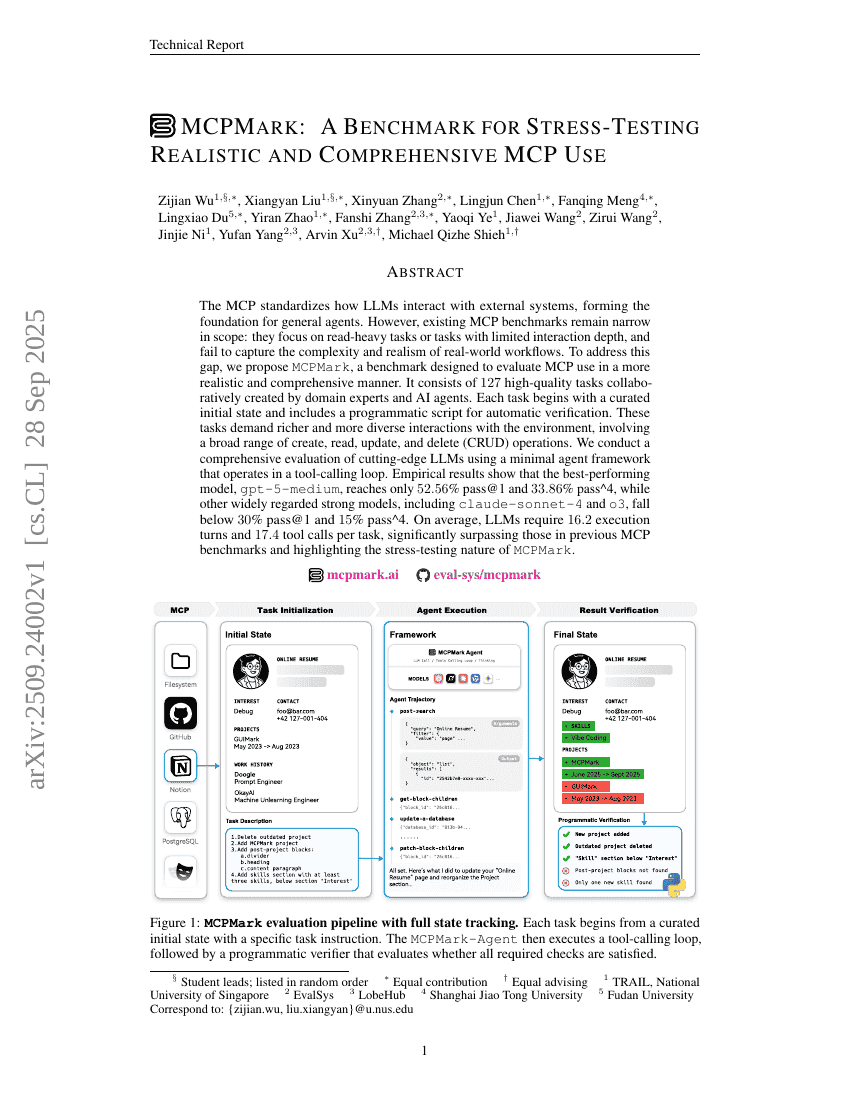

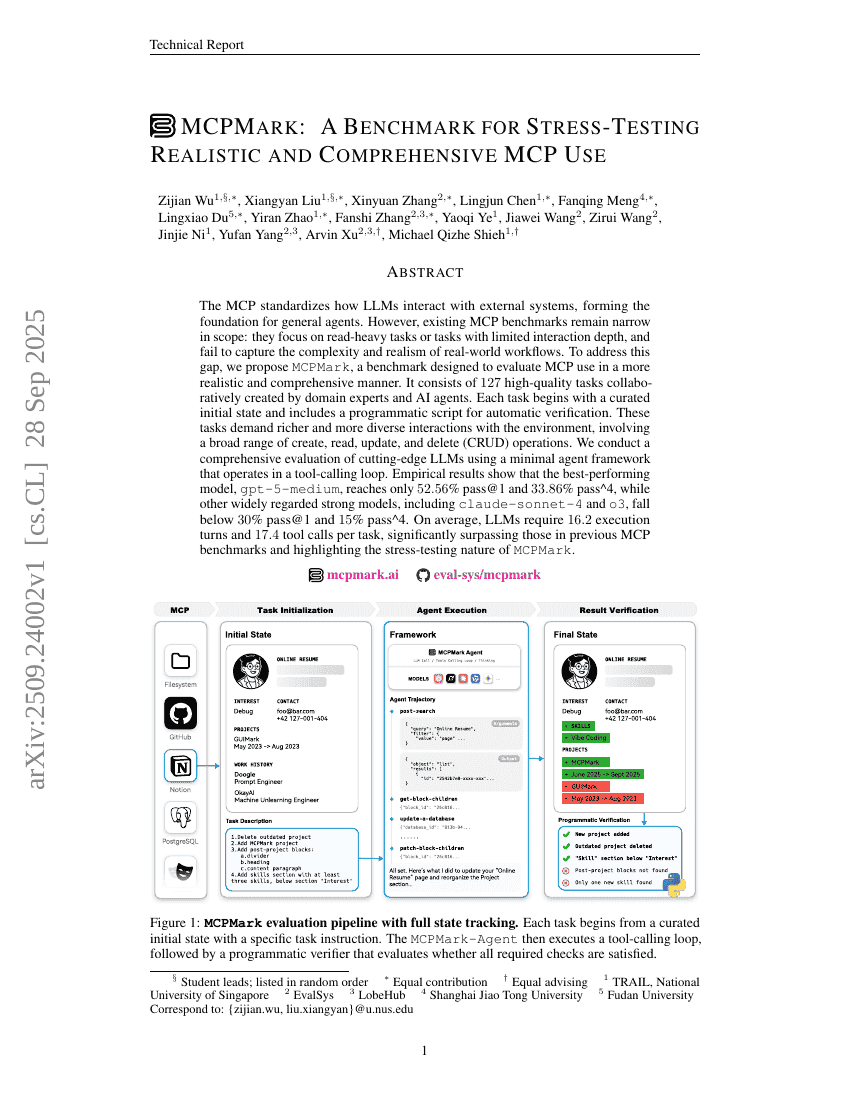

MCPMark: A Benchmark for Stress-Testing Realistic and Comprehensive MCP Use

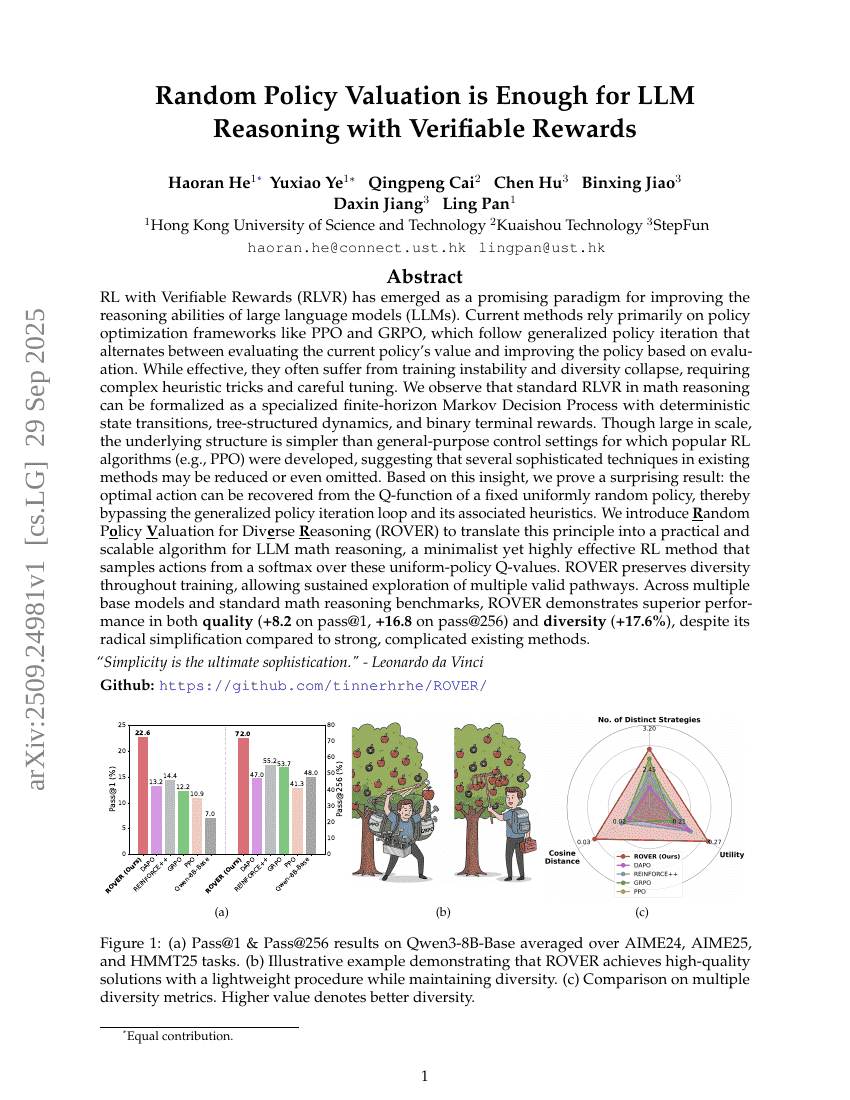

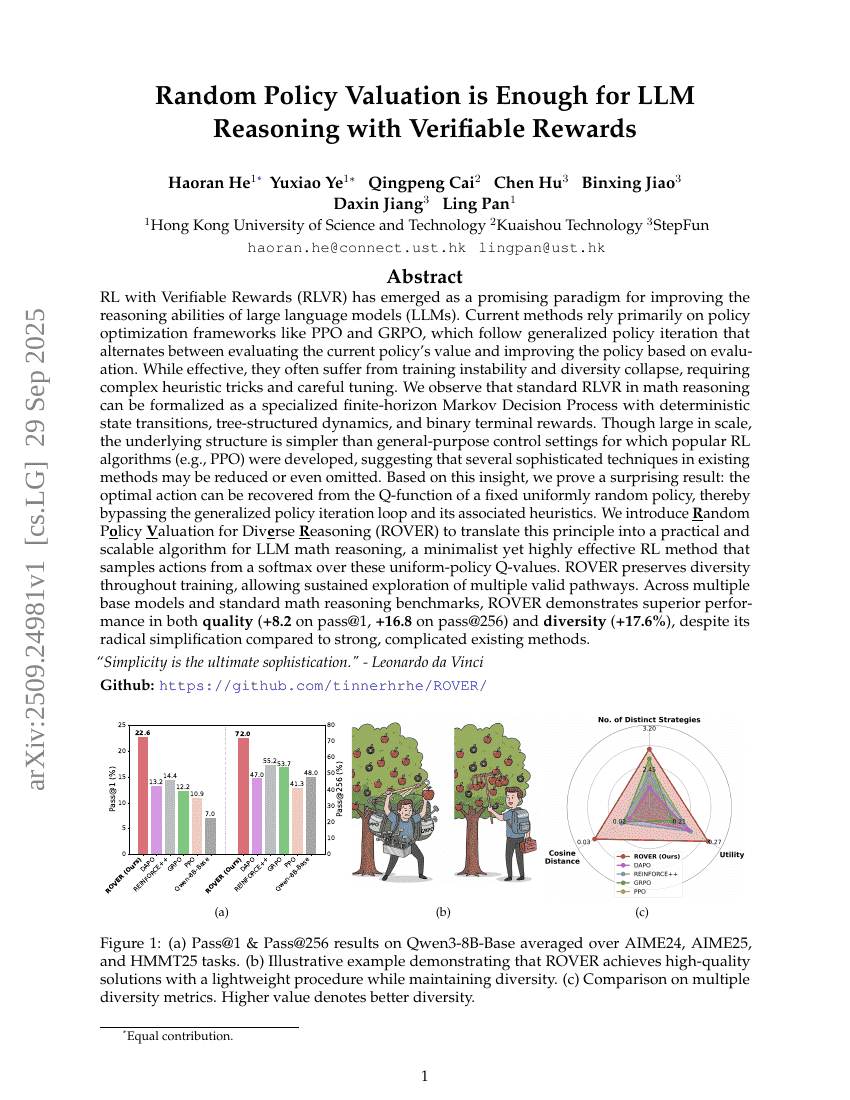

Random Policy Valuation is Enough for LLM Reasoning with Verifiable Rewards

Democratizing AI scientists using ToolUniverse

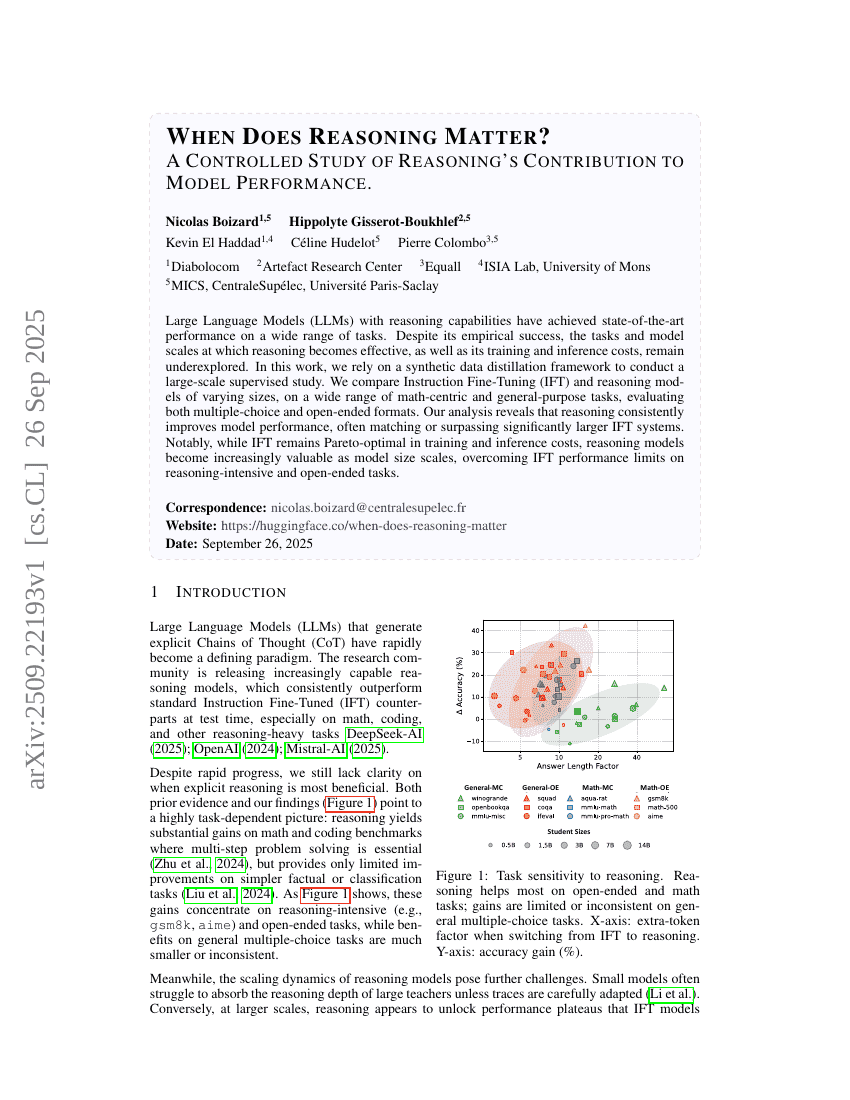

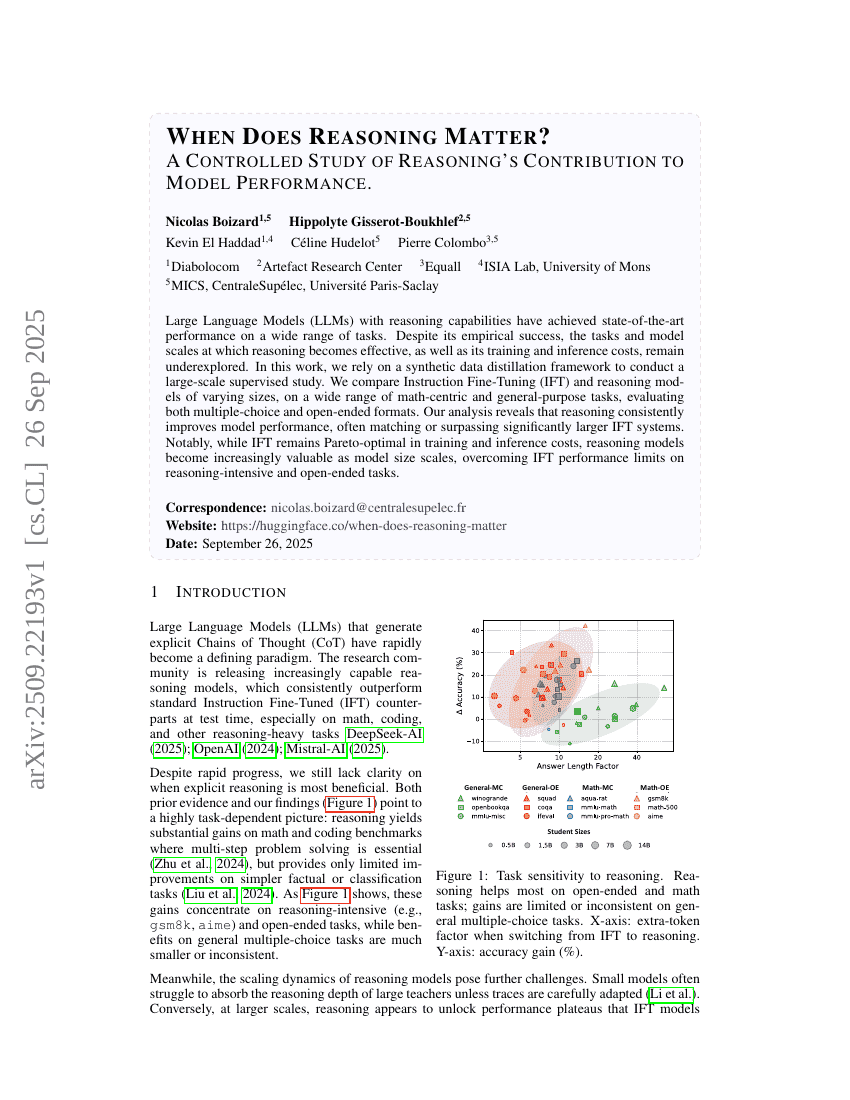

When Does Reasoning Matter? A Controlled Study of Reasoning's Contribution to Model Performance

Multiplayer Nash Preference Optimization

StableToken: A Noise-Robust Semantic Speech Tokenizer for Resilient SpeechLLMs

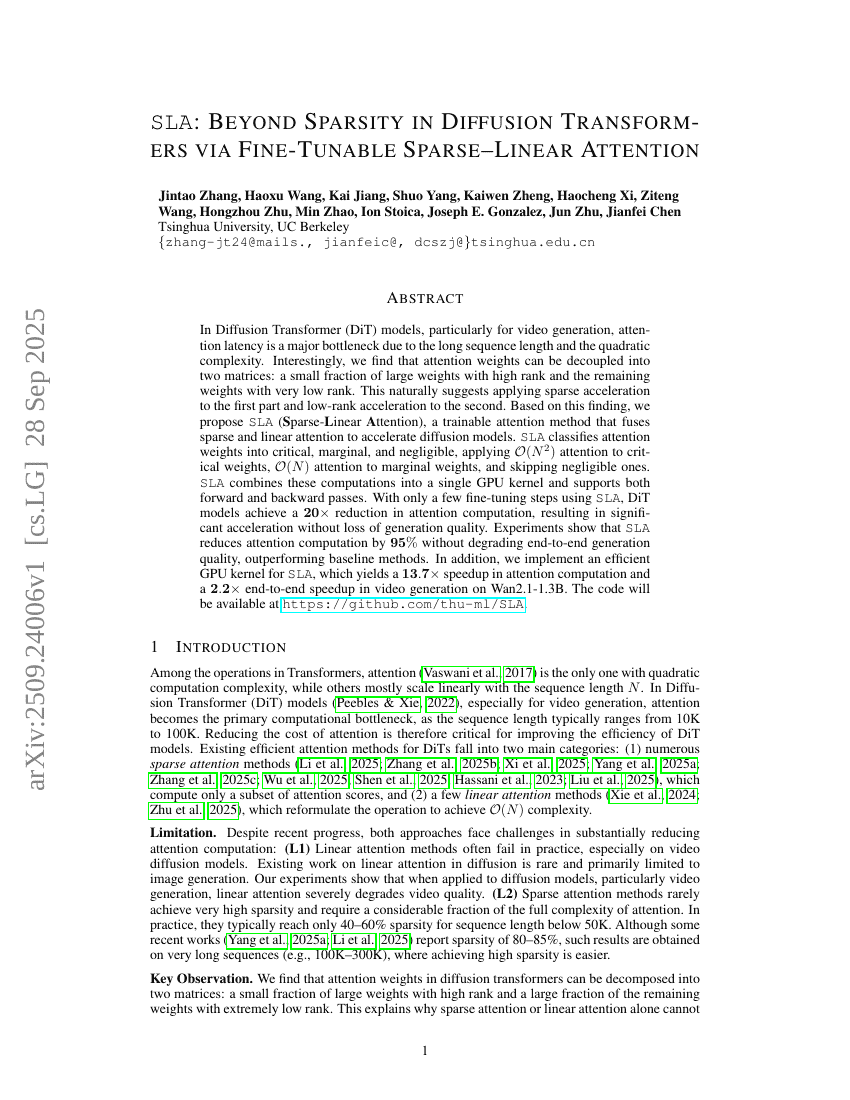

SLA: Beyond Sparsity in Diffusion Transformers via Fine-Tunable Sparse-Linear Attention

SimpleFold: Folding Proteins is Simpler than You Think

POINTS-Reader: Distillation-Free Adaptation of Vision-Language Models for Document Conversion

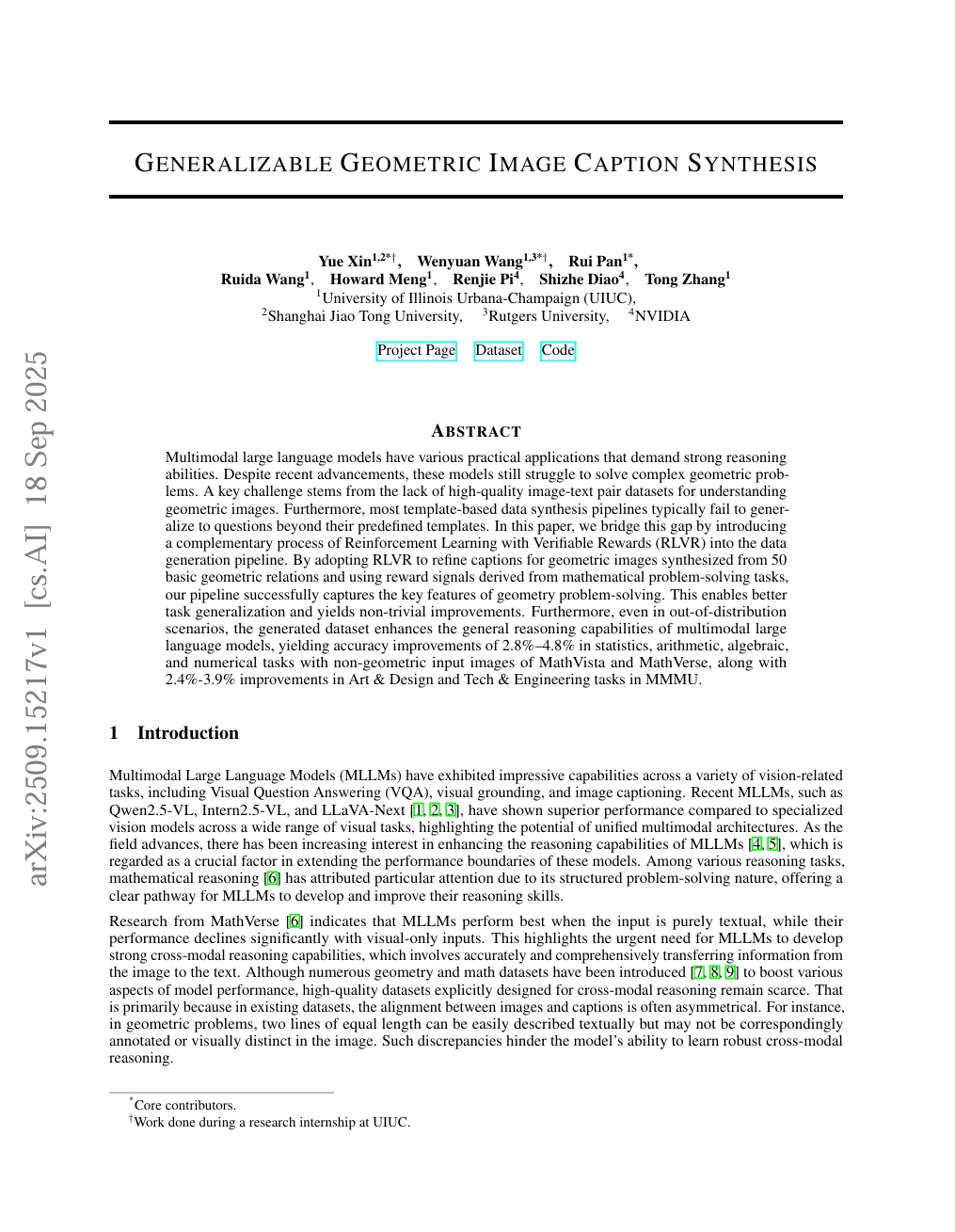

Generalizable Geometric Image Caption Synthesis

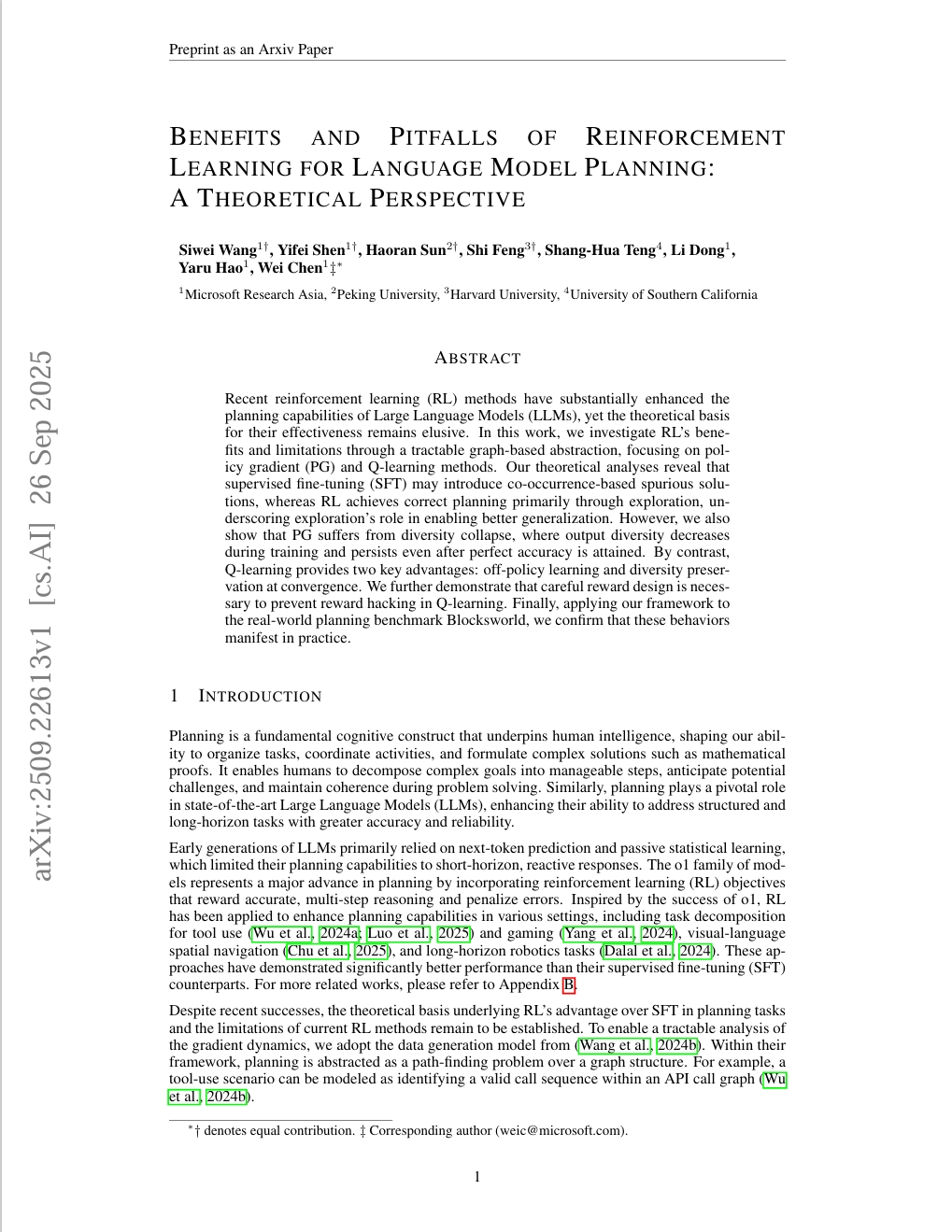

Benefits and Pitfalls of Reinforcement Learning for Language Model Planning: A Theoretical Perspective
