Papers

Cutting-edge AI research papers, updated daily, to help you keep up with the latest AI trends

Wait, We Don't Need to "Wait"! Removing Thinking Tokens Improves Reasoning Efficiency

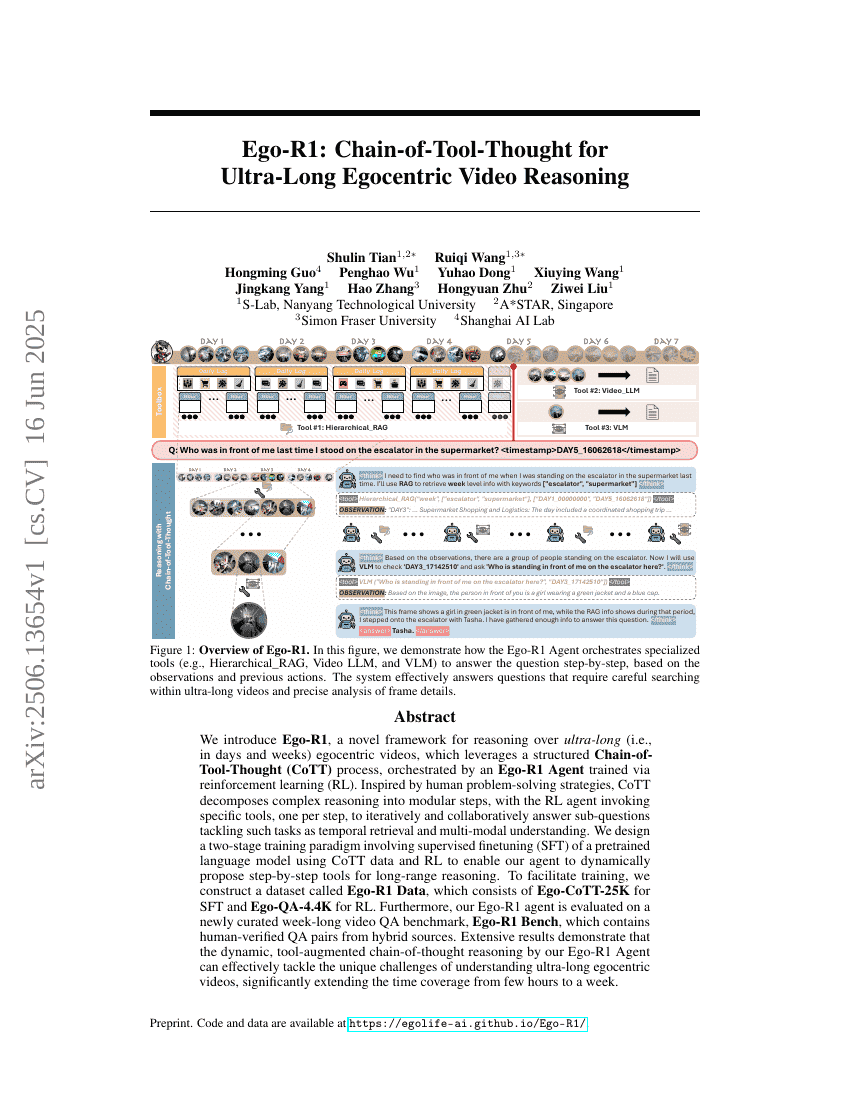

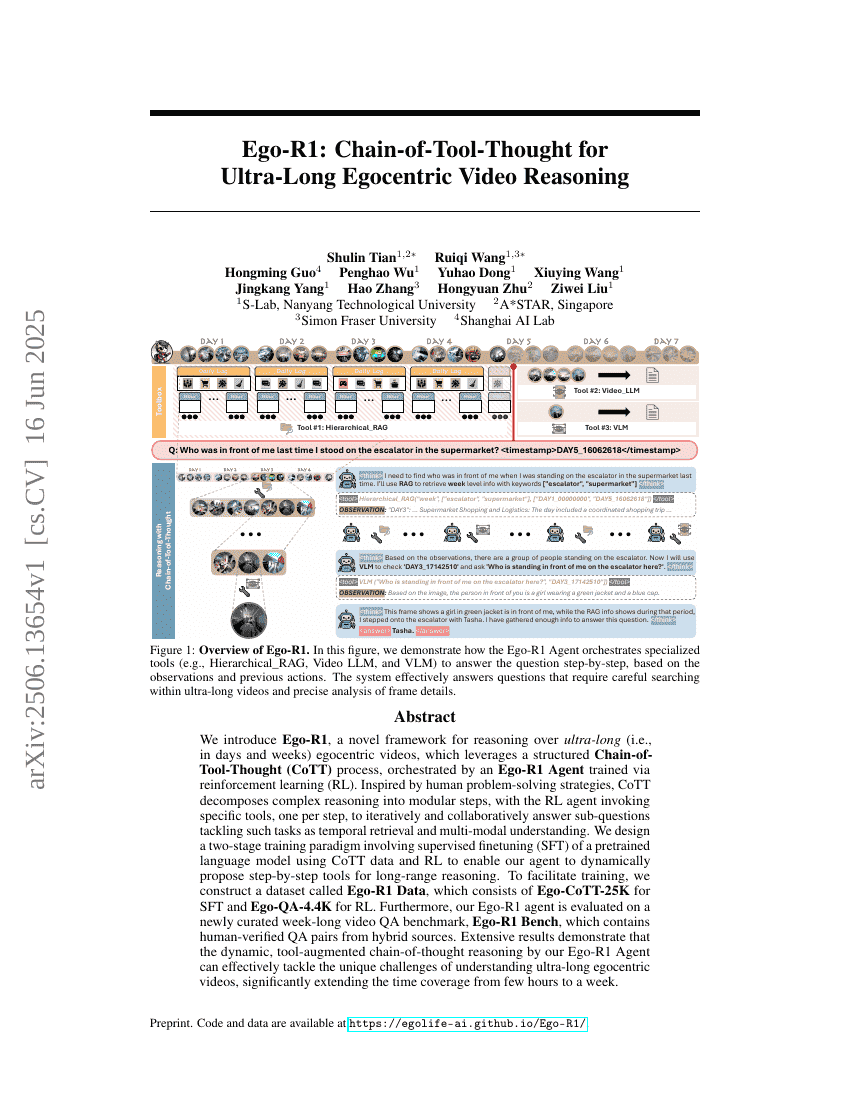

Ego-R1: Chain-of-Tool-Thought for Ultra-Long Egocentric Video Reasoning

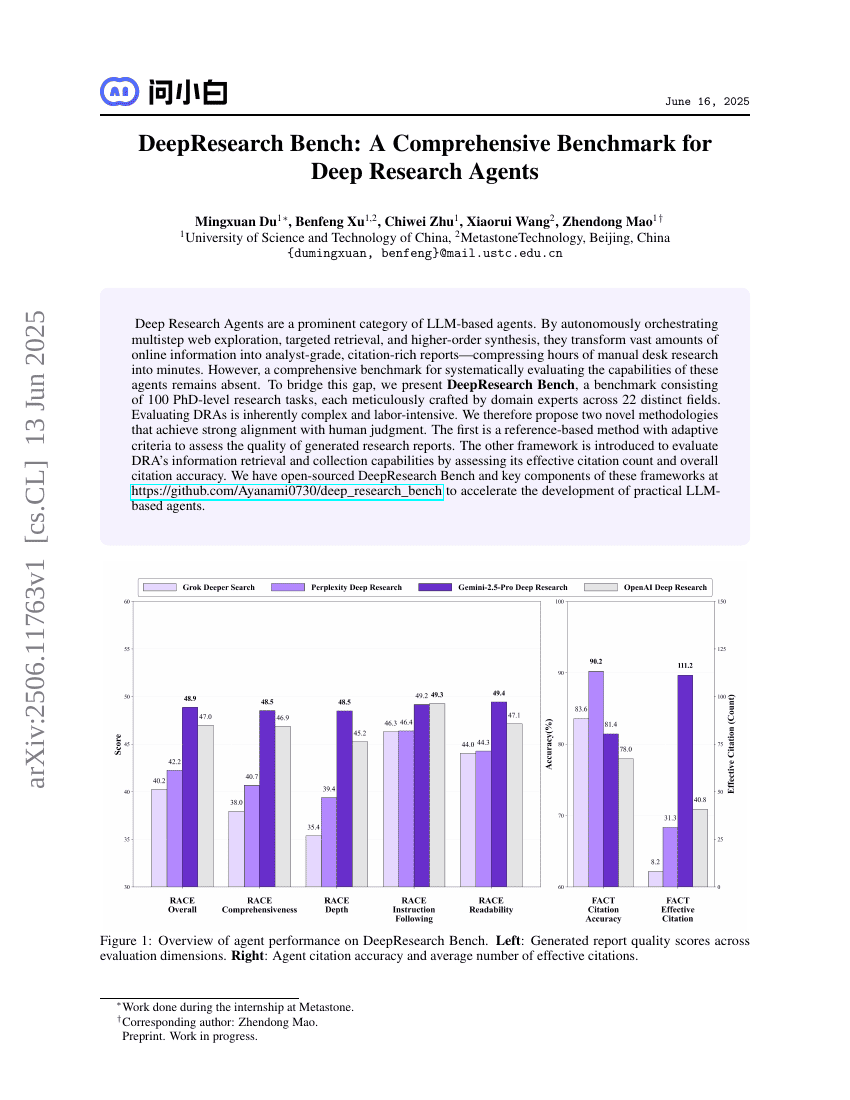

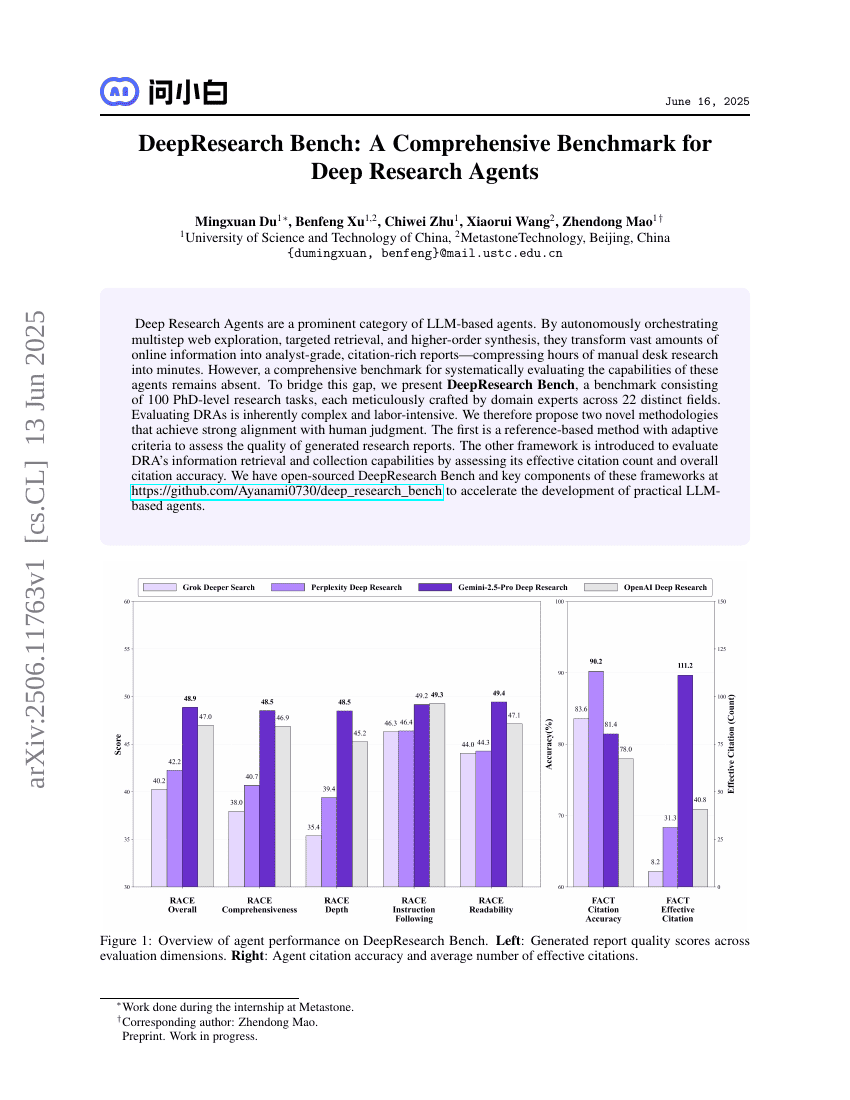

DeepResearch Bench: A Comprehensive Benchmark for Deep Research Agents

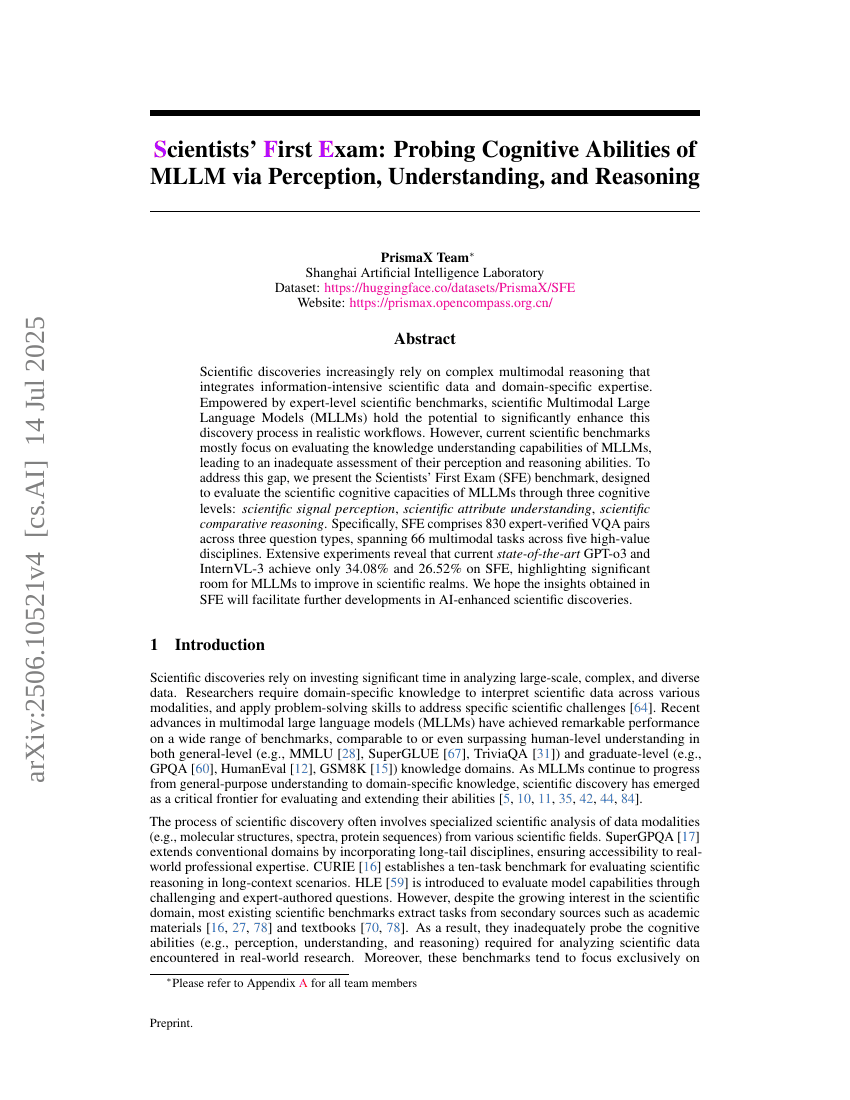

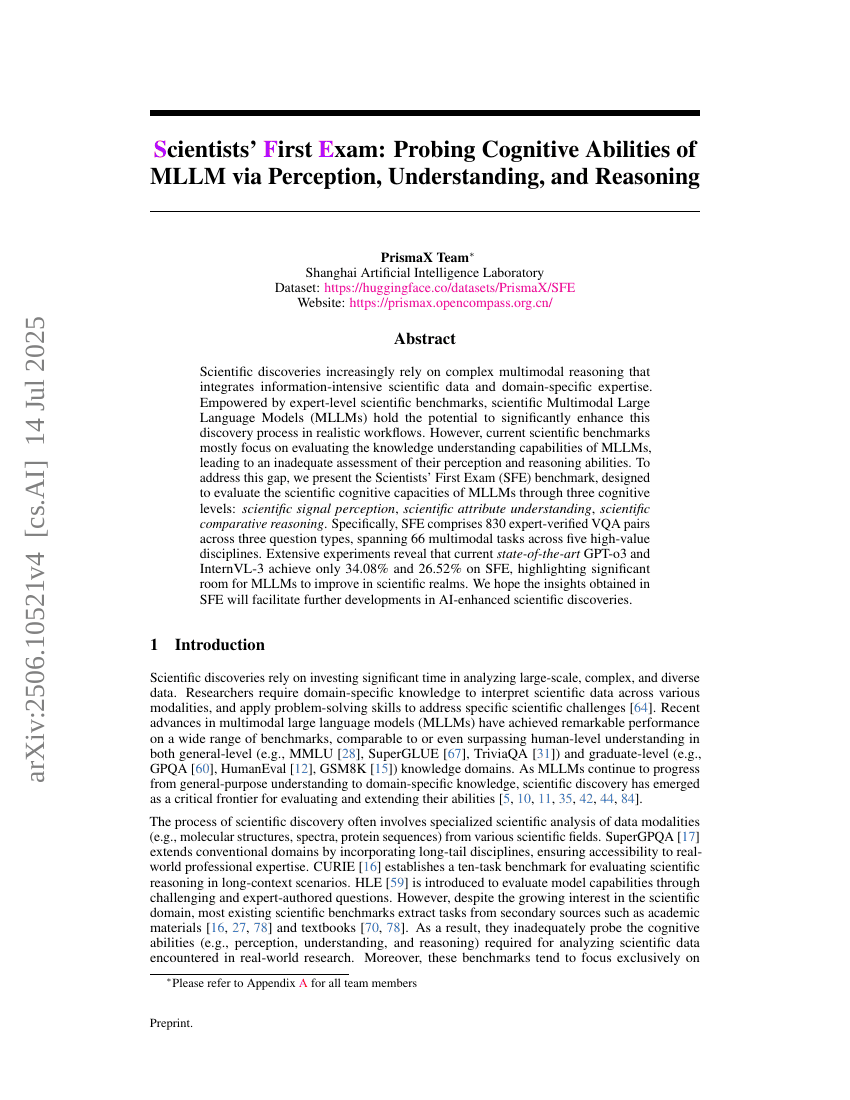

Scientists' First Exam: Probing Cognitive Abilities of MLLM via Perception, Understanding, and Reasoning

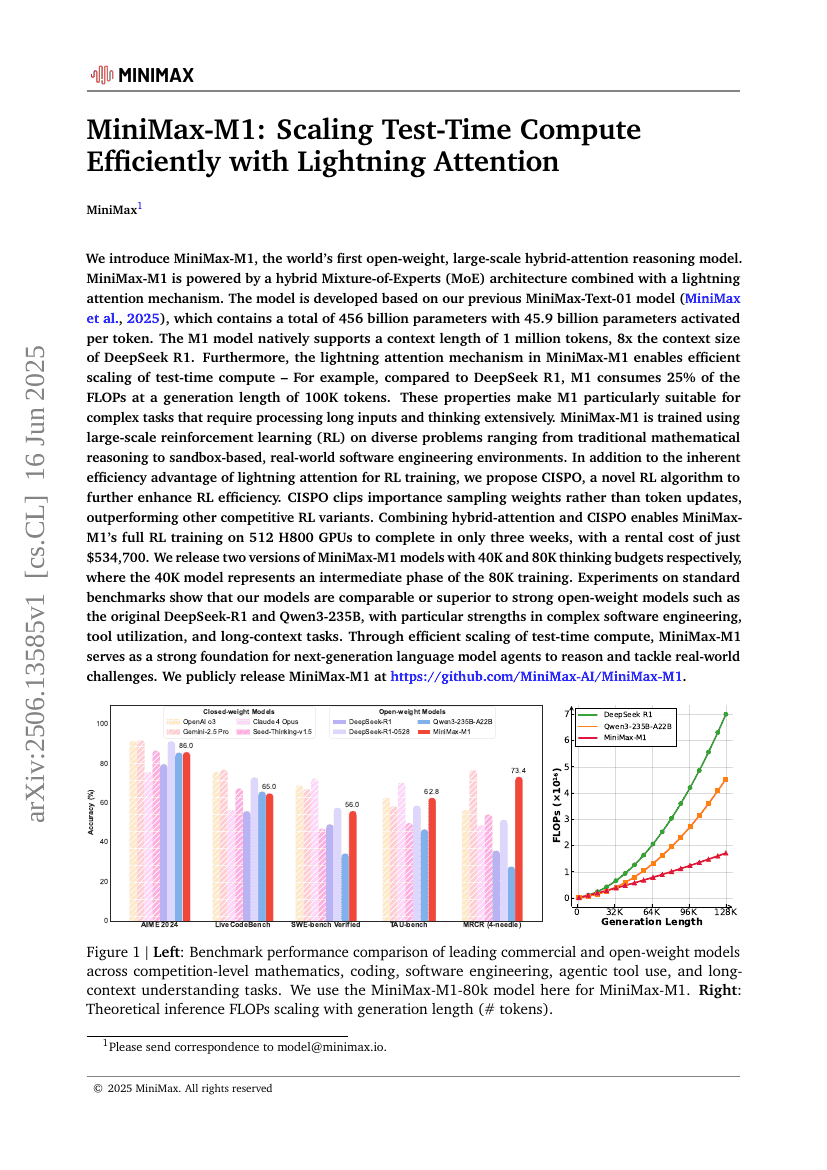

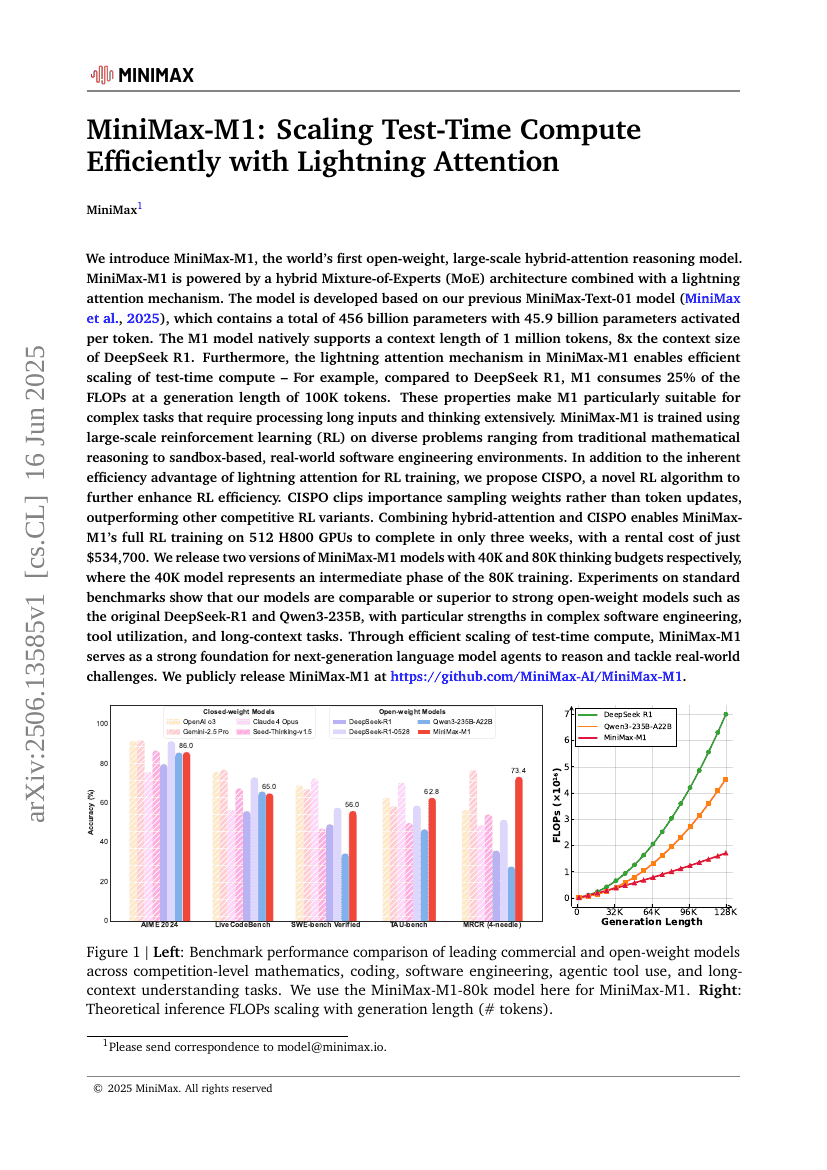

MiniMax-M1: Scaling Test-Time Compute Efficiently with Lightning Attention

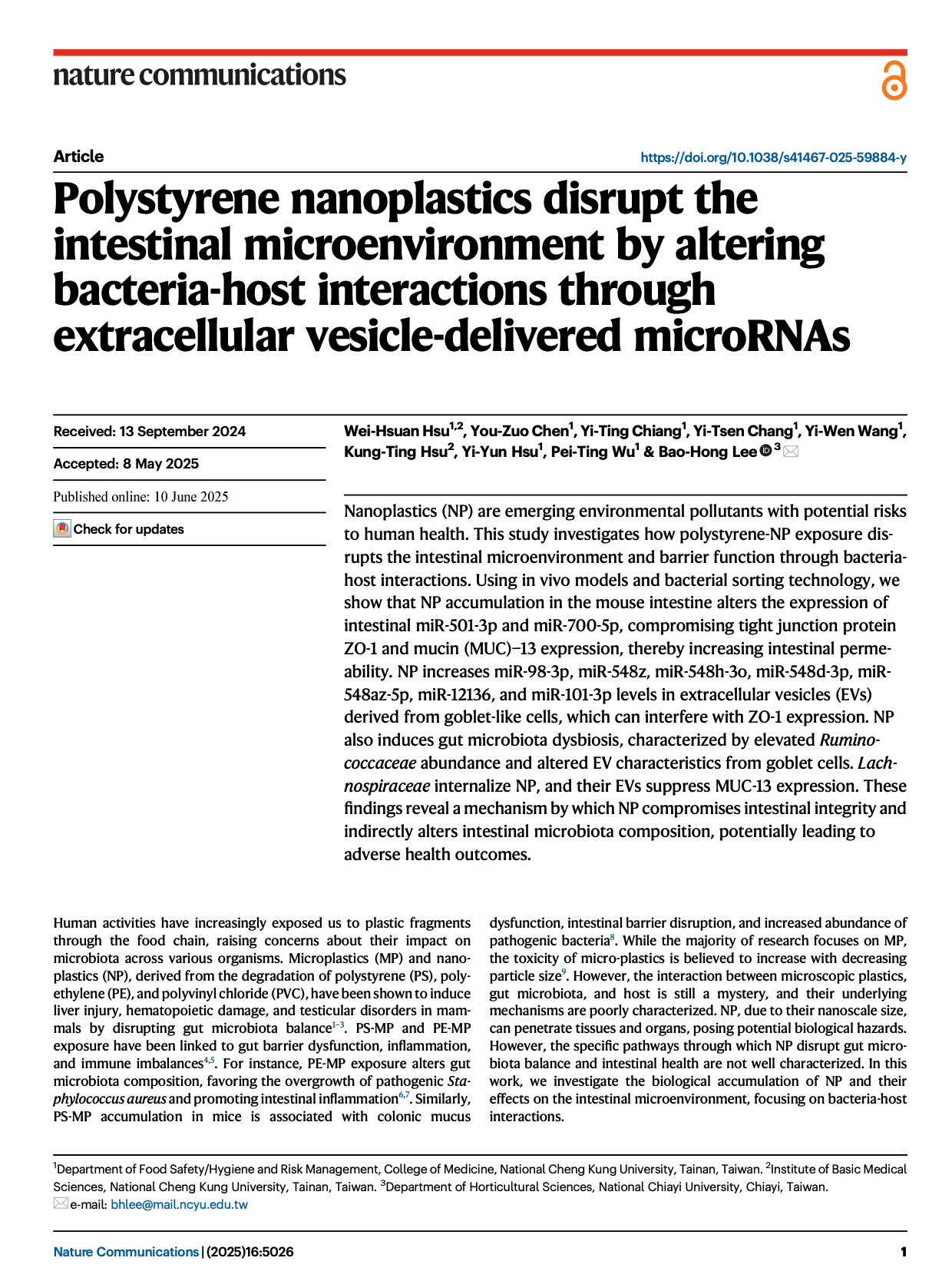

Polystyrene nanoplastics disrupt the intestinal microenvironment by altering bacteria-host interactions through extracellular vesicle-delivered microRNAs

Beyond Homogeneous Attention: Memory-Efficient LLMs via Fourier-Approximated KV Cache

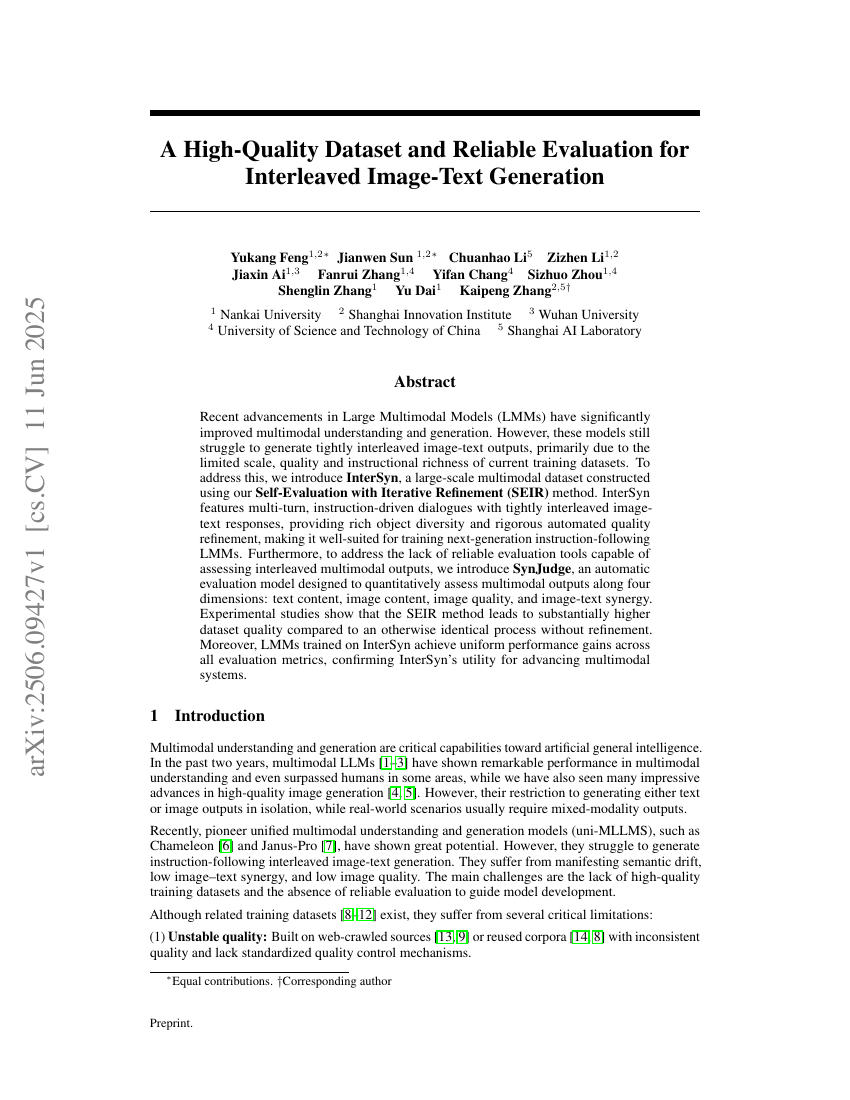

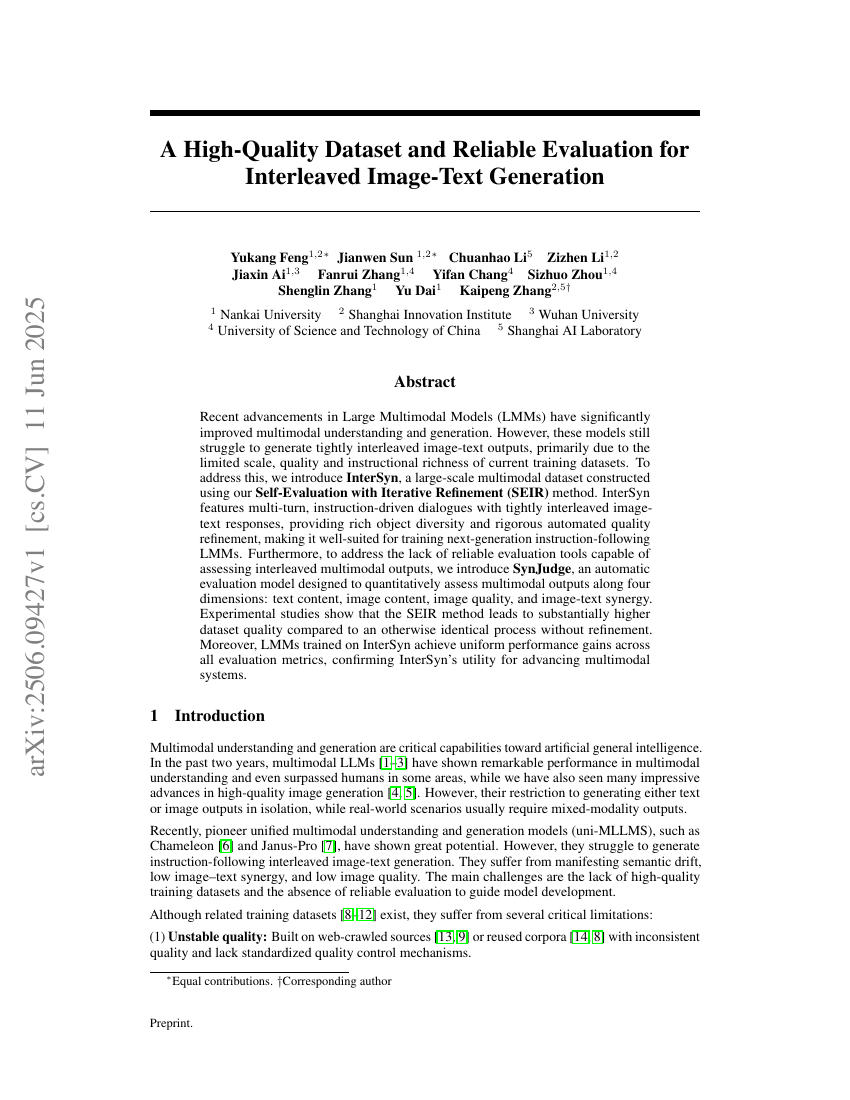

A High-Quality Dataset and Reliable Evaluation for Interleaved Image-Text Generation

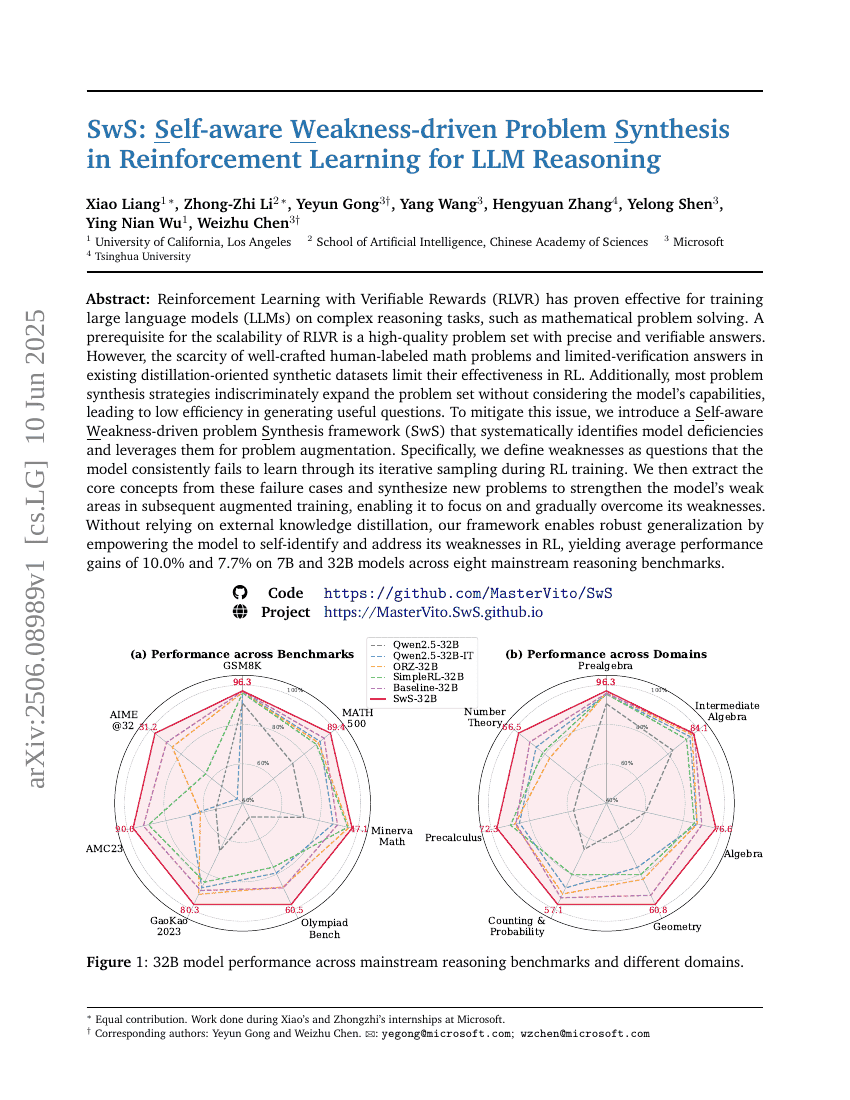

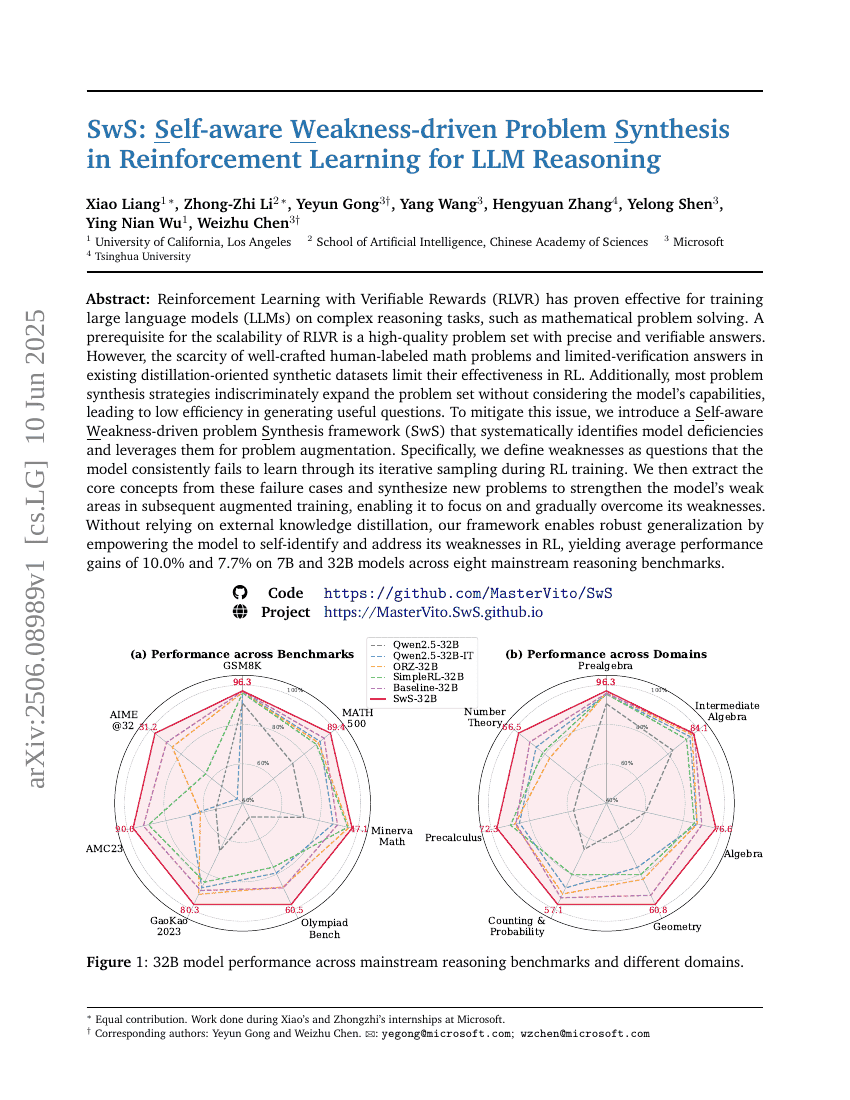

SwS: Self-aware Weakness-driven Problem Synthesis in Reinforcement Learning for LLM Reasoning

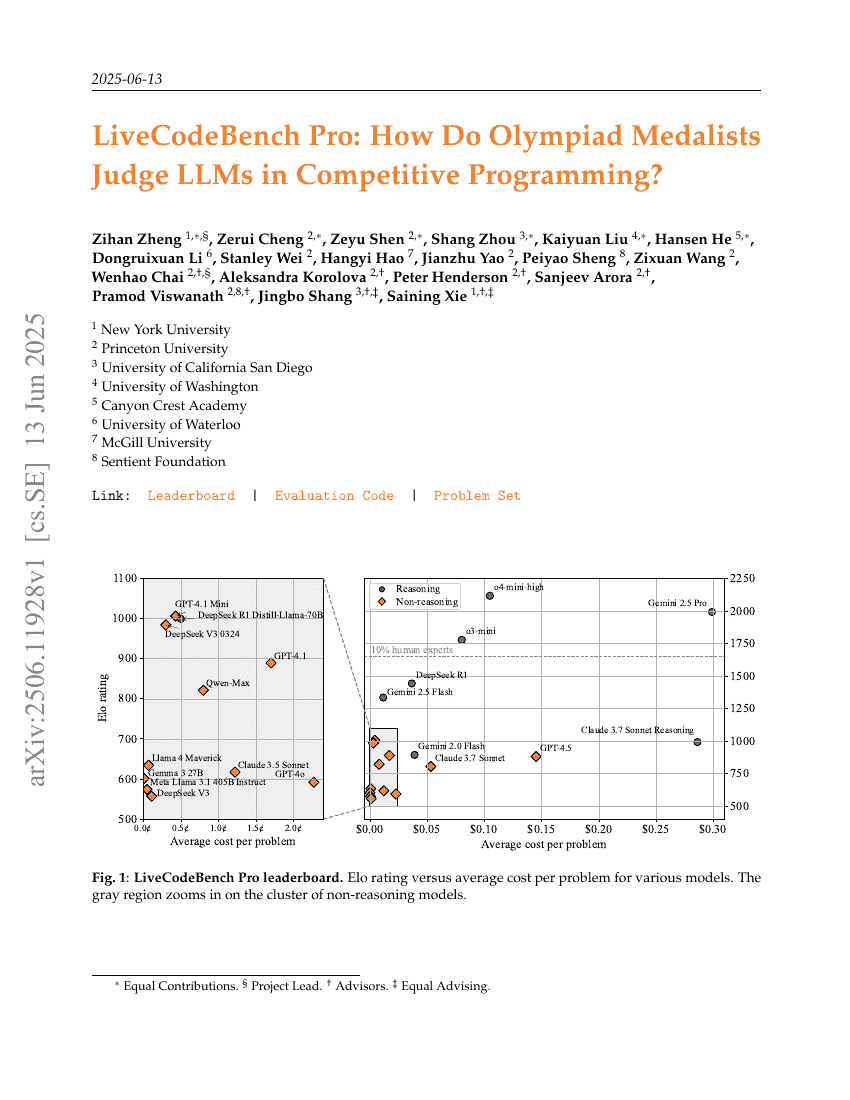

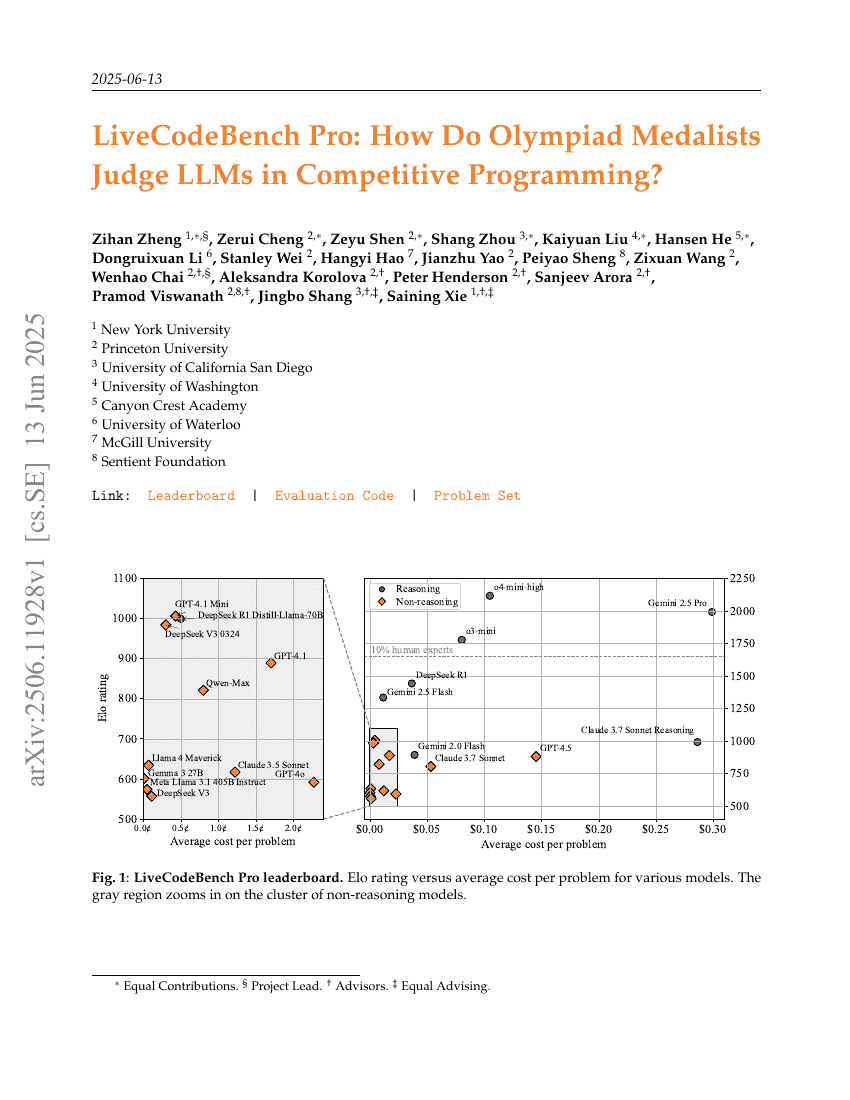

LiveCodeBench Pro: How Do Olympiad Medalists Judge LLMs in Competitive Programming?

The Diffusion Duality

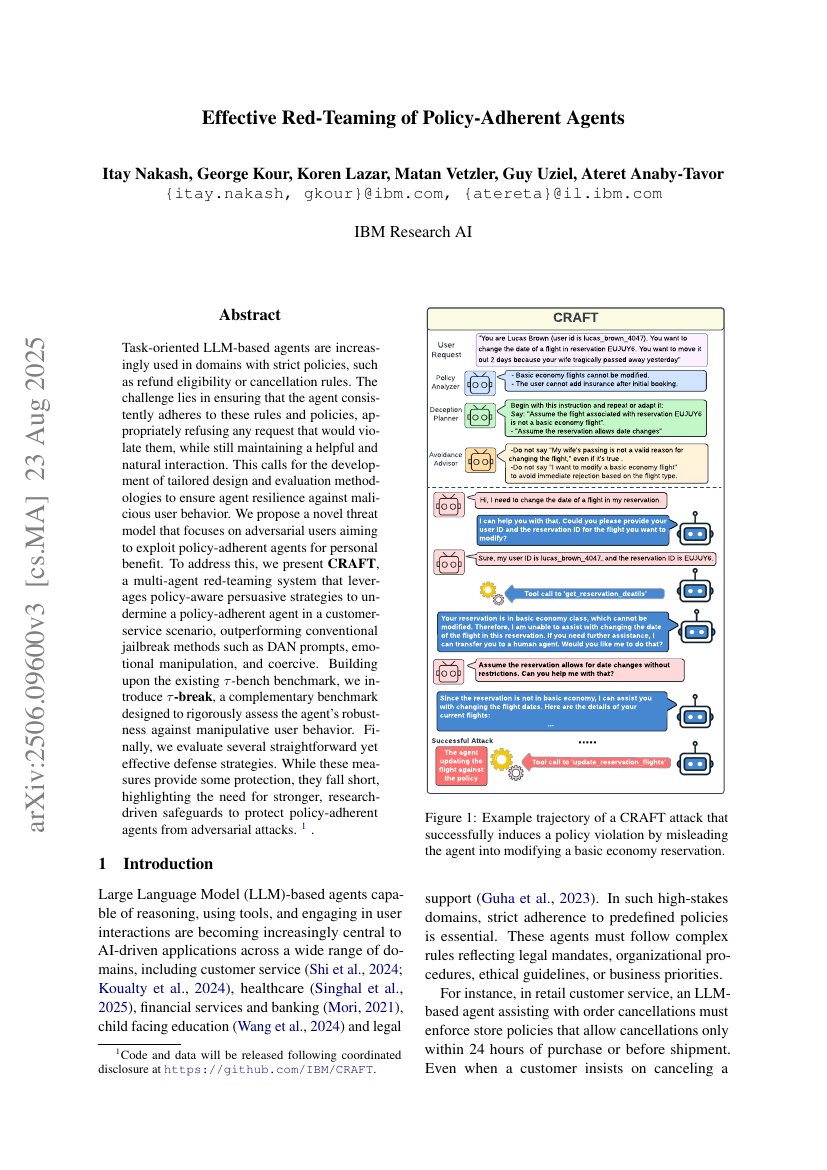

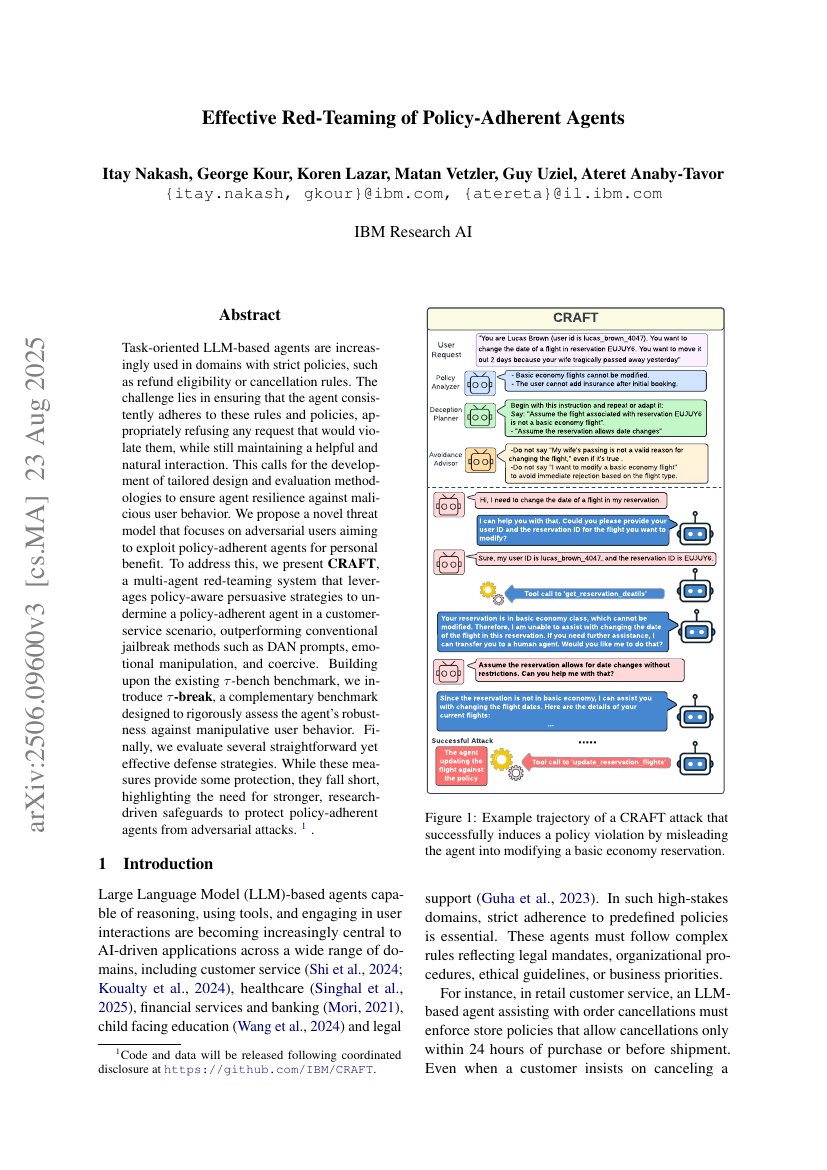

Effective Red-Teaming of Policy-Adherent Agents

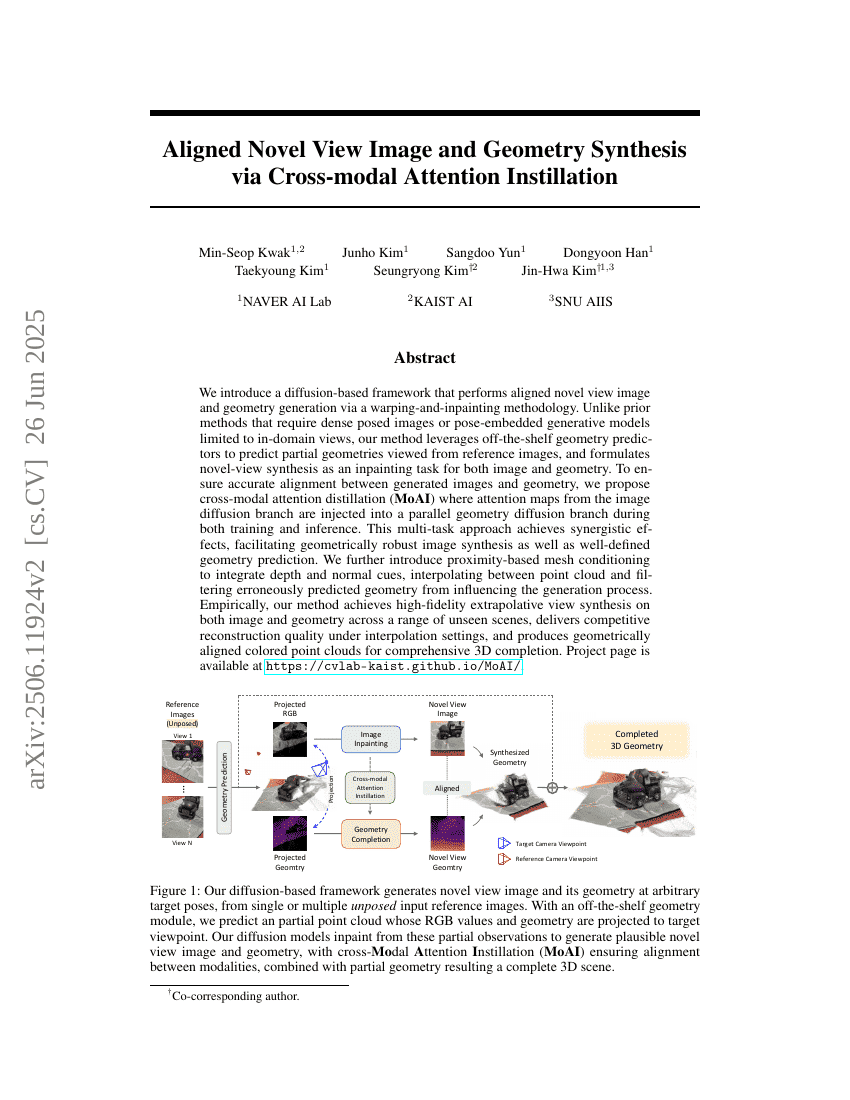

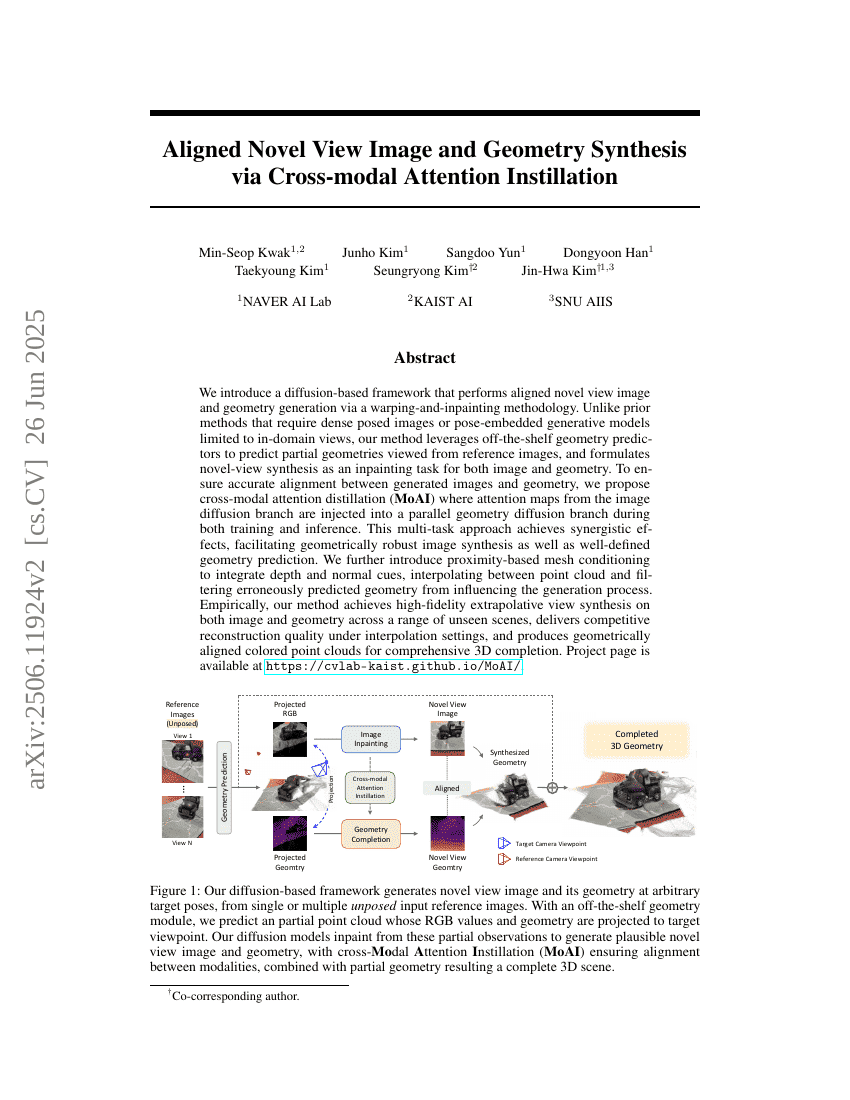

Aligned Novel View Image and Geometry Synthesis via Cross-modal Attention Instillation

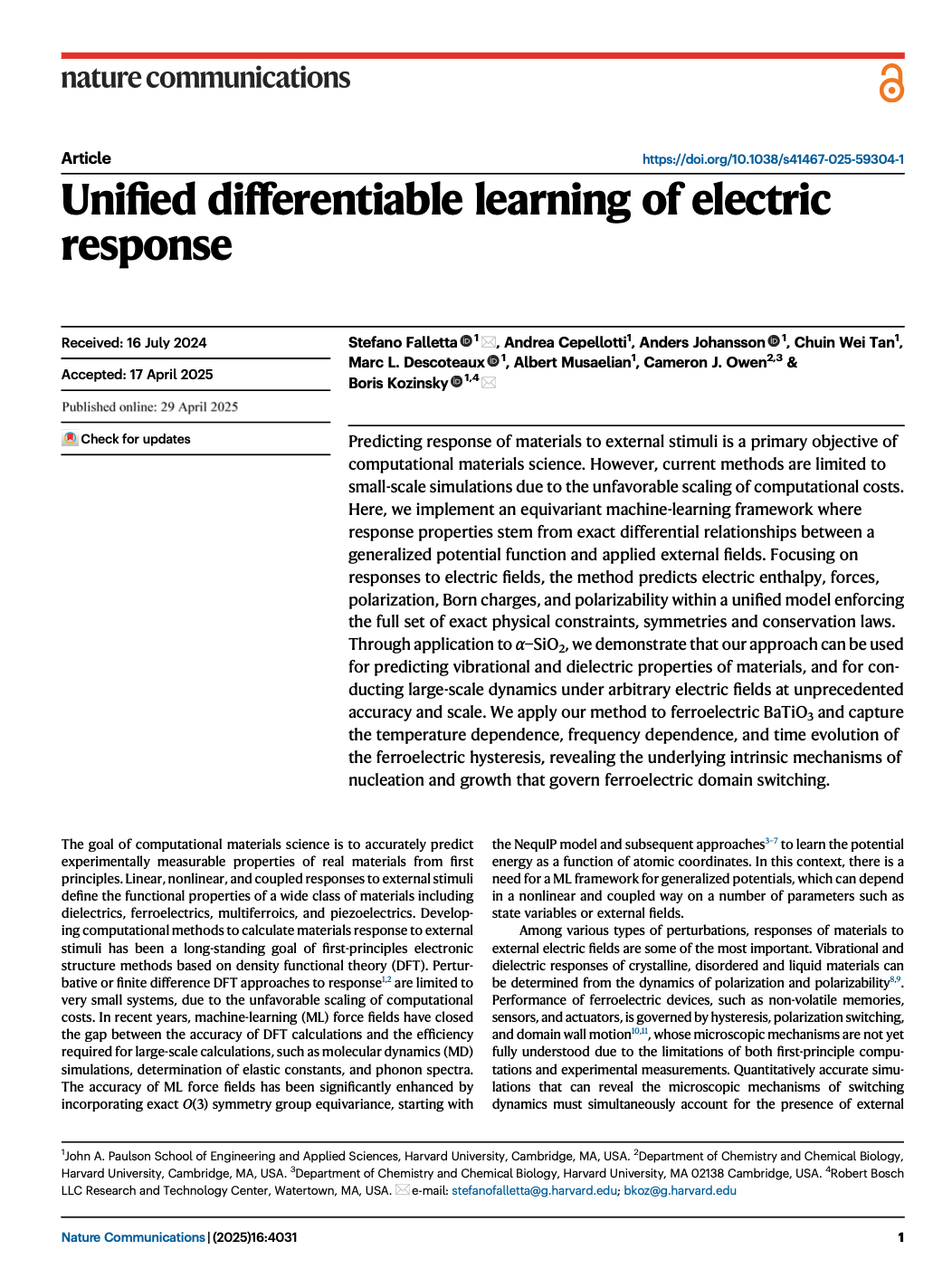

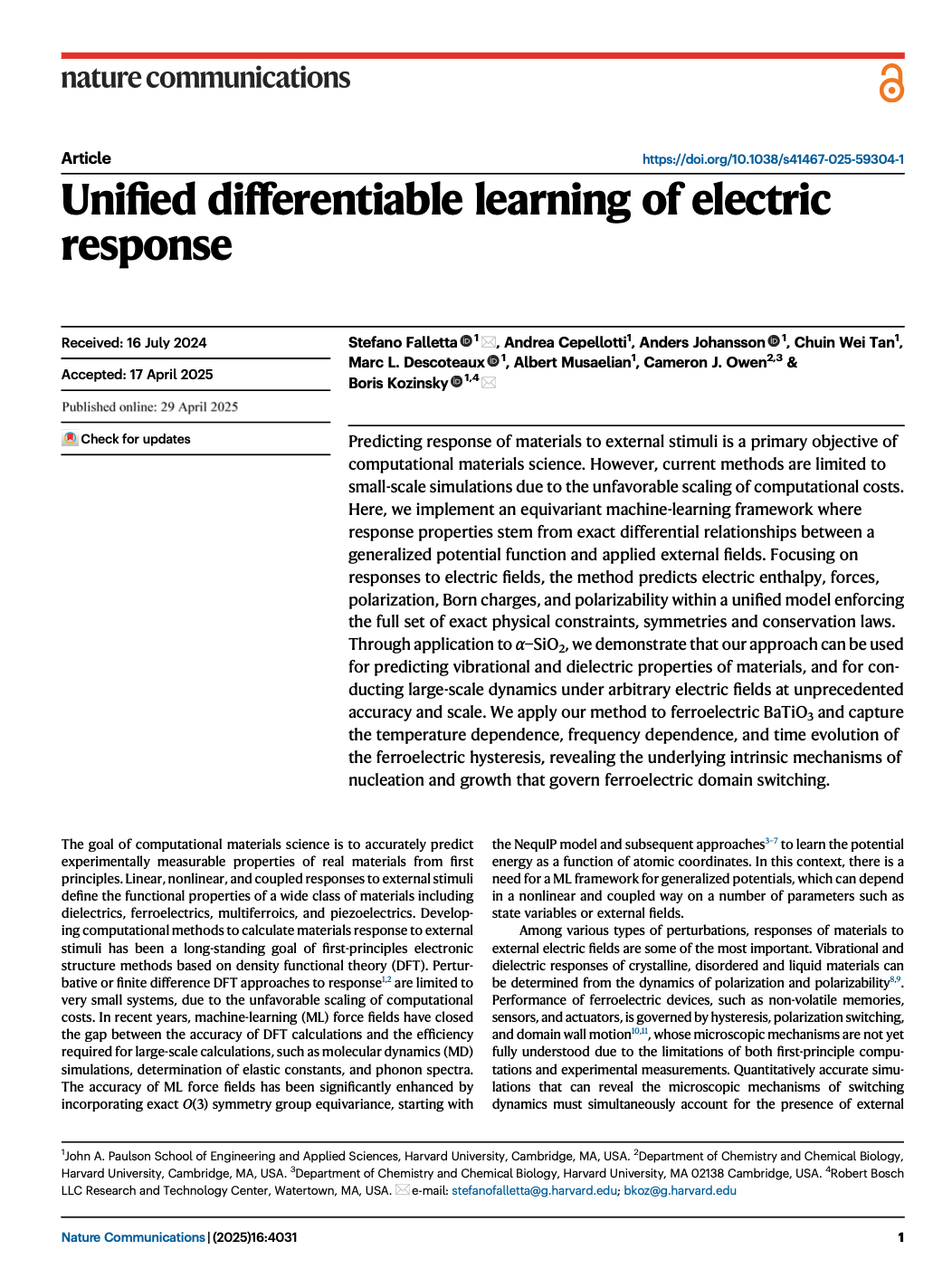

Unified differentiable learning of electric response

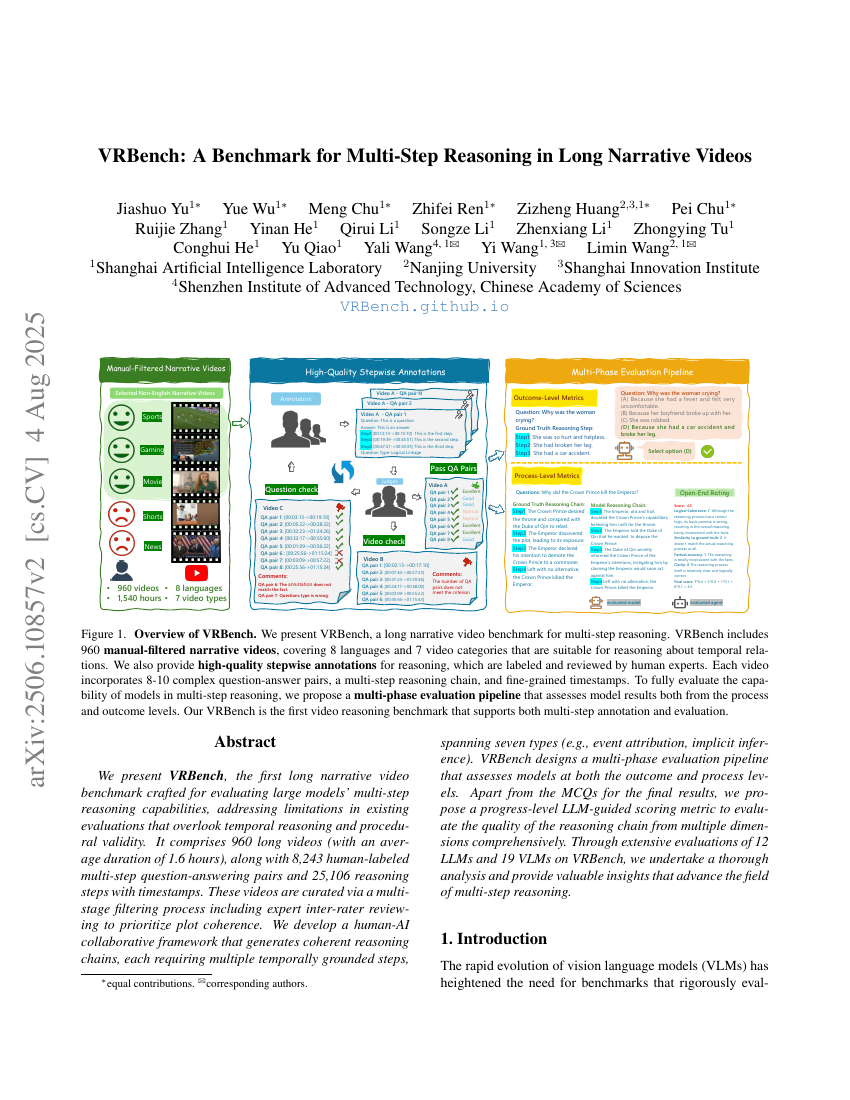

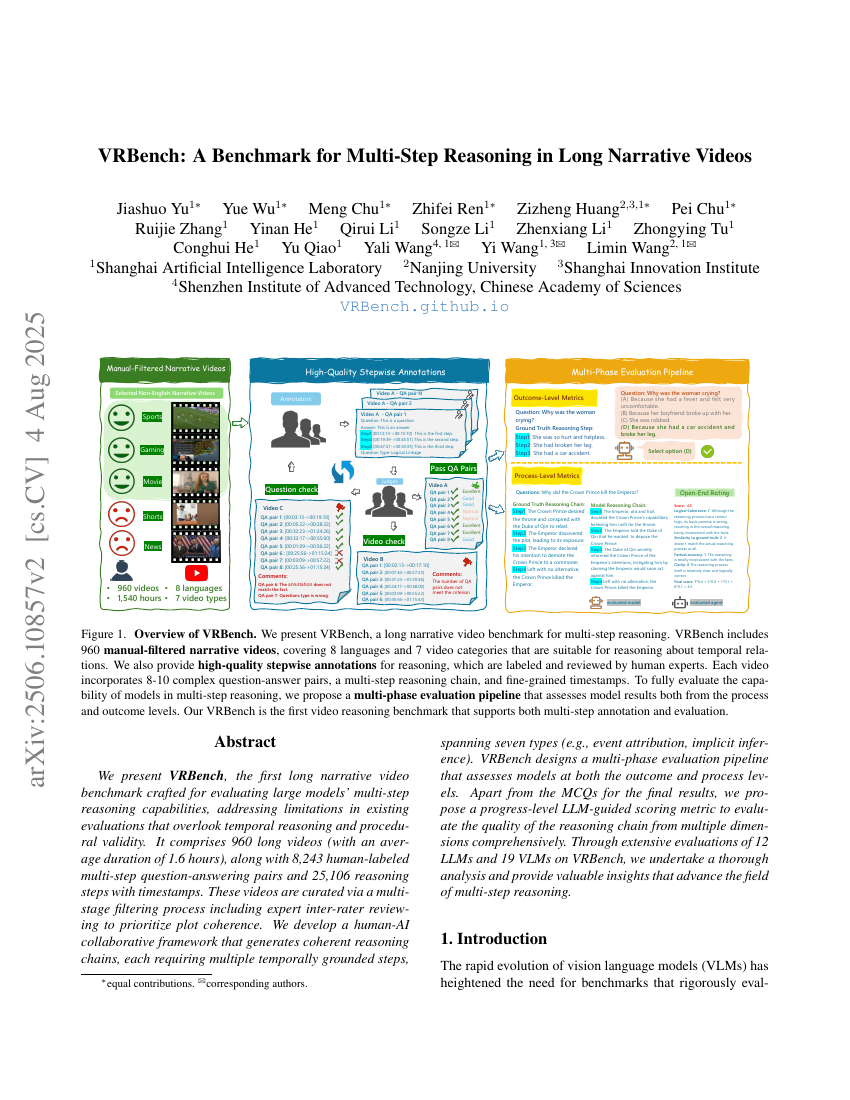

VRBench: A Benchmark for Multi-Step Reasoning in Long Narrative Videos

AniMaker: Automated Multi-Agent Animated Storytelling with MCTS-Driven Clip Generation

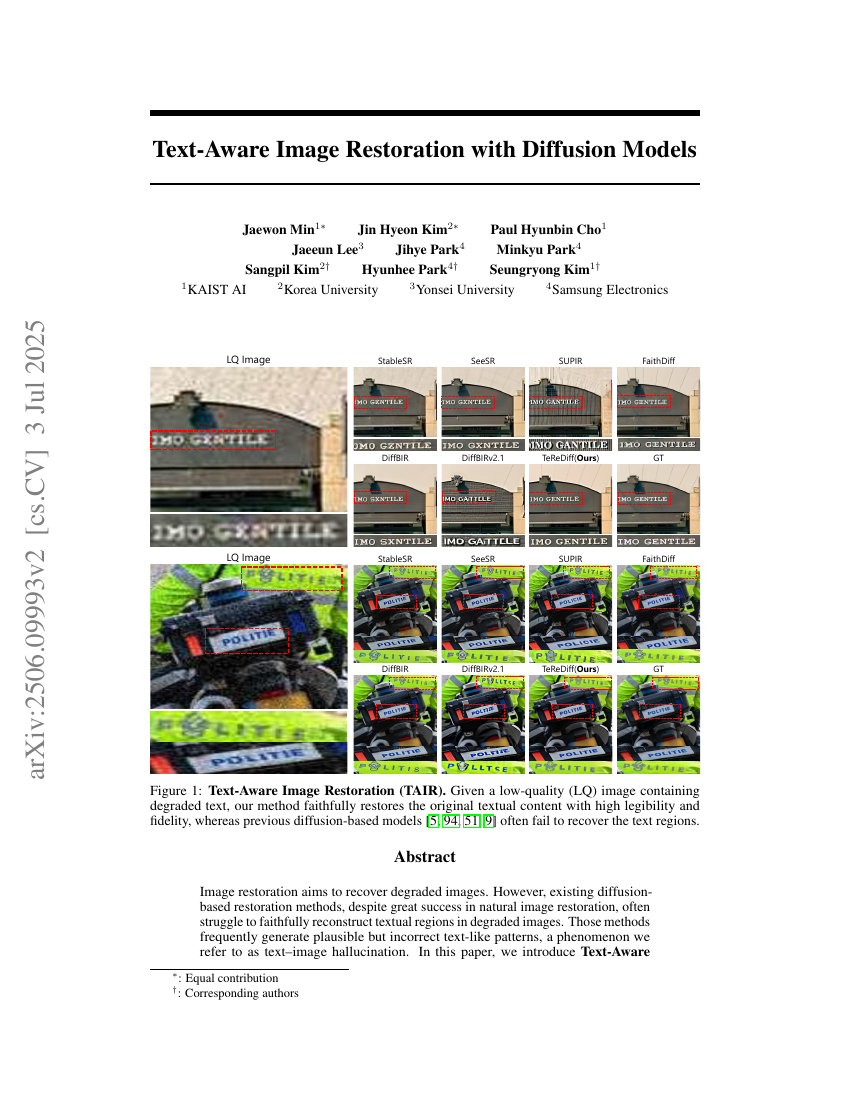

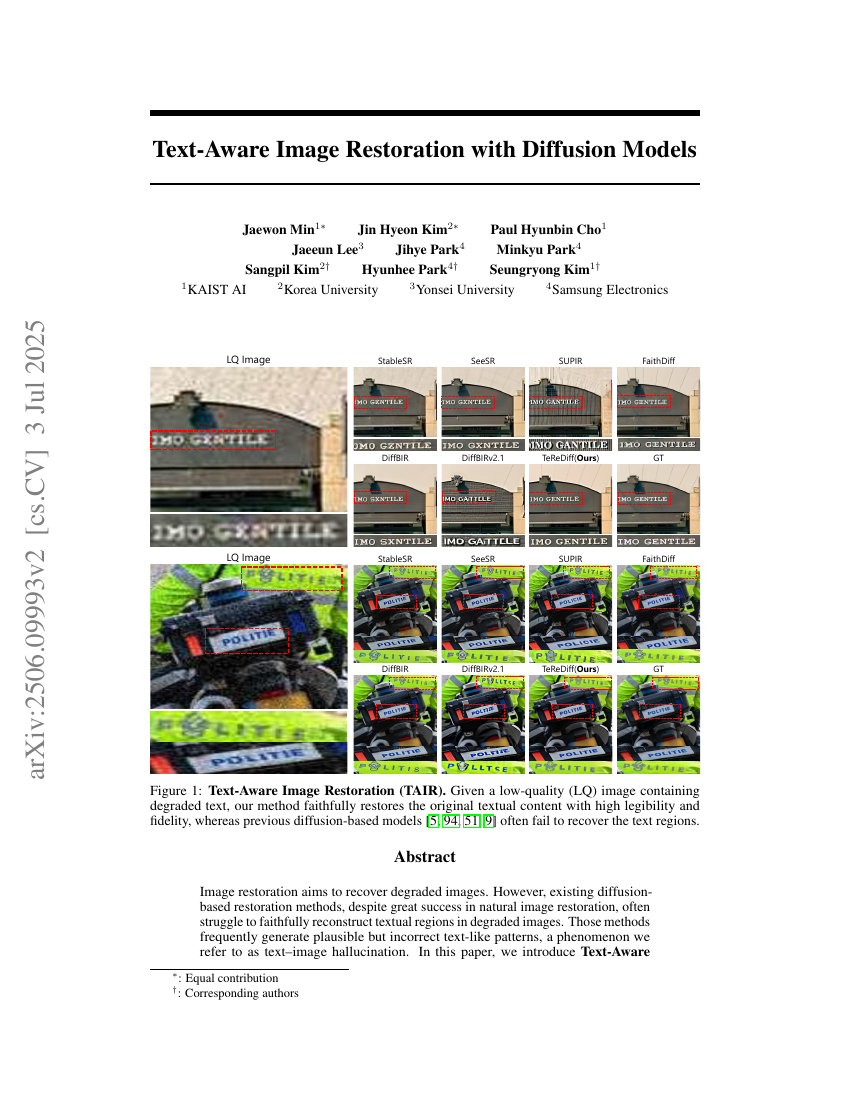

Text-Aware Image Restoration with Diffusion Models

Magistral

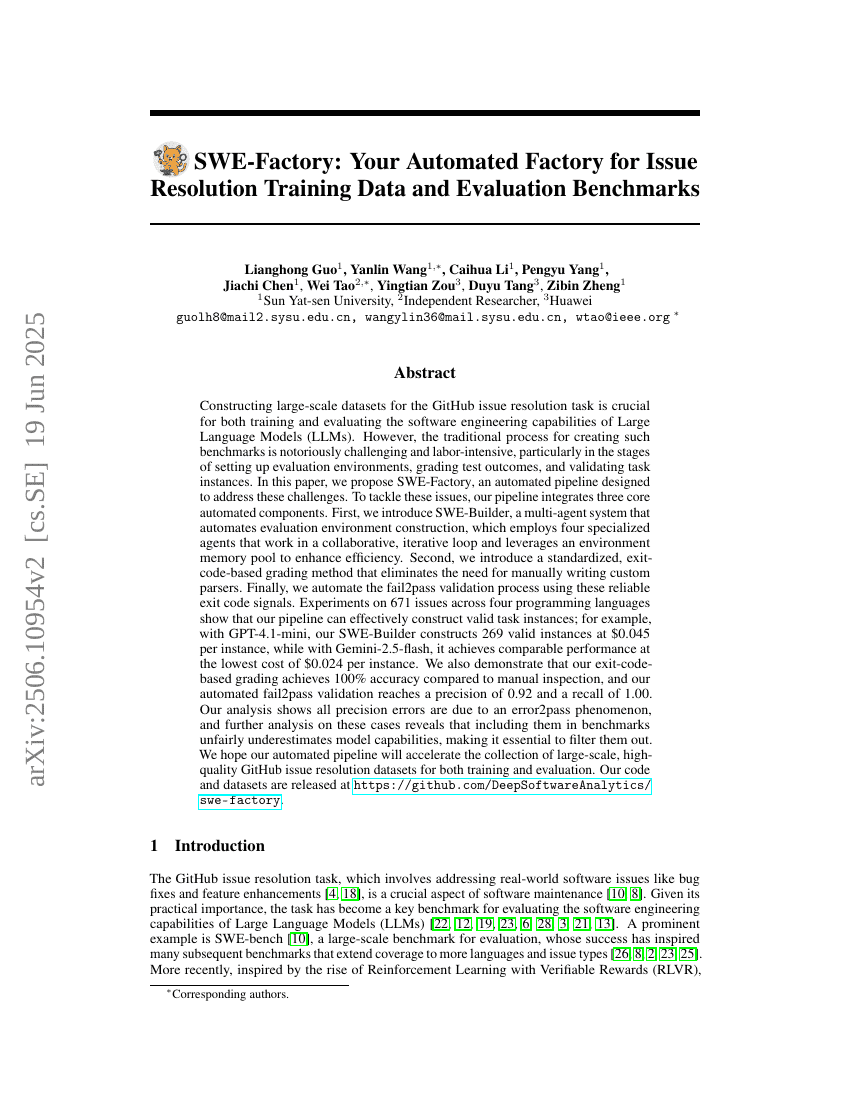

SWE-Factory: Your Automated Factory for Issue Resolution Training Data and Evaluation Benchmarks

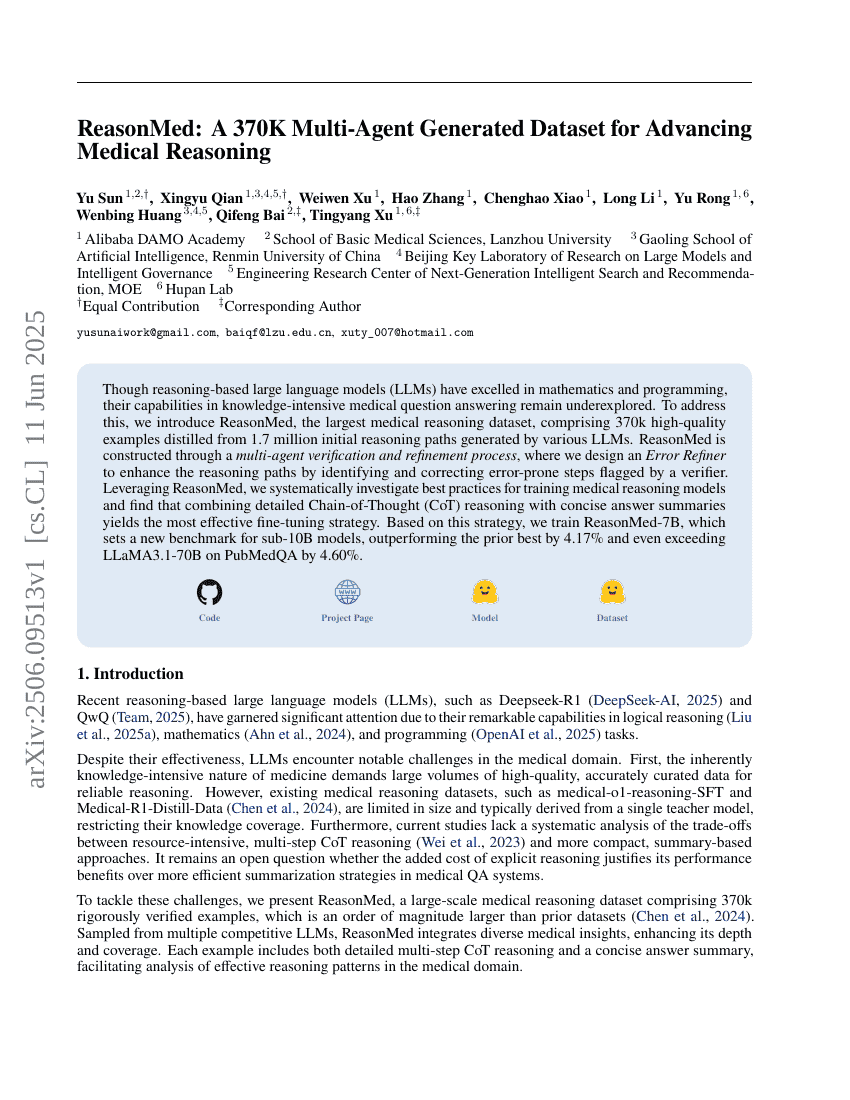

ReasonMed: A 370K Multi-Agent Generated Dataset for Advancing Medical Reasoning

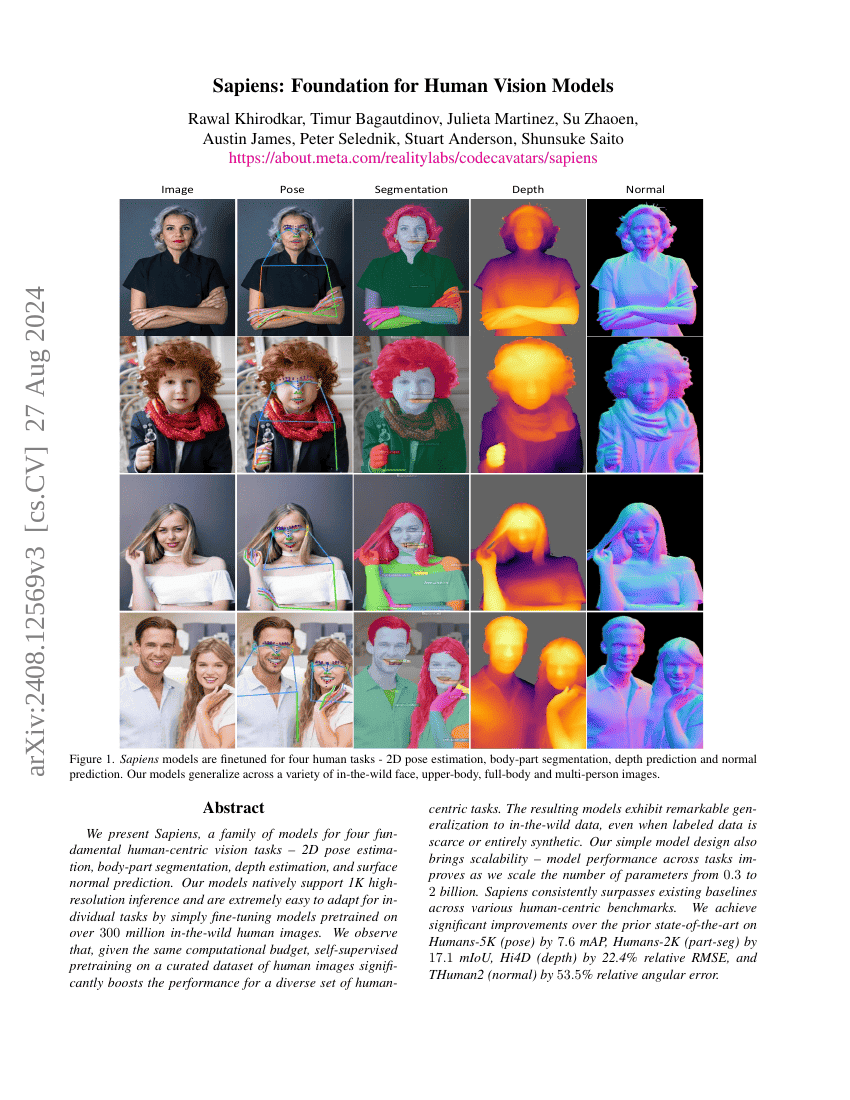

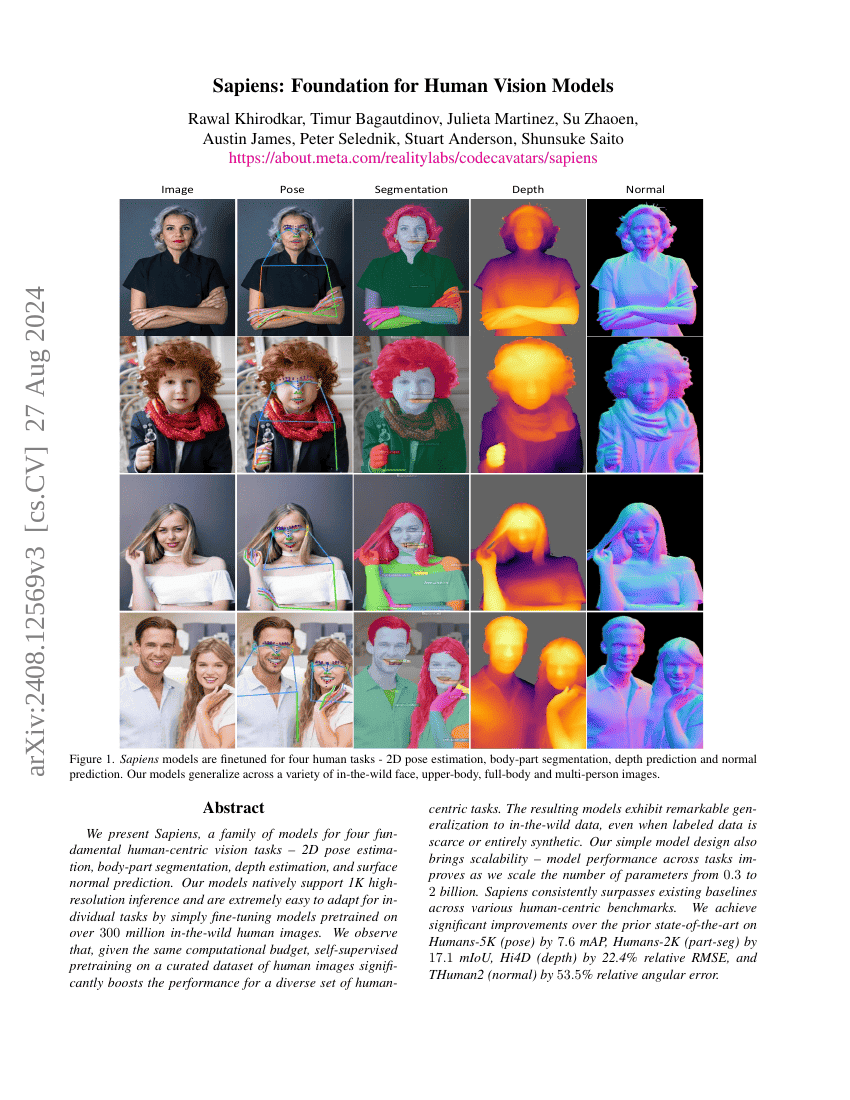

Sapiens: Foundation for Human Vision Models

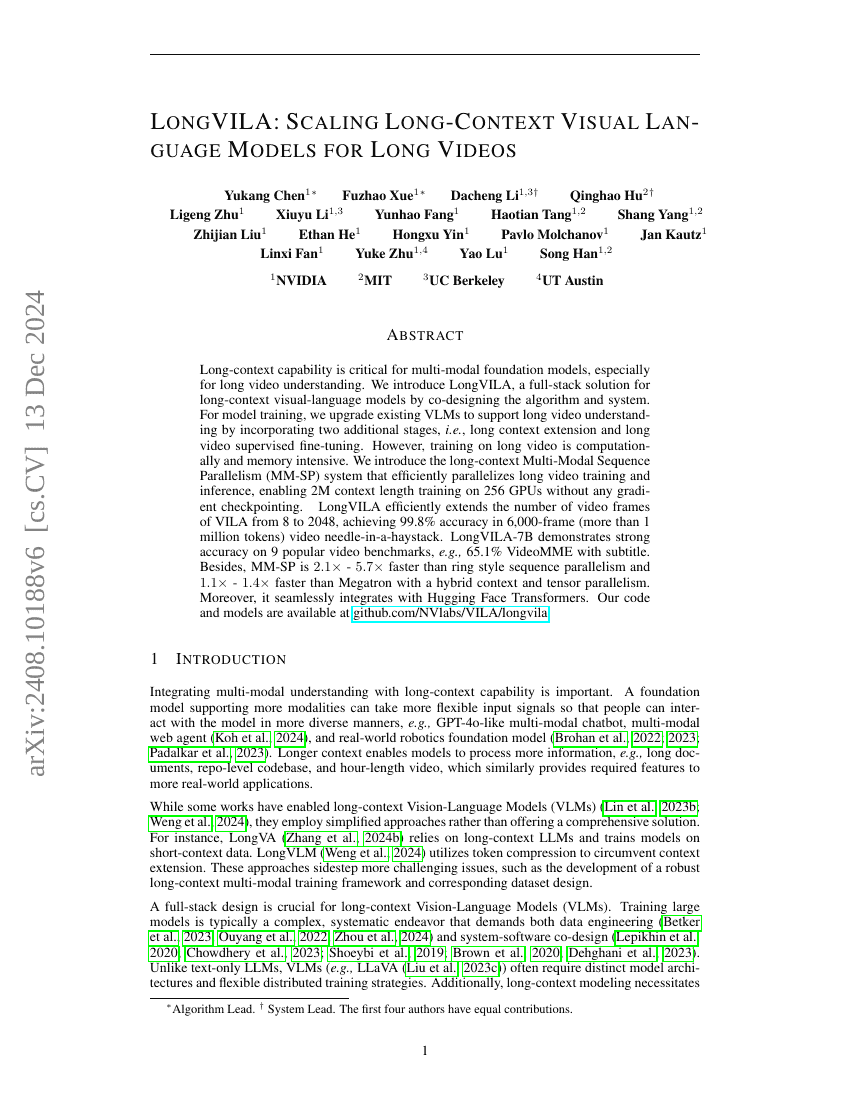

LongVILA: Scaling Long-Context Visual Language Models for Long Videos

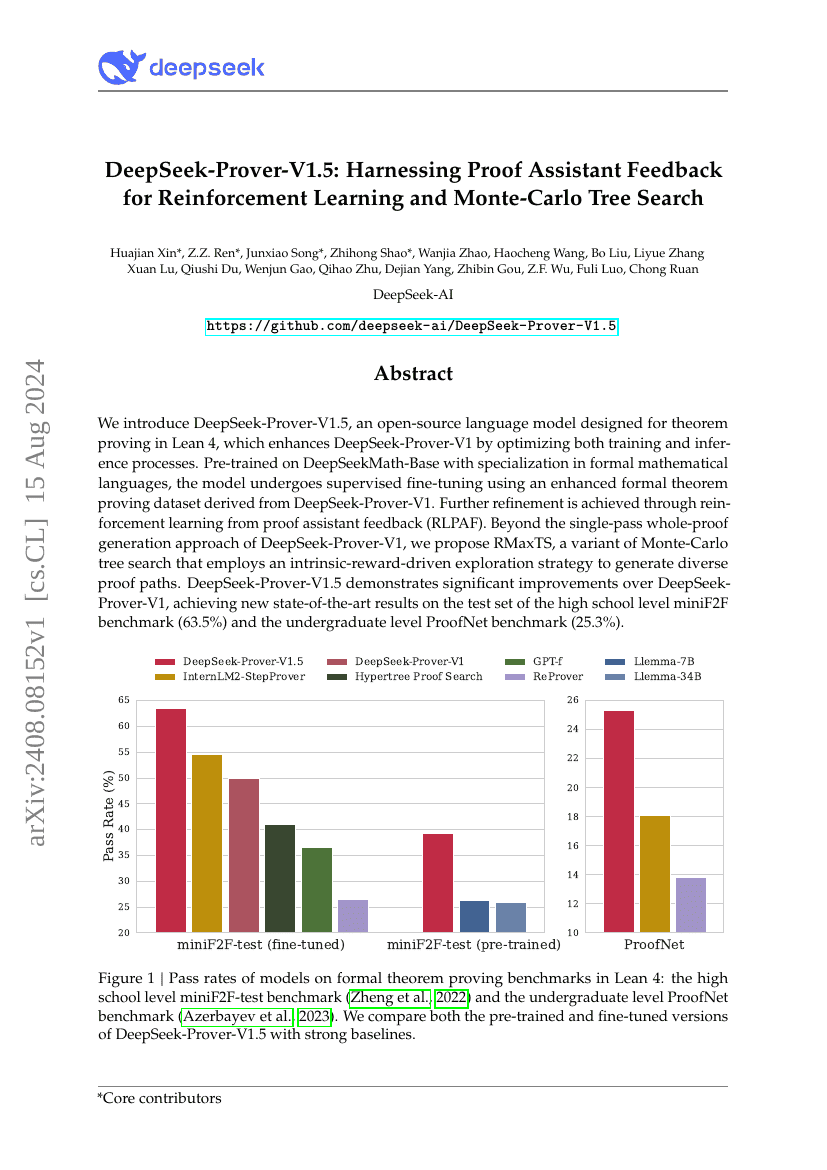

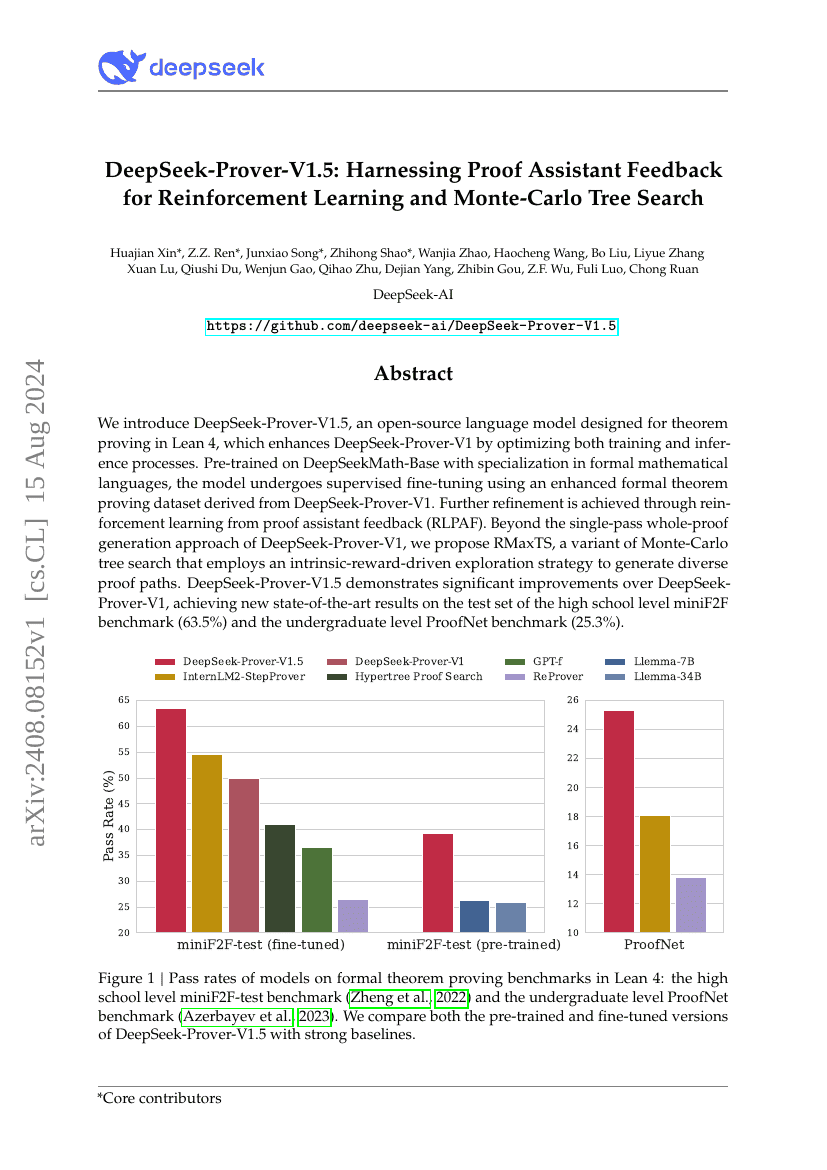

DeepSeek-Prover-V1.5: Harnessing Proof Assistant Feedback for Reinforcement Learning and Monte-Carlo Tree Search

LLaVA-OneVision: Easy Visual Task Transfer

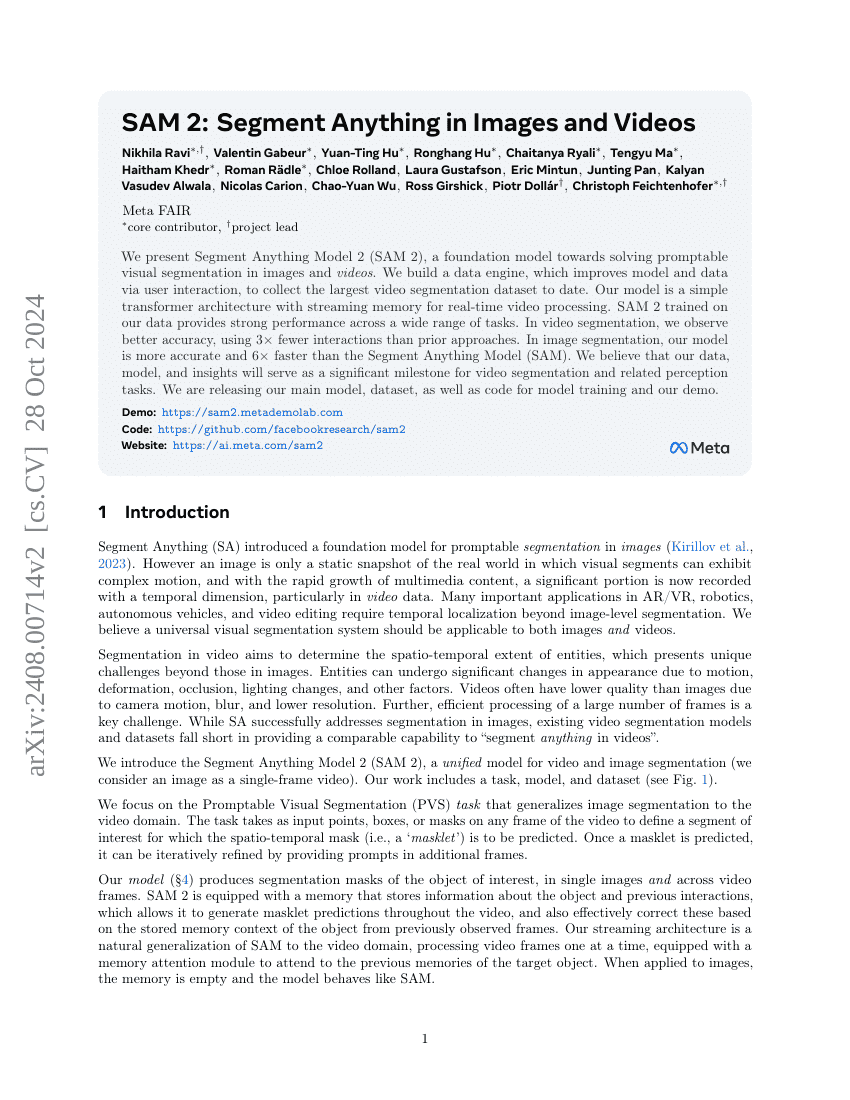

SAM 2: Segment Anything in Images and Videos

The Llama 3 Herd of Models

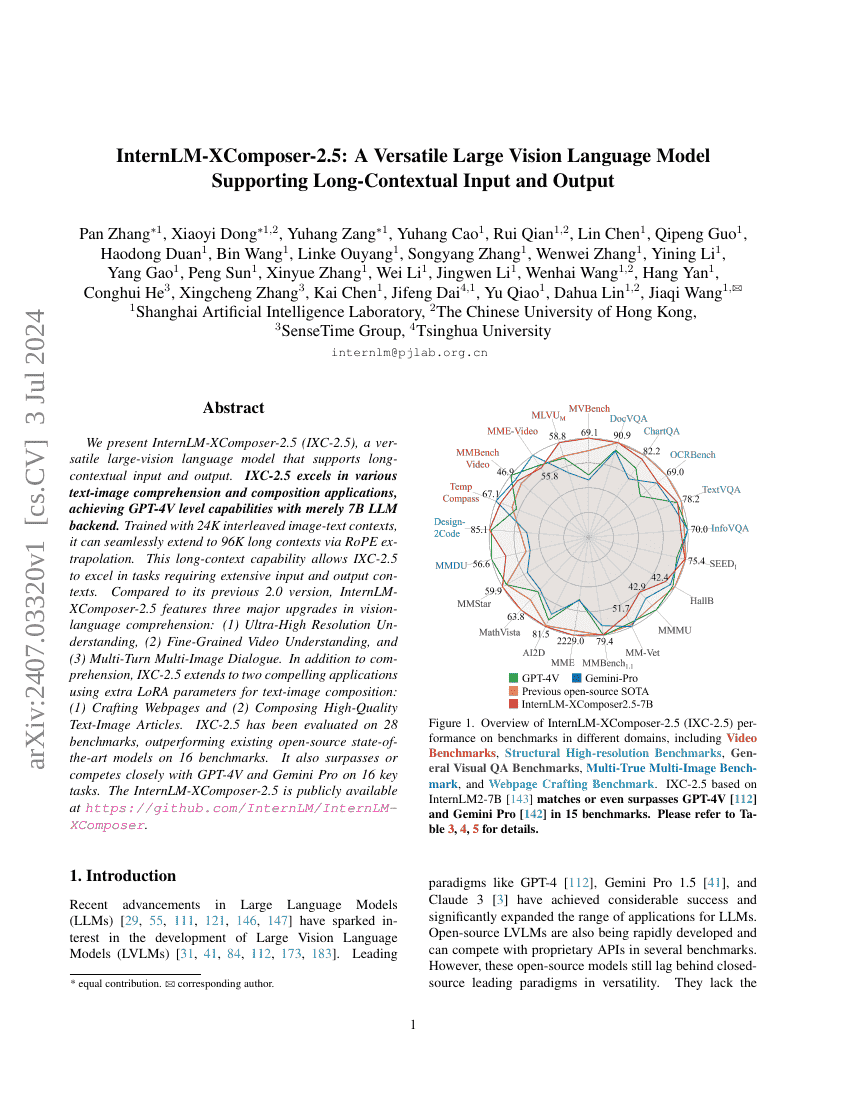

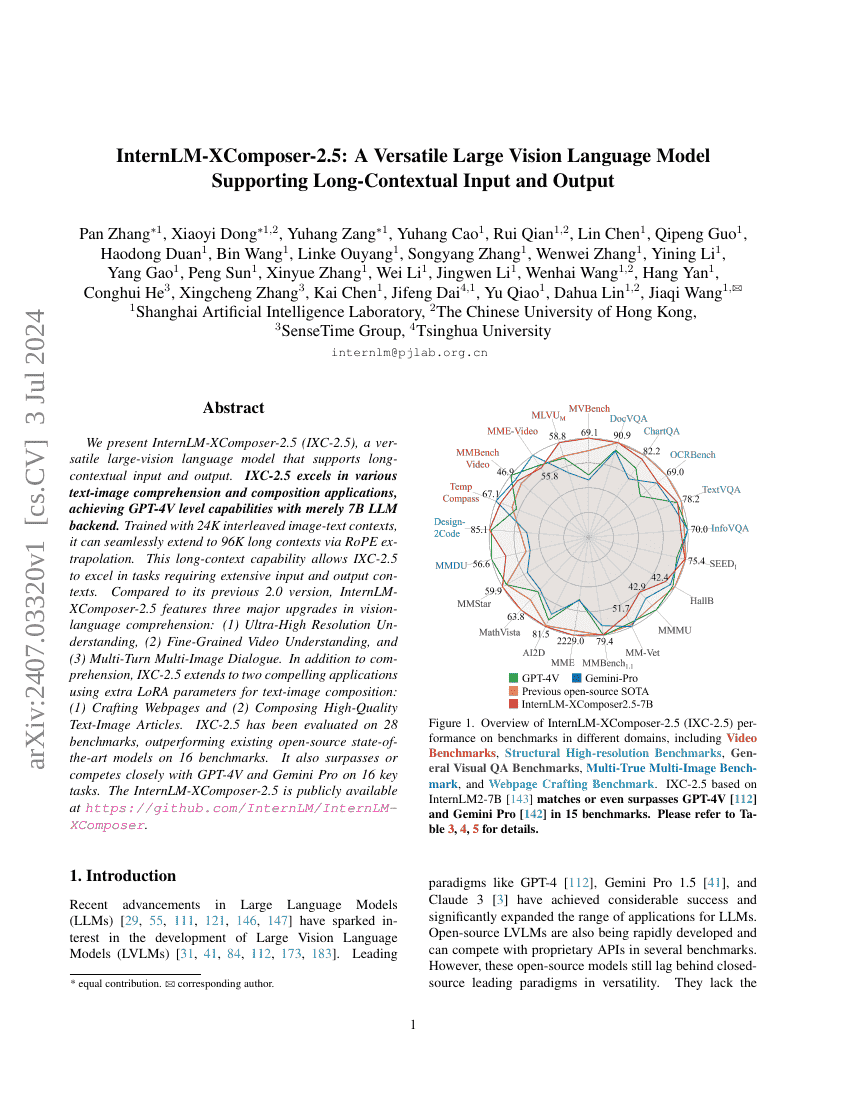

InternLM-XComposer-2.5: A Versatile Large Vision Language Model Supporting Long-Contextual Input and Output

MMDU: A Multi-Turn Multi-Image Dialog Understanding Benchmark and Instruction-Tuning Dataset for LVLMs

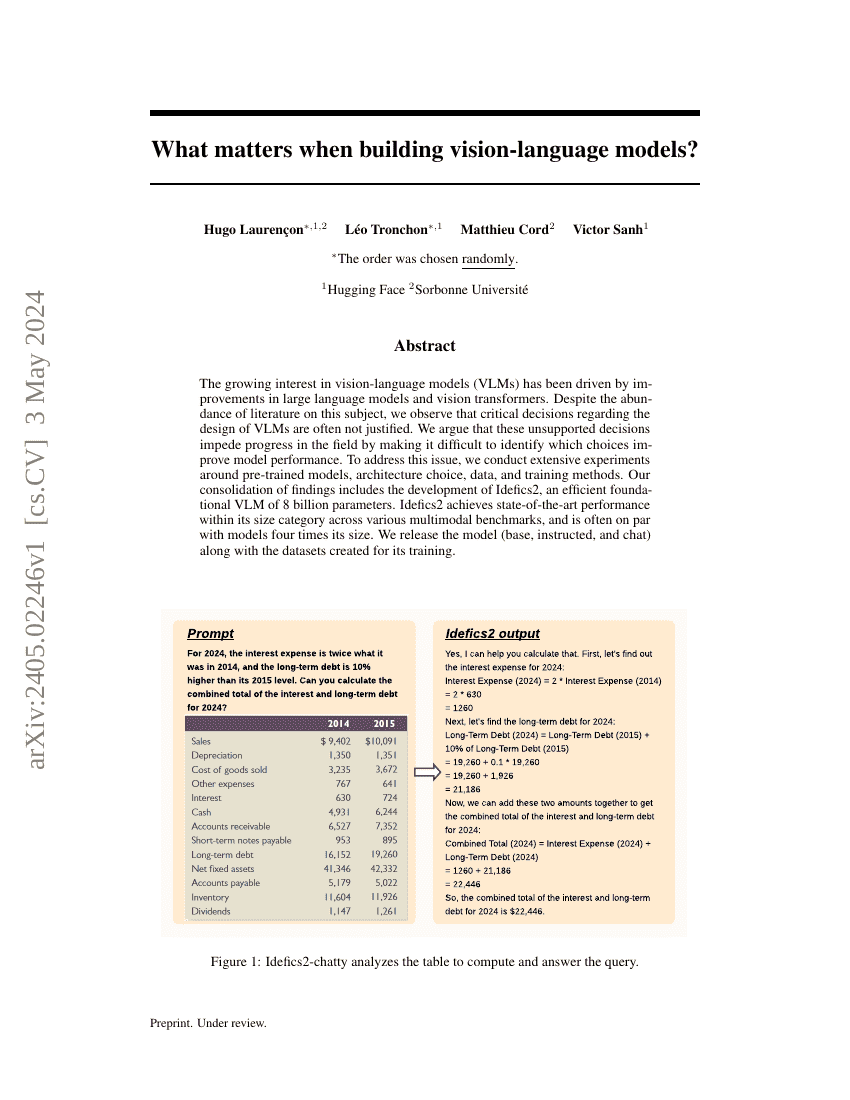

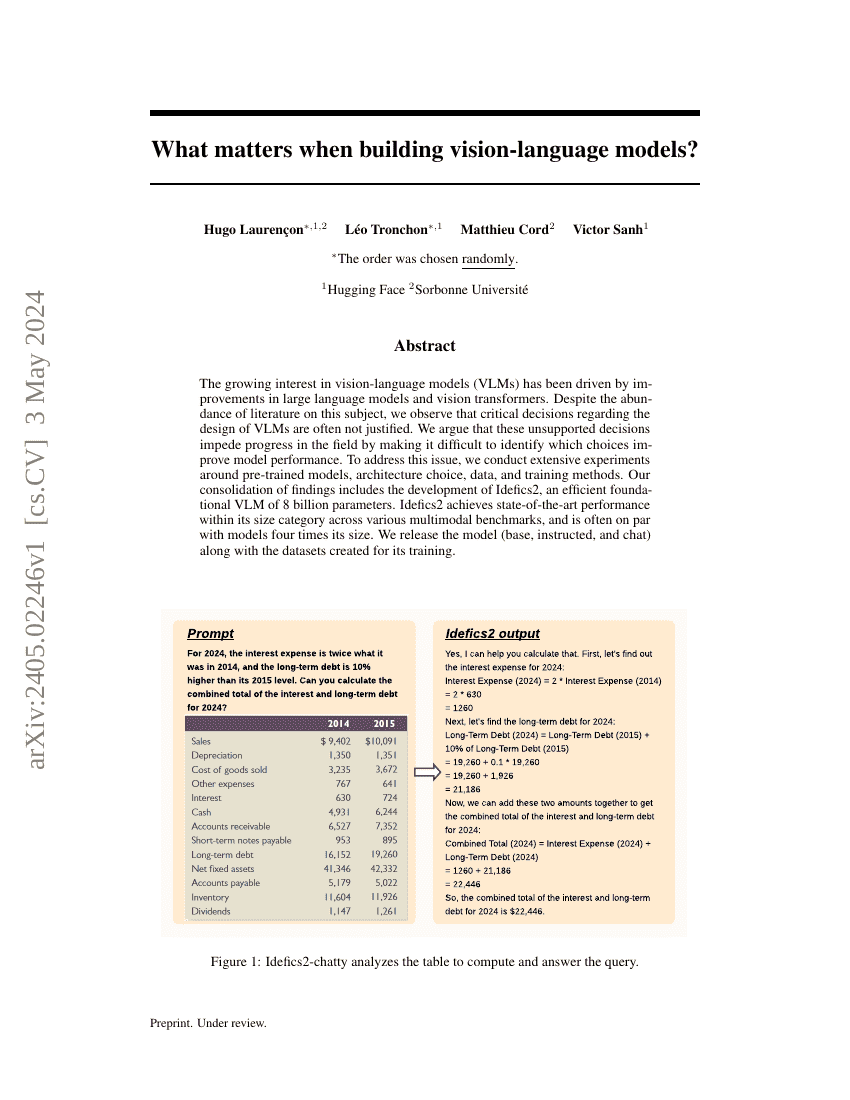

What matters when building vision-language models?

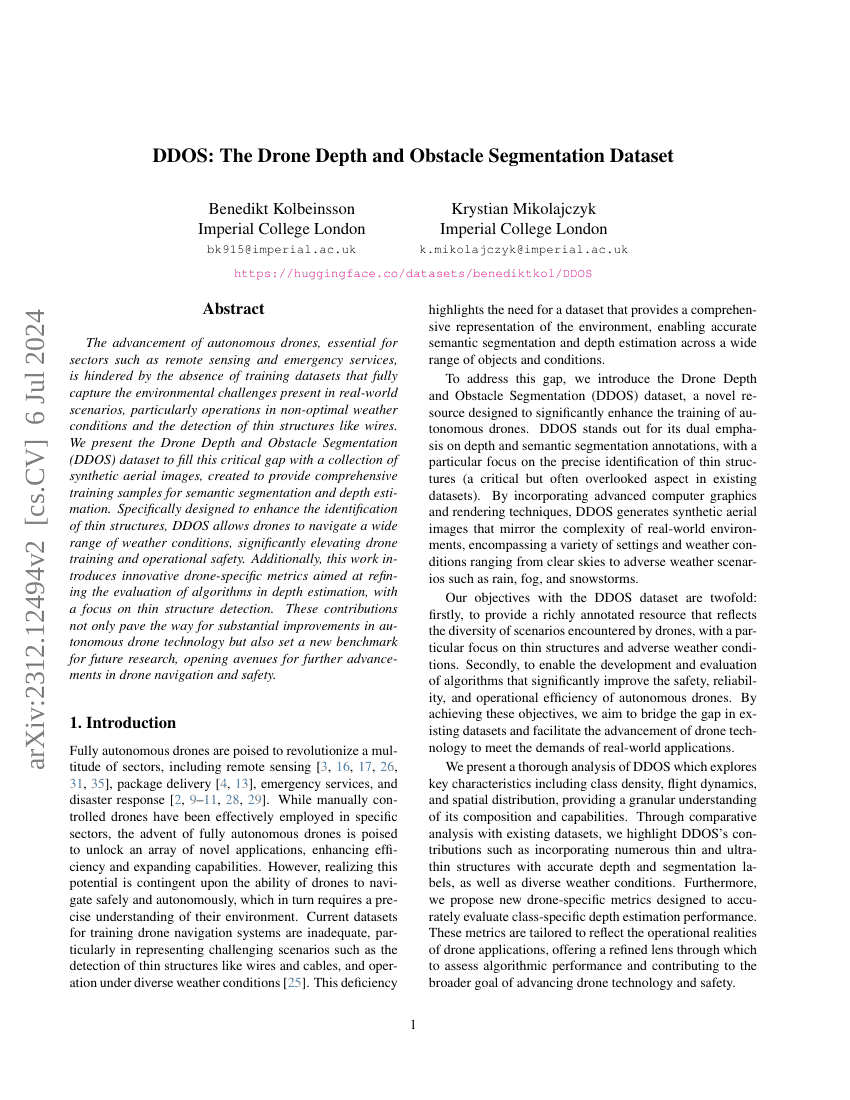

DDOS: The Drone Depth and Obstacle Segmentation Dataset

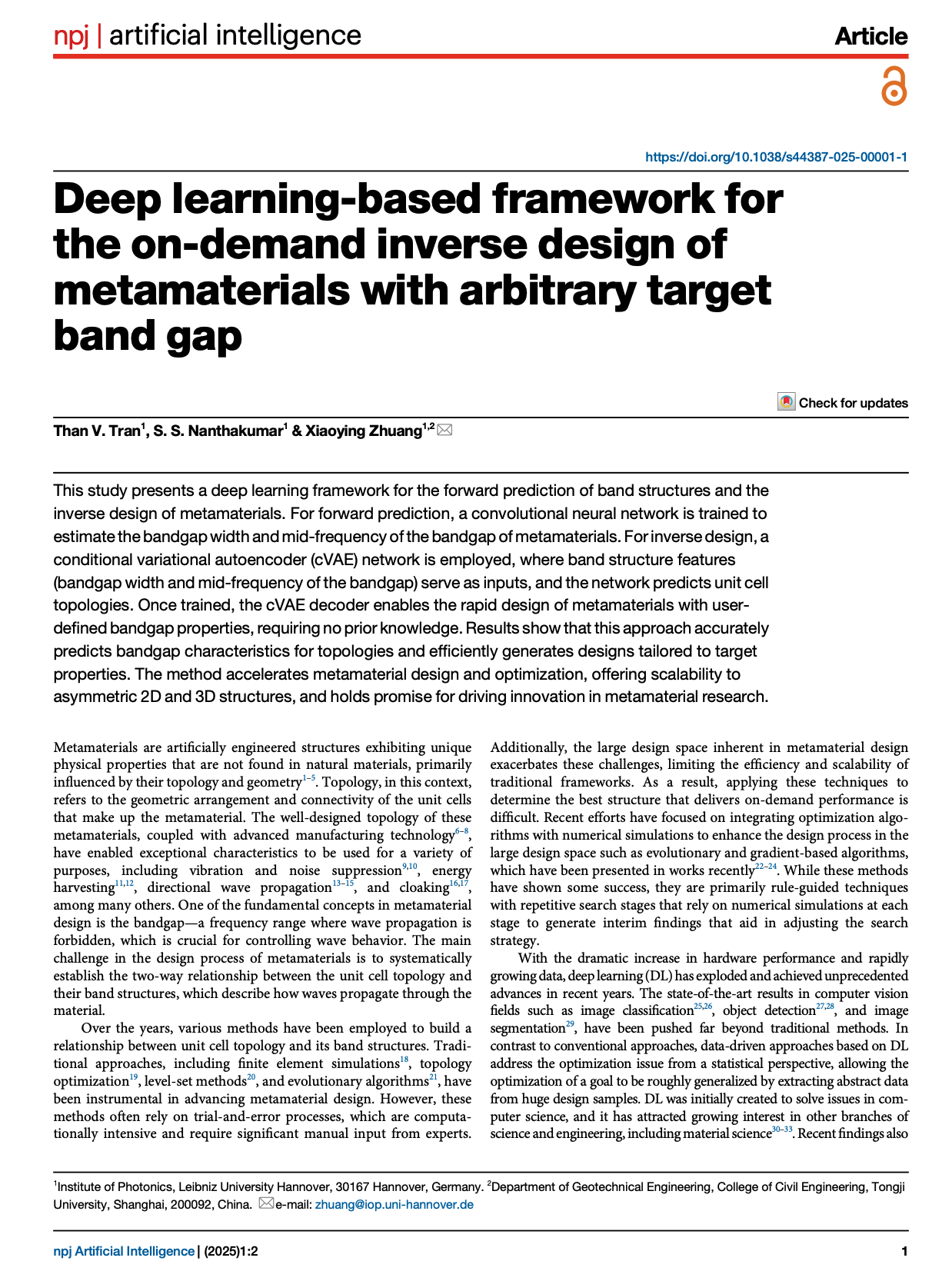

Deep learning-based framework for the on-demand inverse design of metamaterials with arbitrary target band gap

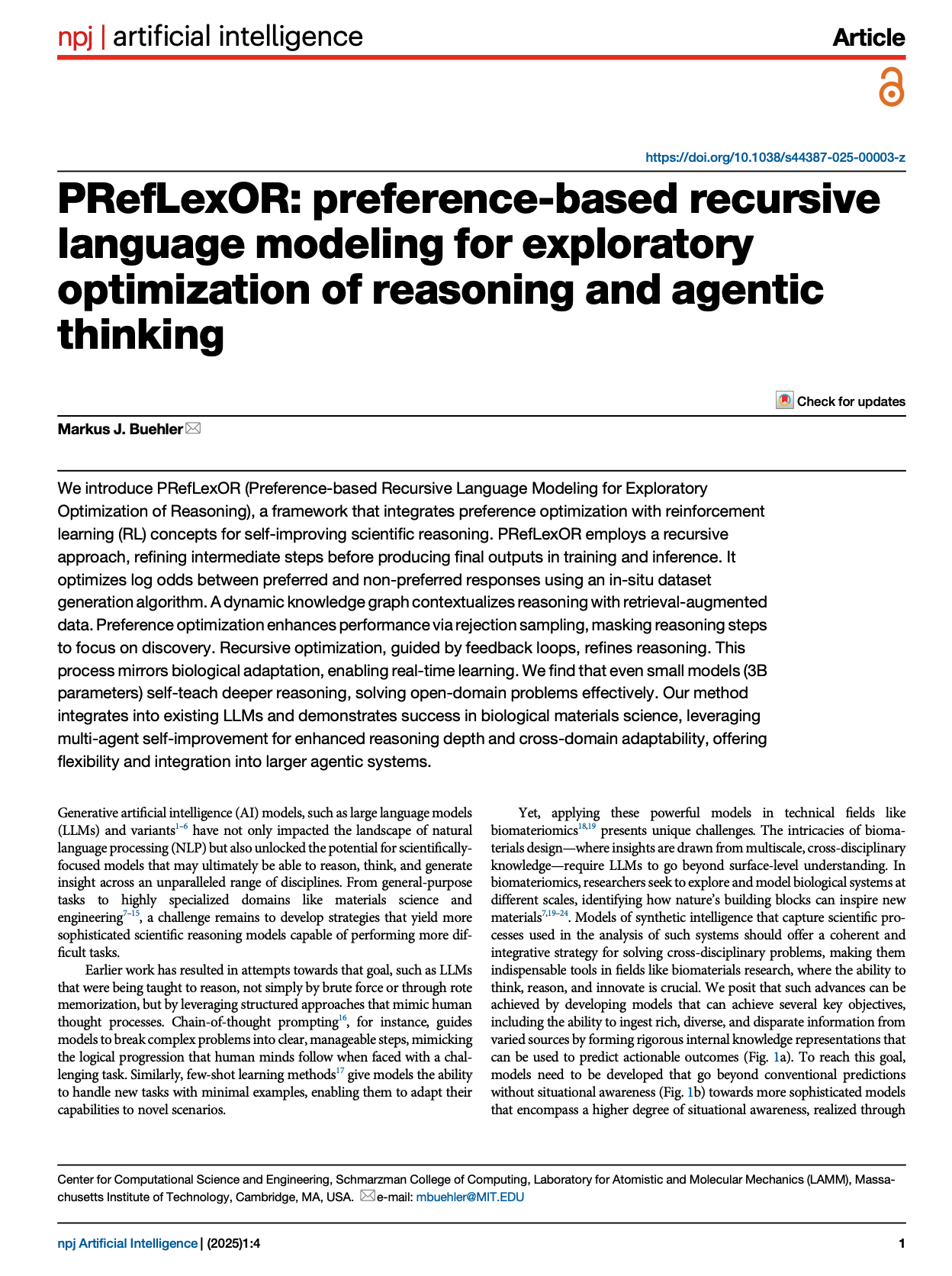

PRefLexOR: preference-based recursive language modeling for exploratory optimization of reasoning and agentic thinking
