Papers

Cutting-edge AI research papers, updated daily, to help you keep up with the latest AI trends

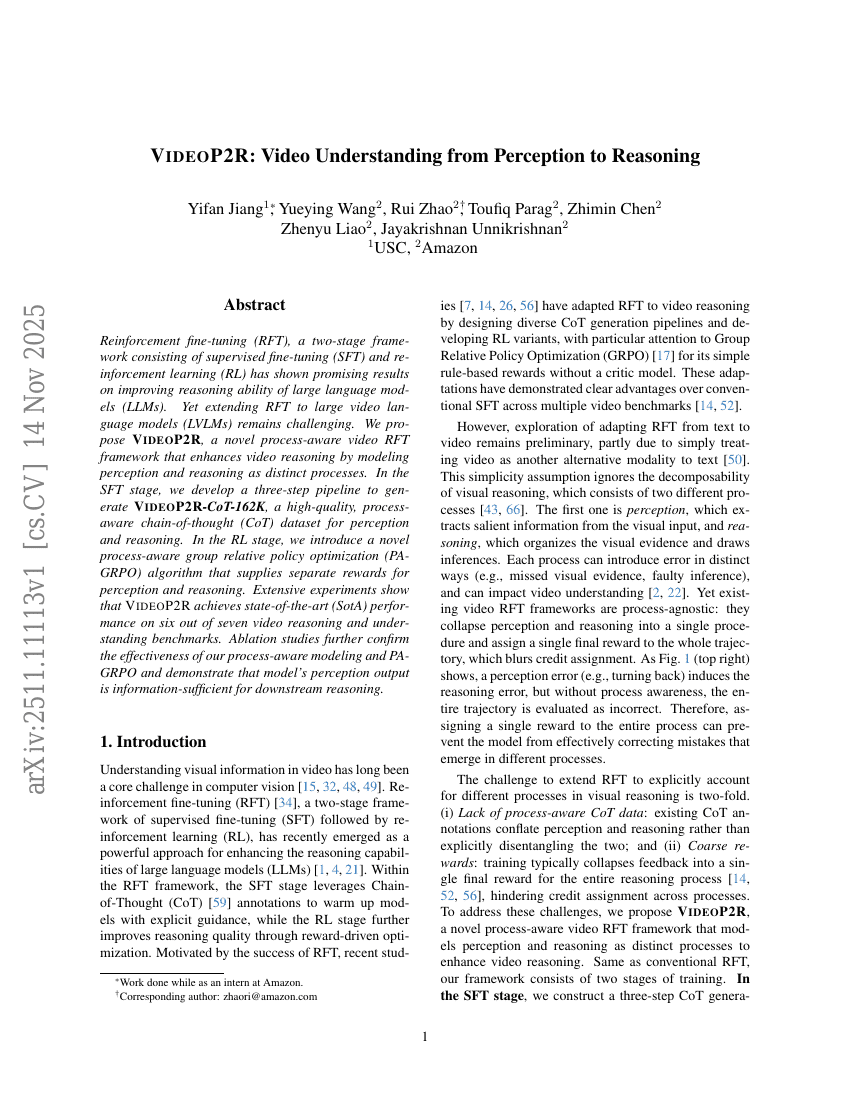

VIDEOP2R: Video Understanding from Perception to Reasoning

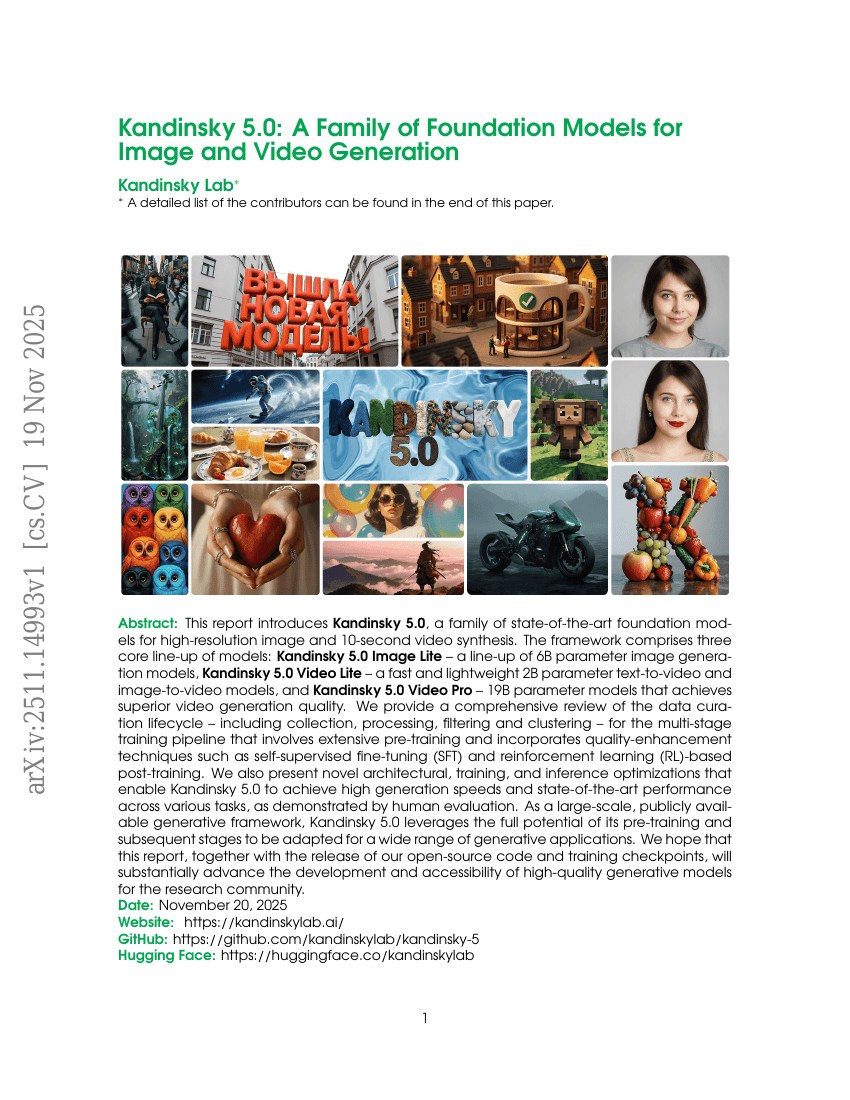

Kandinsky 5.0: A Family of Foundation Models for Image and Video Generation

JAM-2: Fully computational design of drug-like antibodies with high success rates

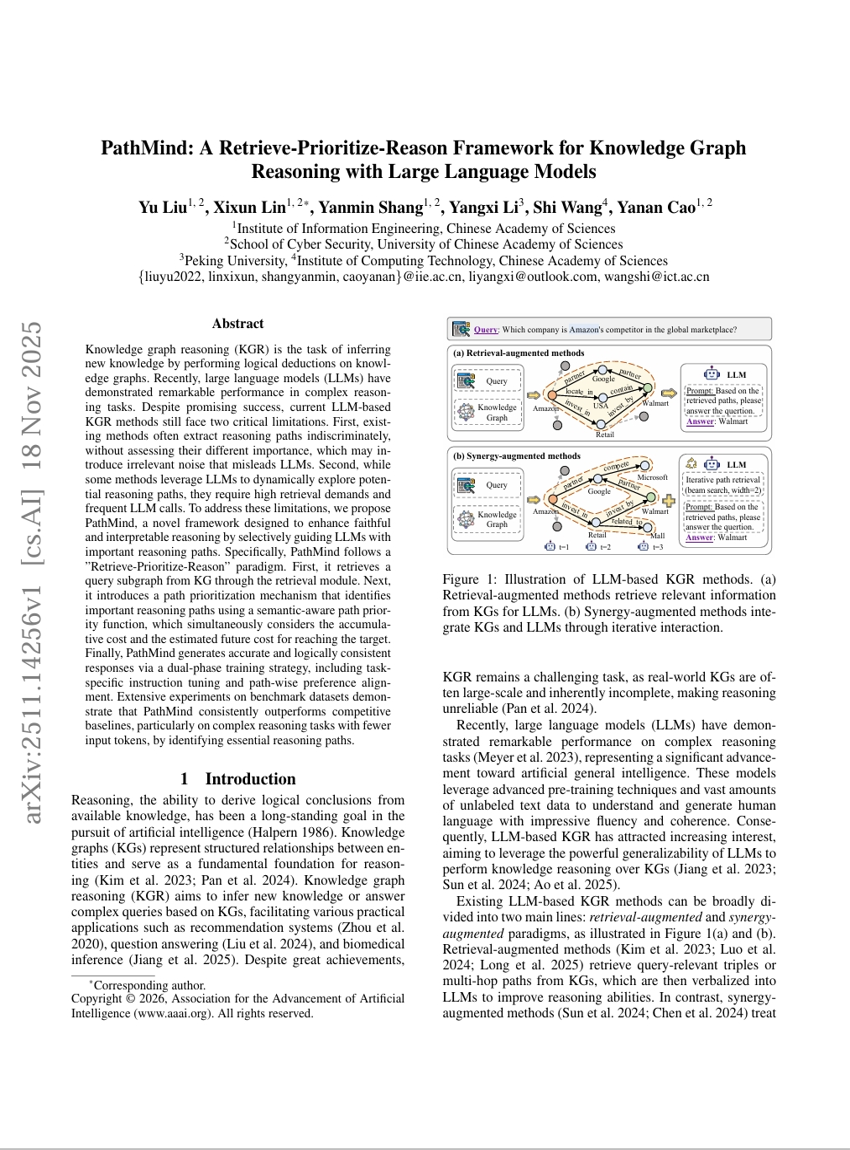

PathMind: A Retrieve-Prioritize-Reason Framework for Knowledge Graph Reasoning with Large Language Models

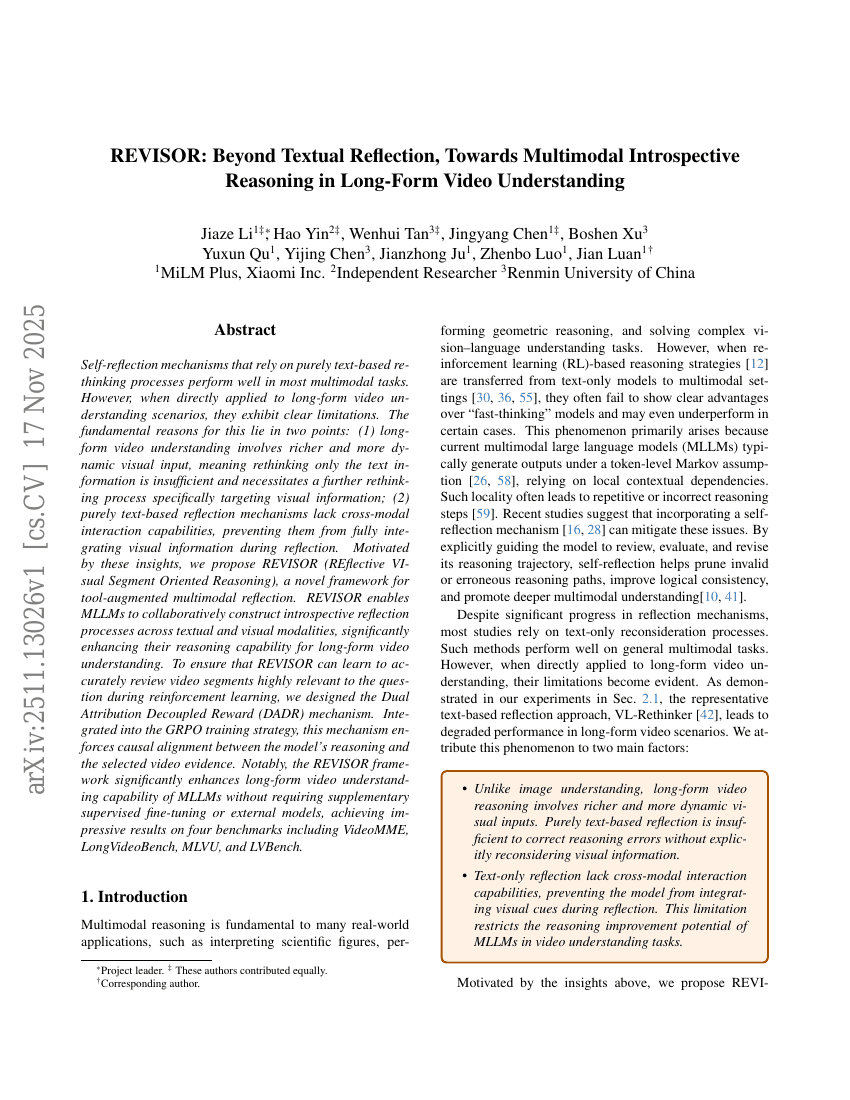

REVISOR: Beyond Textual Reflection, Towards Multimodal Introspective Reasoning in Long-Form Video Understanding

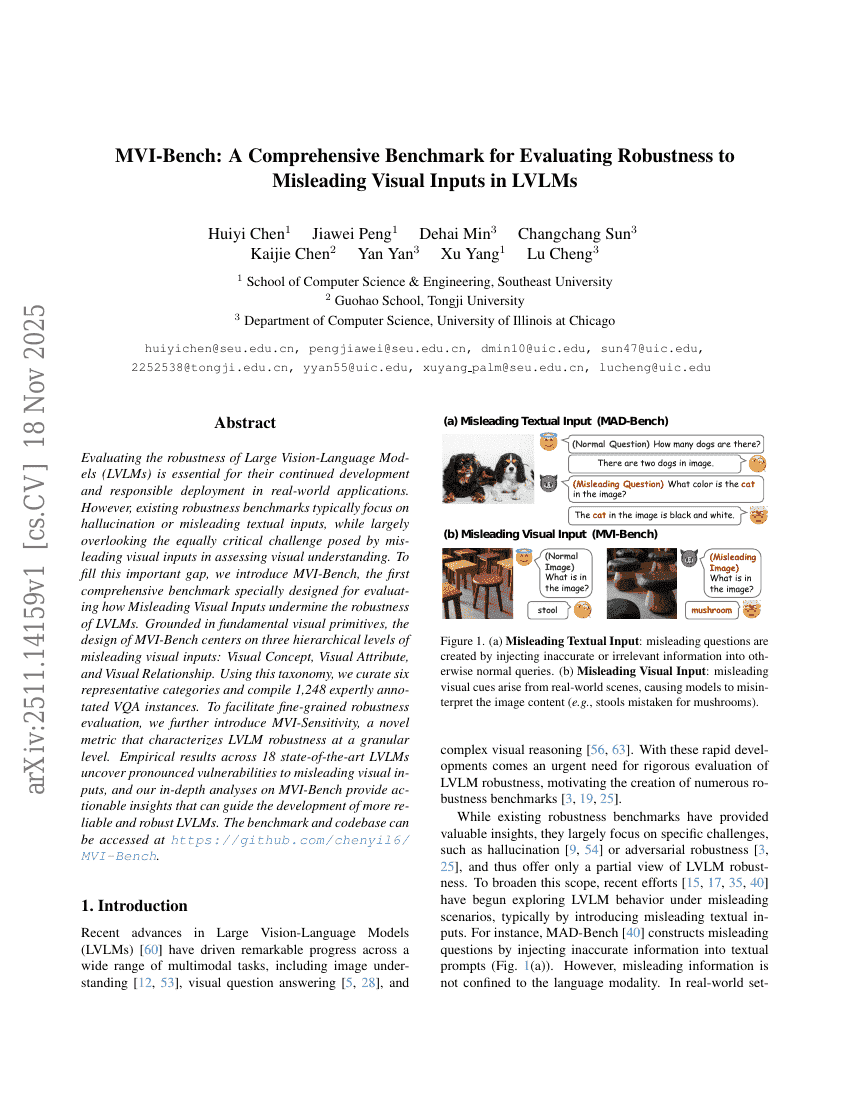

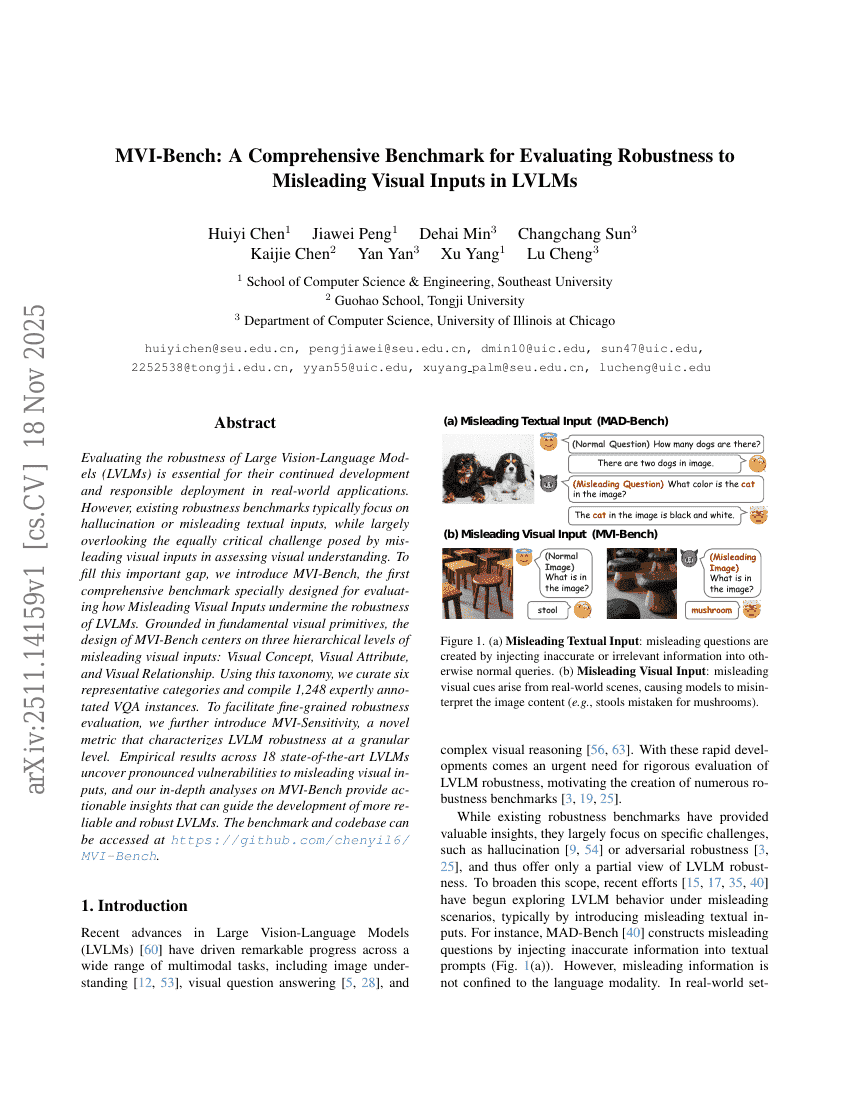

MVI-Bench: A Comprehensive Benchmark for Evaluating Robustness to Misleading Visual Inputs in LVLMs

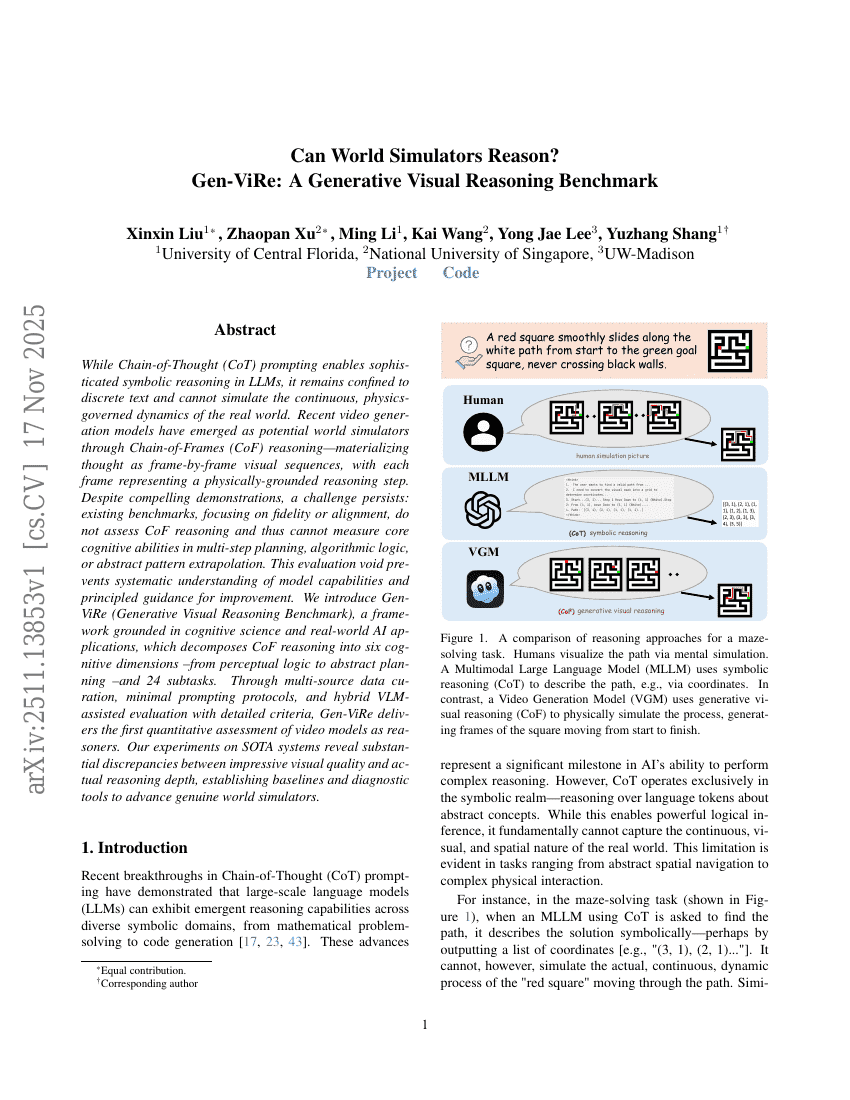

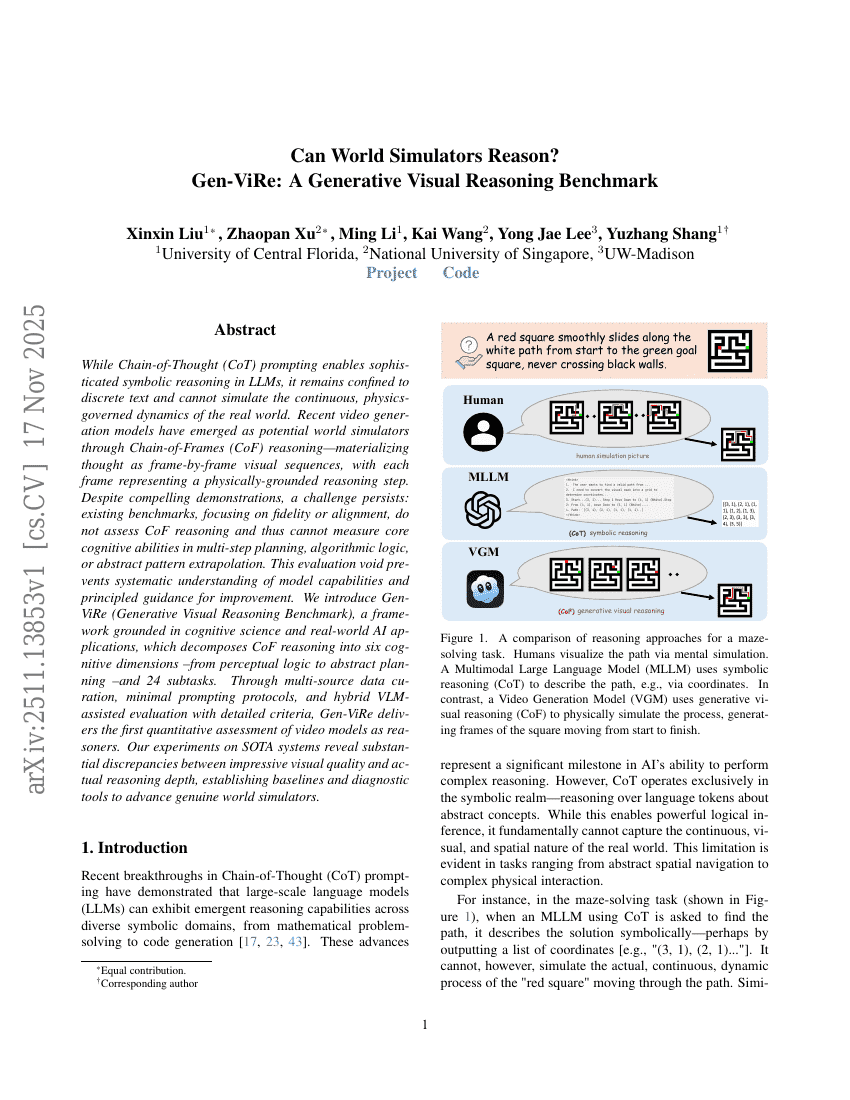

Can World Simulators Reason? Gen-ViRe: A Generative Visual Reasoning Benchmark

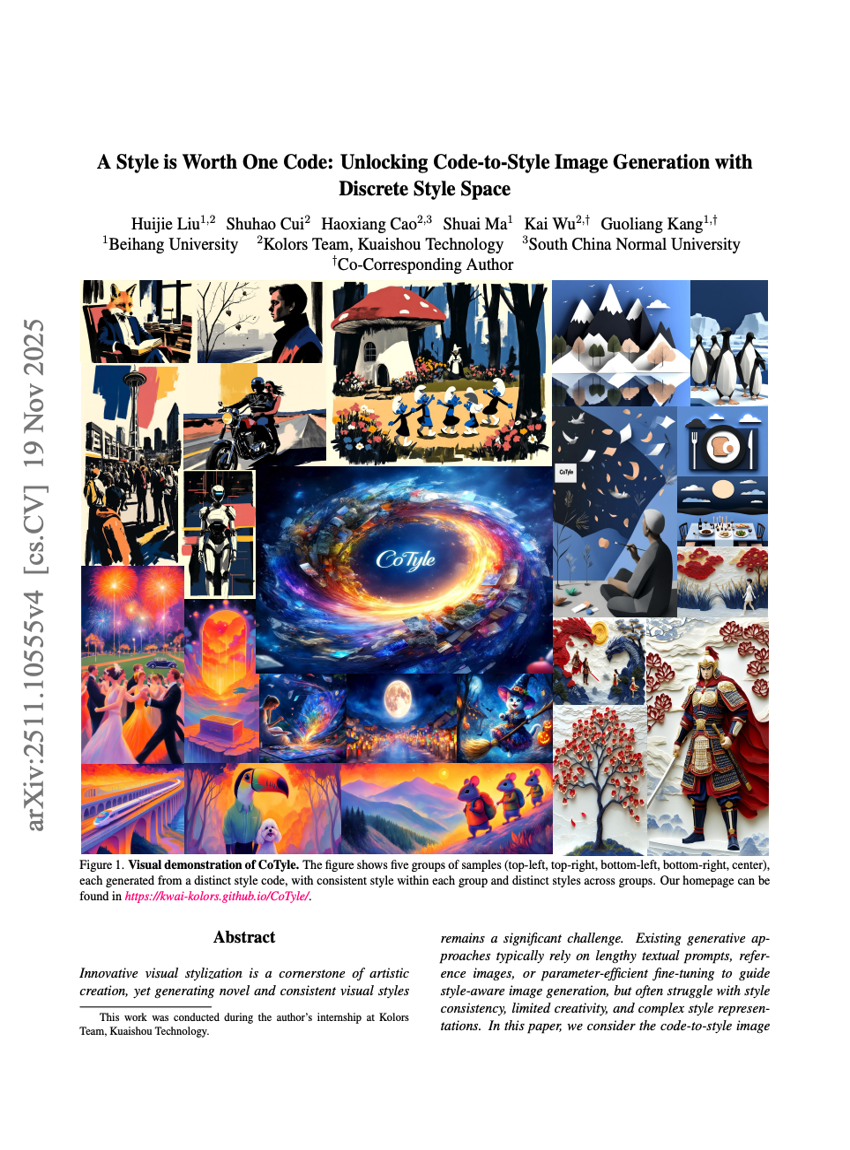

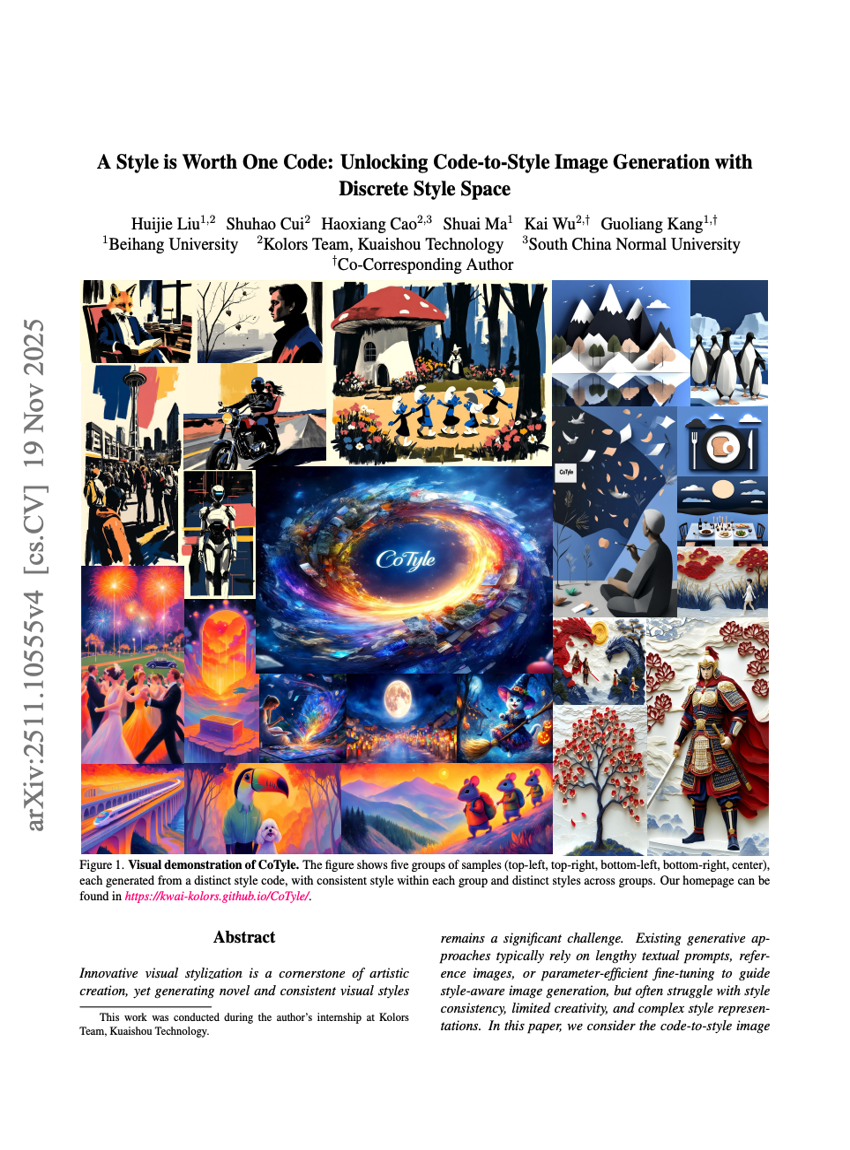

A Style is Worth One Code: Unlocking Code-to-Style Image Generation with Discrete Style Space

AraLingBench: A Human-Annotated Benchmark for Evaluating Arabic Linguistic Capabilities of Large Language Models

Think-at-Hard: Selective Latent Iterations to Improve Reasoning Language Models

HumanSense: From Multimodal Perception to Empathetic Context-Aware Responses through Reasoning MLLMs

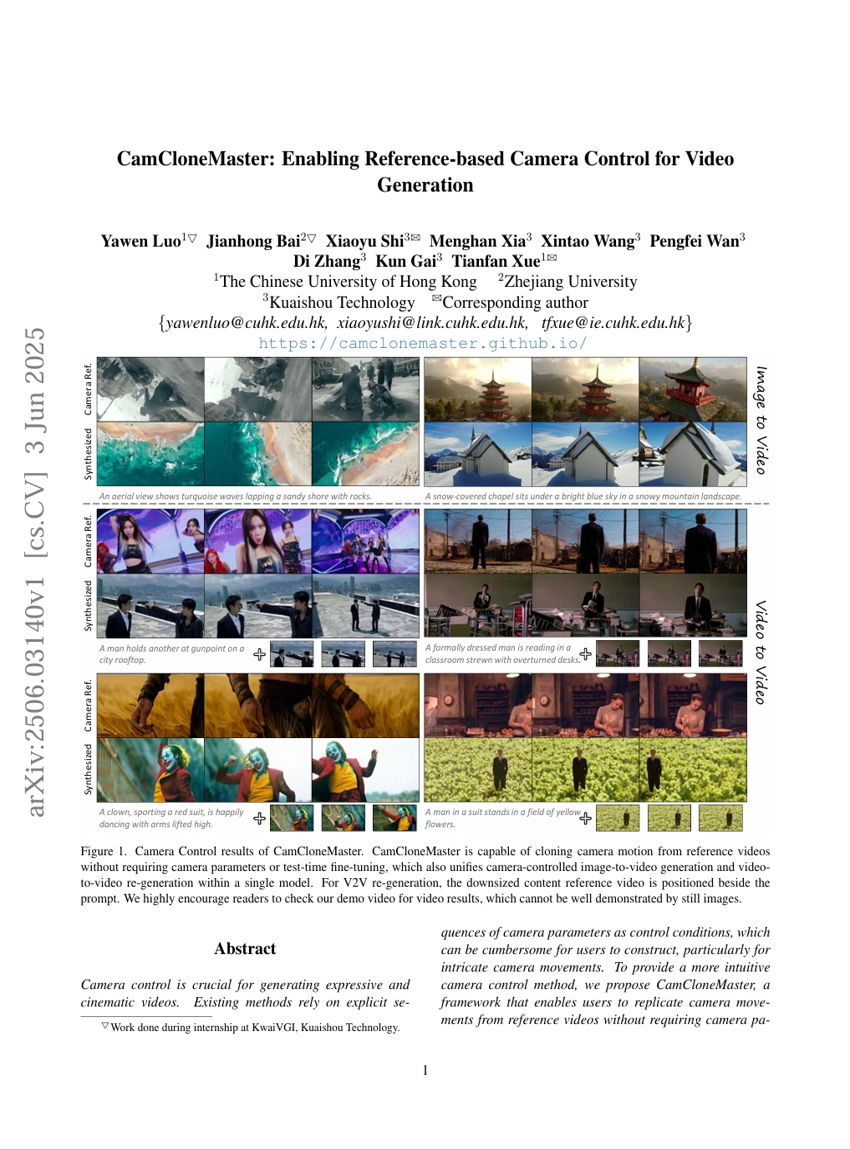

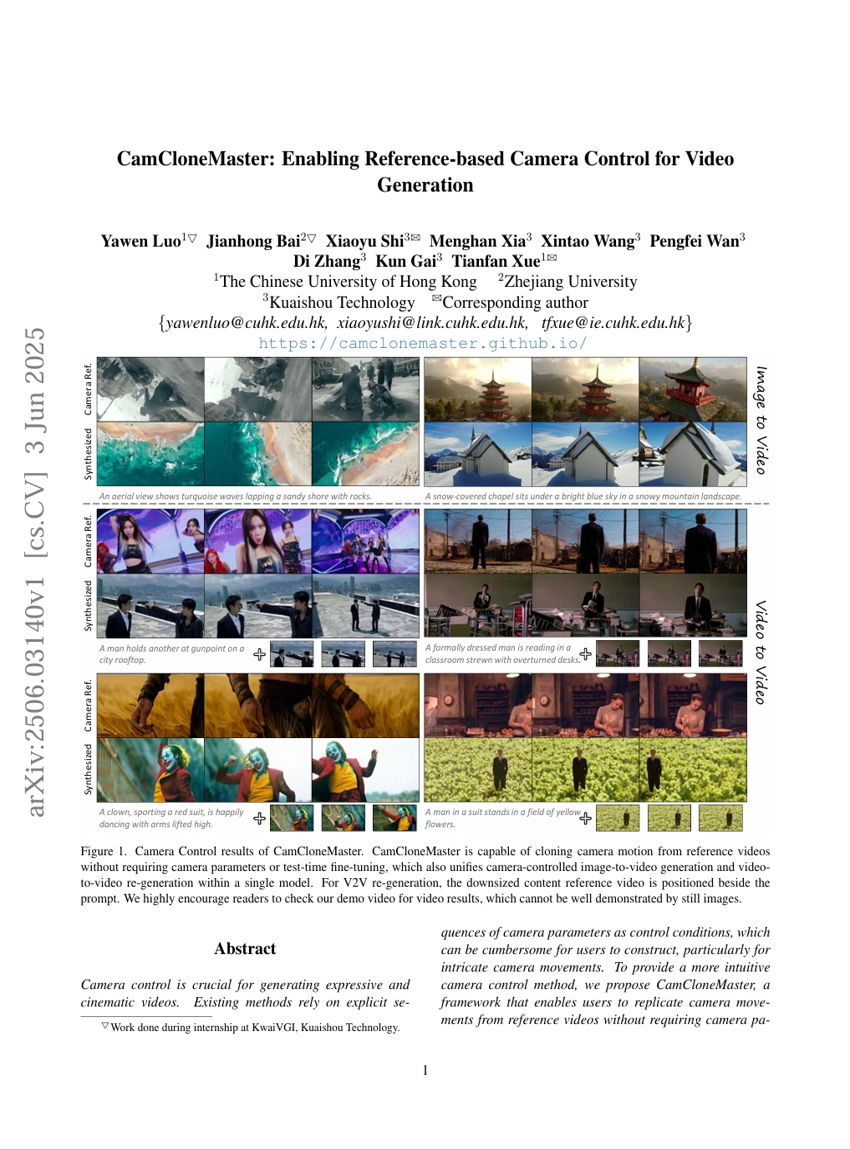

CamCloneMaster: Enabling Reference-based Camera Control for Video Generation

EditScore: Unlocking Online RL for Image Editing via High-Fidelity Reward Modeling

InteractMove: Text-Controlled Human-Object Interaction Generation in 3D Scenes with Movable Objects

WebCoach: Self-Evolving Web Agents with Cross-Session Memory Guidance

Learning to Trust: Bayesian Adaptation to Varying Suggester Reliability in Sequential Decision Making

GroupRank: A Groupwise Reranking Paradigm Driven by Reinforcement Learning

MMaDA-Parallel: Multimodal Large Diffusion Language Models for Thinking-Aware Editing and Generation

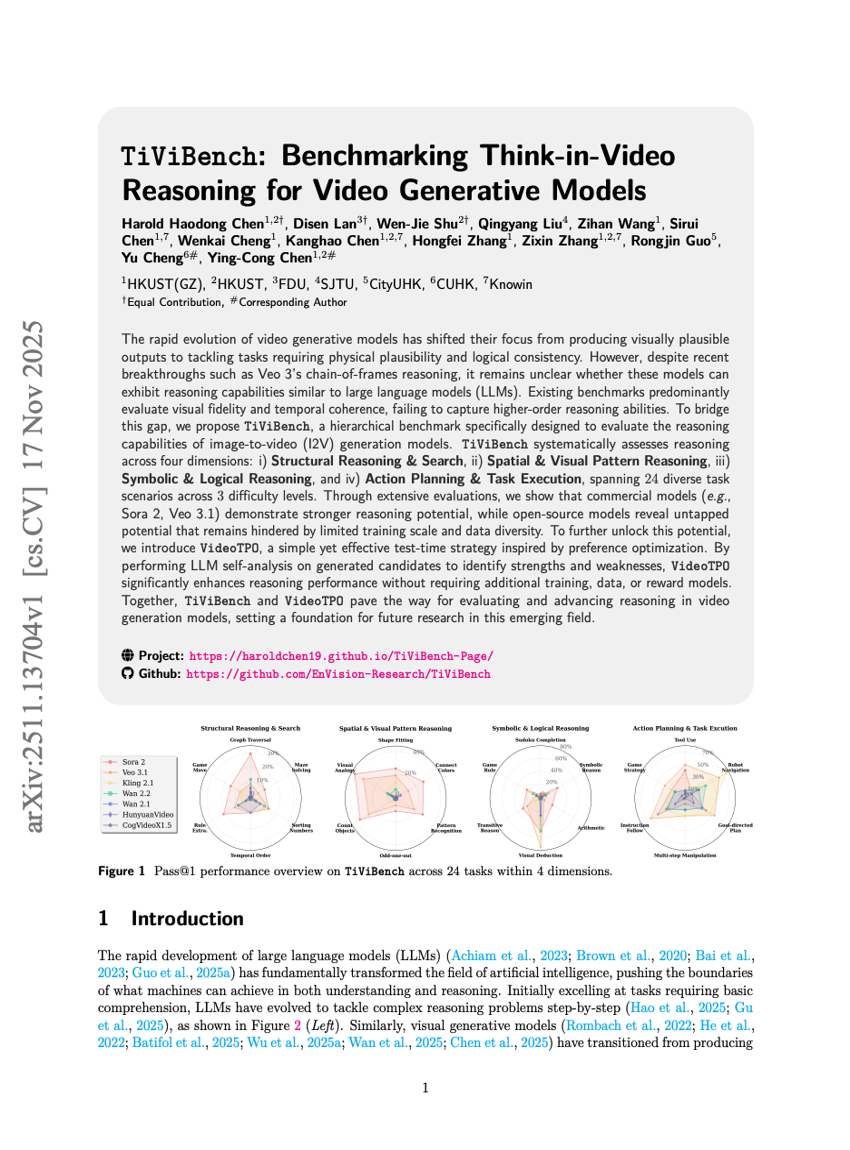

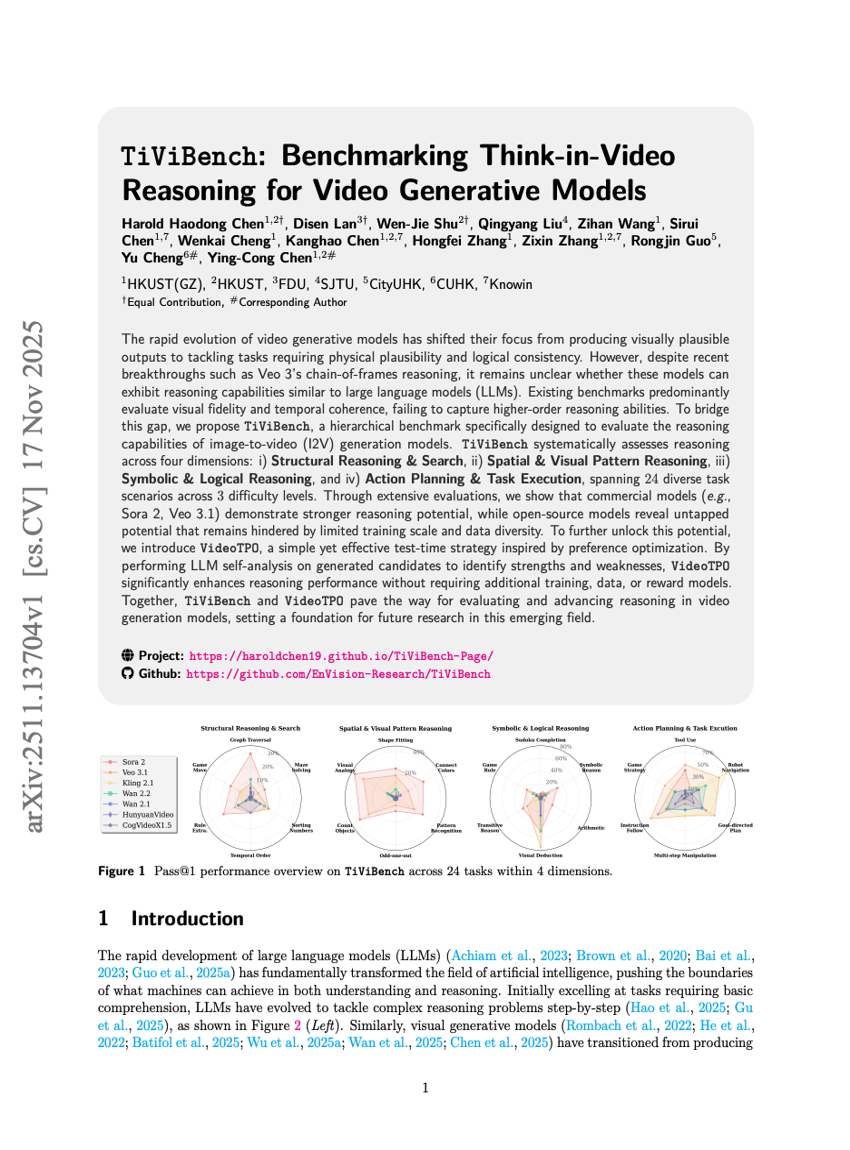

TiViBench: Benchmarking Think-in-Video Reasoning for Video Generative Models

Part-X-MLLM: Part-aware 3D Multimodal Large Language Model

Uni-MoE-2.0-Omni: Scaling Language-Centric Omnimodal Large Model with Advanced MoE, Training and Data

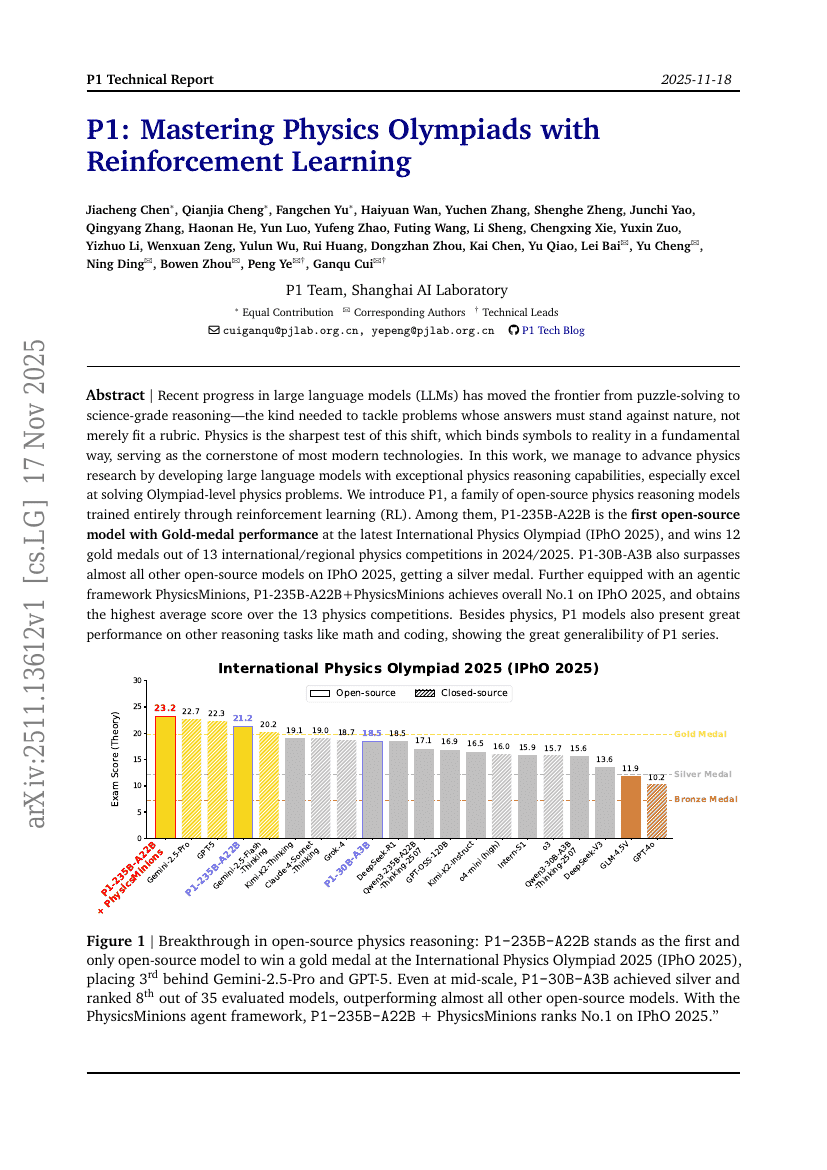

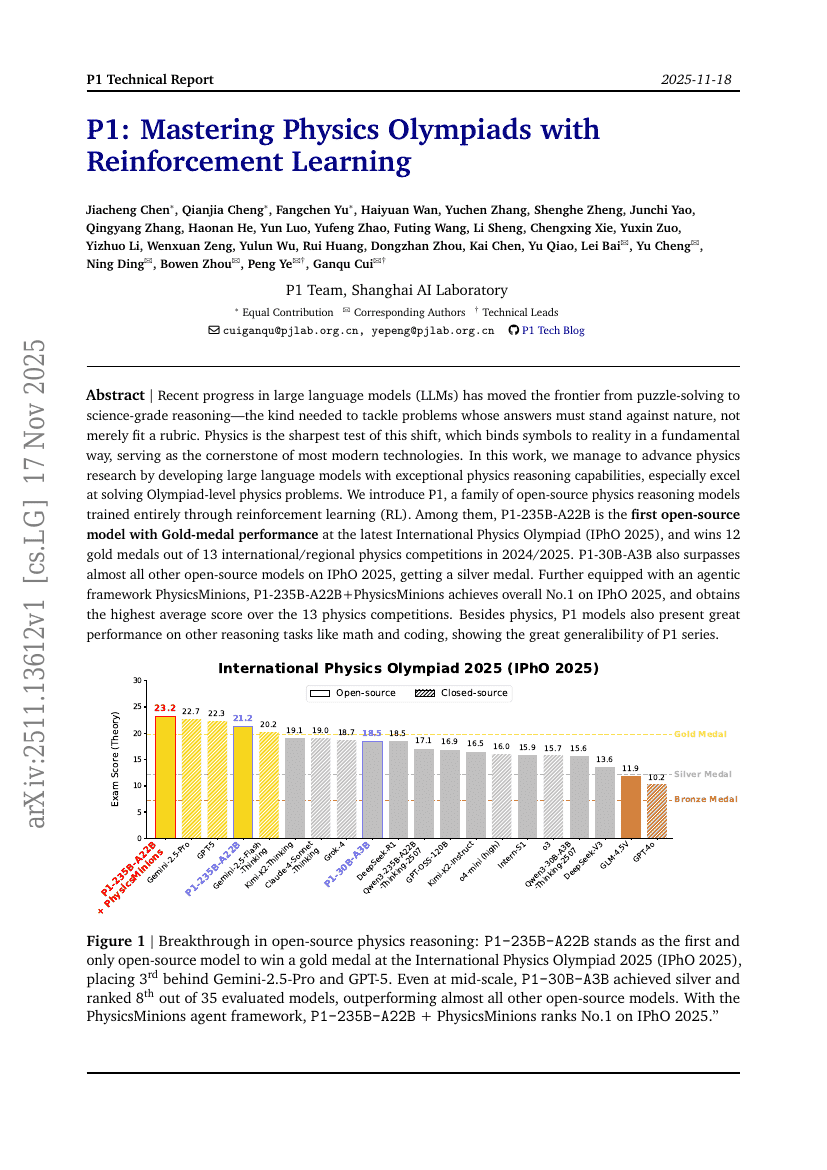

P1: Mastering Physics Olympiads with Reinforcement Learning

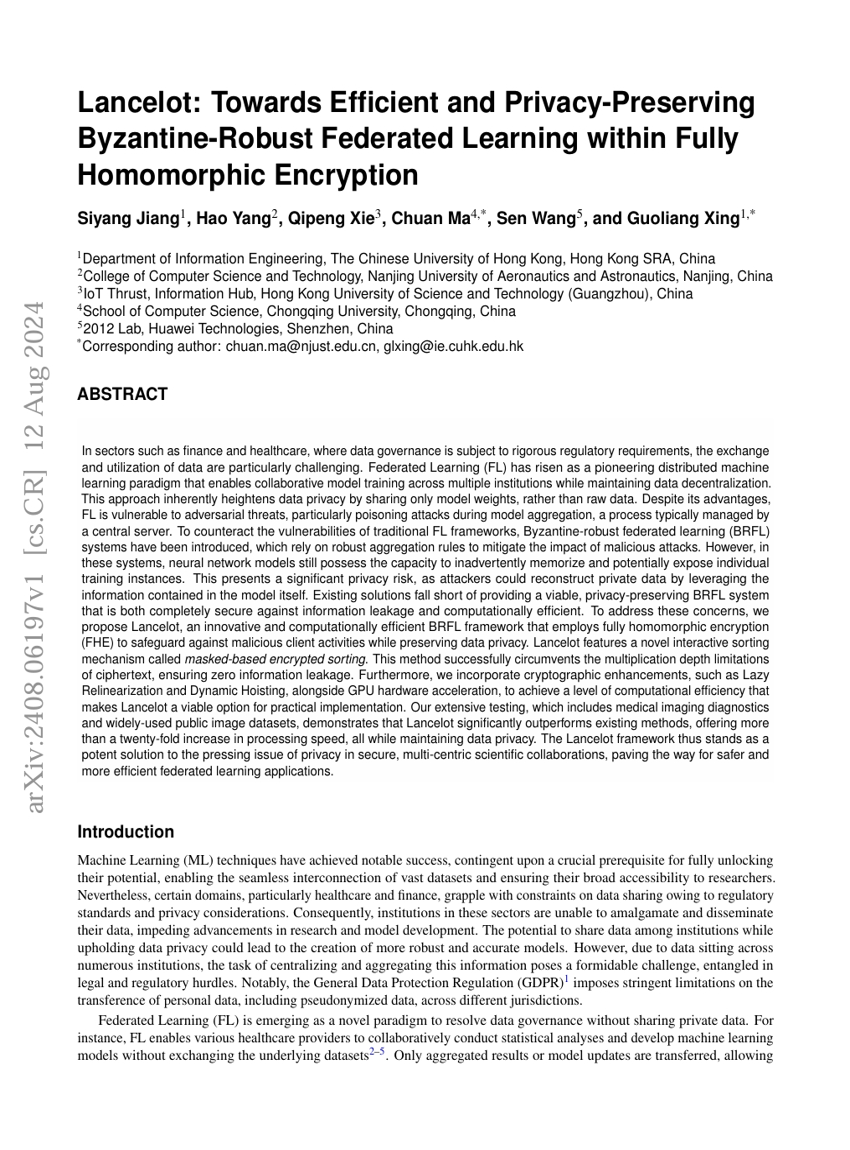

Lancelot: Towards Efficient and Privacy-Preserving Byzantine-Robust Federated Learning within Fully Homomorphic Encryption

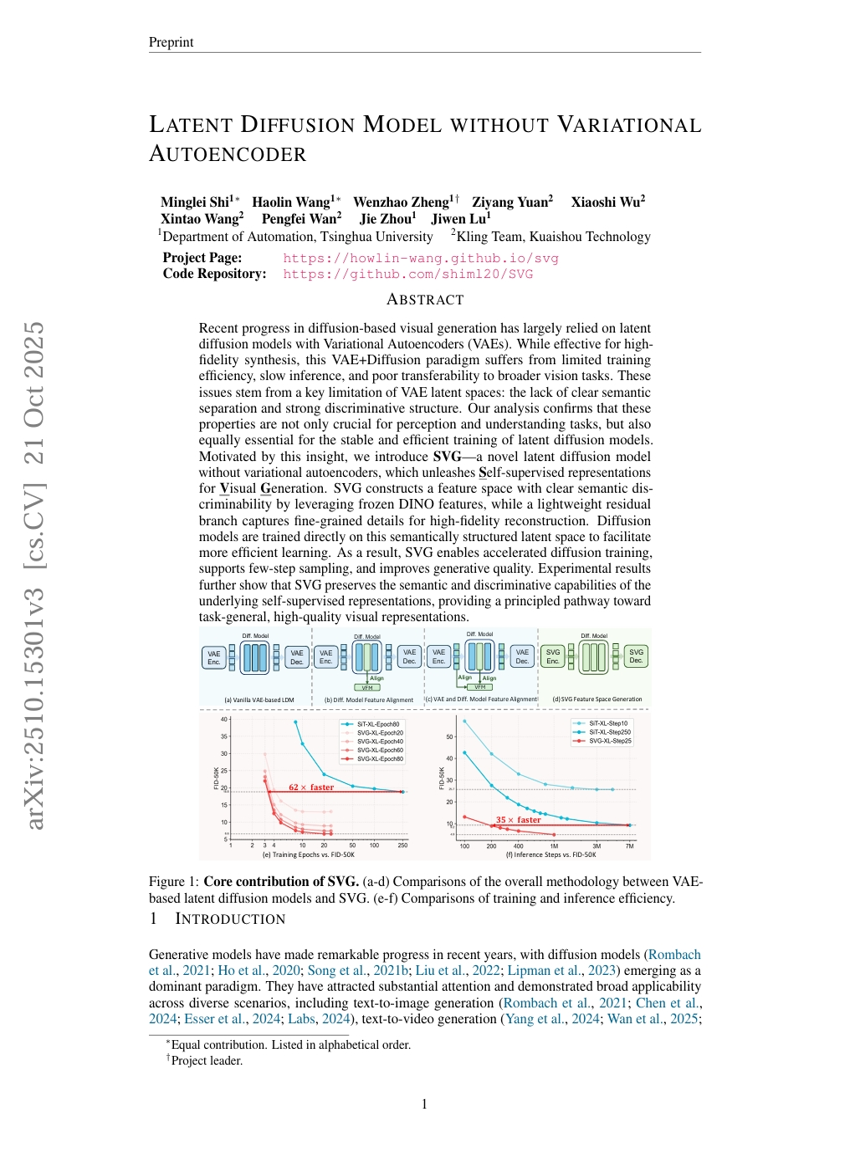

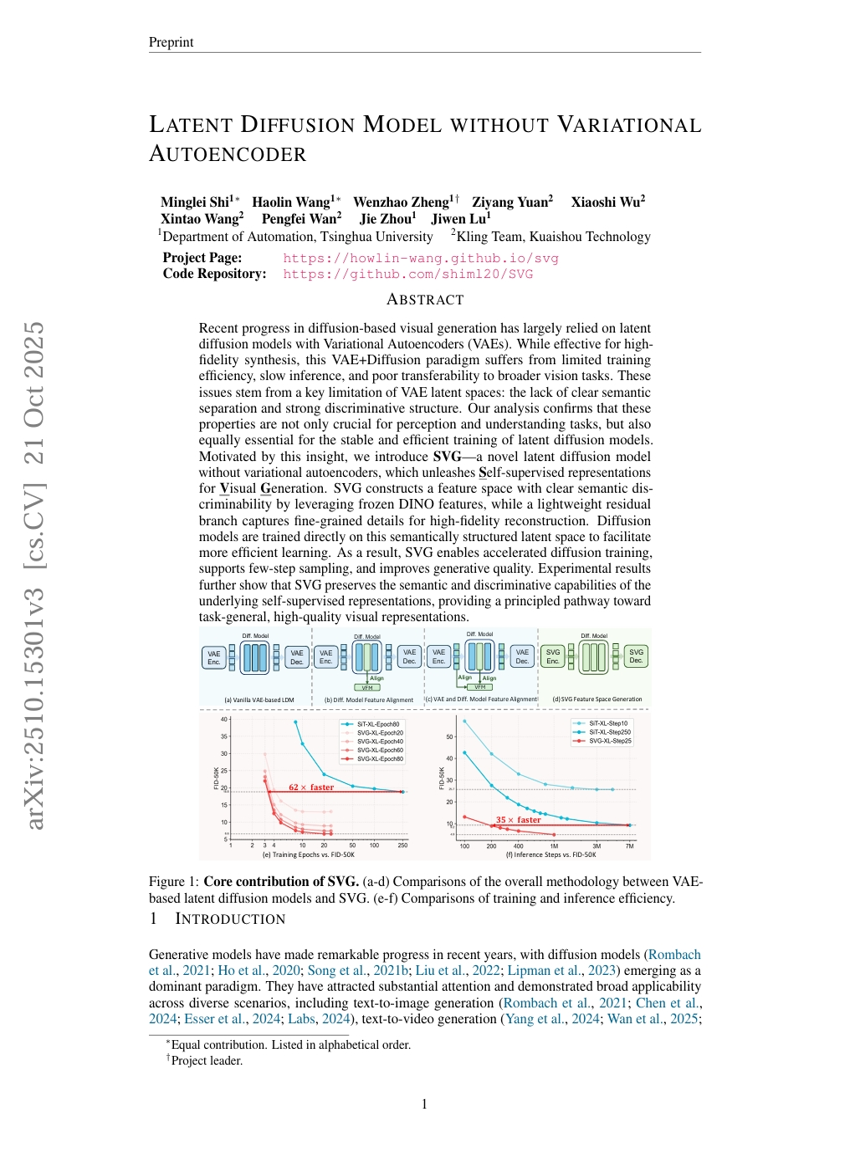

Latent Diffusion Model without Variational Autoencoder

RewardMap: Tackling Sparse Rewards in Fine-grained Visual Reasoning via Multi-Stage Reinforcement Learning

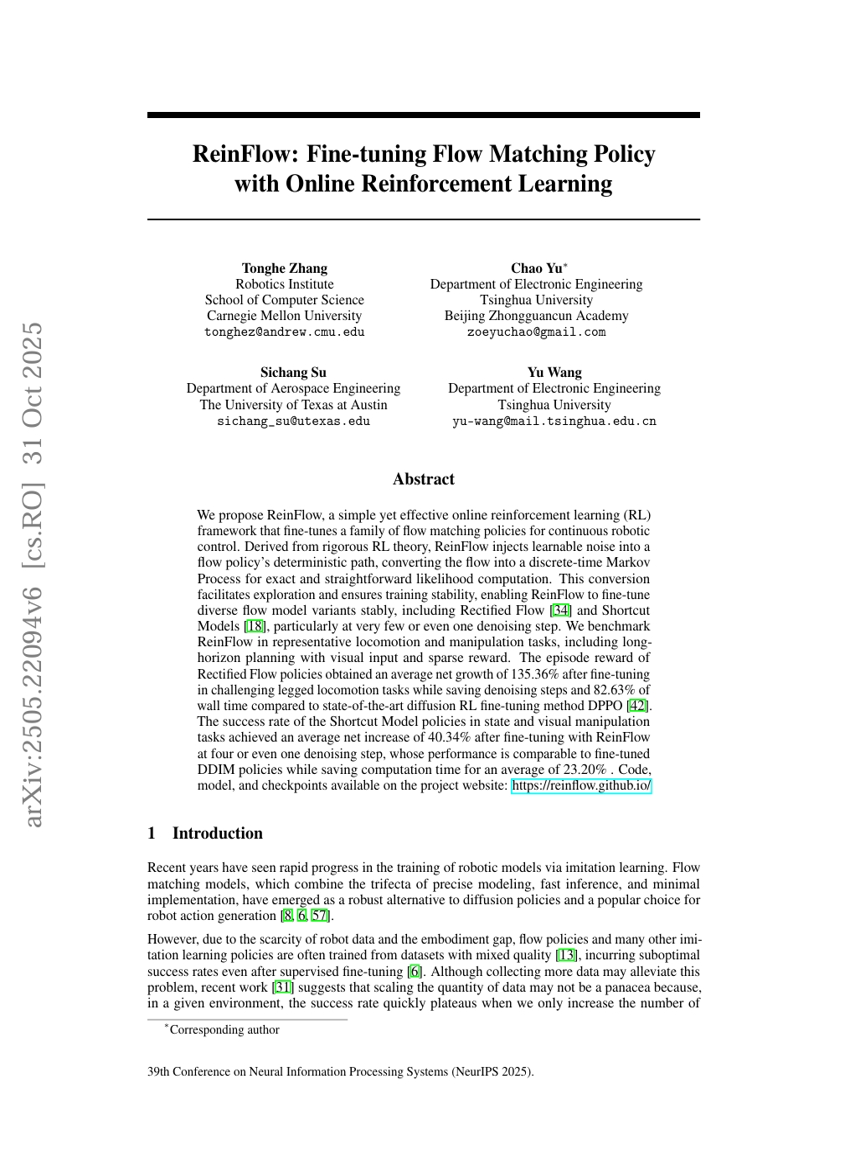

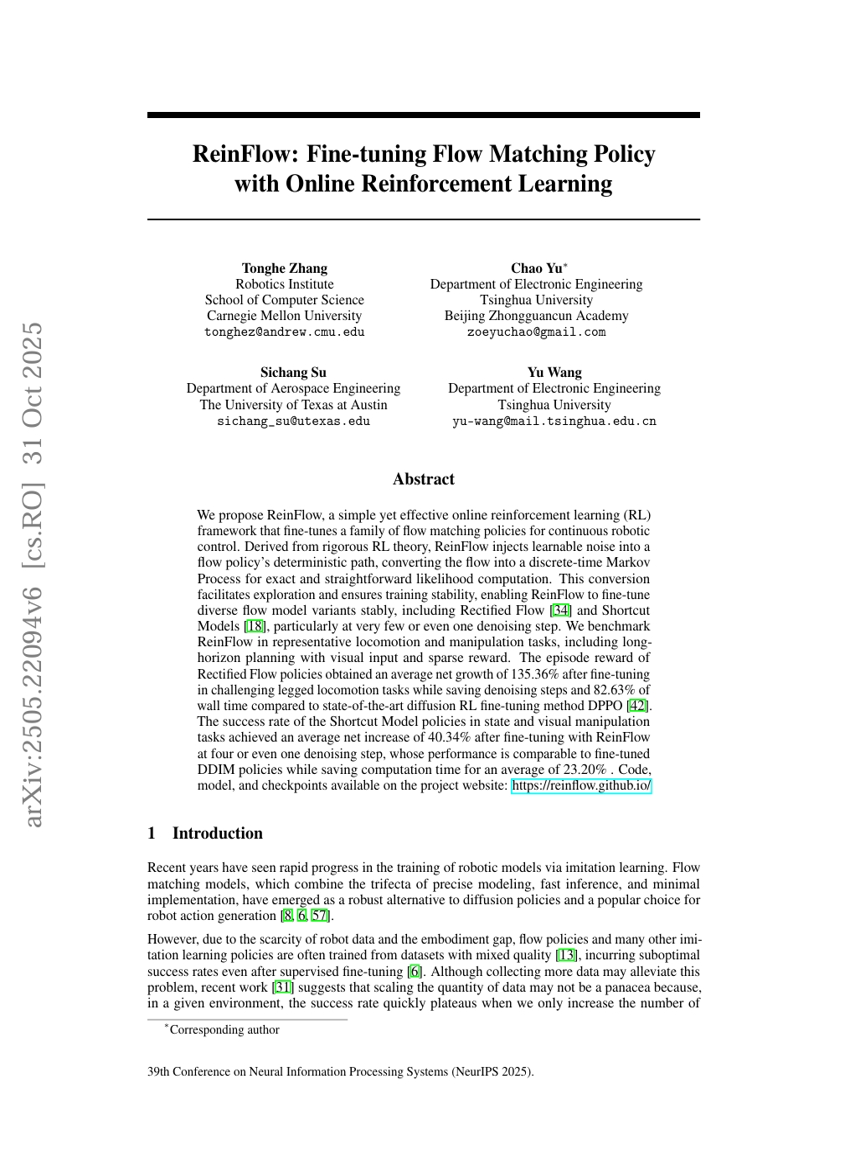

ReinFlow: Fine-tuning Flow Matching Policy with Online Reinforcement Learning

Voice Evaluation of Reasoning Ability: Diagnosing the Modality-Induced Performance Gap

MarsRL: Advancing Multi-Agent Reasoning System via Reinforcement Learning with Agentic Pipeline Parallelism

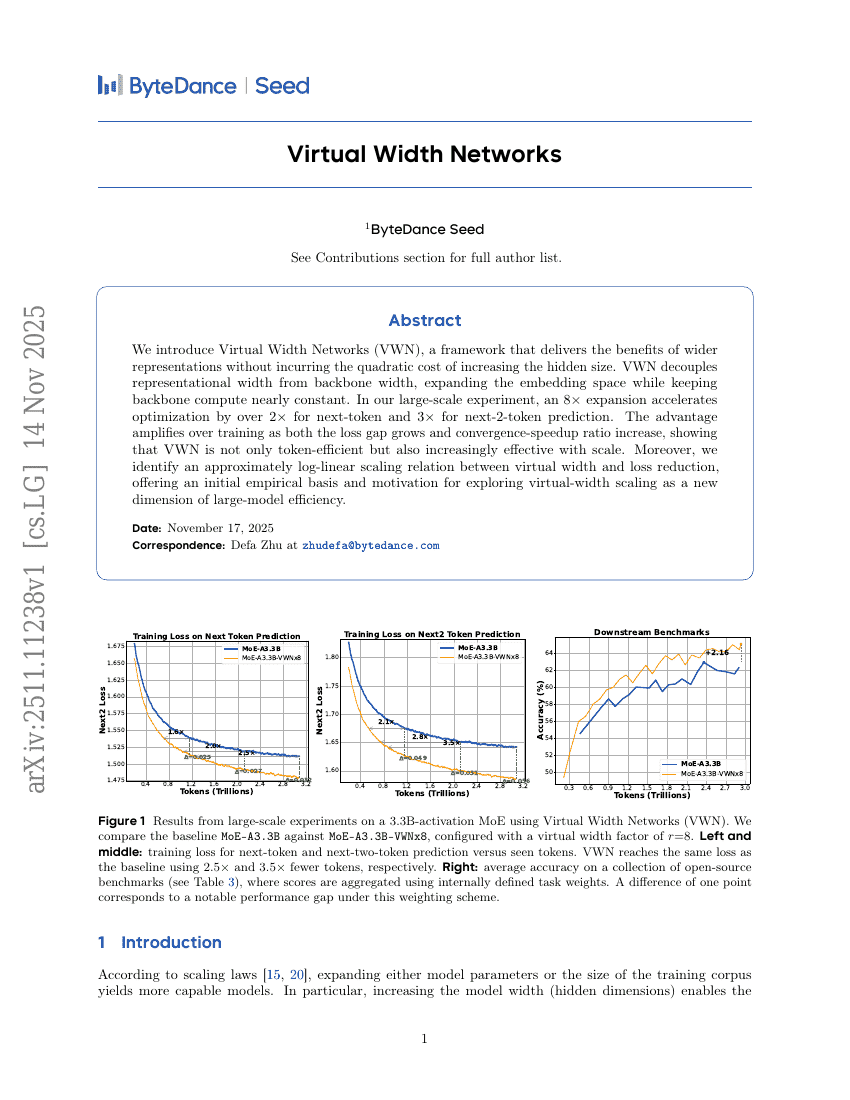

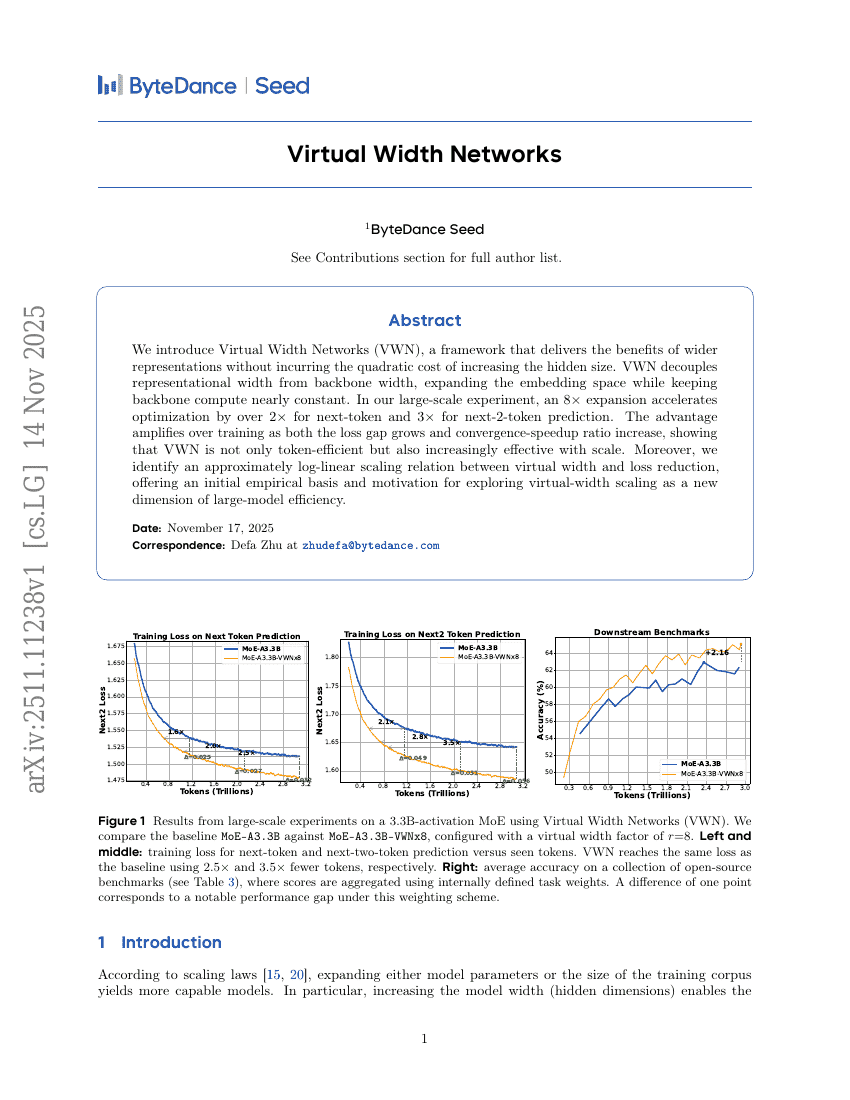

Virtual Width Networks

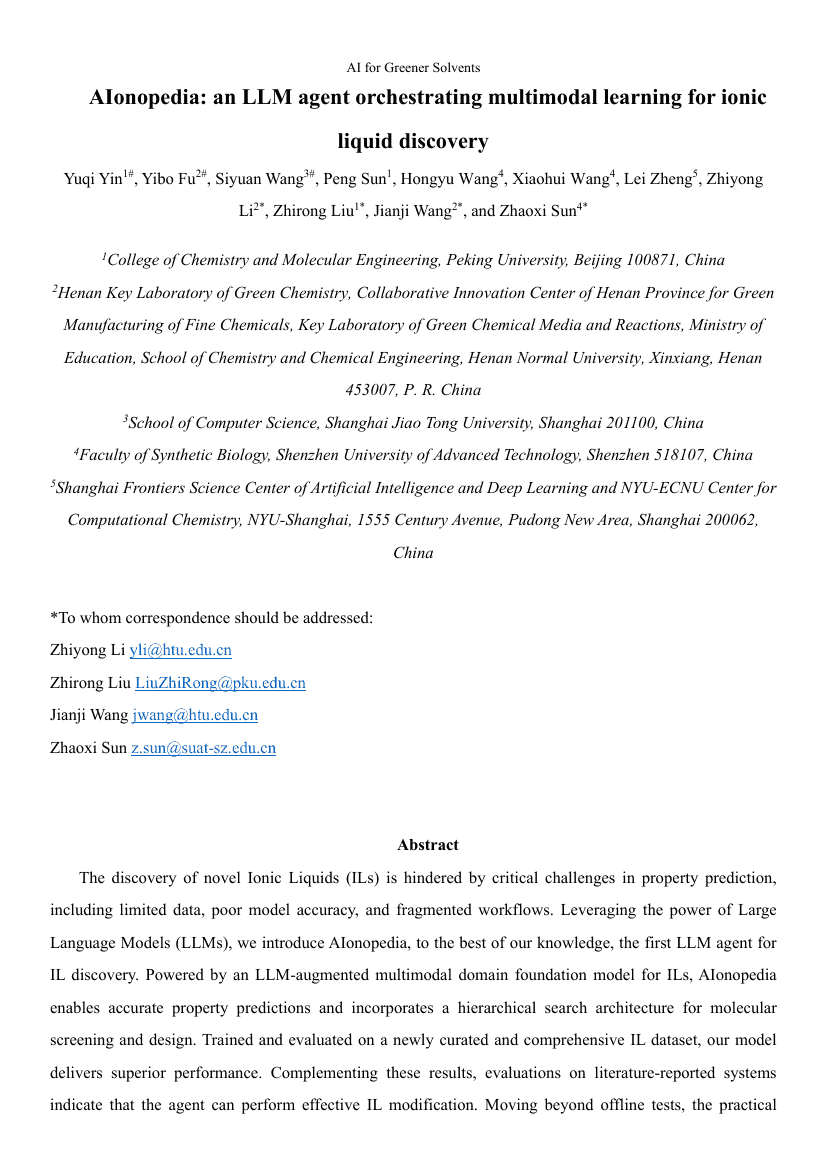

AIonopedia: an LLM agent orchestrating multimodal learning for ionic liquid discovery

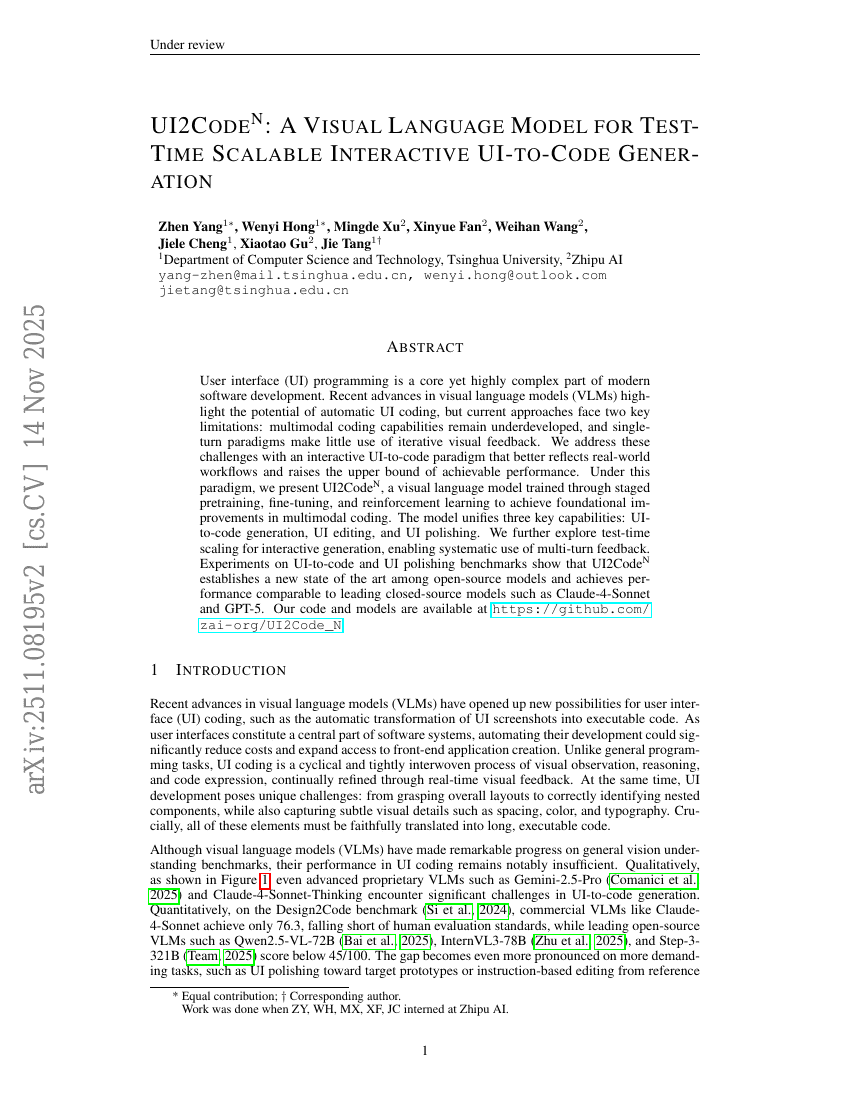

UI2CodeN: A Visual Language Model for Test-Time Scalable Interactive UI-to-Code Generation

GGBench: A Geometric Generative Reasoning Benchmark for Unified Multimodal Models
