Command Palette

Search for a command to run...

Fast and Accurate! Cohere Releases open-source Transcription Model; Accurate Parsing of Complex Scenarios: Chandra-ocr-2 Visual Language Model Achieves Precise OCR.

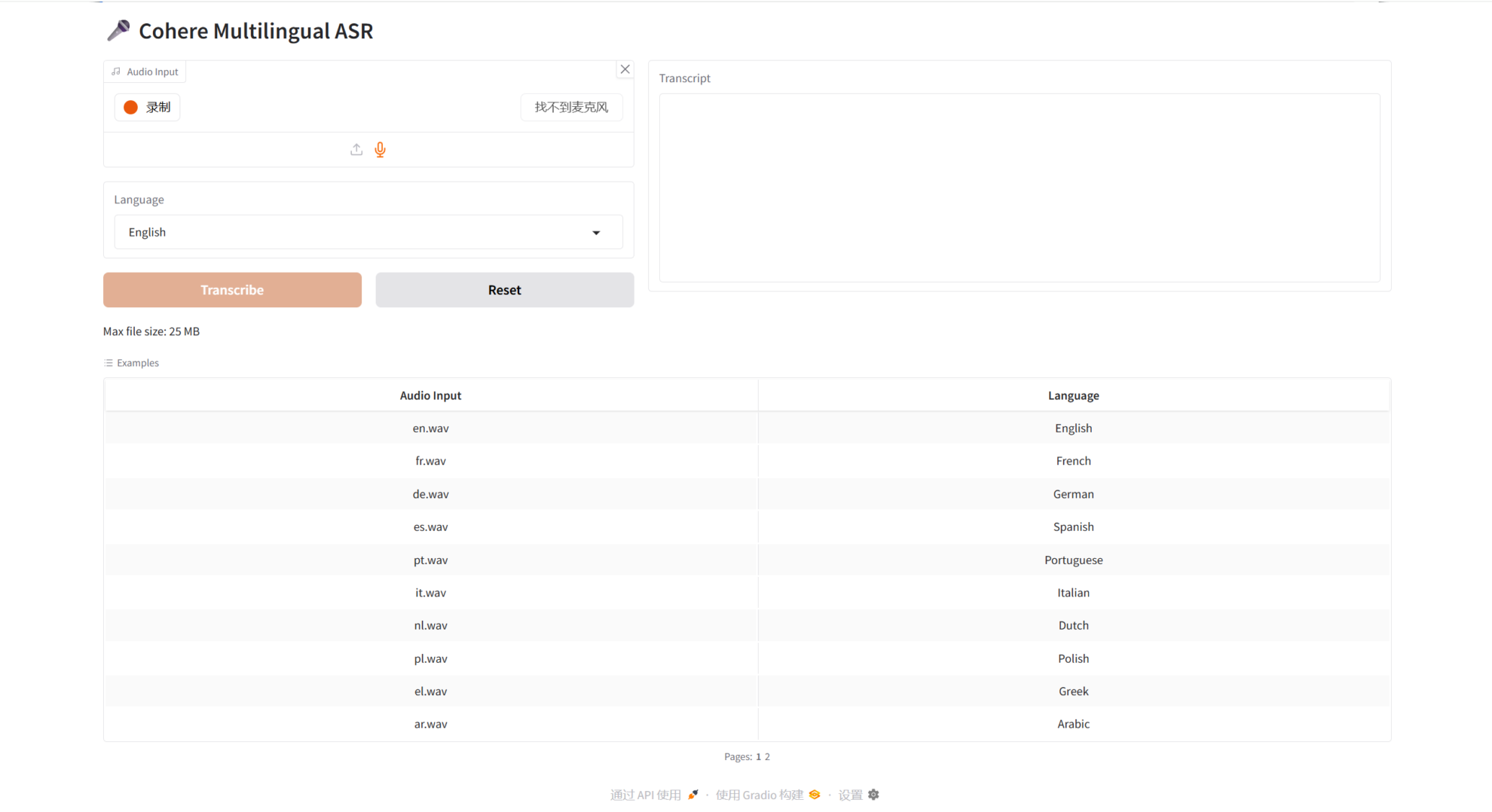

In the current wave of accelerated global digital transformation, voice data has become a new source of business value for enterprises. However, how to break through the bottlenecks of inference cost and processing speed while ensuring high transcription accuracy has always been an unresolved problem. Cohere released an open-source speech recognition model, Cohere-transcribe-03-2026, in March 2026.This dedicated transcription model, with its 2 billion parameters, is lightweight, highly productive, and highly accurate, setting a new technical standard for "precise speech processing" in the era of large models.

The most notable feature of Cohere-transcribe is its extreme inference efficiency and accuracy. The R&D team adopted an asymmetric encoder-decoder architecture, concentrating over 901 TP3T of computing power on the Fast-Conformer encoder. This significantly reduces the computational overhead of autoregressive inference through a simplified decoder, solving the problems of high deployment costs and slow response times in traditional ASR models.

In terms of data engineering, it relies on 500,000 hours of carefully selected speech transcription pairs.By combining proprietary cleaning pipelines and synthetic data augmentation through multiple rounds of error analysis, the model has developed a "golden ear" that can accurately hear even in noisy environments. The model is also equipped with a flexibly customizable punctuation prompting mechanism that can automatically add punctuation or adjust the format according to the user's needs. This not only solves the problem of many original data lacking punctuation, but also makes the final generated text read smoothly and naturally, truly achieving both speed and accuracy.

The HyperAI website now features the "Cohere Transcribe Open Source Lightweight Speech Model," so give it a try!

Online use:https://go.hyper.ai/DonpU

A quick overview of hyper.ai's official website updates from April 6th to April 12th:

* High-quality public datasets: 4

* A selection of high-quality tutorials: 9

* Community article analysis: 2 articles

* Popular encyclopedia entries: 5

Top conferences with April deadlines: 3

Visit the official website:hyper.ai

Selected public datasets

1. ToolACE Complex Tool Learning Dialogue Dataset

ToolACE is an automated agent pipeline dataset for tool learning tasks, released in 2024 by Shanghai Jiao Tong University in collaboration with the University of Science and Technology of China, Huawei Noah's Ark Lab, and other institutions. This dataset aims to generate accurate, complex, and diverse tool learning data, specifically addressing practical challenges in tool learning such as insufficient data quality and limited scenario diversity.

Direct use:https://go.hyper.ai/RDx6d

2. CHOCLO Latin American Cultural Benchmark Dataset

The CHOCLO dataset is a benchmark dataset designed to evaluate the performance of language models on tasks involving Latin American cultural knowledge. It aims to assess the accuracy of language models in representing Latin American culture, with a particular focus on real-world issues in model training and output, such as underrepresentation, omissions, and biases related to the region's culture.

Direct use:https://go.hyper.ai/dnYtT

3. DRACO Cross-Disciplinary In-Depth Research Benchmark Dataset

DRACO (Cross-Domain Deep Research Accuracy, Completeness, and Objectivity Benchmark Dataset) is a dataset released by the Perplexity team to evaluate complex research tasks. This dataset contains 100 complex research tasks covering 40 countries and regions across five continents. These tasks involve 10 major application domains, including finance, shopping/product comparison, academia, and technology. Each task is a multi-step, multi-source information retrieval and analysis problem, accompanied by evaluation criteria designed and validated by 26 domain experts.

Direct use:https://go.hyper.ai/SdAUn

4. COCO-2017-Vietnamese Vietnamese Image Detection Dataset

COCO-2017-Vietnamese is a Vietnamese localization extension dataset based on the Microsoft Common Objects in Context (COCO) 2017 dataset, meticulously maintained and released by the AI Enthusiasm community. This dataset provides high-quality Vietnamese translations of original English image captions, offering a comprehensive bilingual benchmark dataset suitable for tasks such as image captioning and multimodal learning.

Direct use:https://go.hyper.ai/KSv2V

Selected Public Tutorials

1. Cohere Transcribe: An open-source, lightweight speech model

Cohere Transcribe is a lightweight speech model open-sourced by Cohere in March 2026. This model boasts 2 billion parameters and is designed specifically for edge devices, aiming to overcome the latency bottleneck caused by the large size of previous speech models. Cohere Transcribe was trained on 14 languages, including Chinese, Japanese, French, and Hebrew.

Run online:https://go.hyper.ai/DonpU

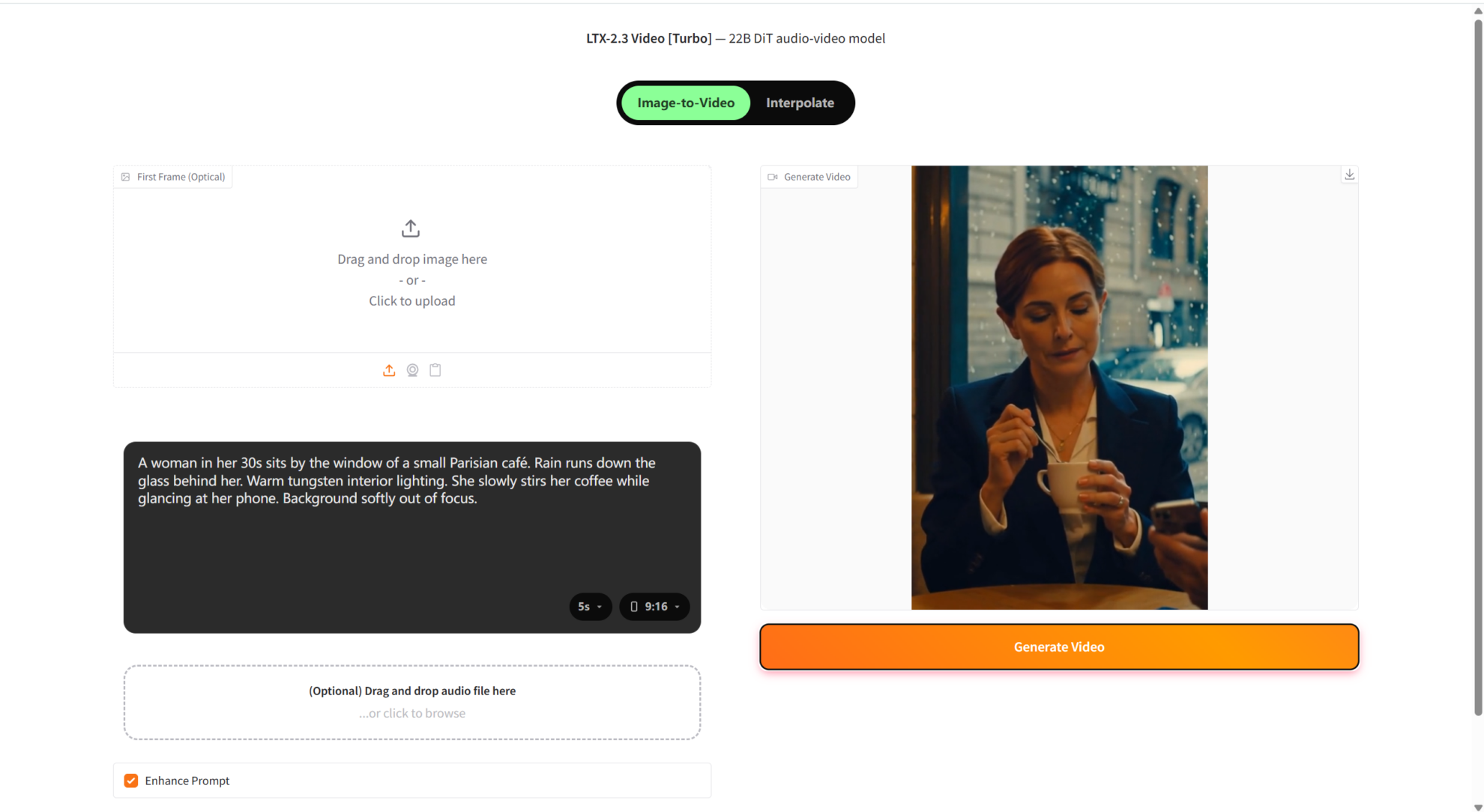

2. LTX-2.3-turbo Video Generator

LTX-2.3-turbo is an open-source video generation model released by Lightricks in March 2026, designed to push the limits of open-source video generation capabilities. This model employs an advanced diffusion transformer architecture and combines it with multimodal understanding capabilities to achieve high-quality, multi-resolution video content generation.

Run online:https://go.hyper.ai/tkiw4

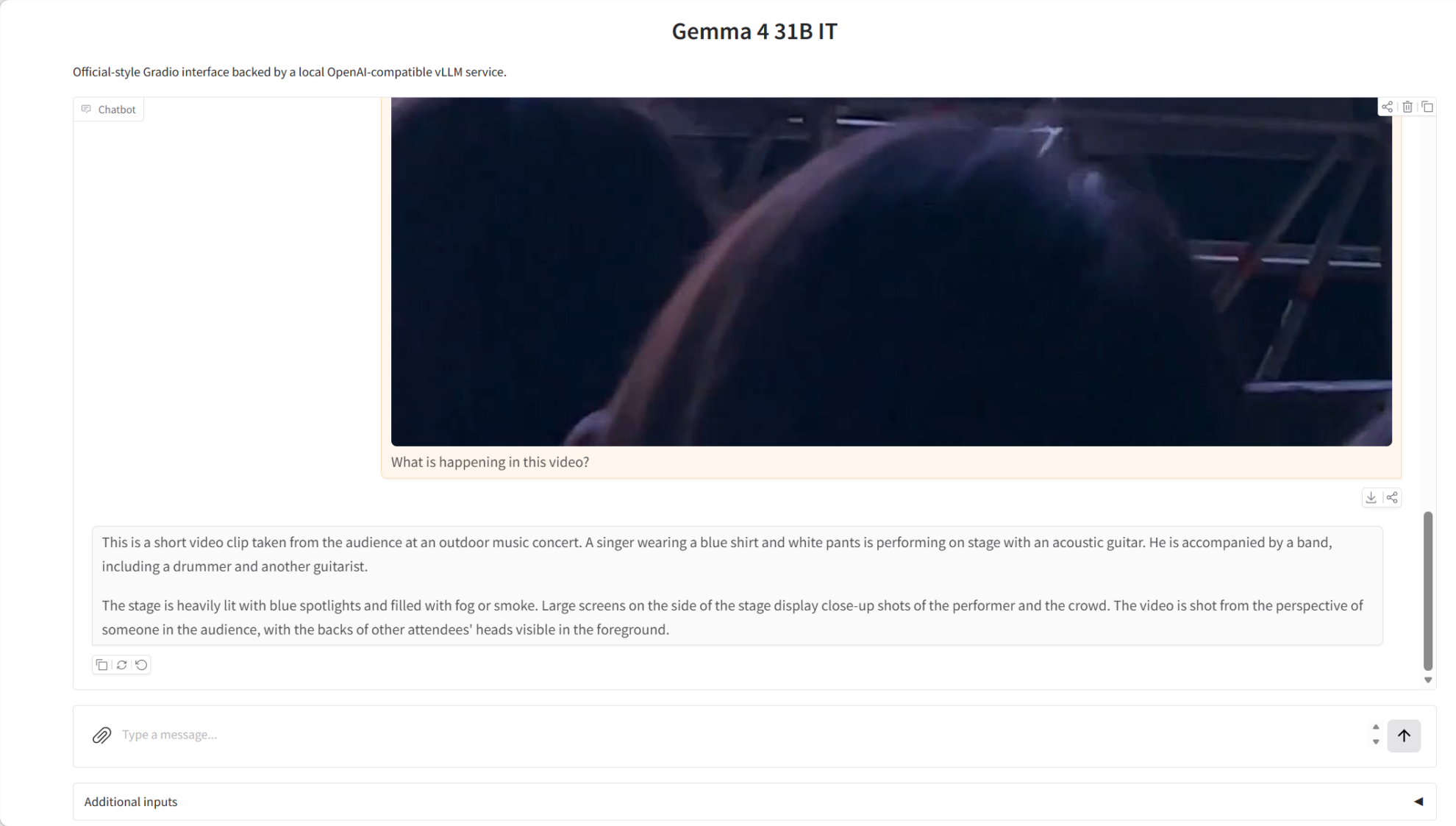

3. One-click deployment of Gemma-4-31B-it

Gemma 4 31B IT, released by Google DeepMind on April 2, 2026, is a 3.1 billion-bit instruction-intensive model in the Gemma 4 series. It supports text and image input as well as text output, provides a context window of up to 256K words, and natively supports inference, function calls, and system hints, making it ideal for high-quality question answering, coding assistance, and agent services. It supports over 140 programming languages and is primarily designed for inference, programming, agent workflows, and multimodal understanding tasks.

Run online:https://go.hyper.ai/RLgK9

4. One-click deployment of gemma-4-26B-A4B-it

Gemma 4 26B A4B IT was released by Google DeepMind on April 2, 2026. It supports text and image input as well as text output, with a context window of up to 256K words. It natively supports inference, function calls, and system hints, making it ideal for high-quality question answering, coding assistance, and agent services. It supports over 140 languages and is primarily designed for inference, programming, agent workflows, and multimodal understanding tasks.

Run online:https://go.hyper.ai/blUyh

5. OmniCoder-9B: For agent coding tasks

OmniCoder-9B was released by Teslatate in September 2025. It is a 9B-parameter coding proxy model based on a hybrid Qwen3.5-9B architecture, positioned as an open-source coding assistant that can be deployed on a single GPU. OmniCoder-9B is specifically optimized for real-world software engineering workflows, focusing on coherent multi-step inference, terminal operations, tool usage, and code modification processes. It is particularly suitable for coding tasks that require understanding, modification, and verification, rather than tasks that only return a one-time answer.

Run online:https://go.hyper.ai/LfNz9

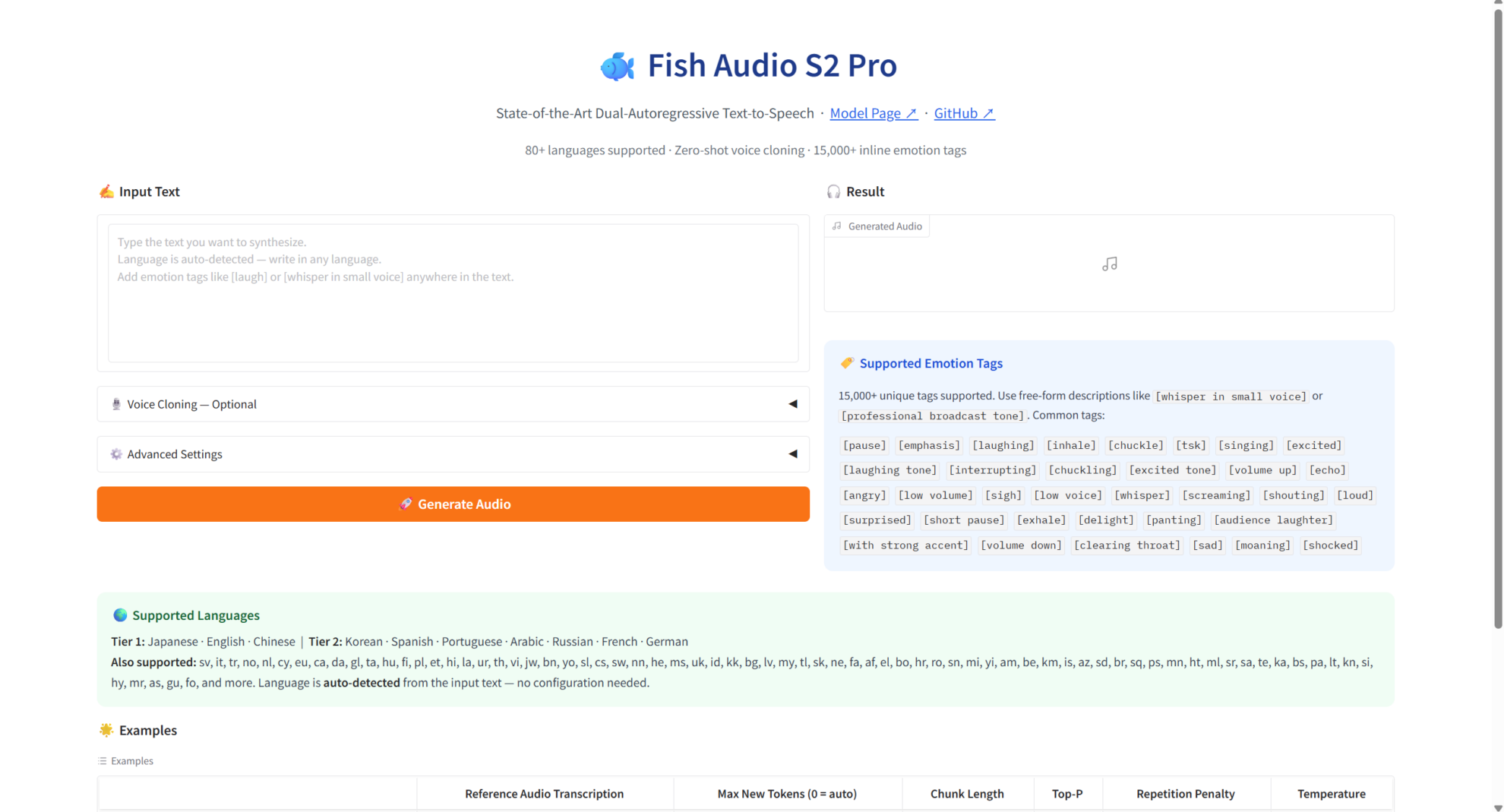

6. Fish Audio S2-Pro Natural Language Control Voice Emotion

In March 2026, Fish Audio released FishAudio-S2-Pro, an end-to-end dual-autoregressive (Dual-AR) text-to-speech (TTS) model with 5 billion parameters (4 billion slow autoregressives + 400 million fast autoregressives). It is deeply optimized for scenarios such as multilingual speech synthesis, personalized speech cloning, and emotional speech generation, and is designed specifically for speech synthesis tasks with high naturalness and high controllability.

Run online:https://go.hyper.ai/QEAJZ

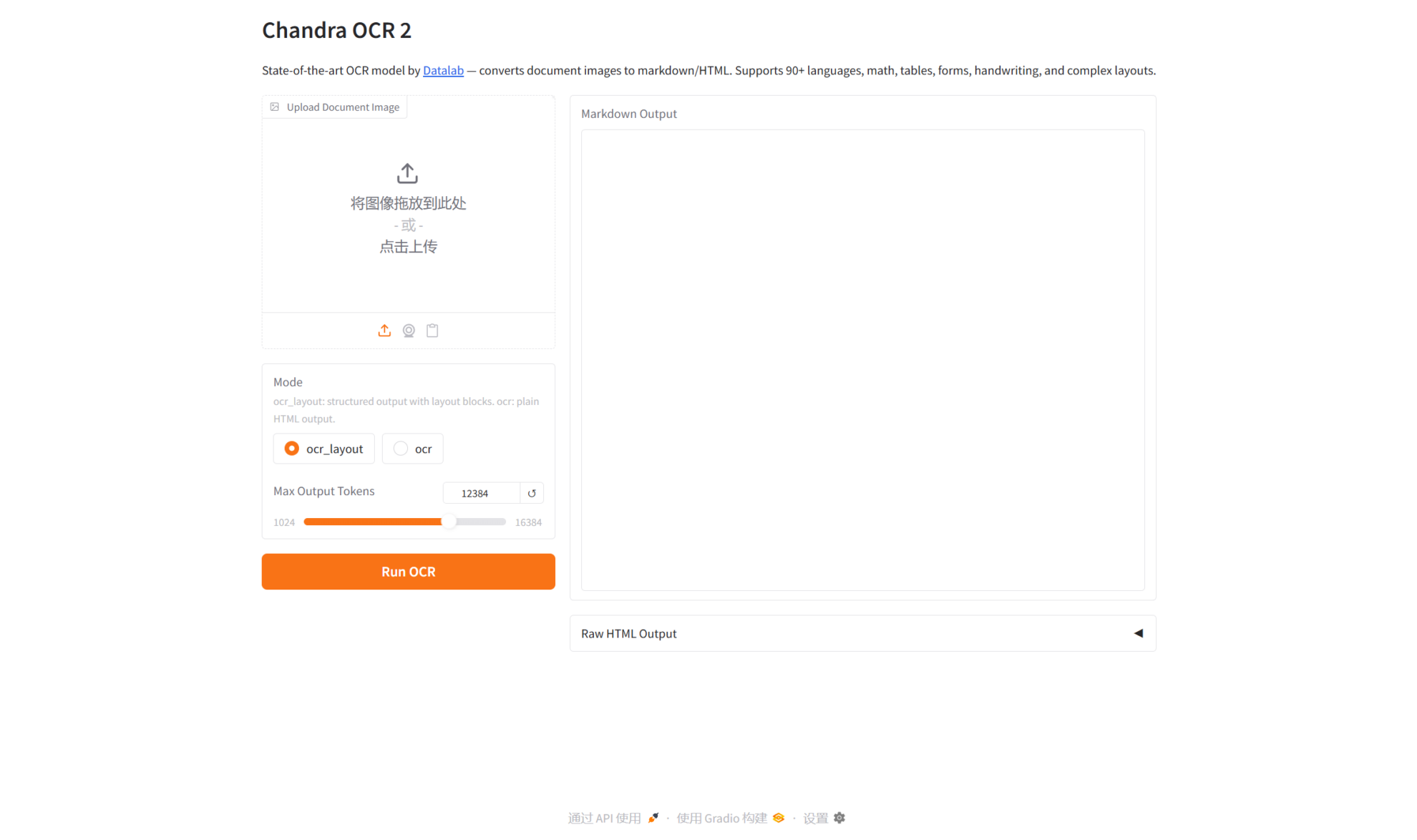

7. Chandra-ocr-2 accurately converts mathematical/spreadsheet/handwritten content into structured content.

Chandra-ocr-2 is a next-generation optical character recognition system launched by the Datalab team in March 2026, focusing on text recognition and structured output in complex scenarios. This model is fine-tuned based on advanced visual language pre-training technology, enabling it to intelligently recognize uploaded image content and return formatted text results.

Run online:https://go.hyper.ai/3KobP

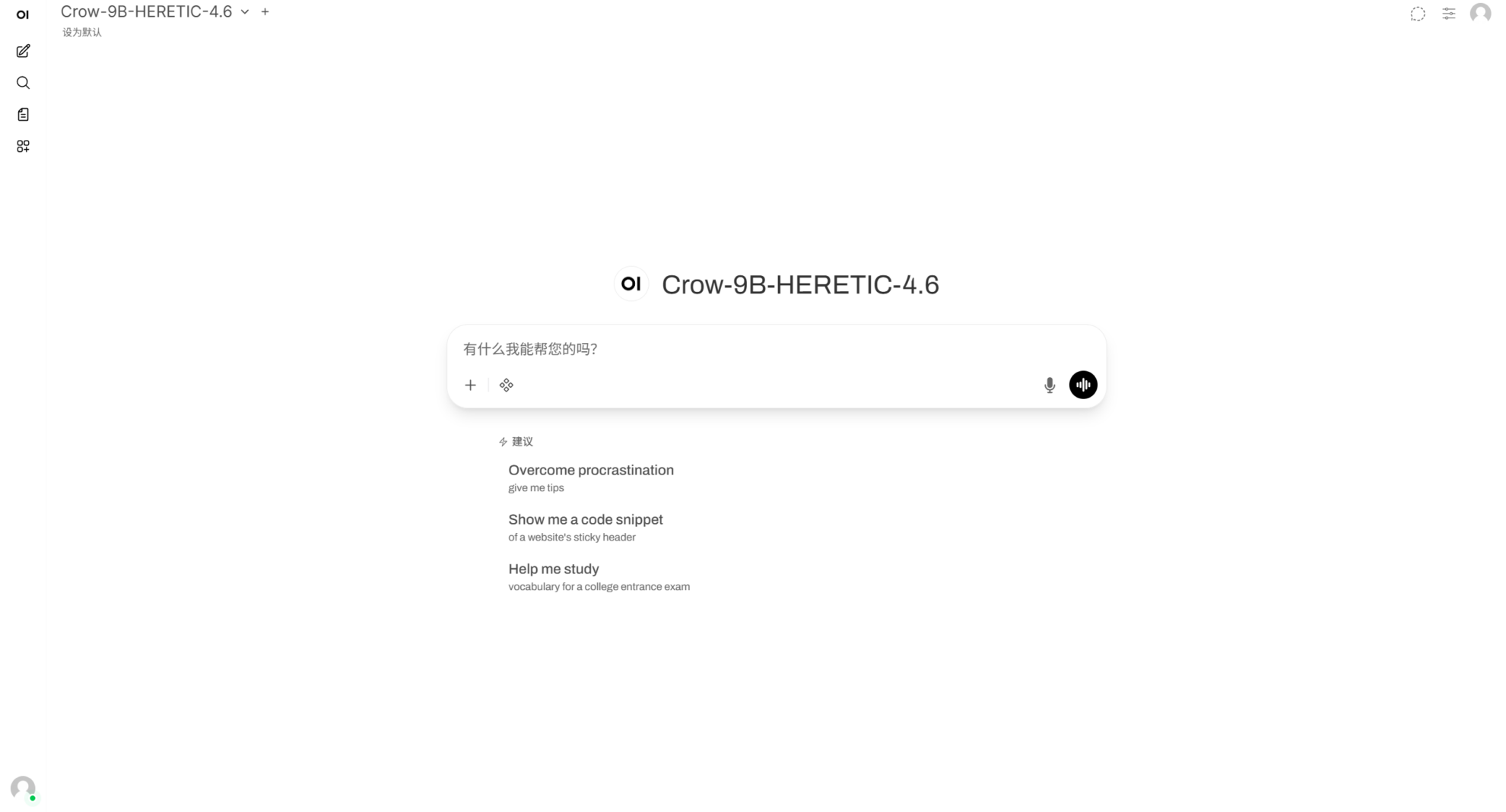

8. Crow-9B-HERETIC-4.6: A locally invoked dialogue model

Crow-9B-HERETIC-4.6 was released by Crownelius in 2025. Built on the Qwen 3.5 architecture, this model has 9 parameters and is released as a Distilled LLM. It is optimized for tasks such as high-quality general dialogue, logical reasoning, long text writing, code assistance, and multi-turn interactions. As a local large language model emphasizing directness, completeness, and structured expression in responses, Crow-9B-HERETIC-4.6 is suitable for use as a general intelligent assistant, learning aid, and text generation model.

Run online:https://go.hyper.ai/DrpSp

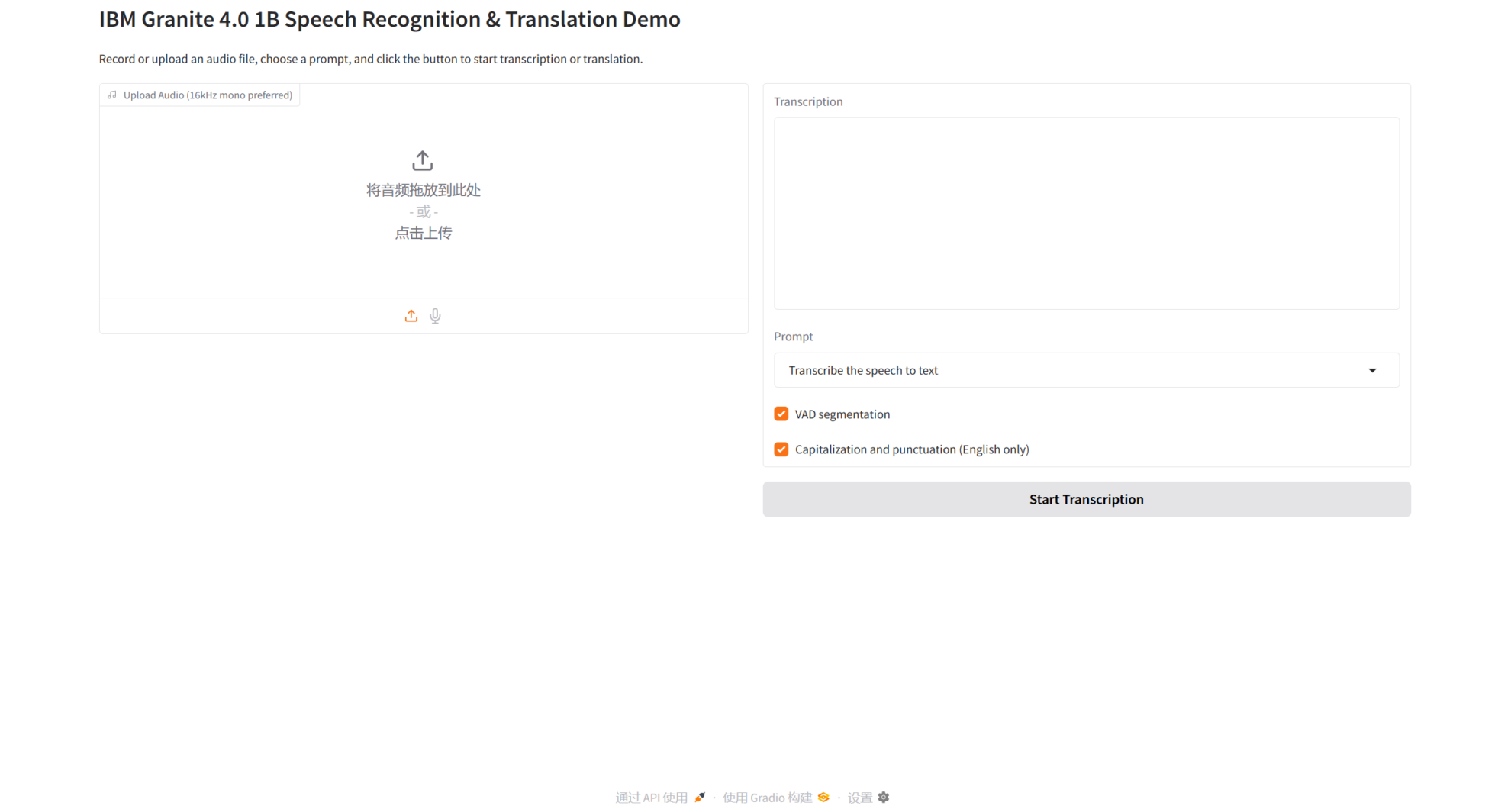

9. Granite 4.0 1B Speech: Offline Speech Recognition and Translation Deployment

IBM Granite released Granite 4.0 1B Speech in March 2026. It is a compact speech model with approximately 1 billion parameters, designed for multilingual automatic speech recognition and two-way speech translation, supporting multiple languages including English, French, German, Spanish, Portuguese, and Japanese. This model emphasizes deployment on resource-constrained devices and is well-suited for offline service workflows built on local weighted directories and standardized service interfaces.

Run online:https://go.hyper.ai/kzFhl

Community article interpretation

1. Cornell University has developed EMSeek, a multi-agent platform that can transform electron microscope images into materials science insights in just 2-5 minutes.

A research team from Cornell University proposed EMSeek, a modular multi-agent platform with source tracing capabilities. Evaluation results on 20 material systems and five task categories show that it achieves approximately twice the speed and higher accuracy of Segment Anything in segmentation tasks. Furthermore, with calibration using only about 2% labeled data, it meets or exceeds the performance of strong single-expert models on three out-of-distribution property prediction benchmarks. A complete query takes only 2 to 5 minutes per image, approximately 50 times faster than an expert workflow.

View the full report:https://go.hyper.ai/1OlNI

2. Achieving 1.4-3.7x inference speedup, MIT proposes DRiffusion to overcome the sampling latency bottleneck of diffusion models.

Researchers at MIT have proposed the DRiffusion draft-refined diffusion model, which combines the advantages of system-level and mathematical methods to achieve significant acceleration without sacrificing generation quality. This provides a novel solution for balancing high fidelity and sampling efficiency in diffusion models.

View the full report:https://go.hyper.ai/lbzzK

Popular Encyclopedia Articles

1. Skills

2. Underfitting

3. Glitch Token (a term used to describe a glitch-related term)

4. Ground Truth

5. Reciprocal Rank Fusion

Here are hundreds of AI-related terms compiled to help you understand "artificial intelligence" here:

The above is all the content of this week’s editor’s selection. If you have resources that you want to include on the hyper.ai official website, you are also welcome to leave a message or submit an article to tell us!

See you next week!