Tutorial Summary | Open-Source Small Models Match GPT-5 in Overall Intelligence: One-Stop Evaluation of Popular Models Such as Qwen 3.5 and Gemma 4

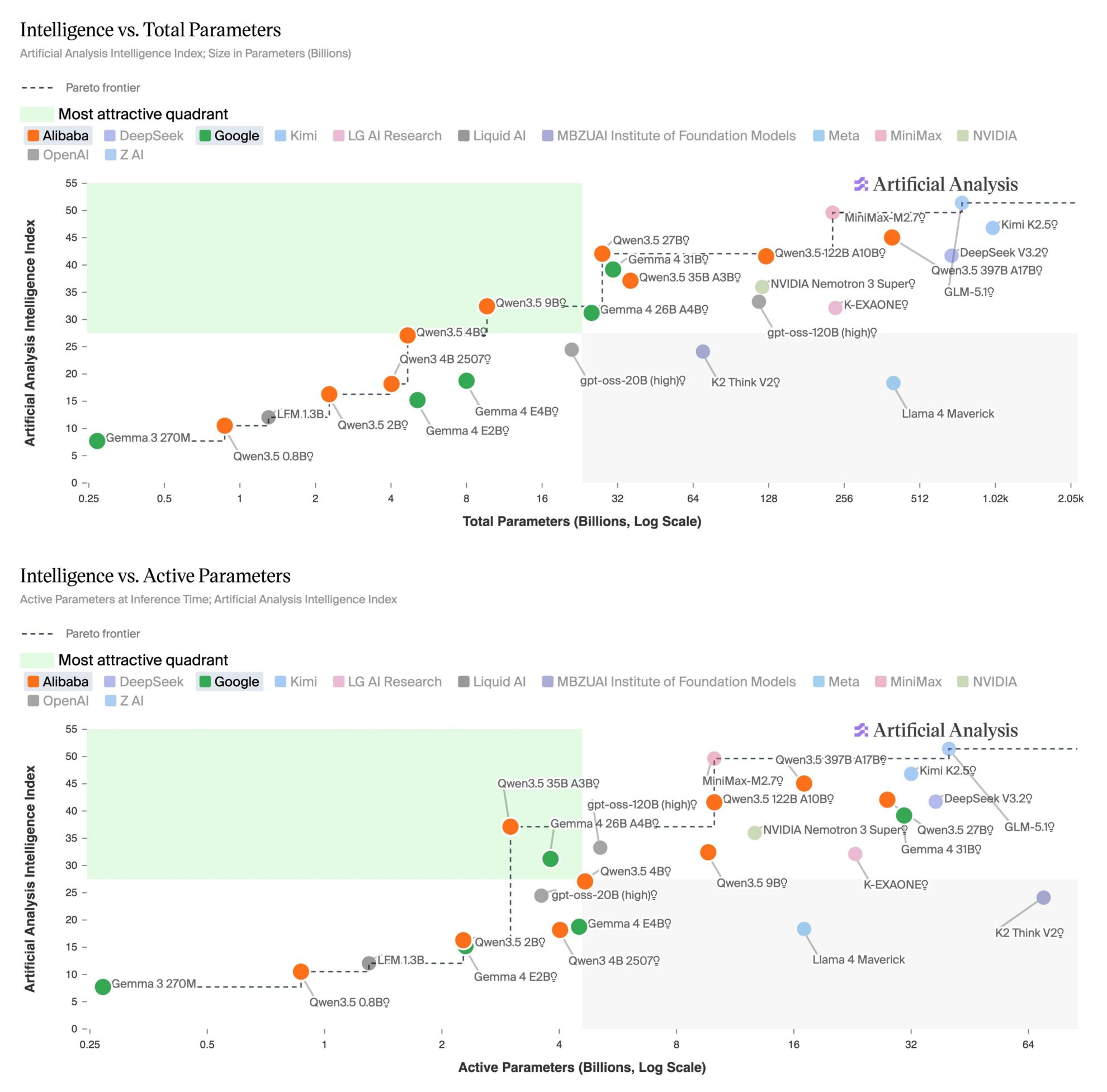

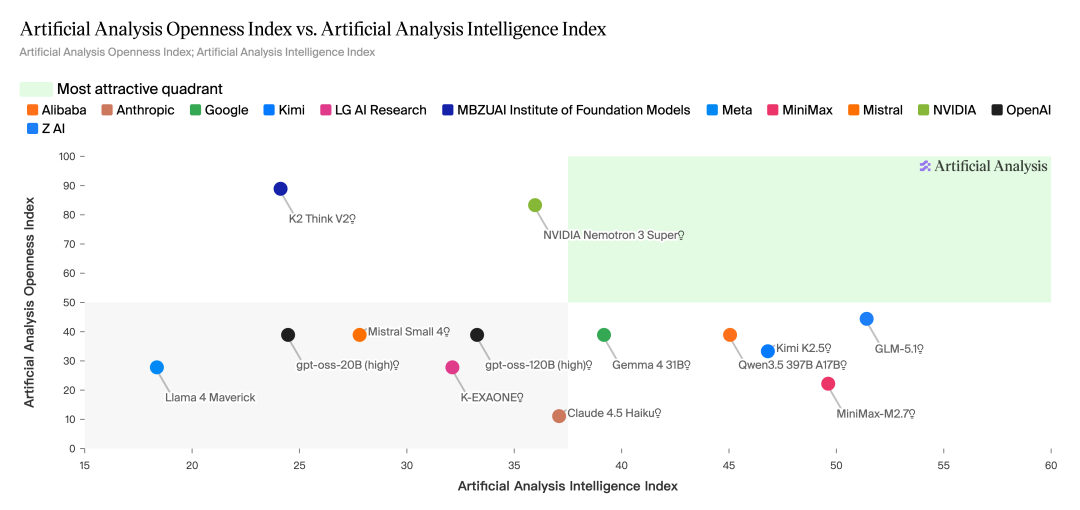

On April 14, the third-party evaluation organization Artificial Analysis released a comparative report on open-source models with fewer than 32B parameters. It shows that two small models, Qwen3.5 27B and Gemma 4 31B, have caught up with the corresponding GPT-5 variants in overall intelligence. Qwen3.5 27B (reasoning version) scored 42 points on the Intelligence Index, comparable to GPT-5 (medium); Gemma 4 31B (reasoning version) scored 39 points, matching GPT-5 (low).

The report indicates that this generation of 32B-class models shows significant improvements in reasoning ability and agent performance. Qwen3.5 27B scored 55 on the Agentic Index, surpassing the 46 points of GPT-5 (medium); Gemma 4 31B also outperforms GPT-5 (low) on complex tasks such as TerminalBench Hard and HLE. Furthermore, both models natively support multimodal input and rank among the top open-source models in their class on visual understanding tasks such as MMMU-Pro.

However, the smaller models still lag significantly behind in knowledge accuracy and hallucination control. The two models score -42 and -45 on the AA-Omniscience index, respectively, while the corresponding GPT-5 versions score -10, which shows that parameter count continues to affect how much knowledge a model can retain.

At the deployment level, these models have become significantly more practical. Both can run on a single NVIDIA H100 and, with quantization, can be deployed locally on personal devices, lowering the barrier to entry. Meanwhile, the open-weights community as a whole is rapidly catching up with the frontier, with large models such as GLM-5.1 narrowing the gap to single digits.
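The single-GPU claim can be sanity-checked with rough arithmetic. The sketch below estimates the memory needed for the weights alone at a given precision. It assumes the 80 GB H100 variant and ignores the KV cache, activations, and runtime overhead, so treat the results as a lower bound rather than a deployment guarantee. The function name `weight_memory_gb` is illustrative and not taken from the report.

```python
def weight_memory_gb(params_billion: float, bits_per_weight: float) -> float:
    """Approximate memory for the weights alone, in GB (1 GB = 1e9 bytes)."""
    bytes_total = params_billion * 1e9 * bits_per_weight / 8
    return bytes_total / 1e9


H100_MEMORY_GB = 80  # assumption: the 80 GB H100 variant

for name, params in [("Qwen3.5 27B", 27), ("Gemma 4 31B", 31)]:
    bf16 = weight_memory_gb(params, 16)  # unquantized 16-bit weights
    q4 = weight_memory_gb(params, 4)     # idealized 4-bit quantization
    fits = bf16 < H100_MEMORY_GB
    print(f"{name}: 16-bit ~{bf16:.1f} GB, 4-bit ~{q4:.1f} GB, "
          f"16-bit weights fit on one H100: {fits}")
```

Real 4-bit formats store extra scale data per block, so actual file sizes come out somewhat larger than the idealized 4-bit figure.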

To help developers quickly get started with and validate the latest open-source models, HyperAI continuously updates online deployment notebooks for popular models in the "Tutorials" section of its official website. This article summarizes the high-quality open-source models mentioned in the Artificial Analysis report along with their one-click deployment tutorials. Come and experience performance that approaches that of closed-source models!

More online tutorials:

Visit our official website for more information:

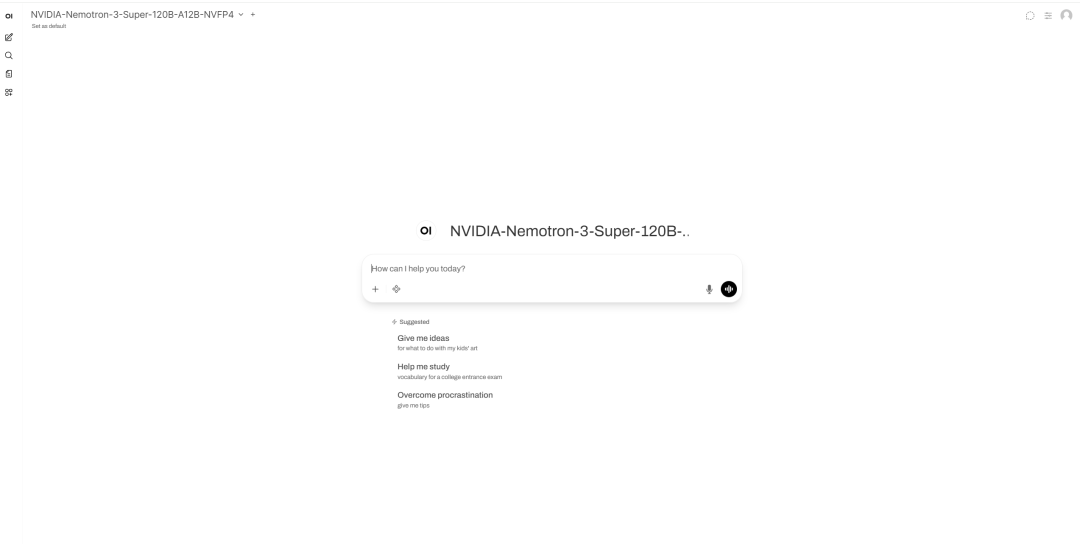

NVIDIA-Nemotron-3-Super-120B

NVIDIA Nemotron 3 Super NVFP4 was released by NVIDIA in March 2026. It is a large language model with 120B total parameters and 12B active parameters, built on a LatentMoE hybrid architecture and supporting contexts of up to 1M tokens.

The model is designed for long-context reasoning, agent workflows, tool calling, retrieval-augmented generation (RAG), and high-throughput question answering. In terms of interaction, it supports turning reasoning mode on or off, switching between normal question answering and reasoning-enhanced responses through standardized chat template parameters.
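As a rough illustration of what switching modes through chat template parameters can look like, the sketch below builds an OpenAI-style request payload with a reasoning toggle. Everything specific here is an assumption: the `chat_template_kwargs` field is accepted by some OpenAI-compatible servers such as vLLM, the flag name `enable_thinking` is a guess at the template variable, and the model name is hypothetical. Check the model card for the exact parameter before relying on it.

```python
import json


def build_chat_request(prompt: str, reasoning: bool) -> dict:
    """Build an OpenAI-style chat completion payload with a reasoning toggle."""
    return {
        "model": "nemotron-3-super",  # hypothetical served model name
        "messages": [{"role": "user", "content": prompt}],
        # Assumption: the serving stack forwards these values to the chat
        # template, and the template reads a boolean named `enable_thinking`.
        "chat_template_kwargs": {"enable_thinking": reasoning},
    }


# Plain question answering: reasoning mode off.
print(json.dumps(build_chat_request("Summarize this log file.", reasoning=False), indent=2))
```

Keeping the toggle in the request body, rather than in the prompt text, lets the same client code serve both modes.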

Run online:

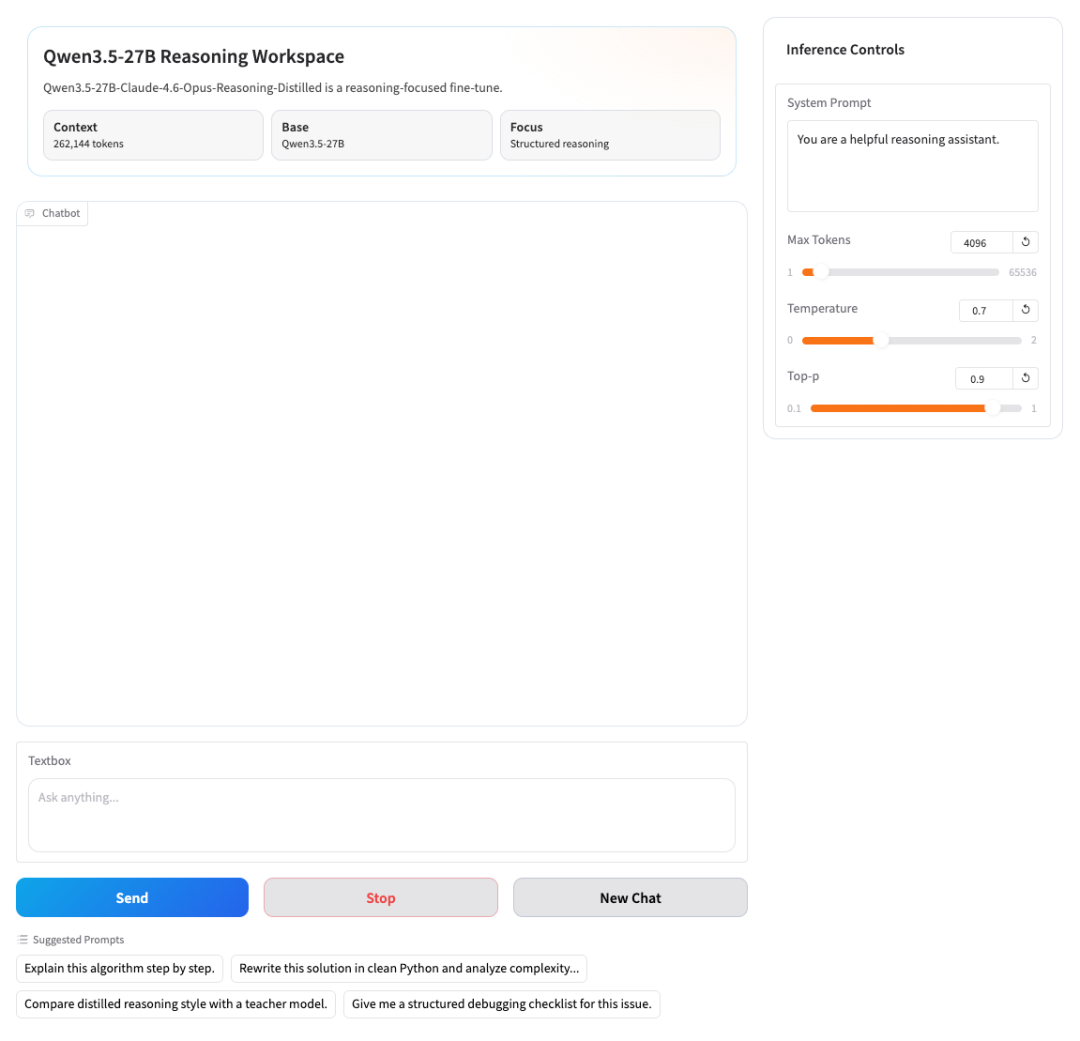

Qwen3.5-27B-Claude-4.6-Opus-Reasoning-Distilled

In March 2026, Jackrong open-sourced a high-performance reasoning model, Qwen3.5-27B-Claude-4.6-Opus-Reasoning-Distilled. It is built on the Qwen3.5-27B architecture and integrates advanced reasoning capabilities distilled from Claude 4.6 Opus. While retaining the base model's strong language understanding and expression, it significantly improves performance on complex problem solving and multi-turn dialogue.

In terms of core capability, the model upgrades its reasoning ability through high-quality chain-of-thought distillation, which makes it particularly strong in mathematical derivation, logical analysis, planning and decision-making, and multi-step task decomposition. Rather than only generating answers, it analyzes problems step by step in a structured manner and breaks complex tasks into clear, executable logical steps, improving overall reasoning stability and the reliability of results.

Run online:

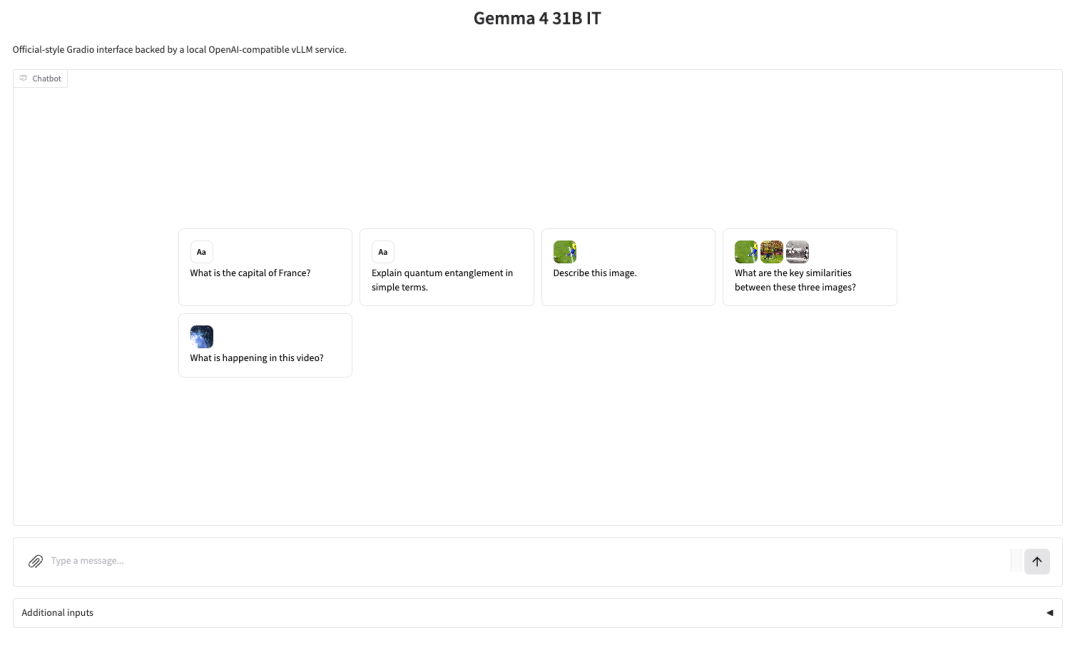

Gemma-4-31B-it

Google DeepMind's open-source Gemma 4 series is built on the same technology as Gemini 3. It not only ranked among the top three on the Arena AI leaderboard, but also achieved performance close to, or even surpassing, that of larger models while using far fewer parameters than its competitors.

As a product, Gemma 4 is not a single model but a multi-size family spanning E2B, E4B, 26B A4B, and 31B, targeting different scenarios such as mobile devices, local deployment, and high-performance computing environments. The 31B version, the current performance ceiling of the series, has a capability level that can even rival that of Qwen 3.5 397B.

In terms of application scenarios, the 31B version accepts image and text input and produces text output, has a context window of up to 256K tokens, and natively supports reasoning, function calling, and system prompts. It also supports more than 140 languages, so it performs well in scenarios such as high-quality question answering, code assistance, and agent services.

Run online:

CPU Deployment of Qwen3.5-9B-GGUF

Qwen3.5 is a new generation of multimodal large language models released by Alibaba's Tongyi Qianwen team. It accepts text and image input and generates text output, targeting tasks such as dialogue, reasoning, programming, and visual understanding. Qwen3.5-9B is the 9B-parameter version of the series; it strikes a balance between capability and deployment cost and is suitable for edge or local inference in resource-constrained environments.

In this tutorial, we use community-provided GGUF weights (the Q4_K_M quantized version) together with a vision encoder (the mmproj GGUF file). We start an OpenAI-compatible backend service with llama.cpp and connect it to OpenWebUI to provide a browser-based interactive interface.
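Because the backend speaks the OpenAI chat API, any HTTP client can talk to it, not only OpenWebUI. The sketch below is a minimal client using only the Python standard library. It assumes the llama.cpp server is already running locally on port 8080 and exposes `/v1/chat/completions`; the base URL, the model name, and the helper names `build_request` and `ask` are illustrative and not taken from the tutorial.

```python
import json
import urllib.request

BASE_URL = "http://localhost:8080/v1"  # assumption: server running locally on port 8080


def build_request(prompt: str, base_url: str = BASE_URL) -> urllib.request.Request:
    """Prepare a POST request for the OpenAI-compatible chat endpoint."""
    payload = {
        "model": "qwen3.5-9b",  # hypothetical name; use whatever your server reports
        "messages": [{"role": "user", "content": prompt}],
    }
    return urllib.request.Request(
        f"{base_url}/chat/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def ask(prompt: str) -> str:
    """Send the prompt and return the assistant's reply text."""
    with urllib.request.urlopen(build_request(prompt), timeout=120) as resp:
        body = json.load(resp)
    return body["choices"][0]["message"]["content"]


# Usage (requires the server to be running):
# print(ask("Explain GGUF quantization in one sentence."))
```

CPU inference on a 9B model can be slow, so a generous timeout is used here; adjust it to your hardware.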

Run online:

Find more popular tutorials: