Command Palette

Search for a command to run...

Extremely Lightweight, yet With Undiminished Image Quality! ERNIE-Image-Turbo: Say Goodbye to Long Waits, lightning-fast Speed; Introducing dual-dimensional Metrics of Perception and Cognition: Alibaba's Unified Multimodal Parsing and Evaluation Dataset OmniParsingBench Is Now online.

ERNIE-Image-Turbo is a high-efficiency text-to-image model open-sourced by Baidu. Based on a single-stream diffusion transformer (DiT) architecture and deeply optimized with DMD and RL techniques, it can quickly generate high-fidelity, aesthetically pleasing images in just 8 inference steps. Its excellent lightweight design significantly lowers the hardware barrier for application and research.

While maintaining extremely high generation speed, this model demonstrates strong controllability and versatility. It can accurately execute instructions involving multiple objects and complex relationships, and greatly enhances its ability to render long, dense text and structured layouts, making it the ideal choice for poster design, multi-panel comics, and infographics. Furthermore, it fully supports various aesthetic styles, including realistic photography, design typography, and soft cinematic effects, making it an ideal tool that balances visual quality with industrial-grade creative efficiency.

The "ERNIE-Image-Turbo Raw Image Model" is now available on the HyperAI website. Give it a try!

Online use:https://go.hyper.ai/hmKUg

Welcome to visit our official website for more information:

A quick overview of hyper.ai's official website updates from April 18th to April 24th:

* High-quality public datasets: 9

* A selection of high-quality tutorials: 5

* Community article analysis: 2 articles

* Popular encyclopedia entries: 5

* Top conferences with deadline in April: 1

Visit the official website:hyper.ai

Selected public datasets

1. OmniParsingBench Multimodal Parsing Capability Evaluation Dataset

OmniParsingBench, released by Alibaba in 2026, is a benchmark dataset for evaluating the unified parsing capabilities of Multimodal Large Models (MLLM). This dataset contains approximately 5,294 samples, covering six modal domains (natural images, graphics, documents, audio, natural video, and text-dense video), and introduces three levels of evaluation metrics: perception (Perc.), cognition (Cog.), and overall (Ovr.). Each dataset includes an image or audio/video input and a corresponding structured parsing task.

Online use:https://go.hyper.ai/AqyDg

2.BRIGHT Disaster Building Assessment Dataset

BRIGHT is the first open-access, globally distributed, multimodal global disaster scene benchmark dataset with diverse event types, integrating optical imagery and SAR (Synthetic Aperture Radar) data. This dataset covers 14 regions and 7 types of disasters (5 natural disasters + 2 man-made disasters), containing approximately 4,200 paired imagery pairs, involving over 380,000 building instances, with a spatial resolution of approximately 0.3–1 meter. The data consists of pre-disaster imagery, post-disaster imagery, and target annotations.

Online use:https://go.hyper.ai/RifVg

3. Flower: A dataset of images of flowers in Bangladesh

The Flower Bangladesh Flower Image Dataset is a dataset designed for computer vision image classification tasks. This dataset contains real-world images of various flower species taken in Bangladesh. All images are original, non-synthetic, and were captured under natural lighting conditions, exhibiting rich color variations. The dataset covers a wide range of local flower varieties and their appearance characteristics, and is labeled by category.

Online use:https://go.hyper.ai/wirun

4. MIA Multi-Step Inference and Decision Trajectory Dataset

The MIA (Multi-Step Reasoning and Decision Trajectory) dataset, jointly released in April 2026 by East China Normal University, Shanghai Innovation Research Institute, and Harbin Institute of Technology, is a dataset used to train and evaluate intelligent agents with long-term memory and task execution capabilities. This dataset contains approximately 21,000 reasoning trajectories, covering the entire process of problem solving, planning, searching, and execution, and is suitable for agent reasoning and reinforcement learning research.

Online use:https://go.hyper.ai/XITit

5. PanScale Remote Sensing Pancolor Sharpening Dataset

PanScale is a benchmark dataset for large-scale inference and capability assessment, released in 2026 by the Chinese Academy of Sciences in conjunction with the University of Science and Technology of China and the Hong Kong University of Science and Technology. This dataset contains 7,559 pairs of multispectral (MS) and panchromatic (PAN) images in 8-bit TIFF format. It covers multiple subsets including jilin, landsat, and skysat, and extends to cross-scale versions such as fjilin, flandsat, and fskysat, supporting system evaluation of scenes from the same scale to multiple scales (up to 4.0x).

Online use:https://go.hyper.ai/mz2gh

6. Emotion-probes emotion detection dataset

Emotion-probes is a synthetic text dataset designed for emotion understanding and model interpretability research. It aims to extract emotion vectors and emotion masking capabilities from models, and is widely used in emotion classification, model alignment, security research, and analysis of the internal mechanisms of large models. The dataset contains approximately 447,000 samples. Each sample includes fields such as true emotion, expressed emotion, text content, and role information.

Online use:https://go.hyper.ai/jw5FA

7. OpenMementos Context Memory Compressed Dataset

OpenMementos is a context-memory compression dataset released by Microsoft in 2026, designed for modeling long-chain inference and context management capabilities of large models. This dataset aims to train models to perform context compression and continuous inference, thereby supporting complex multi-step inference tasks within a limited context window. It is widely applicable to research scenarios such as long-chain inference modeling, memory-enhanced model training, and efficient generation.

Online use:https://go.hyper.ai/RwCkt

8. ParseBench document parsing capability evaluation dataset

The ParseBench document parsing capability evaluation dataset was released by the LlamaIndex team in 2024–2025. This dataset contains approximately 2,000 manually validated and annotated pages and 169,011 test rules across five dimensions. These pages are taken from publicly available enterprise documents covering insurance, finance, government, and other sectors, encompassing various page types including PDFs, scanned images, and pages containing tables and page layouts. Standardized parsing results are provided aligned with human annotations to evaluate the model's performance in structural understanding and information extraction.

Online use:https://go.hyper.ai/FfFR6

9. SOHL-multidish-yolo dataset for detecting multidish Indian foods.

SOHL Multi-Dish YOLO is a food recognition dataset for multi-object detection tasks in computer vision. Built based on the YOLOv8 annotation specification, it focuses on the problem of detecting multiple dishes in complex scenes. The dataset contains 377 annotated images with 377 corresponding annotations, covering 16 food categories. Each image contains 2–6 food objects, exhibiting characteristics such as overlap, multi-scale, and complex layouts.

Online use:https://go.hyper.ai/u5Lng

Selected Public Tutorials

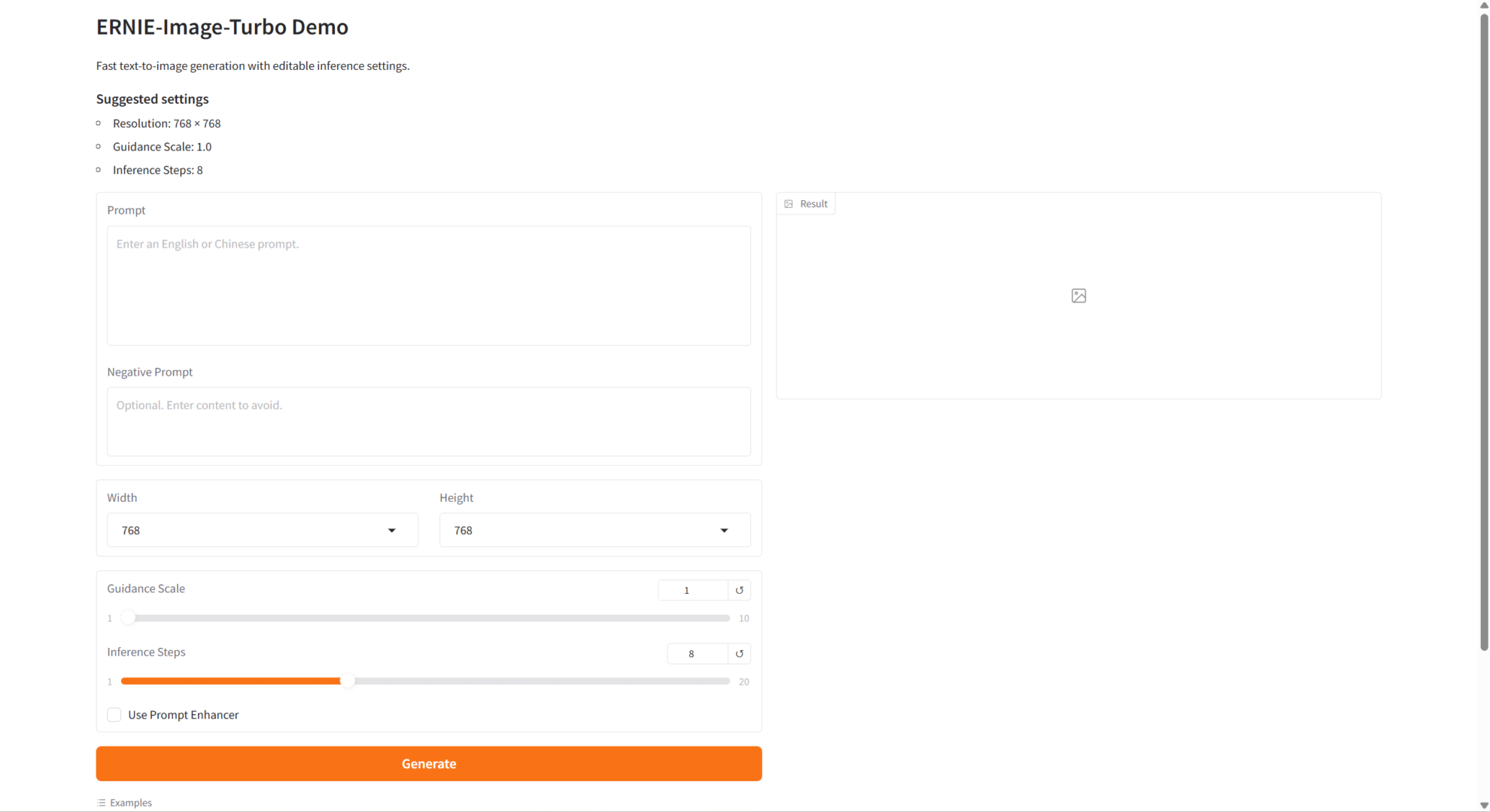

1. ERNIE-Image-Turbo Raw Image Model

ERNIE-Image-Turbo is an open-source text-to-image generation model released by the Baidu ERNIE-Image team in April 2026. ERNIE-Image-Turbo features complex instruction tracing, text rendering, poster layout generation, structured image generation, and broad style coverage, making it suitable for creative content workflows such as poster design, illustration generation, and interface concept sketching.

Run online:https://go.hyper.ai/hmKUg

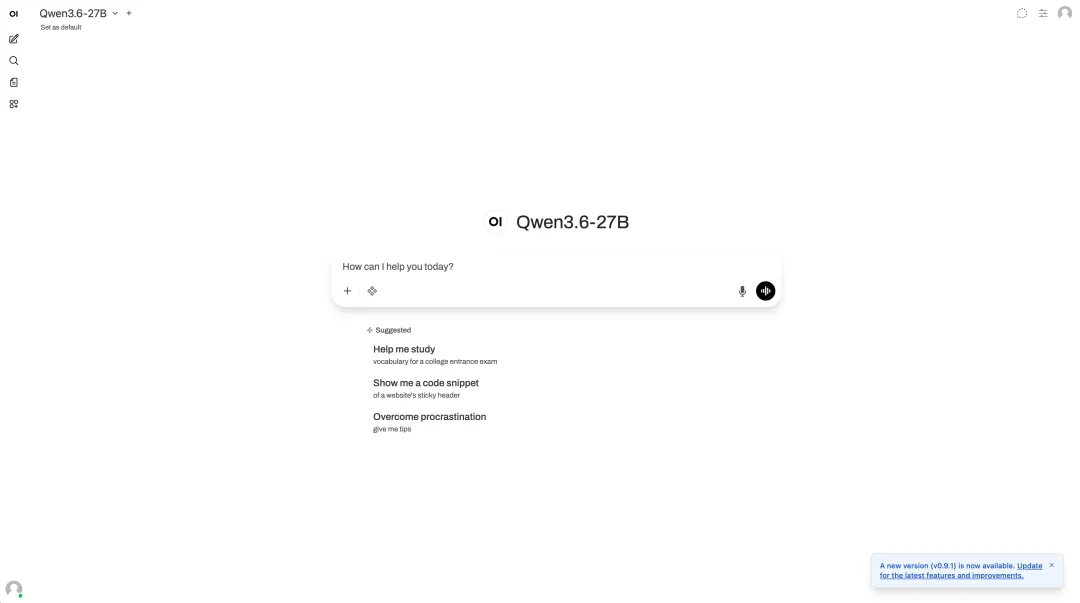

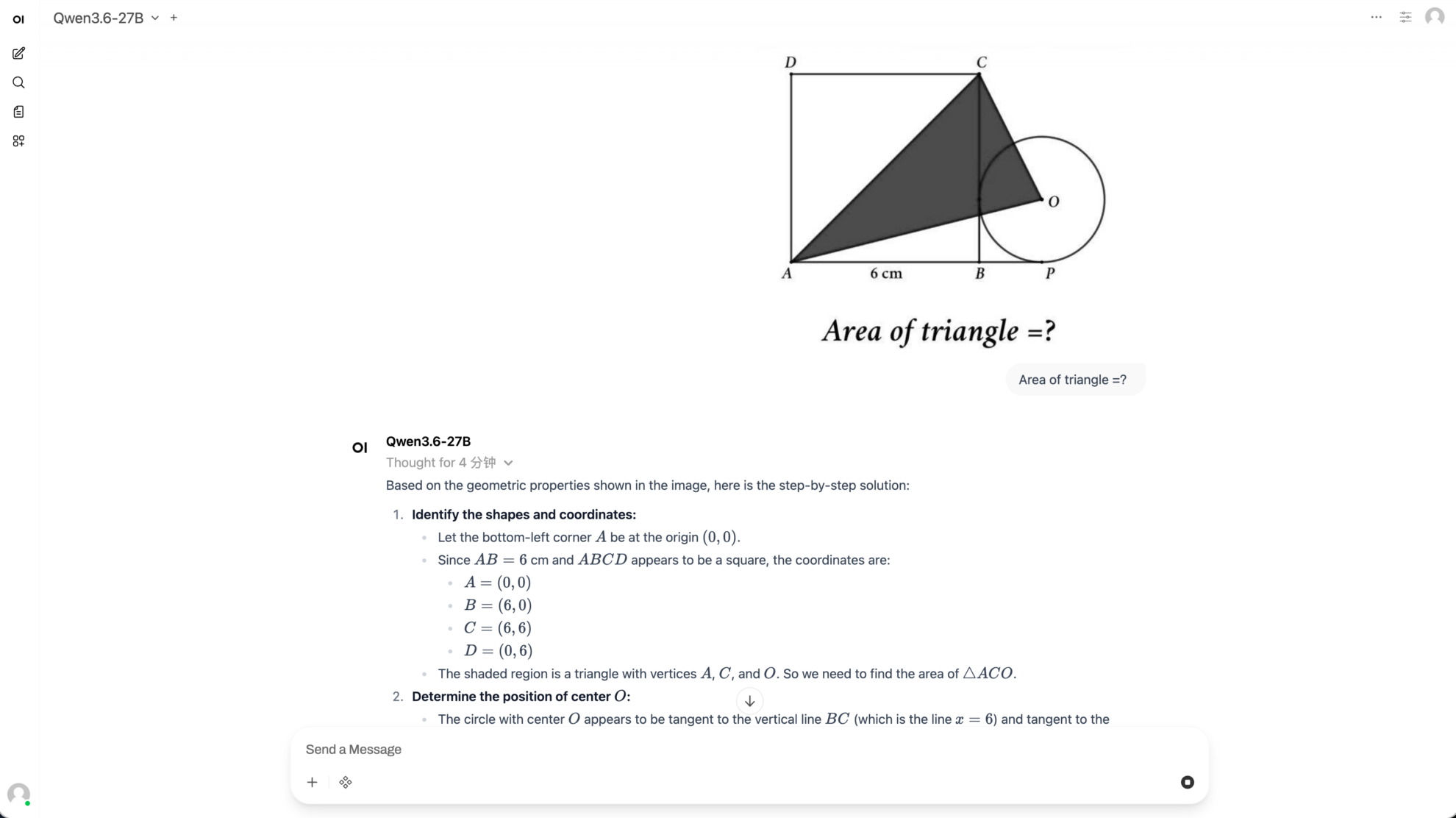

2. One-click deployment of Qwen 3.6-27B

Qwen3.6-27B is a dense multimodal model with 27 billion parameters, open-sourced by the Tongyi Qianwen team. This model still supports multimodal thinking and non-thinking modes, achieving flagship-level performance in agent programming, comprehensively surpassing its predecessor, the open-source flagship Qwen3.5-397B-A17B. As a dense architecture, it can be deployed without MoE routing, making it an ideal choice for developers seeking top-tier programming capabilities in a practical and widely deployable manner.

Run online:https://go.hyper.ai/GU9S2

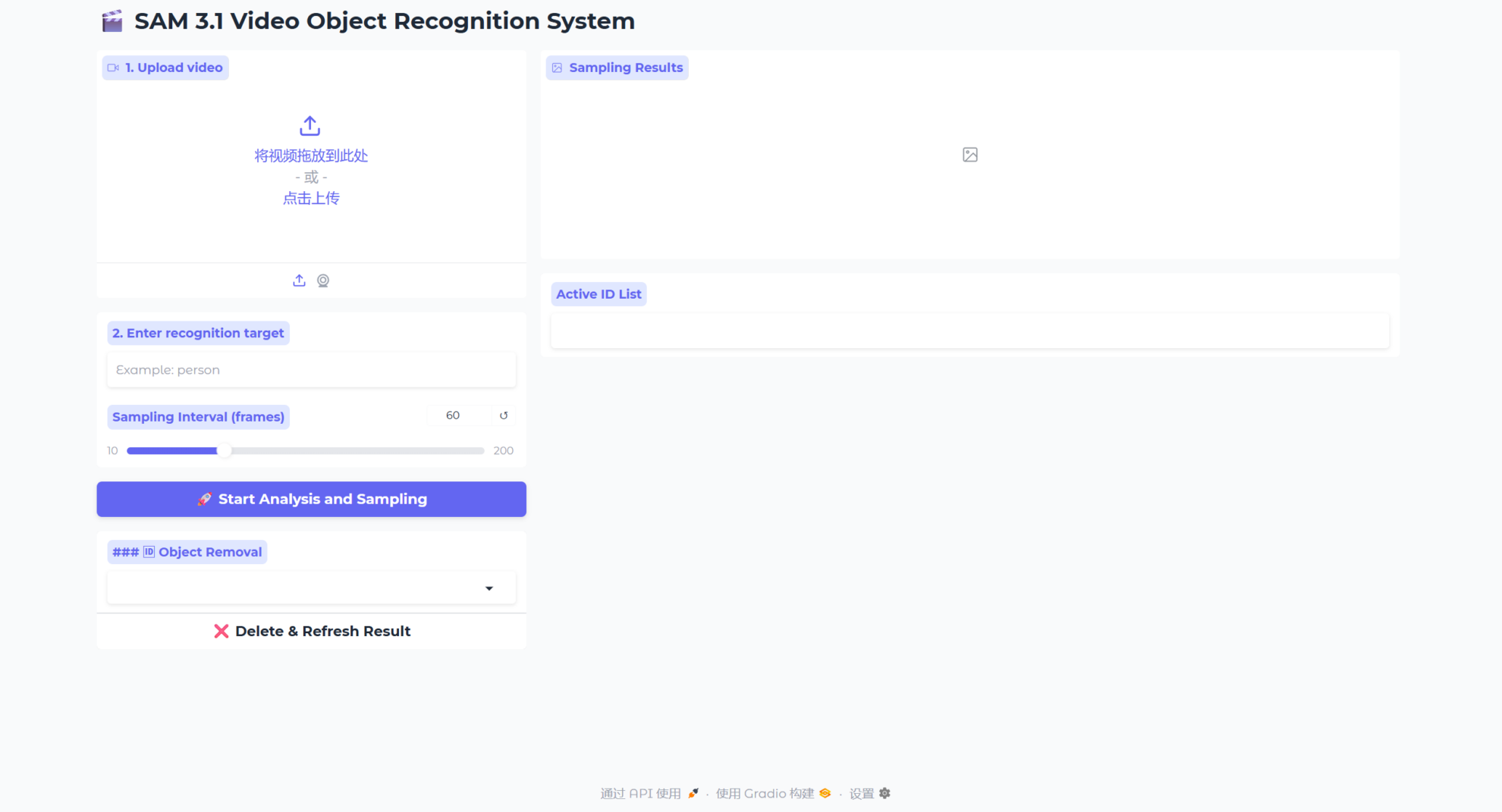

3. SAM3.1: Video Multi-Object Tracking and Segmentation

SAM3.1 (Segment Anything Model 3.1) is an open-vocabulary object tracking and segmentation system for video. This model achieves efficient multi-object video tracking by introducing object multiplexing technology.

Run online:https://go.hyper.ai/3e5qL

4. Qwen3.6-35B-A3B: A powerful tool for programming intelligent agents.

In April 2026, the Qwen team released the multimodal hybrid expert (MoE) model Qwen3.6-35B-A3B. This model has a total of 35 billion parameters, but only 3 billion parameters are activated in each inference, thus significantly reducing inference costs while maintaining high performance.

Run online:https://go.hyper.ai/Gc7bp

5. Building Neural Networks from Scratch: A NumPy Tutorial

This tutorial guides users to build a simple neural network framework from scratch using only the NumPy library, comprehensively covering core concepts from neurons, weights, forward propagation to hidden layers, activation, and loss functions. It also helps users understand the principles behind deep learning model construction, going beyond simply calling framework APIs.

Run online:https://go.hyper.ai/OmyS0

Community article interpretation

1. ICLR 2026 | 125x Reduction in Trainable Parameters per Task! New Method Task Tokens Helps Embodied Intelligence Enhance Complex Task Capabilities

A research team from the Technion – Israel Institute of Technology has proposed a method called Task Tokens, which effectively adapts BFM to specific tasks while maintaining its flexibility. Compared to standard baseline methods, the new method reduces the trainable parameters per task by up to 125 times and improves convergence speed by up to 6 times. The researchers also validated the effectiveness of Task Tokens on various tasks, including out-of-distribution scenarios, and demonstrated its compatibility with other cueing methods.

View the full report:https://go.hyper.ai/vs0C6

2. The University of Toronto and others proposed dnaHNet, which improves inference speed by 3 times and reduces the computational cost of genome learning by nearly 4 times.

The dnaHNet model, jointly proposed by the University of Toronto, the Vector Institute for Artificial Intelligence in Canada, and the Arc Institute in the United States, offers a new approach to achieving a better balance between computational feasibility and biological fidelity.

View the full report:https://go.hyper.ai/dRnYT

Popular Encyclopedia Articles

1. Skills

2. Ground Truth

3. Triplet Loss Function

4. Kolmogorov-Arnold Networks

5. Reciprocal Rank Fusion

Here are hundreds of AI-related terms compiled to help you understand "artificial intelligence" here:

The above is all the content of this week’s editor’s selection. If you have resources that you want to include on the hyper.ai official website, you are also welcome to leave a message or submit an article to tell us!

See you next week!

About HyperAI

HyperAI (hyper.ai) is the leading artificial intelligence and high-performance computing community in China.We are committed to becoming the infrastructure in the field of data science in China and providing rich and high-quality public resources for domestic developers. So far, we have:

* Provides domestic accelerated download nodes for 2100+ public datasets

* Includes 700+ classic and popular online tutorials

* Analyzing 300+ AI4Science Paper Cases

* Supports searching for 700+ related terms

* Hosting the first complete Apache TVM Chinese documentation in China

Visit the official website to start your learning journey: