Papers

Cutting-edge AI research papers, updated daily, to help you keep up with the latest AI trends

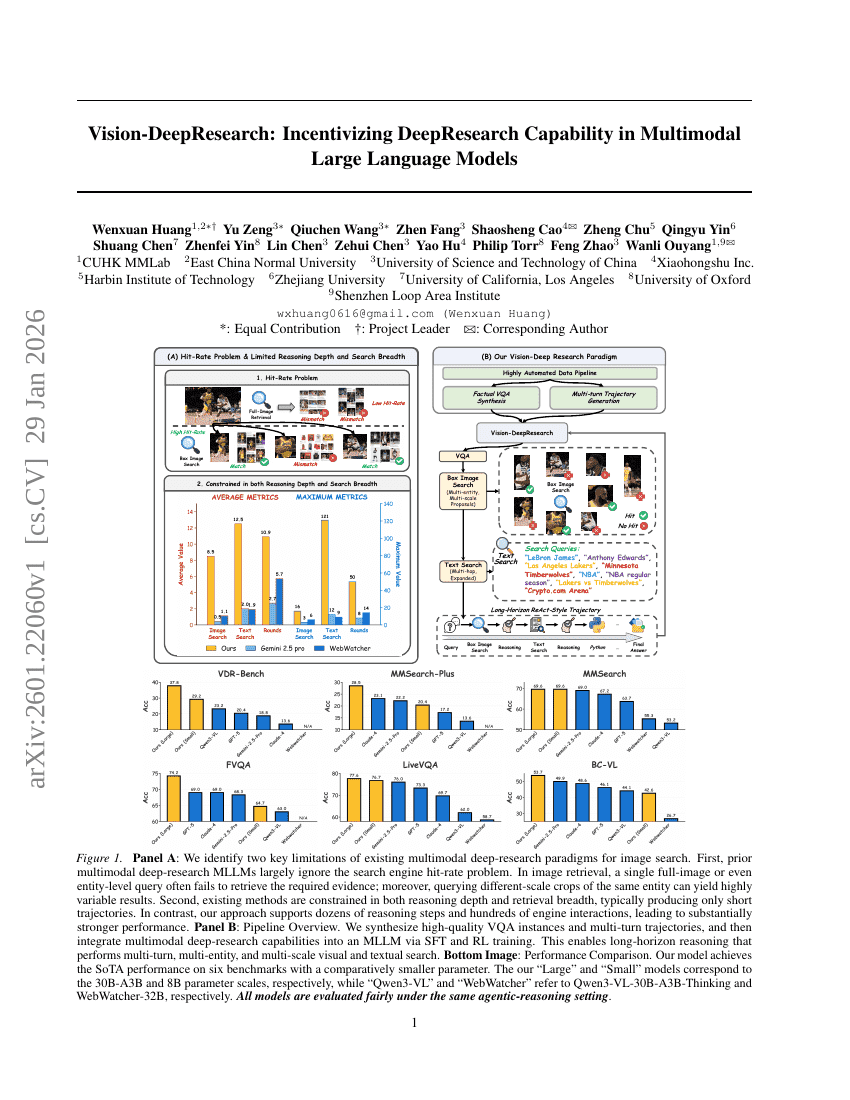

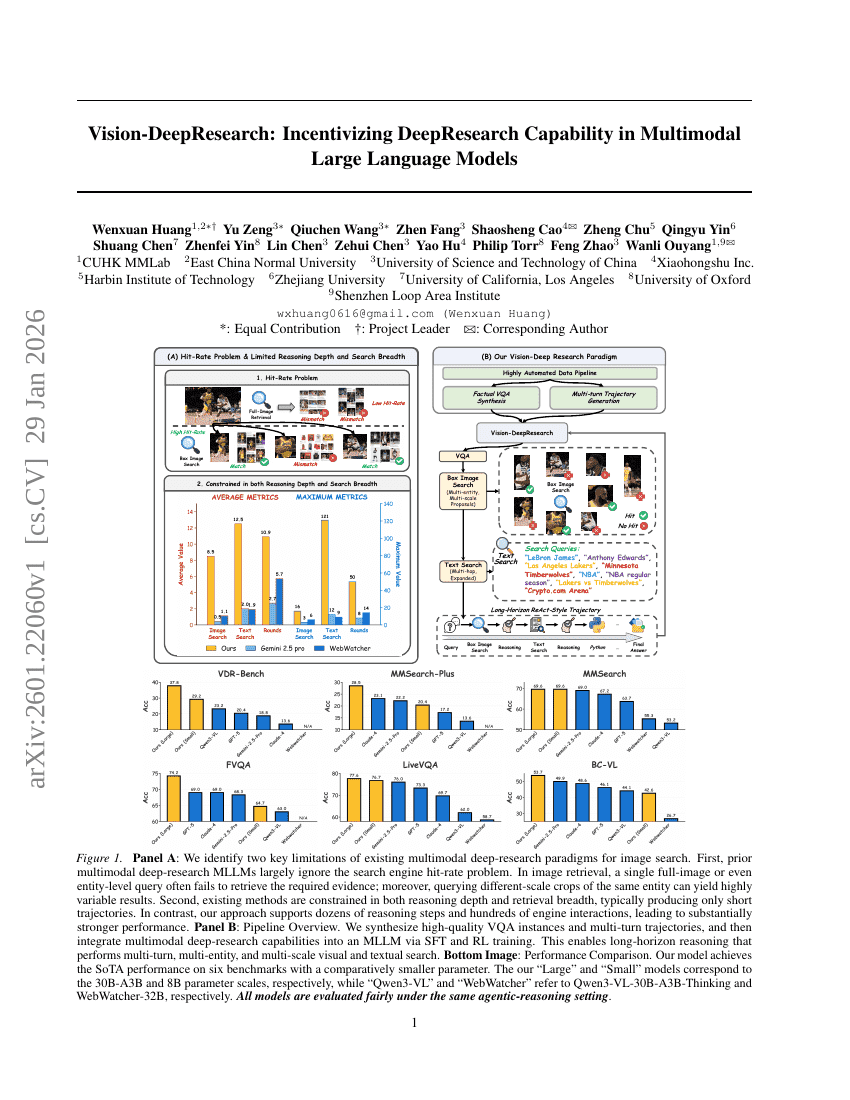

Vision-DeepResearch: Incentivizing DeepResearch Capability in Multimodal Large Language Models

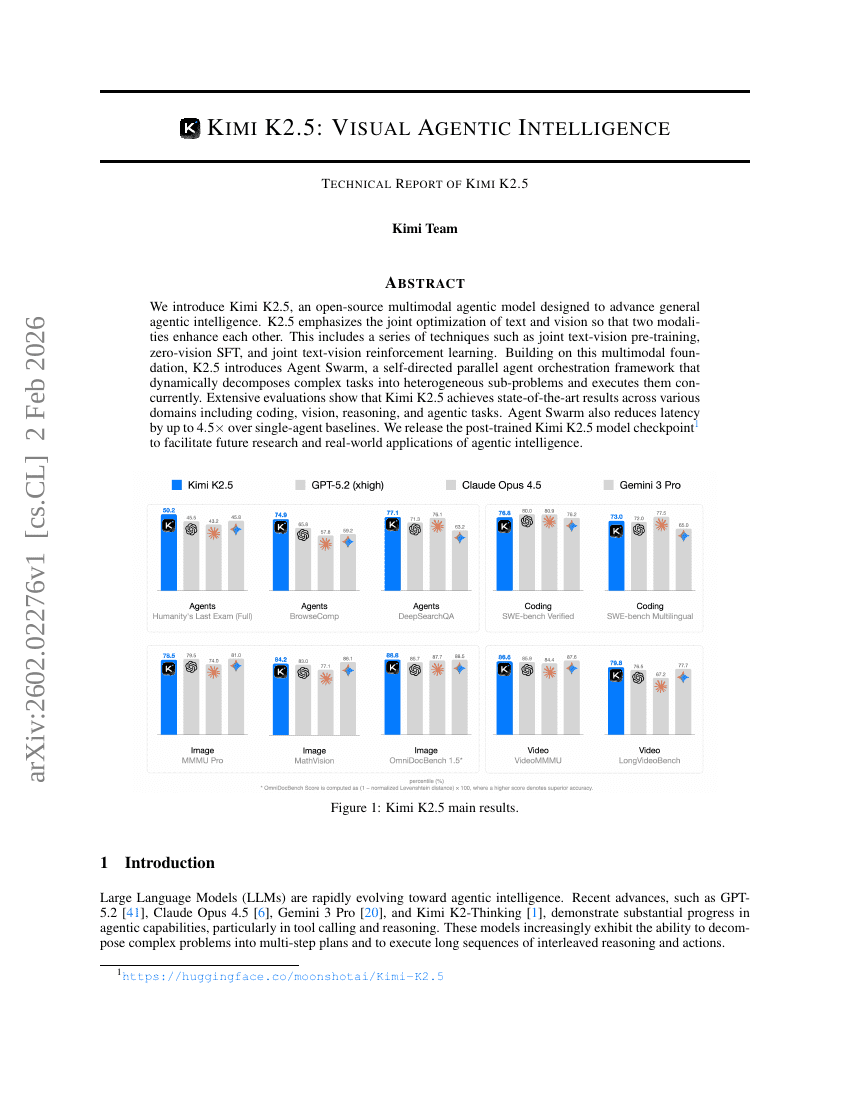

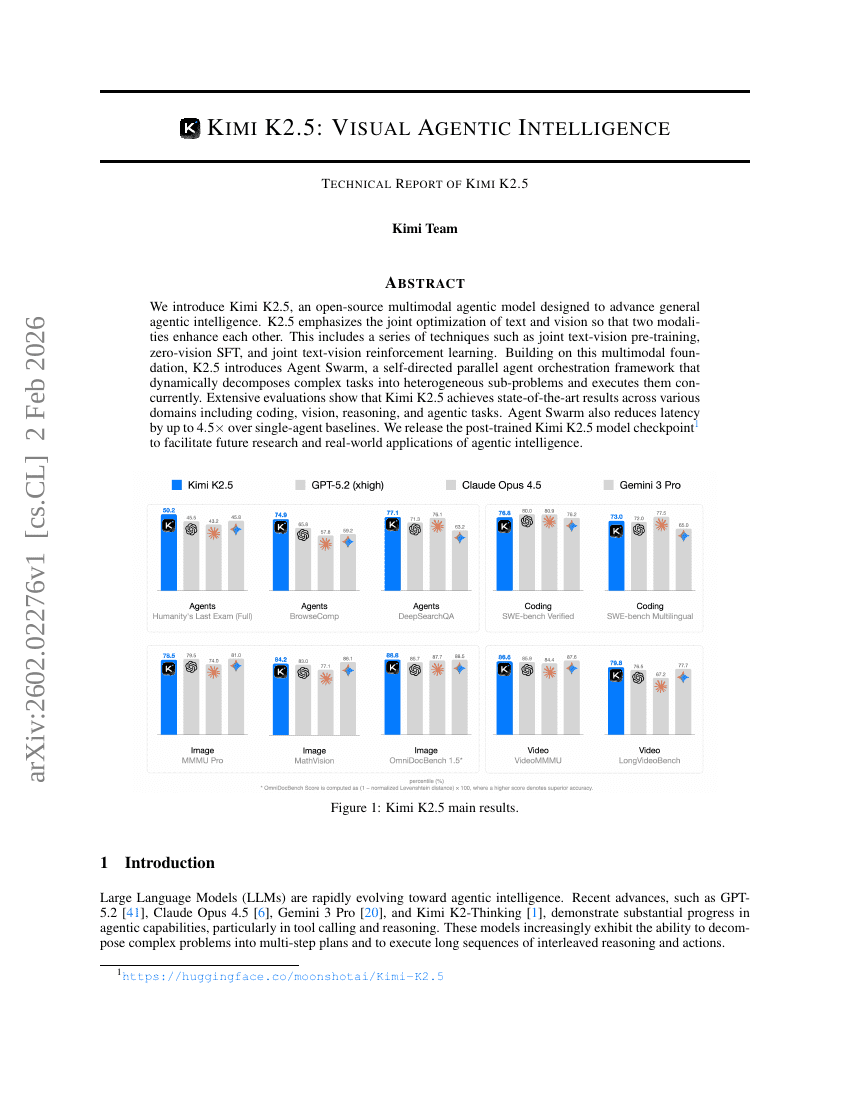

Kimi K2.5: Visual Agentic Intelligence

Green-VLA: Staged Vision-Language-Action Model for Generalist Robots

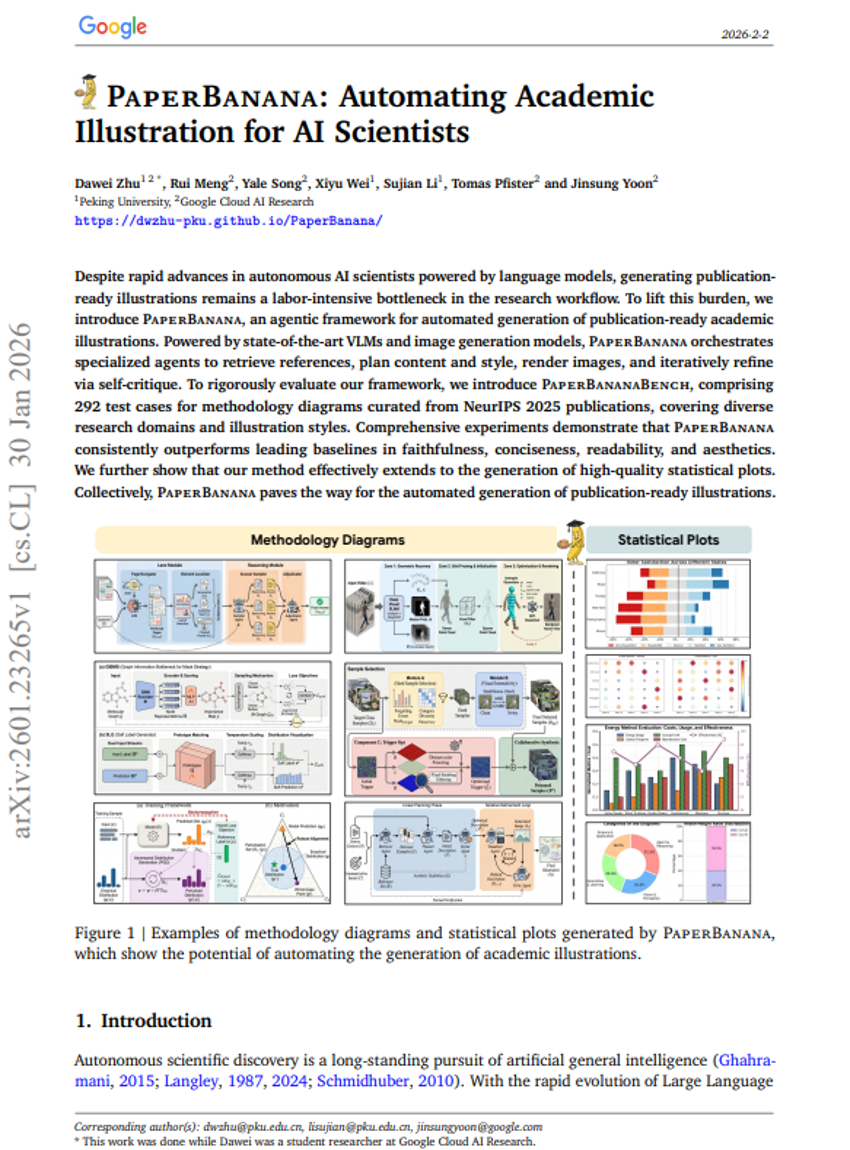

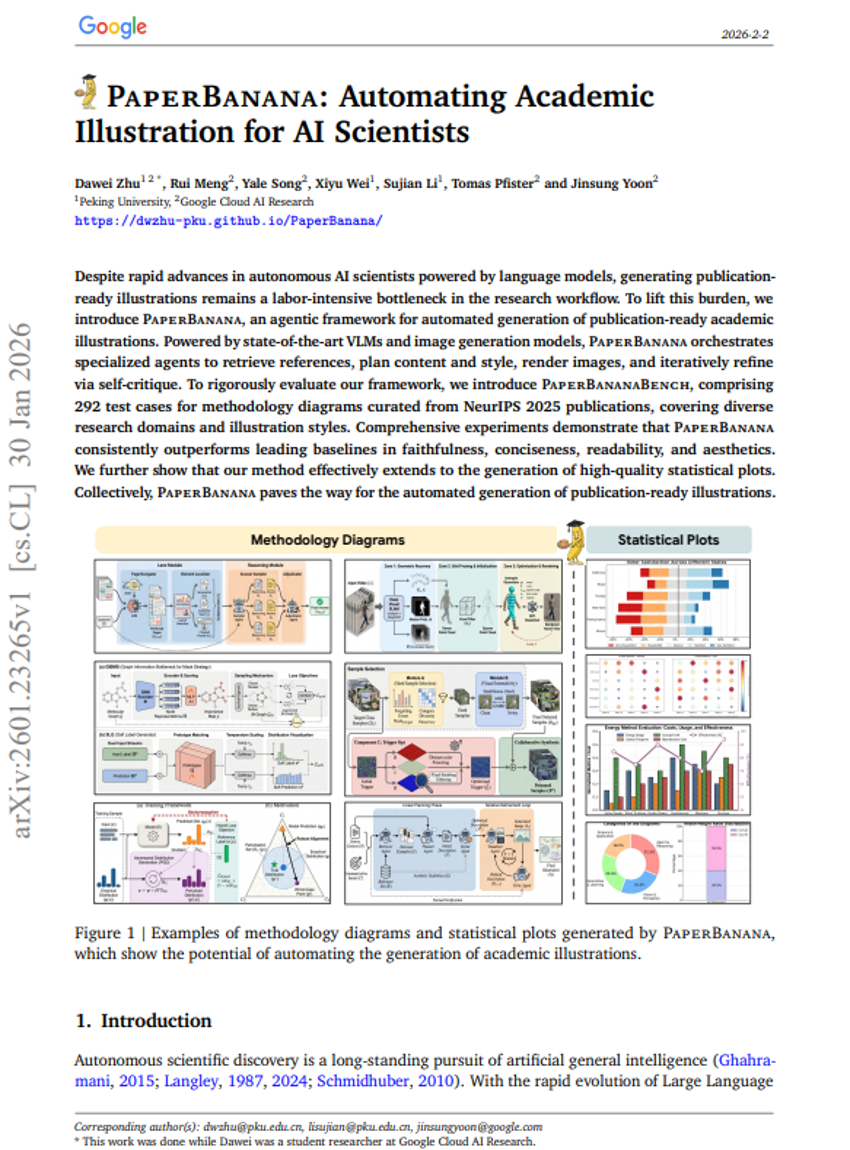

PaperBanana: Automating Academic Illustration for AI Scientists

Semi-Autonomous Mathematics Discovery with Gemini: A Case Study on the Erdős Problems

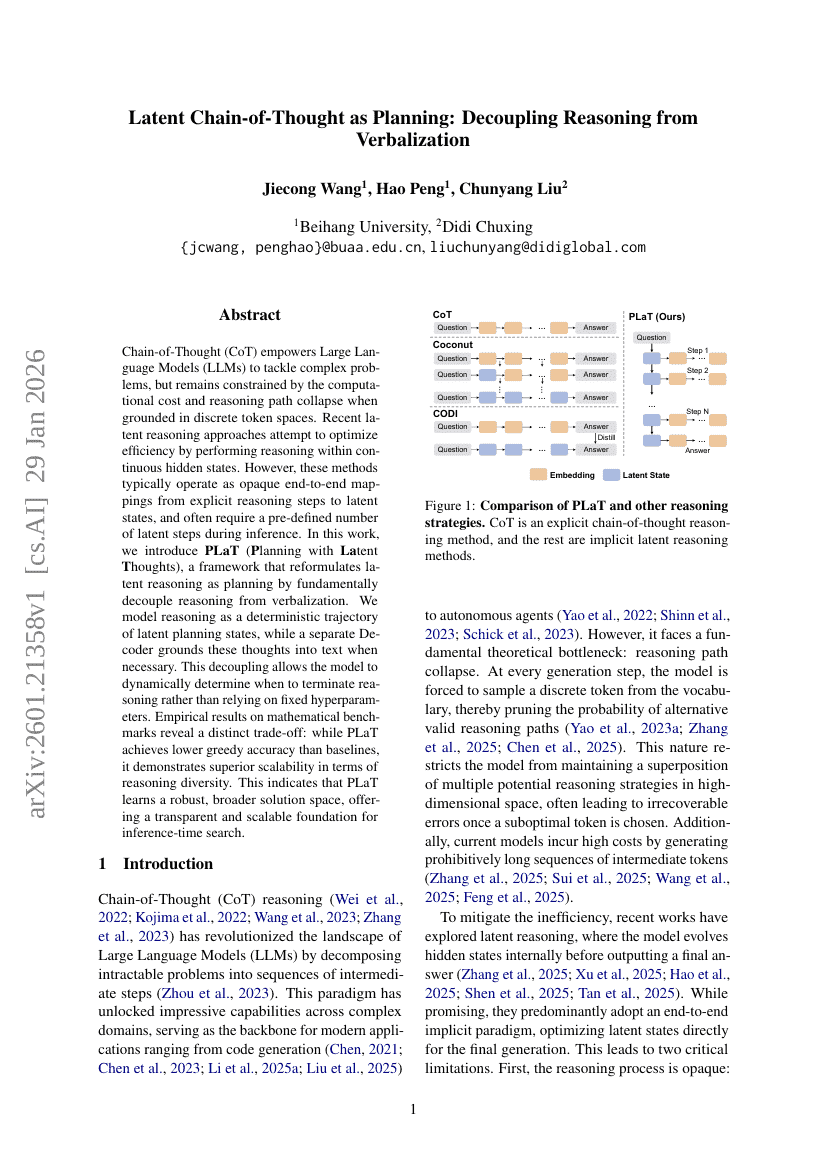

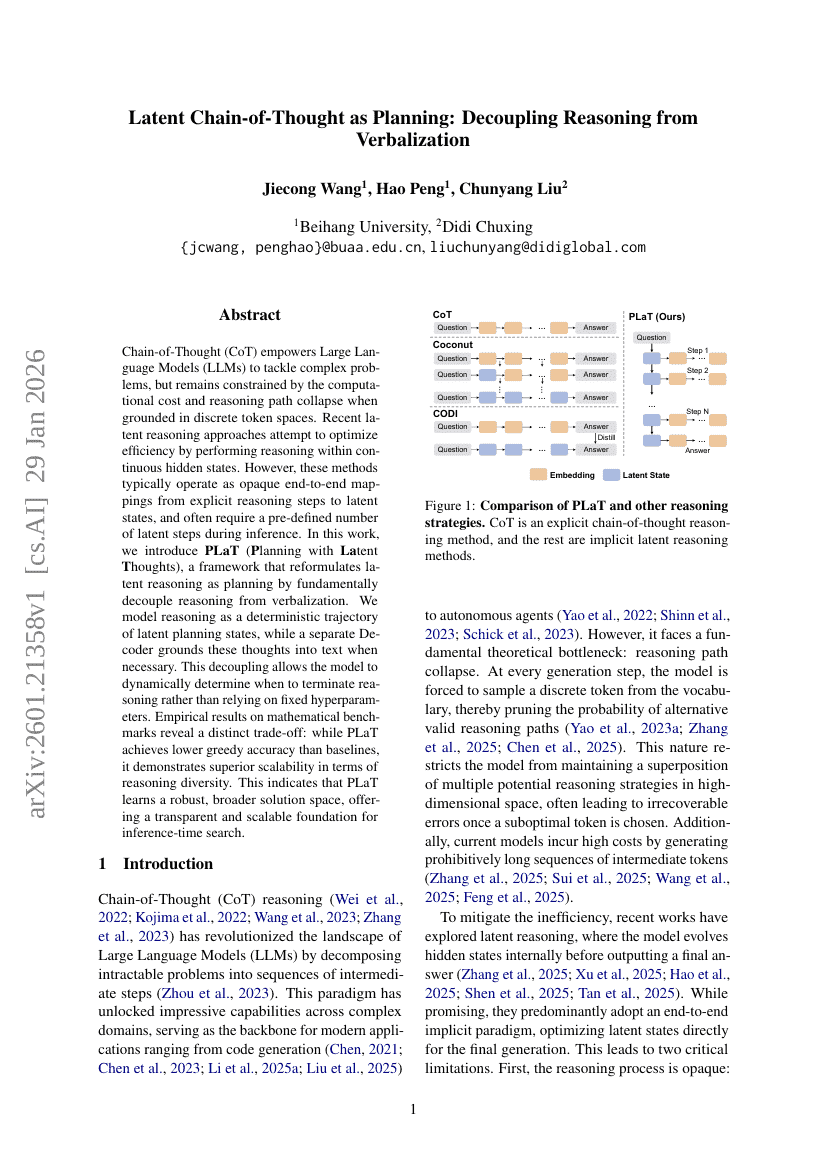

Latent Chain-of-Thought as Planning: Decoupling Reasoning from Verbalization

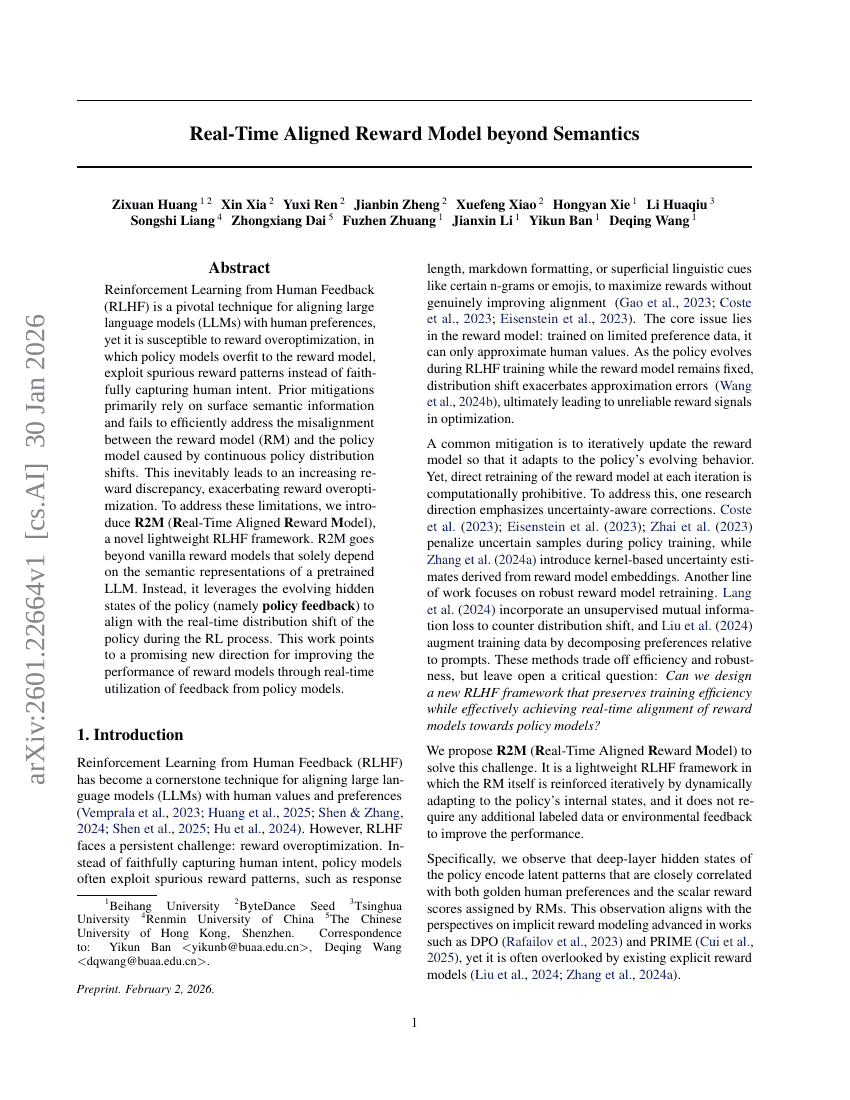

Real-Time Aligned Reward Model beyond Semantics

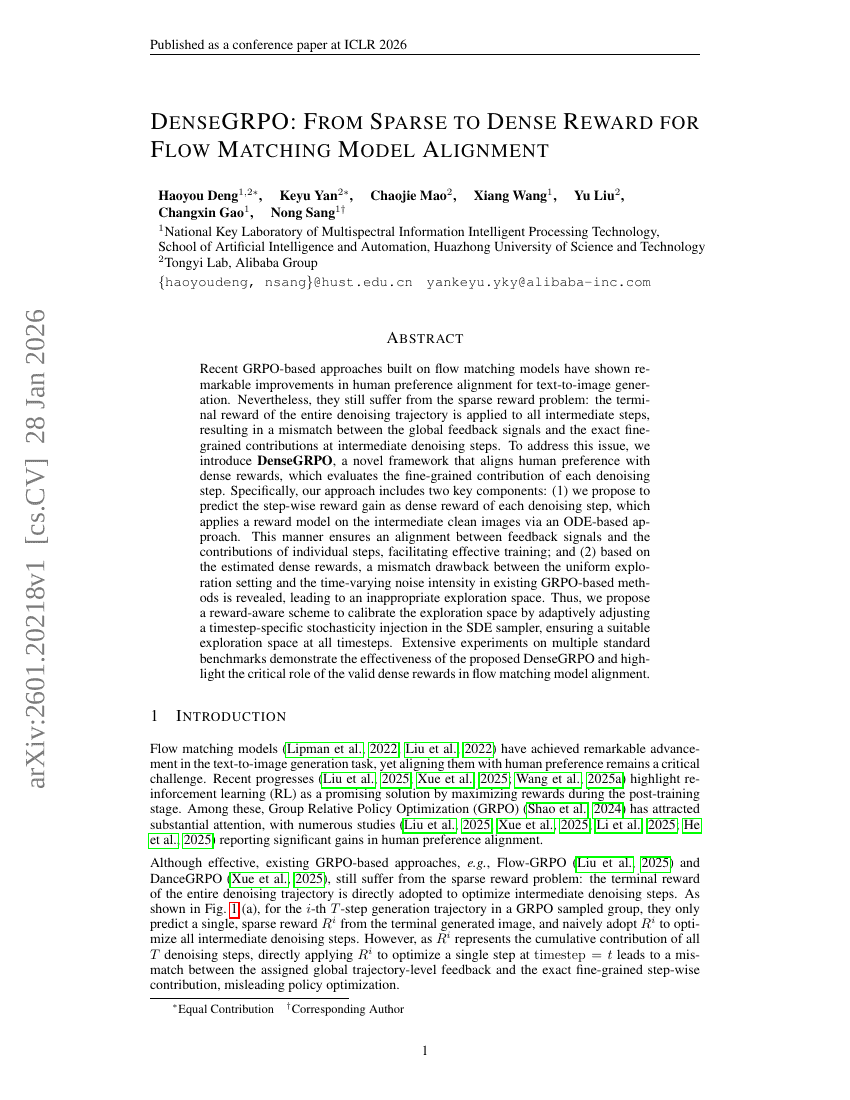

DenseGRPO: From Sparse to Dense Reward for Flow Matching Model Alignment

DreamActor-M2: Universal Character Image Animation via Spatiotemporal In-Context Learning

TTCS: Test-Time Curriculum Synthesis for Self-Evolving

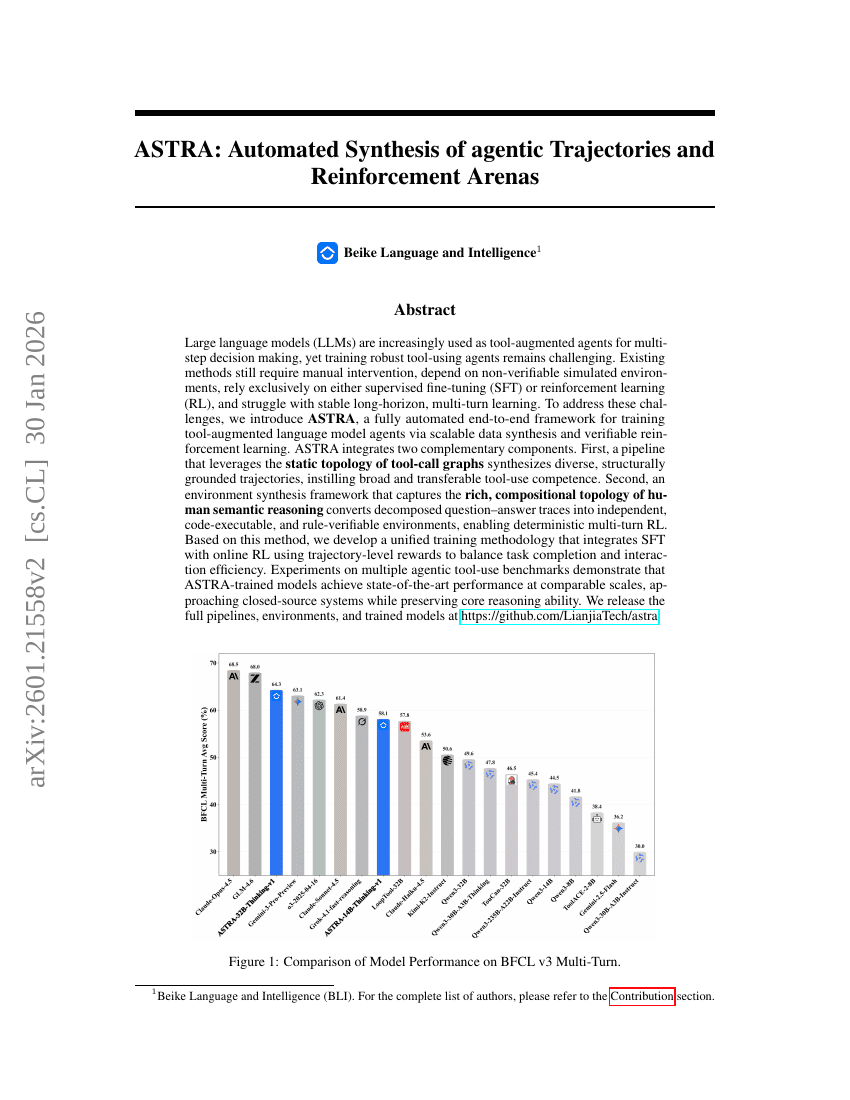

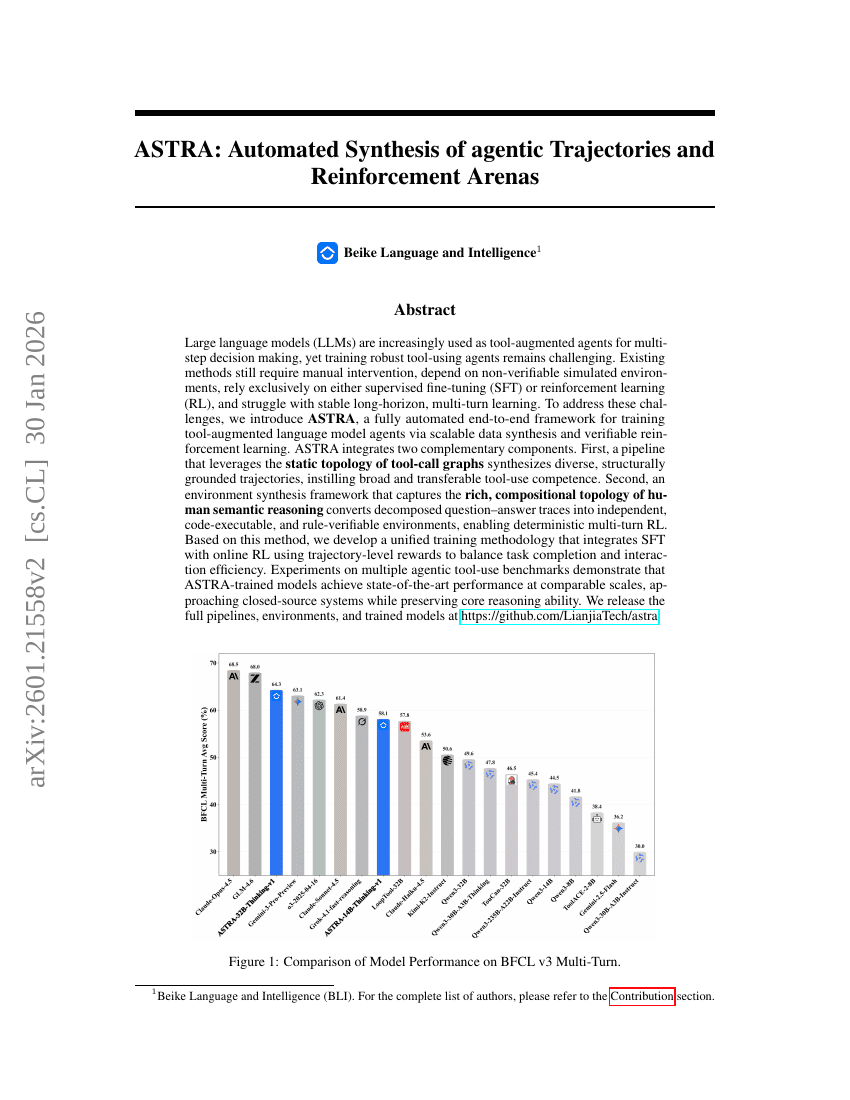

ASTRA: Automated Synthesis of agentic Trajectories and Reinforcement Arenas

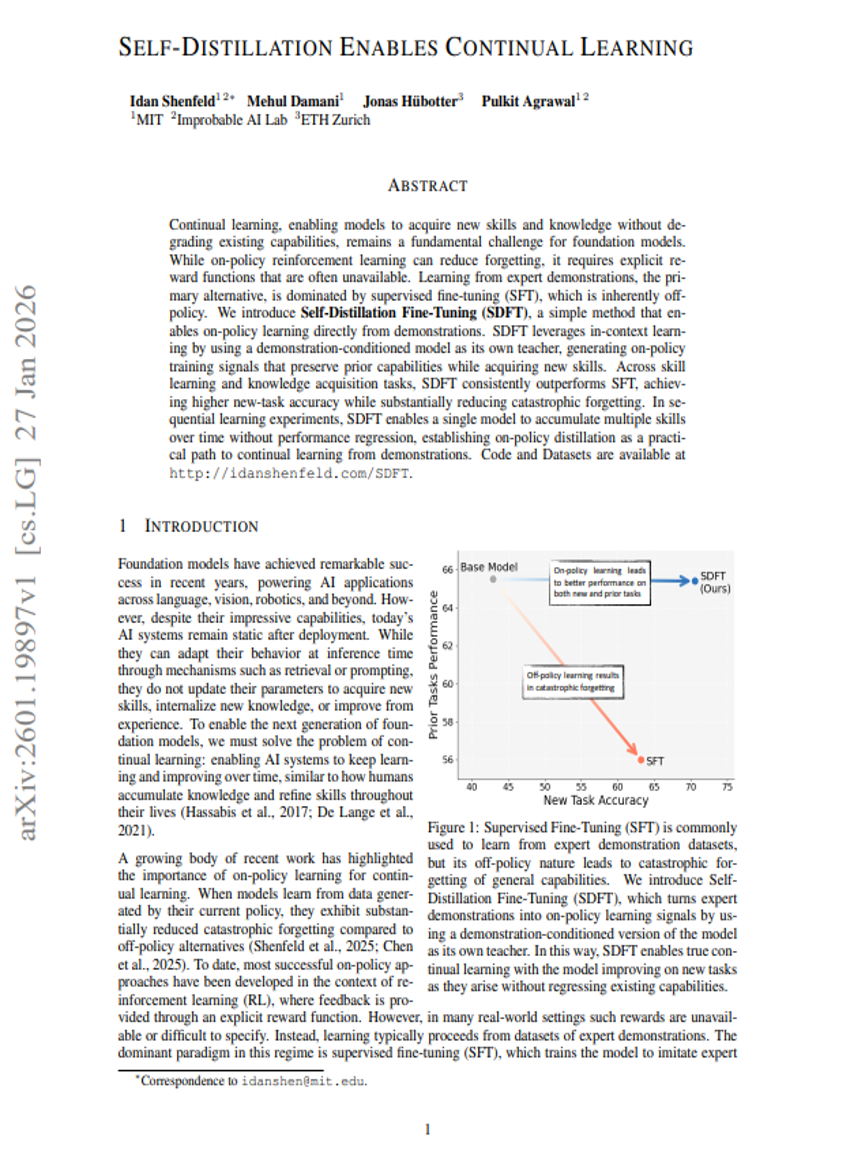

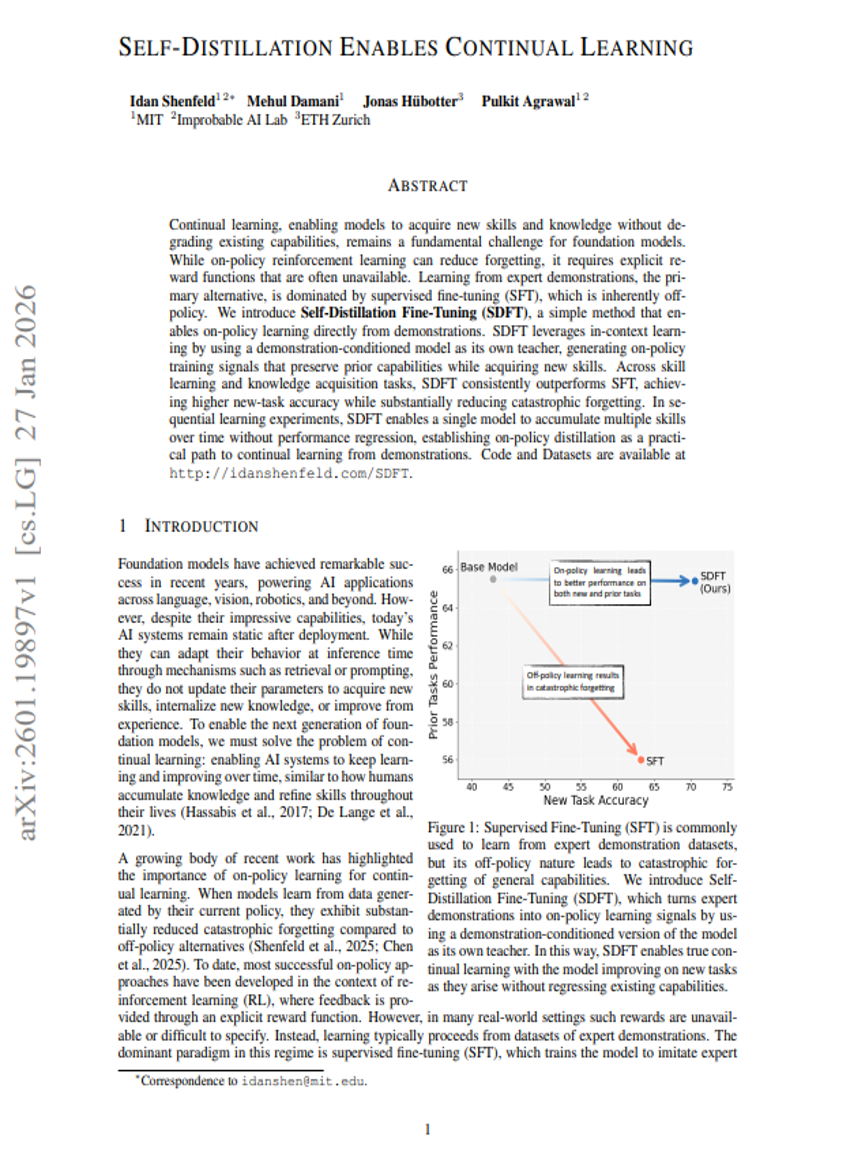

Self-Distillation Enables Continual Learning

Towards Execution-Grounded Automated AI Research

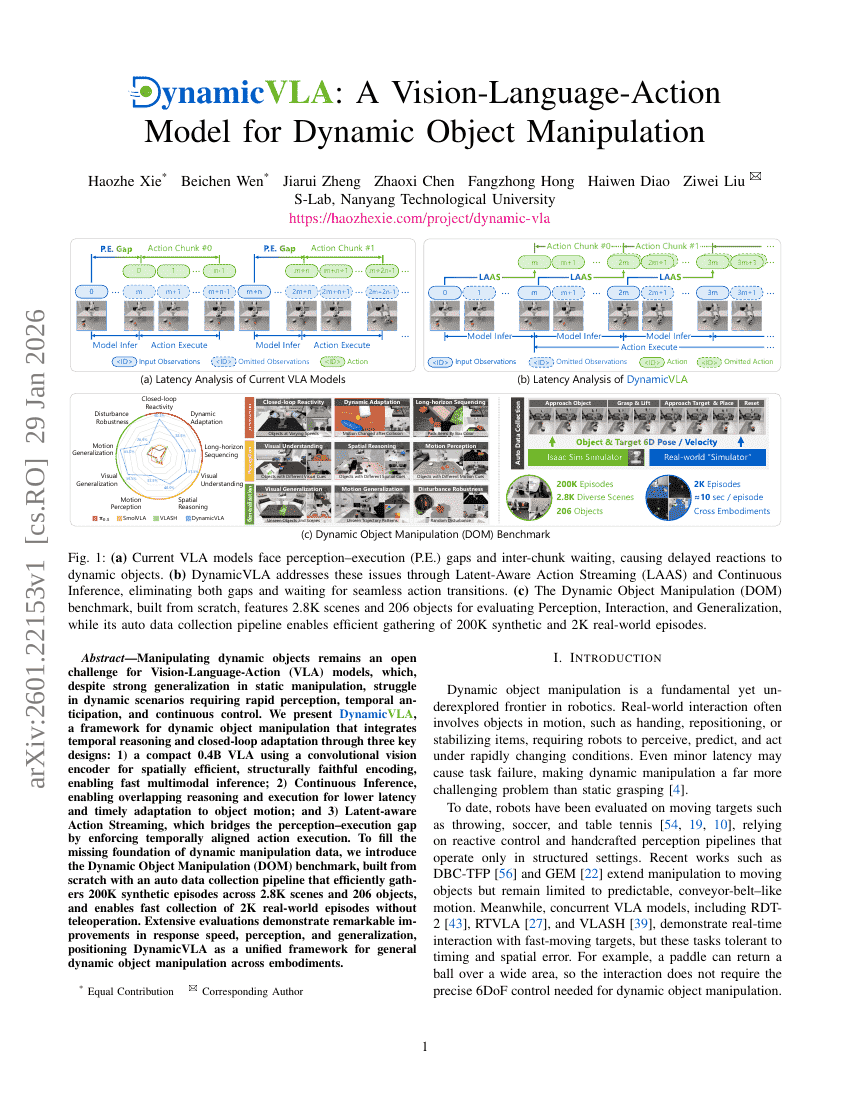

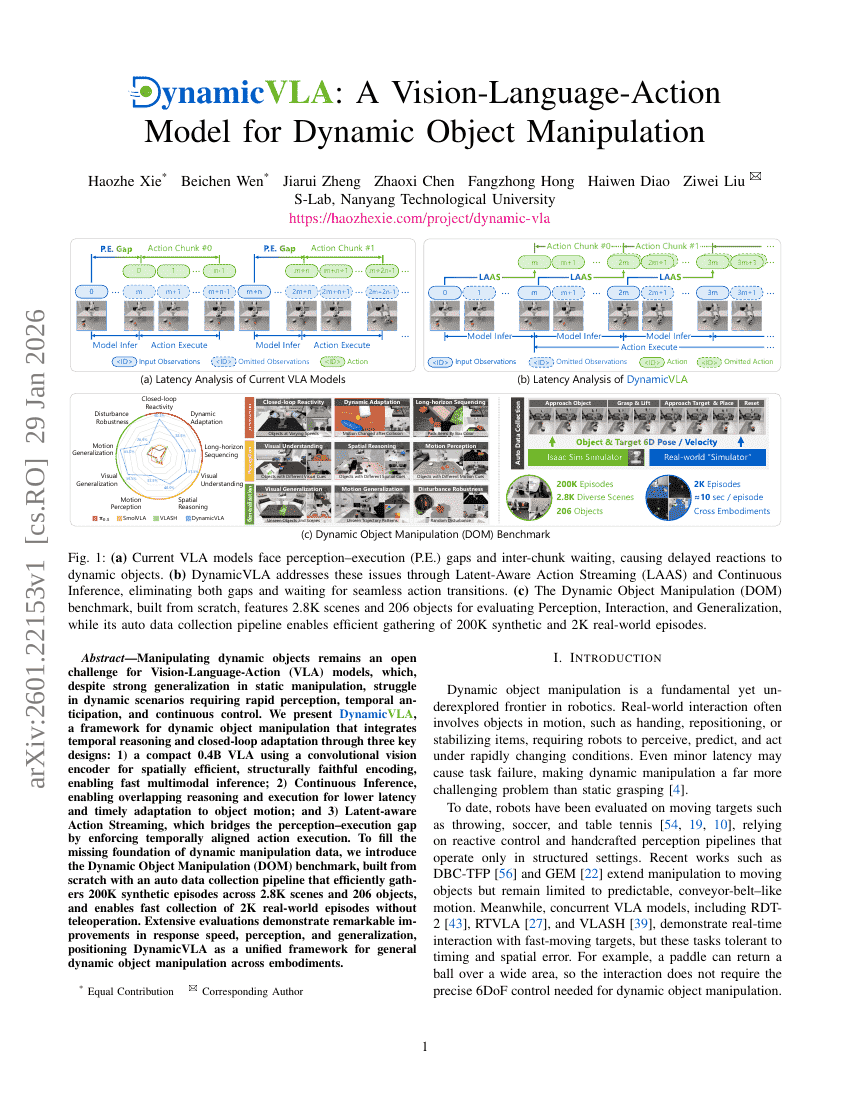

DynamicVLA: A Vision-Language-Action Model for Dynamic Object Manipulation

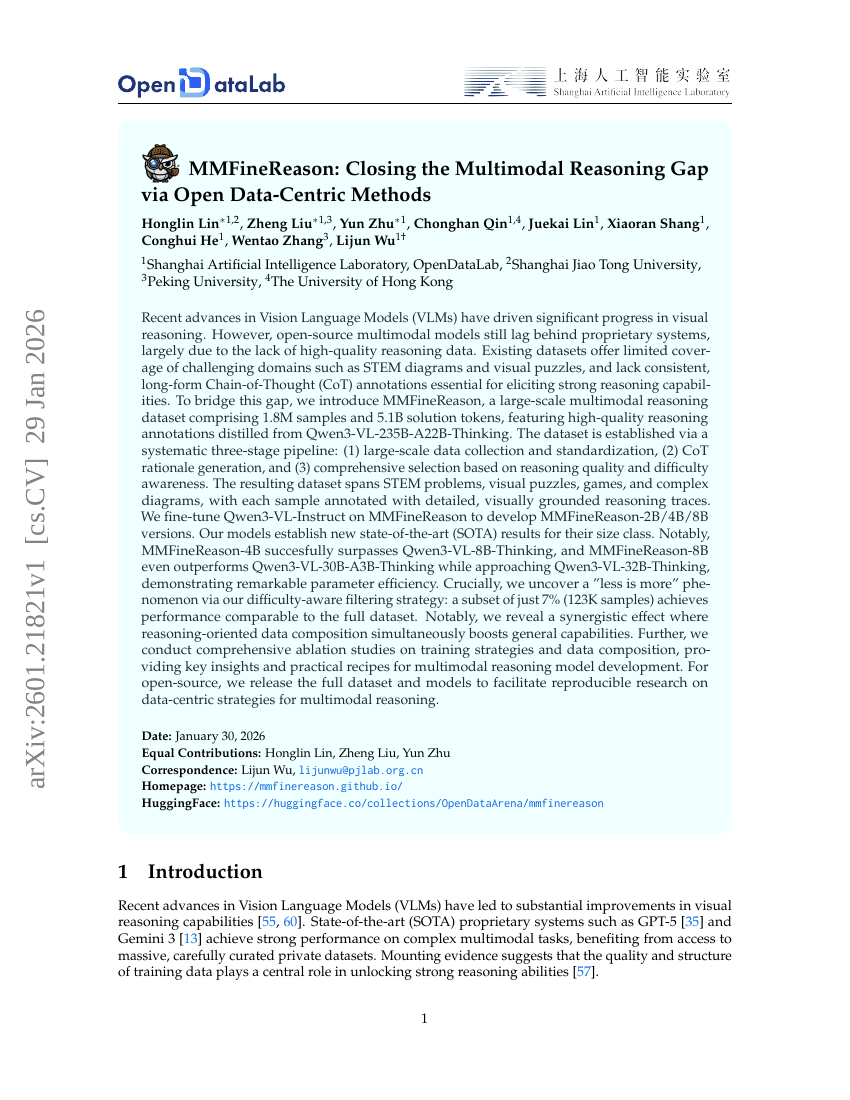

MMFineReason: Closing the Multimodal Reasoning Gap via Open Data-Centric Methods

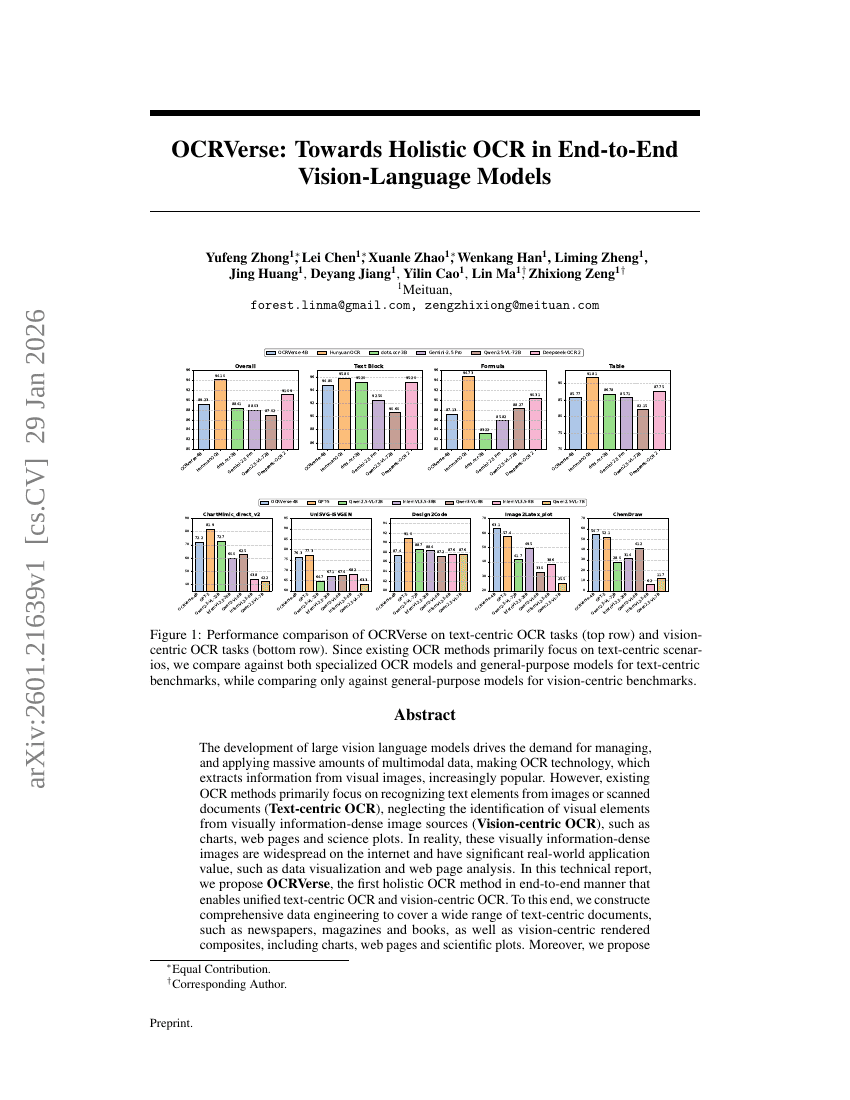

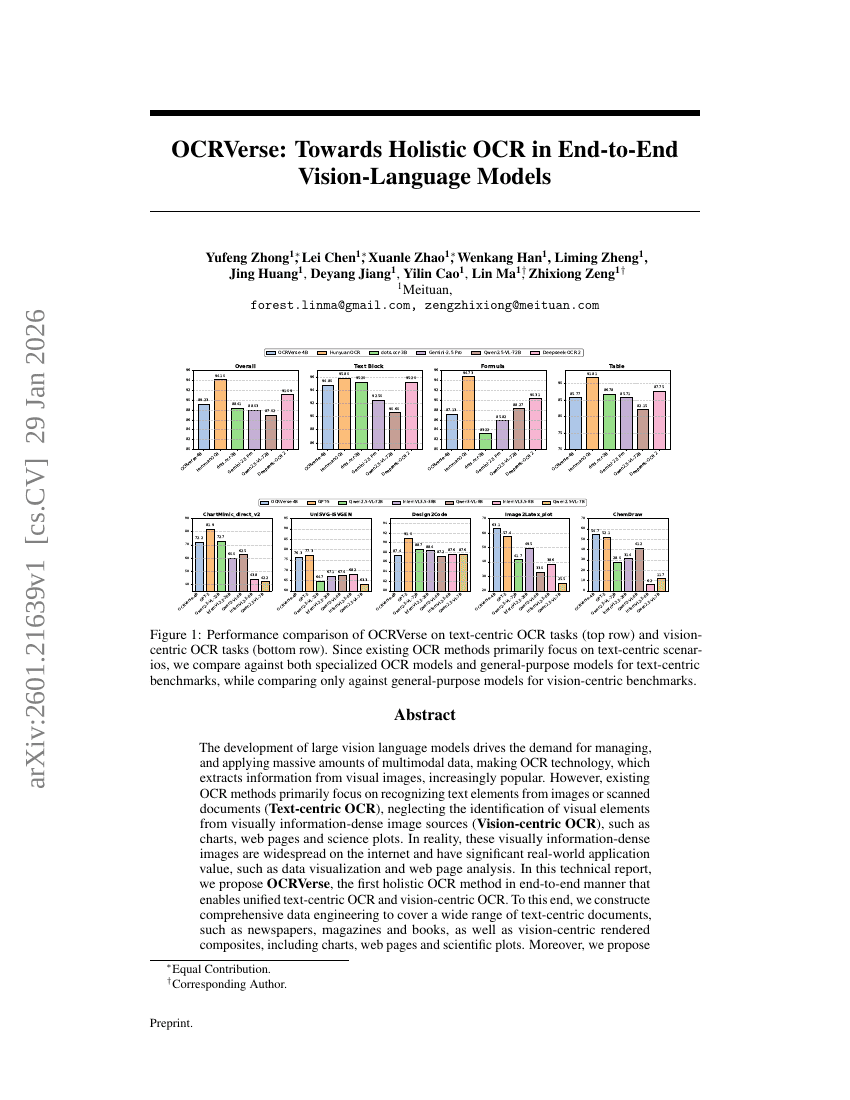

OCRVerse: Towards Holistic OCR in End-to-End Vision-Language Models

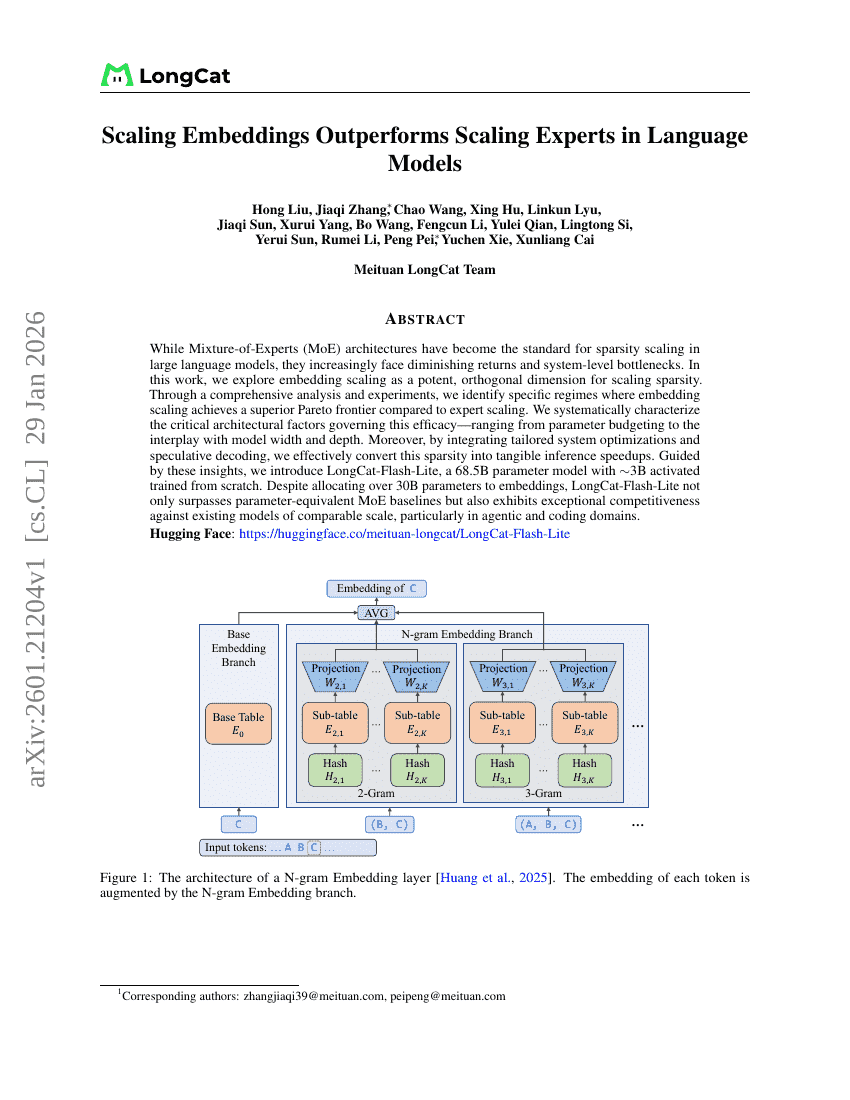

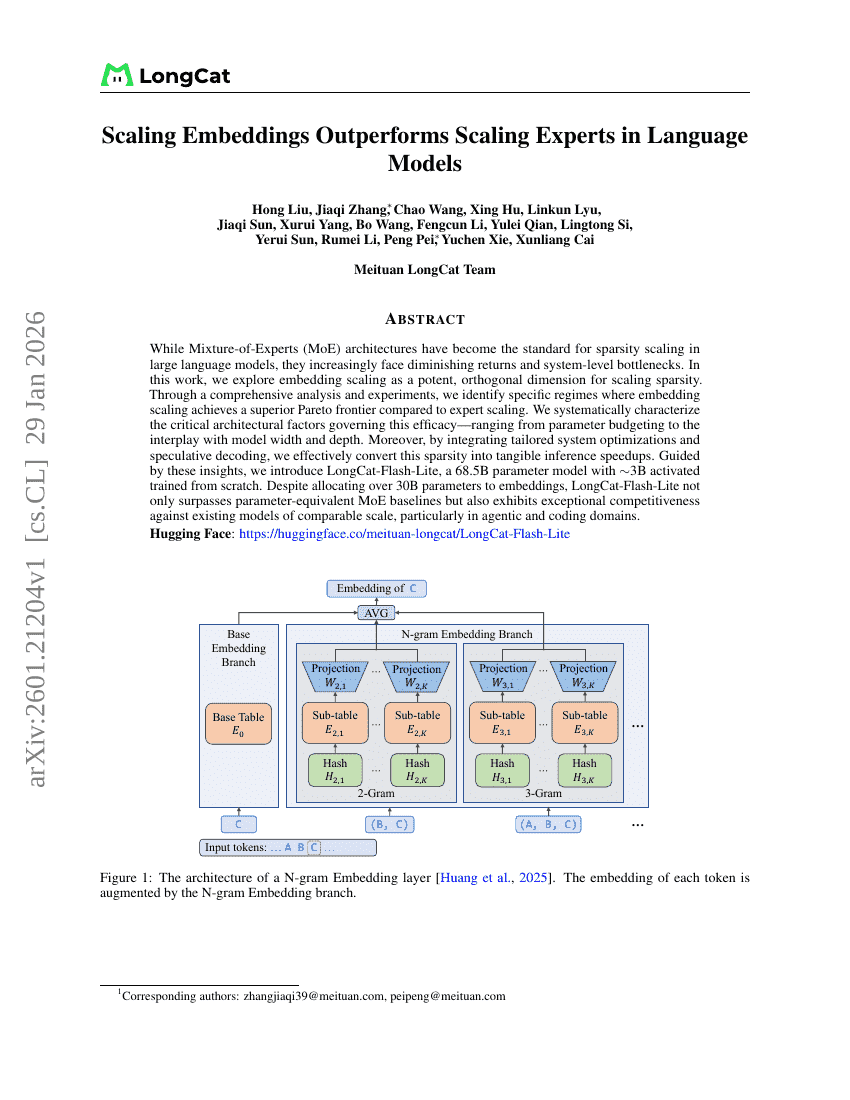

Scaling Embeddings Outperforms Scaling Experts in Language Models

Idea2Story: An Automated Pipeline for Transforming Research Concepts into Complete Scientific Narratives

Everything in Its Place: Benchmarking Spatial Intelligence of Text-to-Image Models

Qwen3-ASR Technical Report

Insight Agents: An LLM-Based Multi-Agent System for Data Insights

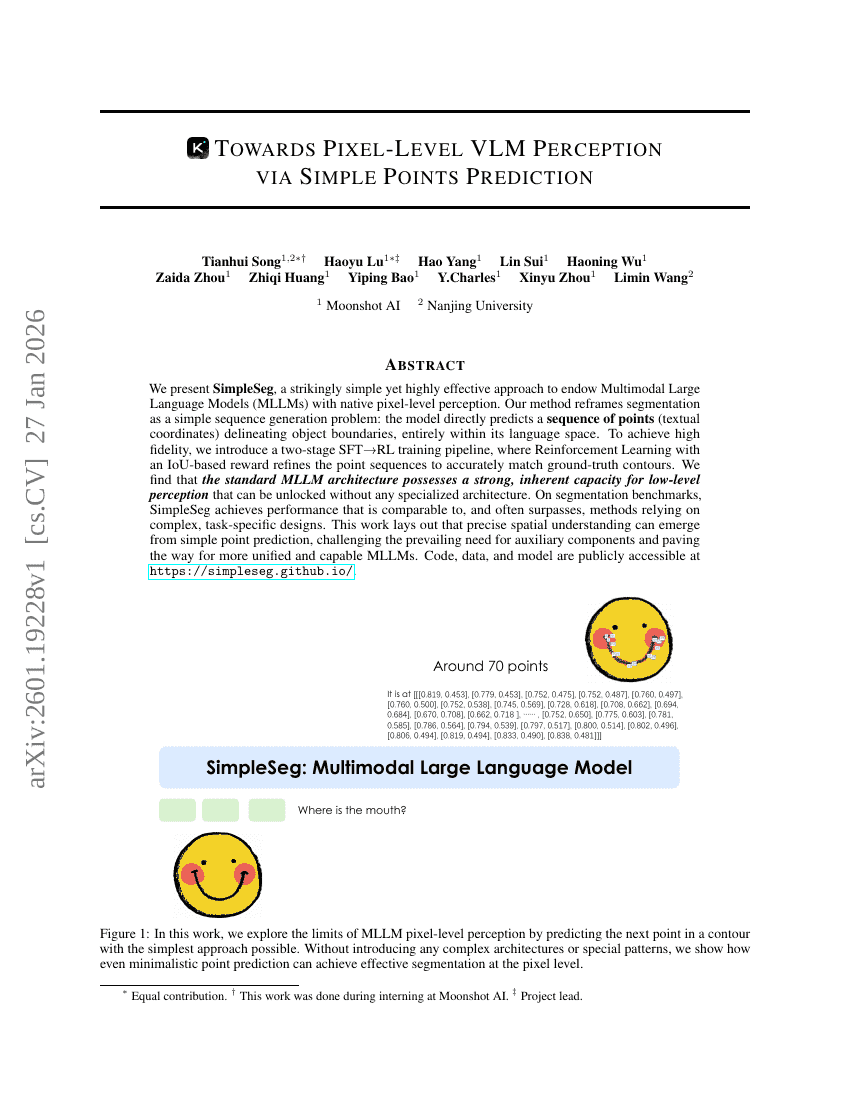

Towards Pixel-Level VLM Perception via Simple Points Prediction

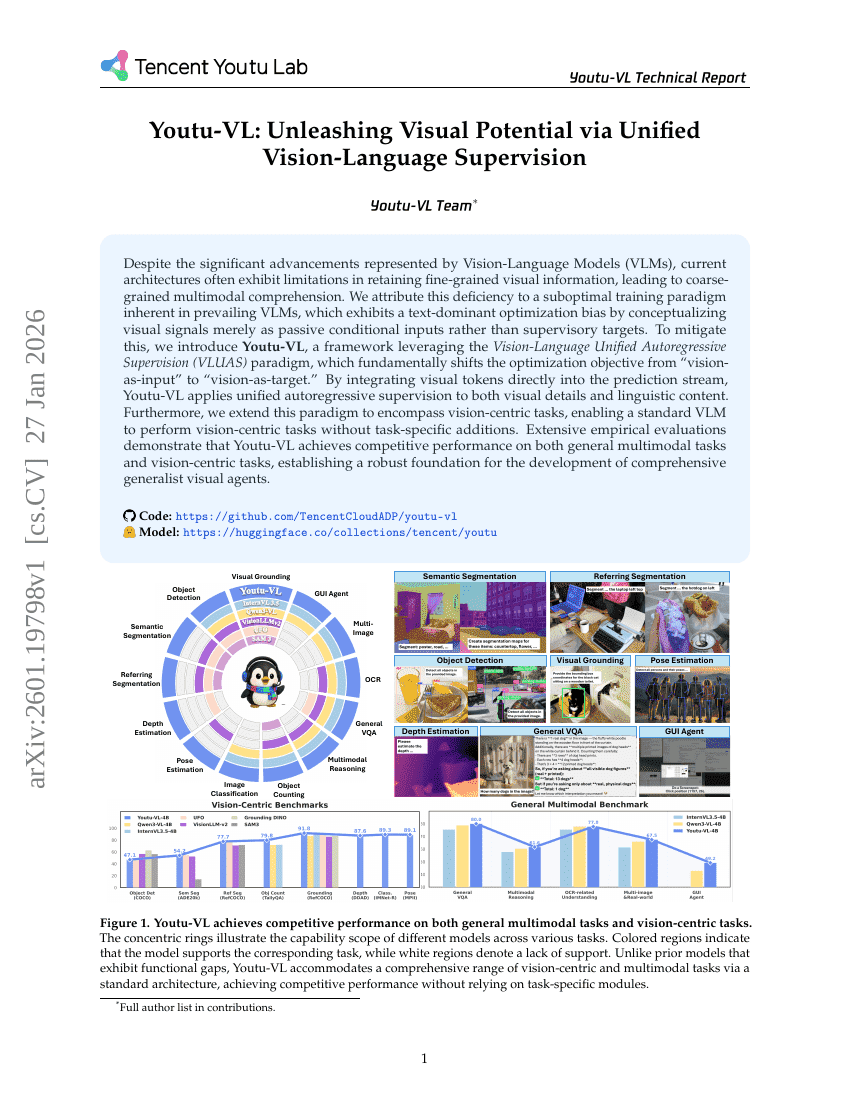

Youtu-VL: Unleashing Visual Potential via Unified Vision-Language Supervision

Innovator-VL: A Multimodal Large Language Model for Scientific Discovery

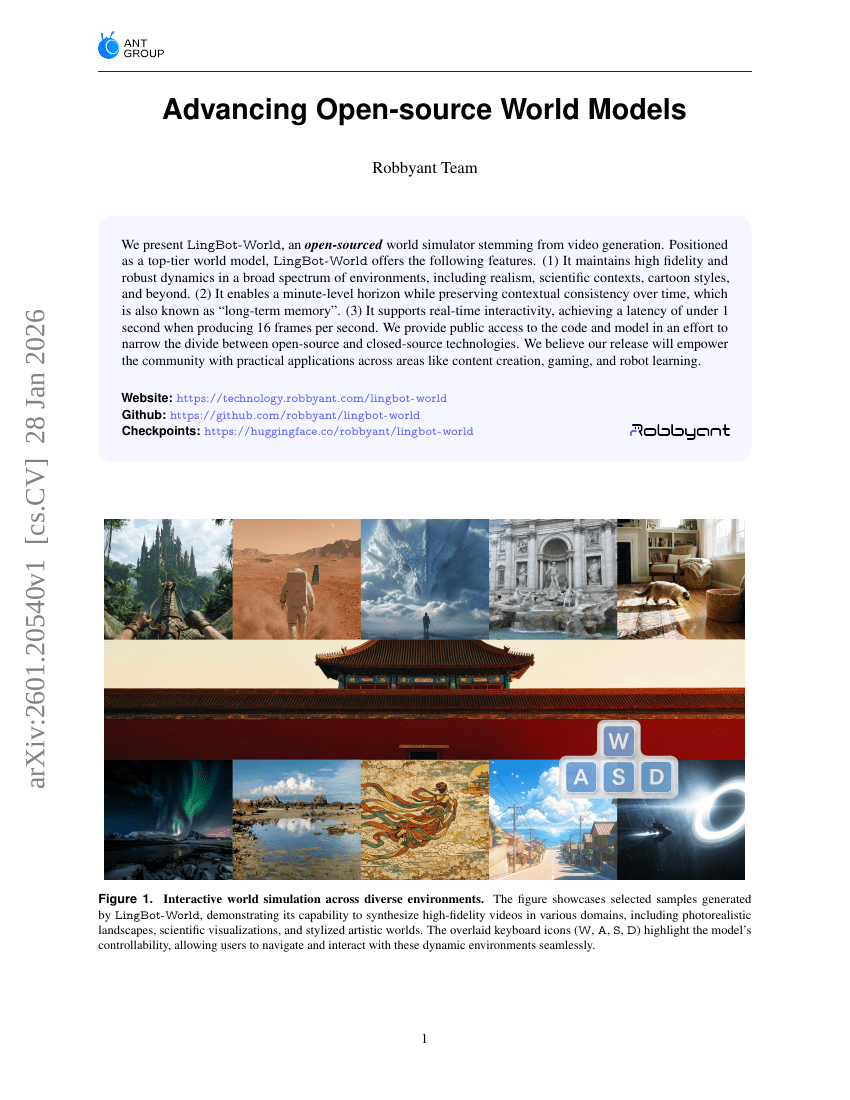

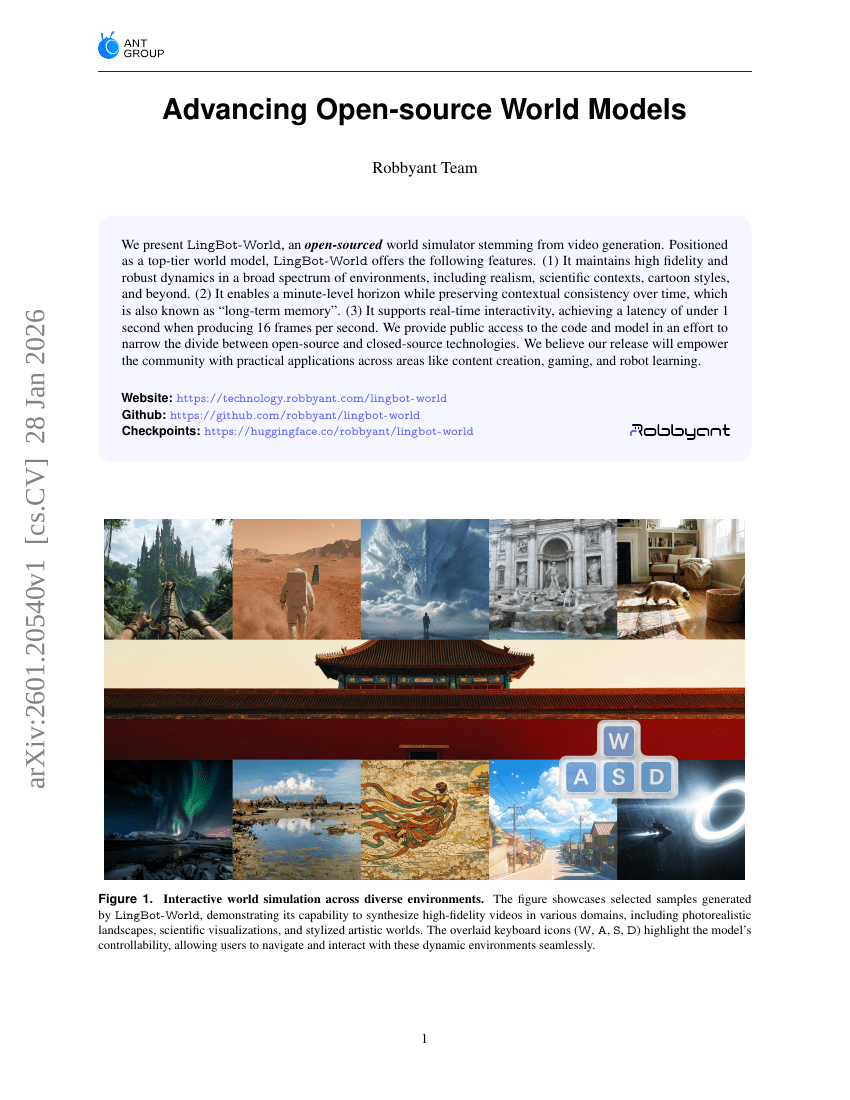

Advancing Open-source World Models

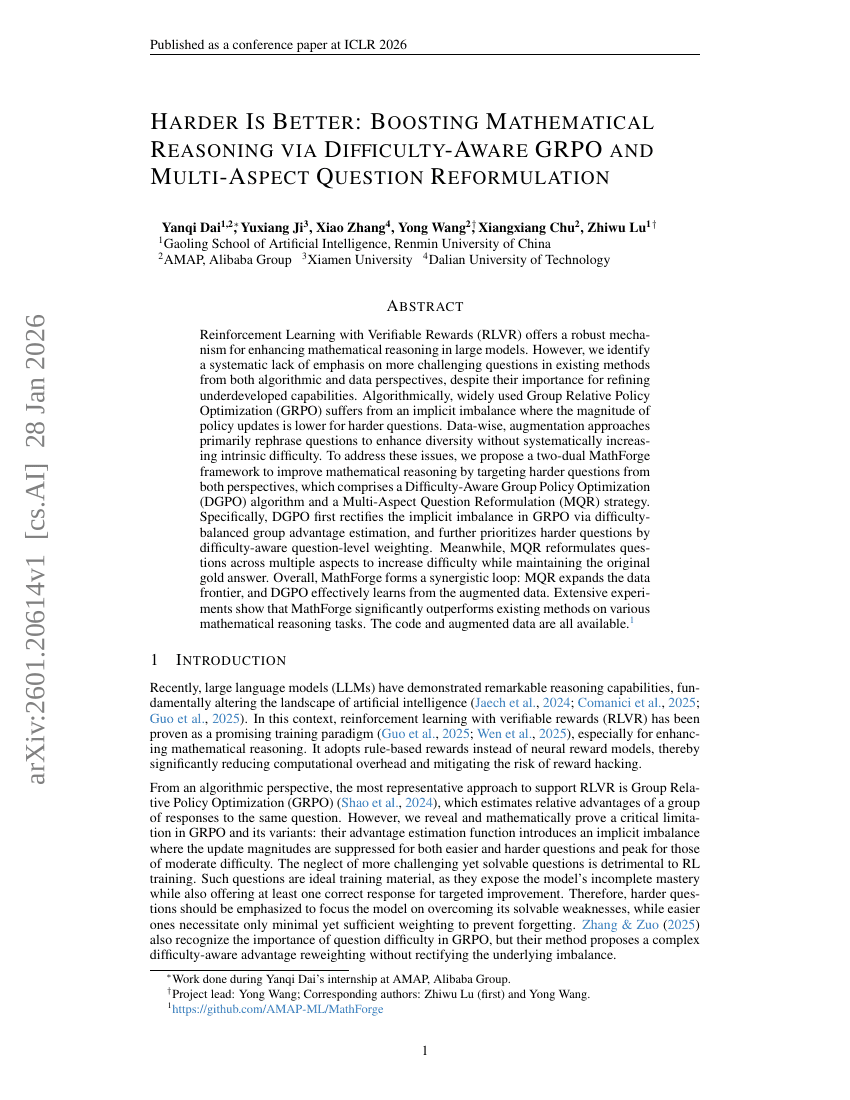

Harder Is Better: Boosting Mathematical Reasoning via Difficulty-Aware GRPO and Multi-Aspect Question Reformulation

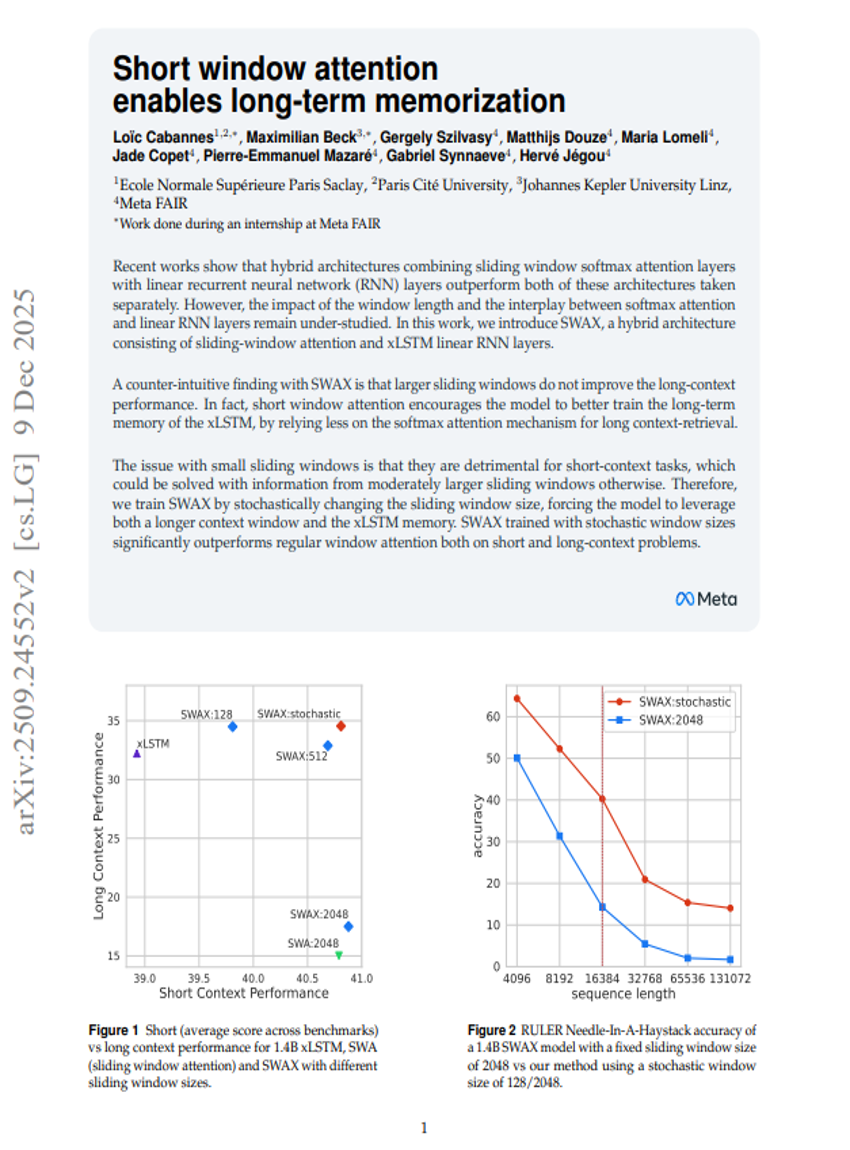

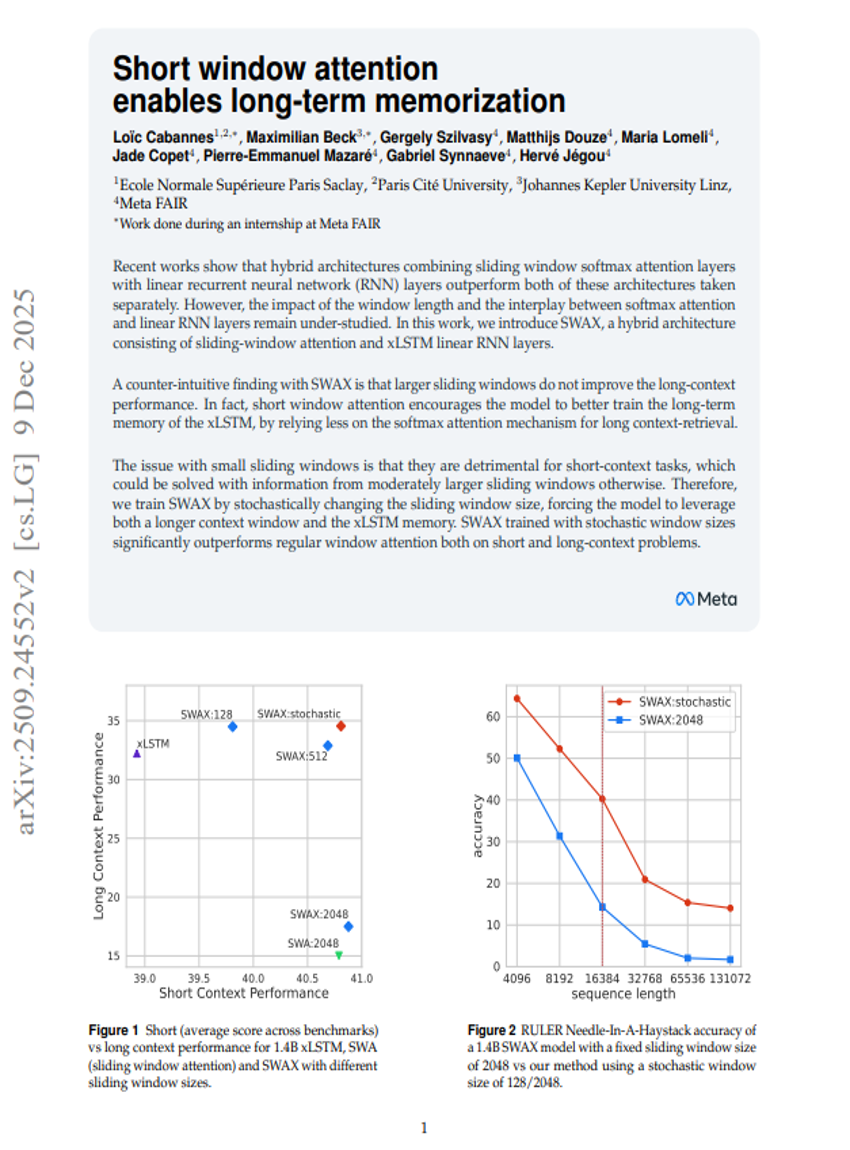

Short window attention enables long-term memorization

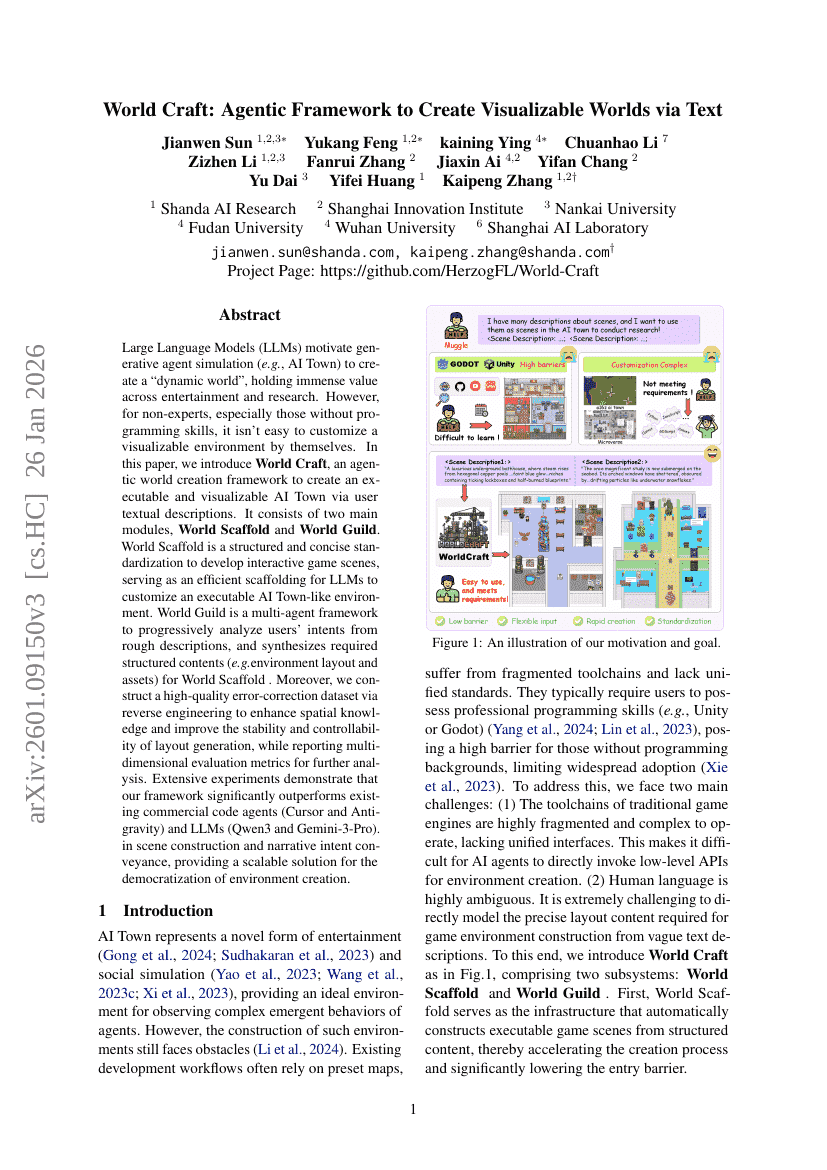

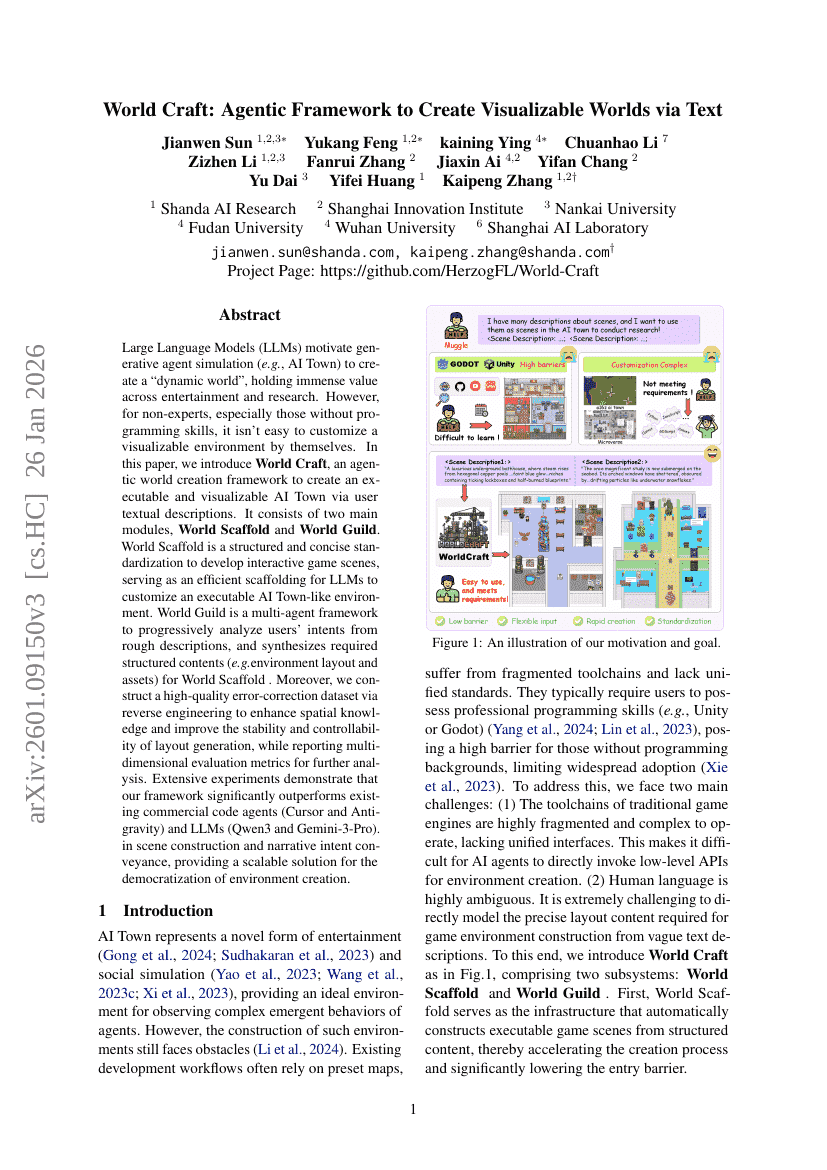

World Craft: Agentic Framework to Create Visualizable Worlds via Text

Visual Generation Unlocks Human-Like Reasoning through Multimodal World Models

Masked Depth Modeling for Spatial Perception

A Pragmatic VLA Foundation Model

AdaReasoner: Dynamic Tool Orchestration for Iterative Visual Reasoning
